Clear Sky Science · en

Large language models versus human examinee performance on Israeli anesthesiology board examinations

Why this matters for doctors and patients

As artificial intelligence tools race into hospitals and classrooms, a key question is how they stack up against real doctors when tested on core medical knowledge. This study looks at how two advanced language models compared with hundreds of human anesthesiology residents on Israel’s official written board exams, offering a glimpse of what AI may and may not be ready to do in medical training.

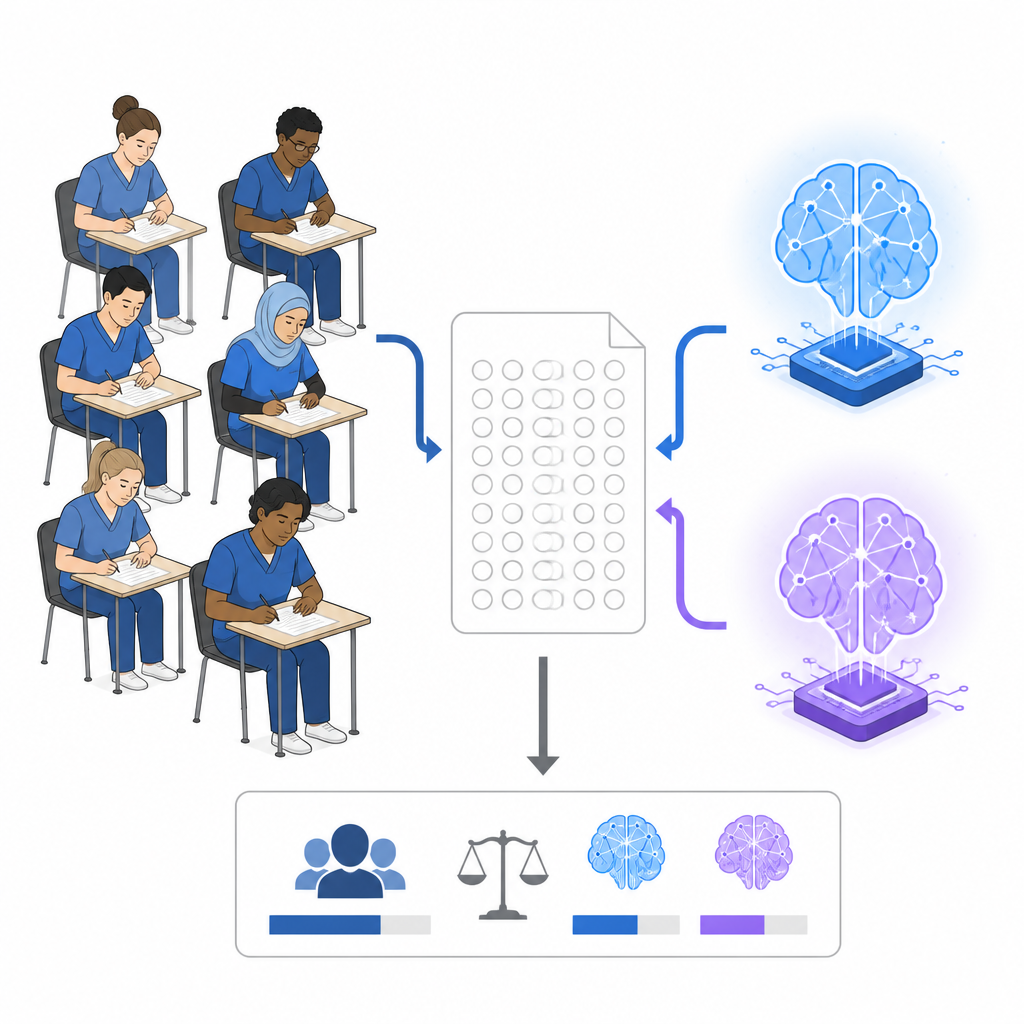

Testing humans and machines on the same exam

The researchers obtained three consecutive years of Israel’s Step 1 anesthesiology board exams, a multiple-choice test taken midway through residency. Each exam contained 150 questions in Hebrew, covering basic science, clinical foundations, subspecialty anesthesia, and emergency care. Alongside anonymized group results from 381 residents, the team also tested two commercial AI systems, Claude 3.7 Sonnet and ChatGPT-4, on all 450 questions. The models answered in Hebrew, saw the same images and waveforms as the residents, and were kept from remembering earlier questions. Each model took every exam twice so the team could measure both accuracy and internal consistency.

How well the AI models scored

On average across all exams, Claude 3.7 Sonnet answered about three out of four questions correctly, clearly above the residents’ overall score of a little over three out of five. ChatGPT-4 did slightly better than residents but not by a large margin. Claude exceeded the official passing mark on every attempt, whereas ChatGPT-4 passed only half the time. Yet when compared with the top quarter of human test takers, who averaged close to four correct answers out of five, both AI systems still lagged behind. In other words, current models outperformed the typical resident on these written tests but did not match the strongest human examinees.

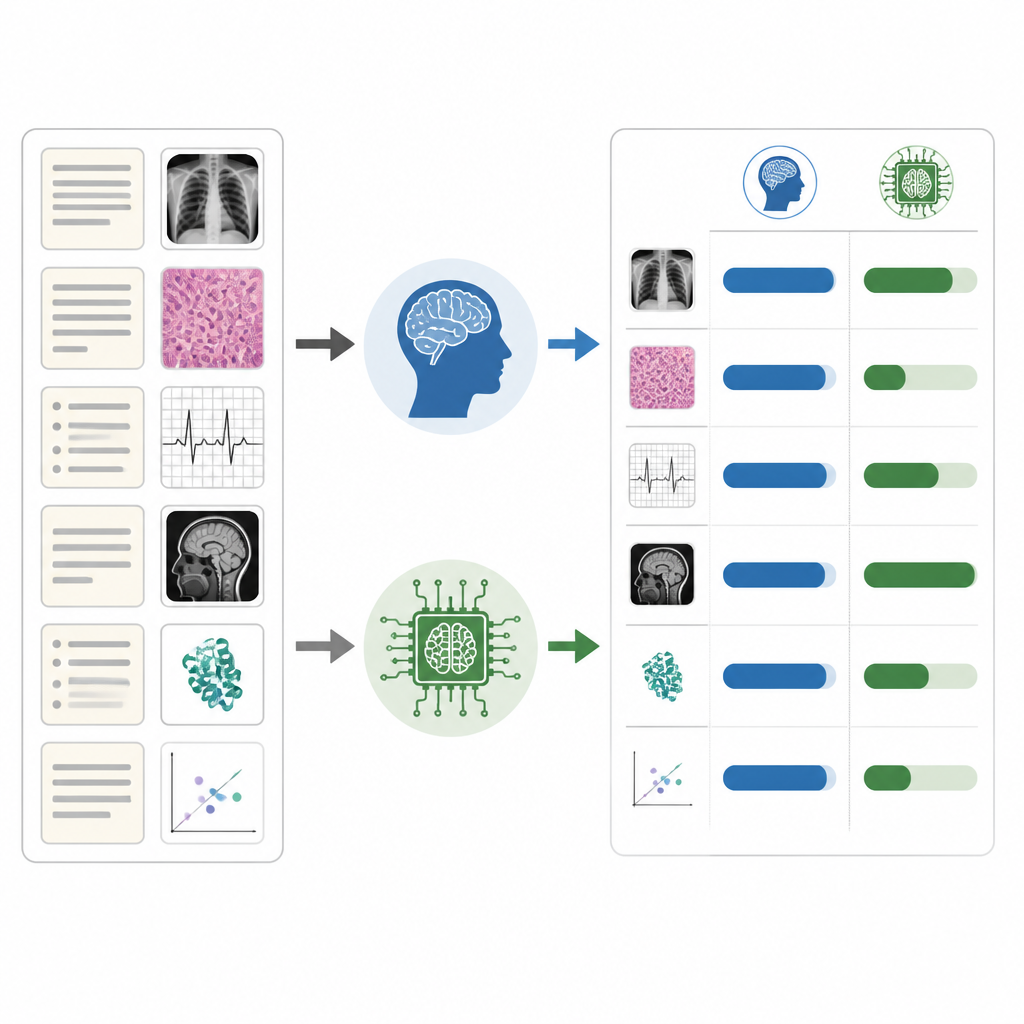

Strengths, weaknesses, and uneven performance

The study revealed that the AI systems were not equally good across all types of questions. Both models handled easier items very well and did relatively best in theoretical areas such as heart function, where rules and concepts are well established. Their performance dropped on harder questions and in practical areas like outpatient anesthesia and regional anesthesia, where context, judgment, and nuanced clinical experience matter. Questions that included images, scans, or monitoring traces also tripped them up more than text-only items, while human residents scored similarly on both formats. Claude and ChatGPT showed substantial internal consistency when asked the same exam twice, yet they only moderately agreed with the answer patterns of human examinees.

What this means for medical education

This uneven picture has important implications for how AI should be used in training doctors. Because the models’ accuracy swings from near-perfect in some topics to worryingly low in others, relying on them as a main study source could mislead learners. For example, a resident might receive excellent explanations in heart physiology but poor guidance in certain anesthesia techniques without realizing the difference. The authors argue that these tools should be used cautiously in education, with close human oversight, fact-checking, and clear awareness of their blind spots, especially in areas involving images and complex real-world decision making.

Takeaway for the future of AI and anesthesia

The study concludes that advanced language models now outperform the average anesthesiology resident on a challenging national written exam, but they still fall short of the best human performers and show large gaps across topics. Passing a multiple-choice test is only one slice of what it means to be a safe anesthesiologist, which also requires hands-on skills, crisis management, and communication with patients and teams. The authors suggest that AI’s real promise lies not in replacing clinicians but in supporting them, helping to strengthen learning and decision making when used thoughtfully alongside human expertise.

Citation: Ronen, A., Fein, S., Orbach-Zinger, S. et al. Large language models versus human examinee performance on Israeli anesthesiology board examinations. Sci Rep 16, 14978 (2026). https://doi.org/10.1038/s41598-026-45411-6

Keywords: anesthesiology education, board examinations, large language models, medical AI assessment, clinical competence