Clear Sky Science · en

Optimization of cross-institutional medical federated learning framework driven by confidential computing

Why Protecting Shared Medical Data Matters

Modern hospitals increasingly rely on artificial intelligence to read scans, spot disease earlier, and guide treatment. Yet life-saving algorithms need huge amounts of patient data, and sharing that data across institutions can clash with strict privacy rules and real concerns about hacking. This paper explores how hospitals can work together to train powerful AI models without pooling raw patient records, and how to guard against subtle hardware attacks that can leak secrets even when the data never leaves the hospital.

Hospitals Working Together Without Sharing Records

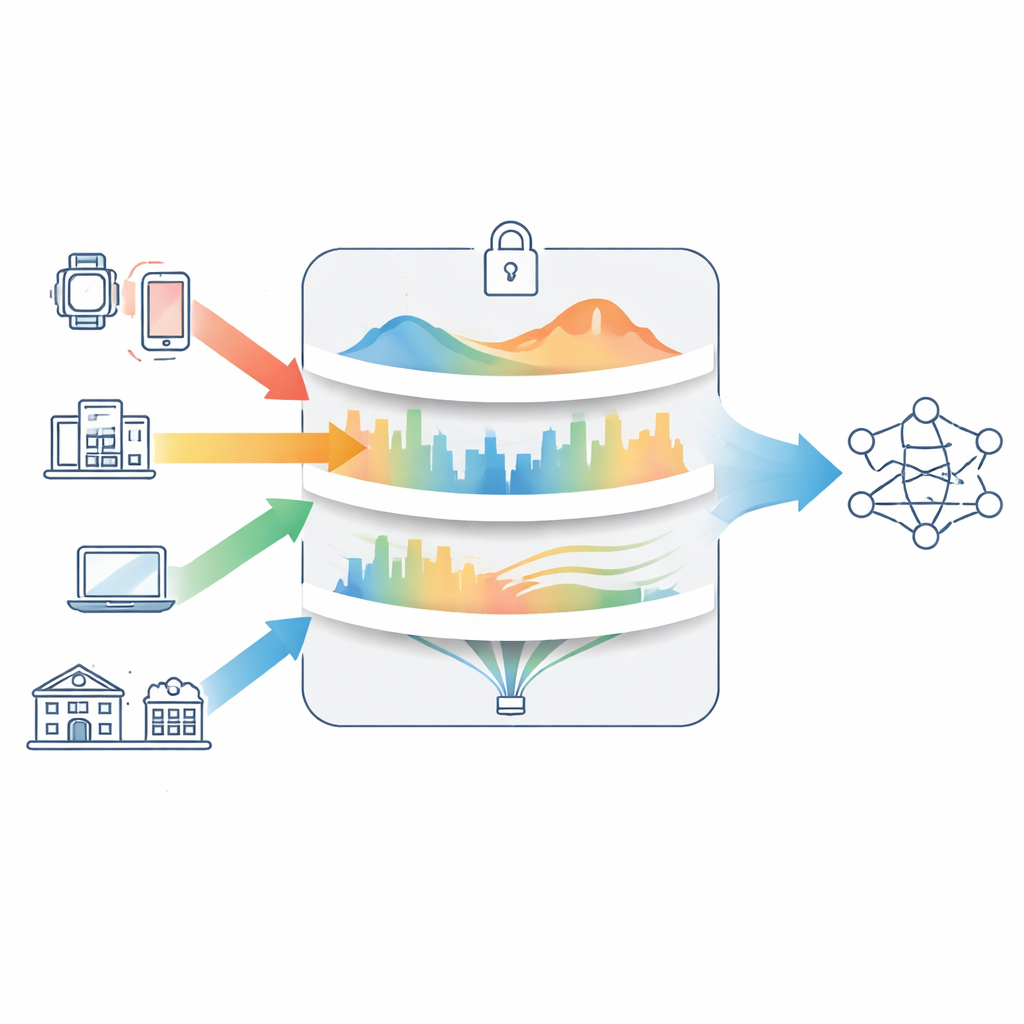

The study is built around a technique called federated learning, where each medical institution trains its own copy of an AI model on local patient data. Instead of sending images or records to a central server, hospitals send only model updates, which the server combines into a single shared model and then sends back. Over many rounds, this process can produce an AI system that reflects knowledge from many hospitals, while each institution keeps raw data behind its own firewall. This setup is attractive for healthcare and finance, where laws and ethics make large central data lakes risky or impossible.
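The round-trip described above can be sketched in a few lines of Python. This is textbook federated averaging on a toy one-parameter model, not the authors' code. The function names `local_update` and `fed_avg` and the toy client data are illustrative assumptions.

```python
"""Minimal federated averaging (FedAvg) sketch on a toy 1-D linear model.

Assumption: each "hospital" fits y = w * x by least squares. Real systems
train neural networks, but the communication pattern is the same.
"""


def local_update(w, data, lr=0.1, epochs=5):
    """Run a few epochs of gradient descent on one client's private data.

    Only the resulting weight leaves the client, never `data` itself.
    """
    for _ in range(epochs):
        # Gradient of the mean squared error (1/n) * sum (w*x - y)^2 w.r.t. w
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def fed_avg(global_w, clients, rounds=20):
    """Repeatedly broadcast the global weight, train locally, and average."""
    for _ in range(rounds):
        updates = [(local_update(global_w, data), len(data)) for data in clients]
        total = sum(n for _, n in updates)
        # Weight each client's model by its share of the training examples
        global_w = sum(w * n for w, n in updates) / total
    return global_w


if __name__ == "__main__":
    # Three hypothetical clients whose private data all follow y = 3x
    clients = [
        [(1.0, 3.0), (2.0, 6.0)],
        [(0.5, 1.5), (1.5, 4.5), (3.0, 9.0)],
        [(2.5, 7.5)],
    ]
    print(round(fed_avg(0.0, clients), 4))
```

Each client returns only its updated weight, and the server averages those weights in proportion to how many examples each client holds. Real deployments exchange millions of neural-network parameters rather than one number, but the pattern of "train locally, send updates, average, send back" is the same.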

Hidden Leaks Inside “Secure” Hardware

To make this collaboration safer, many systems use confidential computing: special hardware areas called trusted execution environments that isolate sensitive computations from the rest of the computer. In theory, these enclaves shield model training from curious insiders or compromised operating systems. In practice, they are not perfect. Attackers can sometimes infer secrets by watching timing, cache use, or other side effects of computation. Existing federated learning methods rarely model these leaks explicitly. They also tend to treat privacy, model accuracy, and network cost as separate design choices, even though in real deployments hospitals must juggle all three at once.
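The timing leak mentioned above can be illustrated with a toy example that has nothing to do with any specific enclave: a comparison that stops at the first mismatching byte does more work the more of a secret a guess gets right. The sketch below counts loop steps instead of measuring wall-clock time, so its behavior is deterministic. `early_exit_equal` is a hypothetical helper written for illustration, while `hmac.compare_digest` is Python's standard constant-time comparison.

```python
import hmac


def early_exit_equal(secret, guess):
    """Naive byte comparison that returns (match, steps).

    `steps` stands in for running time: it grows with the length of the
    matching prefix, which is exactly what a timing attacker measures.
    """
    if len(secret) != len(guess):
        return False, 0
    steps = 0
    for a, b in zip(secret, guess):
        steps += 1
        if a != b:
            return False, steps  # stops early, so work depends on the secret
    return True, steps


if __name__ == "__main__":
    secret = b"hunter2!"
    for guess in (b"xxxxxxxx", b"huxxxxxx", b"huntexxx"):
        print(guess, early_exit_equal(secret, guess))
    # Constant-time check: running time does not depend on where bytes differ
    print(hmac.compare_digest(secret, b"huntexxx"))
```

Here the step count rises from 1 to 3 to 6 as the guess shares more leading bytes with the secret, so an observer who can only time the comparison still learns the secret piece by piece. Cache and timing attacks on secure hardware are far more subtle, but they rest on the same principle: when the amount or pattern of work depends on secret data, someone outside the protected area can infer something about it.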

Balancing Accuracy, Privacy, and Network Load

The authors propose a new training method called Confidential Computing-Aware Projected Gradient Descent (CC-PGD). Instead of focusing only on prediction error, CC-PGD optimizes three things at the same time: how well the model fits the data, how much information could be exposed through side channels inside secure hardware, and how much communication is needed between hospitals and the central server. Privacy risk is measured using the “shape” of the gradients the model produces: if only a few parameters dominate, an attacker may learn more about specific patients; if information is spread more evenly, less can be inferred. The method also includes an indicator for when known hardware weaknesses are more likely to be exploitable, and a cost term that captures how big the model updates are and how long they take to transmit over networks.
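The three-part objective can be made concrete with a small sketch. This summary does not give the paper's exact formulas, so every formula below is a stand-in chosen for illustration. Gradient “concentration” is measured as one minus the normalized entropy of the squared gradient entries, the hardware indicator is a simple 0/1 flag, and communication cost is the time needed to send the nonzero entries of an update. The names `gradient_concentration`, `communication_cost`, `combined_objective`, `lam_priv`, and `lam_comm` are all hypothetical.

```python
import math


def gradient_concentration(grad):
    """Hypothetical leakage proxy in [0, 1].

    0 when gradient energy is spread evenly over all parameters,
    1 when a single parameter carries all of it.
    """
    energy = [g * g for g in grad]
    total = sum(energy)
    if total == 0 or len(grad) < 2:
        return 0.0
    probs = [e / total for e in energy if e > 0]
    entropy = -sum(p * math.log(p) for p in probs)
    # Normalize by the maximum possible entropy, log(number of parameters)
    return 1.0 - entropy / math.log(len(grad))


def communication_cost(grad, bytes_per_value=4, bandwidth_bytes_per_s=1e6):
    """Hypothetical cost: seconds to send the nonzero entries of an update.

    Simplification: ignores the overhead of sending indices or headers.
    """
    nonzero = sum(1 for g in grad if g != 0)
    return nonzero * bytes_per_value / bandwidth_bytes_per_s


def combined_objective(task_loss, grad, vulnerable, lam_priv=1.0, lam_comm=1.0):
    """Task loss plus weighted privacy and communication penalties.

    `vulnerable` is a 0/1 flag standing in for an indicator of exploitable
    hardware weaknesses; the leakage term only counts when it is 1.
    """
    leakage = vulnerable * gradient_concentration(grad)
    return task_loss + lam_priv * leakage + lam_comm * communication_cost(grad)
```

With these stand-ins, an evenly spread gradient such as `[1, 1, 1, 1]` scores essentially 0 on the concentration proxy, while `[5, 0, 0, 0]`, where one parameter carries everything, scores 1. Raising `lam_priv` or `lam_comm` makes the optimizer care more about the corresponding penalty relative to prediction error.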

A Guided Way to Tune Safety Settings

Under the hood, CC-PGD works like a carefully constrained version of ordinary gradient descent, the standard workhorse of modern AI training. The authors prove that, even with the extra privacy and communication penalties, their optimizer still converges reliably under broad conditions. Importantly, CC-PGD exposes two adjustable “knobs” that system designers can turn: one that strengthens privacy protection and one that limits communication overhead. Turning these knobs up or down lets operators decide, for example, whether to favor maximum accuracy for a research study or stronger privacy and lower bandwidth use for a rural clinic with slow internet.
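The “projected” part of the name refers to a standard idea: after each ordinary gradient step, the parameters are pulled back to the nearest point inside an allowed region. The sketch below shows generic projected gradient descent onto a Euclidean ball of radius `radius`; it is not the CC-PGD update itself. In CC-PGD the gradient would also include the privacy and communication penalty terms, and the paper's actual constraint set is not described in this summary, so the ball, the step size, and the function names `project_l2_ball` and `projected_gradient_descent` are assumptions.

```python
import math


def project_l2_ball(v, radius):
    """Euclidean projection onto the set {v : ||v||_2 <= radius}."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm <= radius:
        return list(v)  # already feasible: leave unchanged
    scale = radius / norm
    return [x * scale for x in v]  # shrink toward the origin onto the boundary


def projected_gradient_descent(grad_fn, x0, radius, lr=0.1, steps=100):
    """Generic PGD: ordinary gradient step, then project back onto the ball."""
    x = list(x0)
    for _ in range(steps):
        g = grad_fn(x)
        stepped = [xi - lr * gi for xi, gi in zip(x, g)]
        x = project_l2_ball(stepped, radius)
    return x


if __name__ == "__main__":
    target = [3.0, 4.0]

    def grad(w):
        # Gradient of the toy objective f(w) = (w0 - 3)^2 + (w1 - 4)^2
        return [2 * (wi - ti) for wi, ti in zip(w, target)]

    print(projected_gradient_descent(grad, [0.0, 0.0], radius=1.0))
```

Here the unconstrained minimum (3, 4) lies outside the ball of radius 1, so the iterates settle at about (0.6, 0.8), the closest allowed point. Tightening such a constraint, or raising the weight on a penalty term, illustrates in miniature how a tuning knob trades raw accuracy for other goals.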

What the Experiments Show

To test the idea, the authors simulate multiple medical institutions training image-recognition models on well-known public datasets (MNIST and CIFAR-10). They compare CC-PGD with popular federated learning methods, including standard federated averaging, a technique that adds random noise for privacy, and a variant designed for uneven data across clients. Across both simple and more complex image tasks, and under both evenly and unevenly split data, CC-PGD keeps accuracy close to what would be achieved if all data were centralized. At the same time, it cuts privacy leakage measures by roughly a quarter to a third and reduces communication cost by about a fifth compared with the baselines.

What This Means for Real-World Healthcare AI

In plain terms, this work shows that hospitals do not have to choose strictly between model quality, privacy, and practicality when training AI together. By explicitly modeling how information might leak from supposedly secure hardware, and by building privacy and bandwidth costs into the training process itself, CC-PGD offers a principled way to design safer collaborative systems. While the experiments use benchmark images rather than real scans, the framework is general and could be applied to larger models, including generative AI tools and language models that analyze medical notes or cybersecurity logs. With further validation on real hospital data, approaches like this could underpin trustworthy AI networks that learn from many institutions while keeping each patient’s data locked safely at home.

Citation: Xu, F., Wei, X., Zhao, Z. et al. Optimization of cross-institutional medical federated learning framework driven by confidential computing. Sci Rep 16, 14323 (2026). https://doi.org/10.1038/s41598-026-44843-4

Keywords: federated learning, confidential computing, medical AI, privacy-preserving training, trusted execution environments