Clear Sky Science

Multi-objective inventory optimization using reinforcement learning: a comparative study on profitability and carbon emissions

Why this study matters to everyday business and the planet

Modern companies juggle a tough question: how can they keep shelves stocked and customers happy, earn healthy profits, and at the same time cut their carbon footprint? This paper explores whether a type of artificial intelligence called reinforcement learning can help managers automatically find inventory policies that both make money and reduce greenhouse gas emissions, rather than treating climate impact as an afterthought.

Balancing money made and emissions released

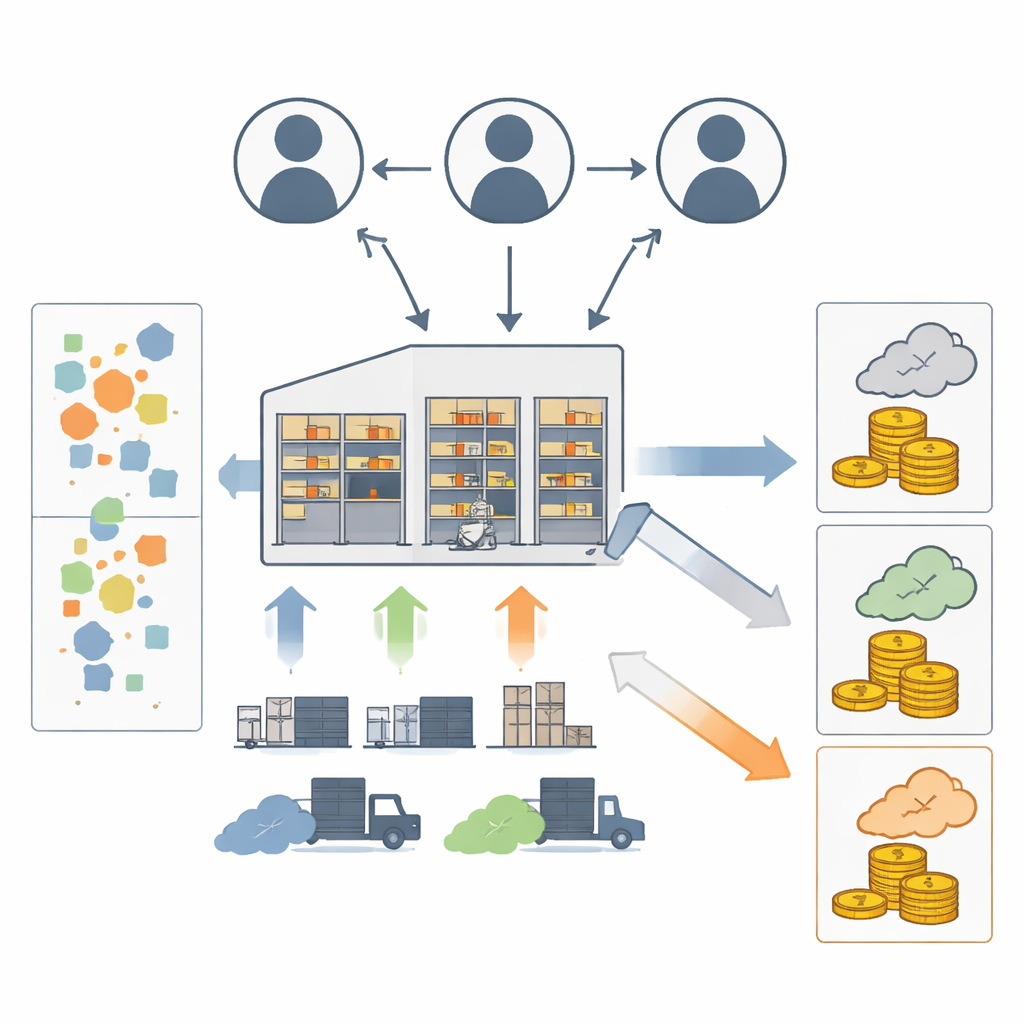

Inventory management sits at the heart of supply chains, influencing costs, delivery speed, and waste. Traditionally, mathematical models have helped firms decide when and how much to order. More recently, sustainability concerns have pushed researchers to include carbon emissions, often by adding penalties or taxes into cost formulas. That approach blends money and emissions into a single yardstick, which hides how much profit is being traded off for environmental gains. This study instead treats profit and carbon as two separate goals, so their tension can be examined clearly. The focus is a single warehouse that orders from a supplier and serves customer demand, while generating emissions from running the warehouse and from truck trips.

Teaching an AI to manage stock under uncertainty

The authors build a simulated warehouse where customer demand changes randomly over time. At each step, a software agent chooses an order quantity in fixed-size batches. The environment keeps track of inventory on hand, backlogged orders, pending deliveries, and lead time. From these, it computes revenue, costs (ordering, holding, and backorder penalties), and carbon emissions from transportation and storage. The agent receives a reward that combines profit and emissions using adjustable weights: profit adds to the reward, while emissions subtract from it. By repeatedly interacting with this virtual warehouse, the agent learns which ordering patterns tend to produce better long-run rewards.
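The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' simulator: the class name `WarehouseEnv`, every price, cost, and emission factor, the uniform demand range, and the choice to charge truck emissions once per order are all assumptions made for the example.

```python
import random
from collections import deque


class WarehouseEnv:
    """Toy single-warehouse simulator. All numbers are illustrative assumptions."""

    def __init__(self, batch_size=10, lead_time=2,
                 w_profit=1.0, w_emissions=1.0, seed=0):
        self.batch_size = batch_size
        self.w_profit = w_profit
        self.w_emissions = w_emissions
        self.rng = random.Random(seed)
        self.inventory = 0                       # units on hand
        self.backlog = 0                         # unmet demand carried forward
        self.pipeline = deque([0] * lead_time)   # orders still in transit
        # Hypothetical economic and emission parameters.
        self.price = 5.0              # revenue per unit sold
        self.unit_cost = 2.0          # purchase cost per unit ordered
        self.fixed_order_cost = 20.0  # charged whenever an order is placed
        self.holding_cost = 0.5       # per unit left on hand each period
        self.backorder_cost = 2.0     # per backlogged unit each period
        self.truck_emissions = 8.0    # per order placed (one truck trip)
        self.storage_emissions = 0.25 # per unit left on hand each period

    def step(self, batches, demand=None):
        """Place an order of `batches` batches, then play out one period."""
        quantity = batches * self.batch_size
        # The new order joins the pipeline; the oldest one arrives now.
        self.pipeline.append(quantity)
        self.inventory += self.pipeline.popleft()

        if demand is None:
            demand = self.rng.randint(0, 20)  # assumed uniform demand

        # Serve backlogged orders and new demand from stock on hand.
        needed = self.backlog + demand
        sold = min(self.inventory, needed)
        self.inventory -= sold
        self.backlog = needed - sold

        ordering = (self.fixed_order_cost + self.unit_cost * quantity) if batches > 0 else 0.0
        costs = (ordering
                 + self.holding_cost * self.inventory
                 + self.backorder_cost * self.backlog)
        profit = self.price * sold - costs
        emissions = ((self.truck_emissions if batches > 0 else 0.0)
                     + self.storage_emissions * self.inventory)

        # Weighted reward: profit adds, emissions subtract.
        reward = self.w_profit * profit - self.w_emissions * emissions
        return reward, profit, emissions
```

A learning agent would call `step` repeatedly, observe the reward, and adjust which batch counts it prefers in each situation. Returning profit and emissions alongside the reward keeps the two goals visible separately, which is the point of treating them as distinct objectives.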

Comparing four learning strategies

Four popular reinforcement learning algorithms are tested under identical conditions: Proximal Policy Optimization (PPO), Phasic Policy Gradient (PPG), Advantage Actor–Critic (A2C), and Double Deep Q-Network (DDQN). All of them see the same demand patterns and cost and emission parameters. The researchers track how quickly each algorithm “settles” into a stable policy, how much cumulative profit it earns, and how much carbon it produces. They also repeat experiments under two different demand patterns (a wide uniform range and a narrower Poisson distribution) to check whether the results hold when the environment becomes more predictable.
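To see why a Poisson pattern counts as "narrower" than a wide uniform one, the sketch below draws samples from both and compares their spread. The specific range (0 to 20) and Poisson mean (10) are assumptions chosen so both patterns share the same average; the summary does not state the paper's actual values.

```python
import math
import random
import statistics


def uniform_demand(rng, low=0, high=20):
    """Every integer demand between low and high is equally likely."""
    return rng.randint(low, high)


def poisson_demand(rng, mean=10.0):
    """Poisson draw via Knuth's multiplication method (adequate for small means)."""
    threshold = math.exp(-mean)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def summarize(sampler, n=20000, seed=0):
    """Return the sample mean and variance of n draws from sampler."""
    rng = random.Random(seed)
    draws = [sampler(rng) for _ in range(n)]
    return statistics.mean(draws), statistics.pvariance(draws)


if __name__ == "__main__":
    for name, sampler in [("uniform", uniform_demand), ("poisson", poisson_demand)]:
        mean, var = summarize(sampler)
        print(f"{name:8s} mean={mean:.2f} variance={var:.2f}")
```

Under these assumed settings both patterns average about 10 units per period, but the uniform draws vary far more (theoretical variance of roughly 36.7 versus 10 for the Poisson), so the Poisson environment is easier to anticipate.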

What the algorithms learned to do

The head-to-head comparison reveals distinct personalities for each method. PPG consistently achieves the highest profit, at the cost of only a modest increase in emissions relative to its competitors. PPO comes in second on profit but delivers slightly lower emissions, offering what the authors describe as a balanced trade-off between money and carbon. DDQN learns good policies faster than the others, reaching stability in fewer training episodes, but ultimately settles on decisions that generate lower overall profit, though with relatively low emissions. A2C sits in the middle on profit while tending to generate the highest emissions. When demand instead follows the narrower Poisson distribution, PPG remains the profit leader and DDQN remains the fastest learner, reinforcing the main conclusions.

Tuning priorities between green goals and earnings

To mimic different business priorities, the authors adjust the weights that tell the agent how much to value profit versus emissions in its reward. As expected, putting more weight on profit leads to higher earnings but also more carbon, while emphasizing emissions lowers profits. However, each algorithm reacts differently. PPO shows the most stable and predictable behavior across weight settings, maintaining reasonable profits and emissions. PPG tends to cluster around high-profit solutions with limited sensitivity to small changes in weights. DDQN and A2C show more irregular shifts in performance. When emissions are given an extreme weight, all algorithms fall into nearly zero-ordering strategies that avoid emissions but also lose money. This edge case is unrealistic but informative, because it shows the limits of the reward design.
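The pull toward zero ordering can be reproduced with a much smaller stand-in than the paper's environment. The sketch below assumes a one-period model with five candidate order sizes, demand spread evenly over 0 to 20 units, and made-up cost and emission factors; it simply picks the order size with the best weighted score, with no learning involved.

```python
def expected_outcome(q, demands=range(21), price=5.0, unit_cost=2.0,
                     holding=0.5, shortage=2.0, truck=8.0, storage=0.25):
    """Expected (profit, emissions) of ordering q units in a one-period toy model.

    All parameter values are hypothetical; demand is equally likely to be
    any value in `demands`.
    """
    demands = list(demands)
    n = len(demands)
    sold = sum(min(q, d) for d in demands) / n       # expected units sold
    left = sum(max(q - d, 0) for d in demands) / n   # expected leftover stock
    short = sum(max(d - q, 0) for d in demands) / n  # expected unmet demand
    profit = price * sold - unit_cost * q - holding * left - shortage * short
    emissions = (truck if q > 0 else 0.0) + storage * left
    return profit, emissions


def best_order(w_profit, w_emissions, candidates=(0, 5, 10, 15, 20)):
    """Order size that maximizes w_profit * profit - w_emissions * emissions."""
    def score(q):
        profit, emissions = expected_outcome(q)
        return w_profit * profit - w_emissions * emissions
    return max(candidates, key=score)


if __name__ == "__main__":
    for w_e in (0.0, 1.0, 3.0, 5.0, 10.0):
        q = best_order(1.0, w_e)
        profit, emissions = expected_outcome(q)
        print(f"w_emissions={w_e:4.1f} -> order {q:2d}, "
              f"profit {profit:6.2f}, emissions {emissions:5.2f}")
```

With these assumed numbers, raising the emissions weight first shifts the preferred order to a smaller quantity and eventually to zero, where emissions vanish but expected profit is negative because unmet demand is penalized. That mirrors the edge case described above, though the real agents reach it through training rather than enumeration.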

What this means for real-world decision makers

For managers, the study suggests that reinforcement learning can serve as a flexible tool to explore trade-offs between profits and carbon emissions, even when no carbon tax or cap-and-trade system is in place. PPG appears best suited for firms that prioritize profit while still caring about emissions, whereas PPO offers the most dependable balance and robustness when preferences or conditions change. DDQN may appeal when rapid deployment and fast learning are crucial, and A2C can serve as a simpler baseline in resource-limited settings. The authors caution that their model relies on simplifying assumptions about demand, lead times, and capacity, and that extreme emphasis on emissions can push the AI into unrealistic policies. They argue that future work should relax these assumptions and search more fully for the range of possible profit–emissions compromises. Still, this study provides an early roadmap for using learning algorithms to make inventory decisions that are both financially and environmentally smarter.

Citation: Sorour, A., Sadek, Y. & Elshalakani, M. Multi-objective inventory optimization using reinforcement learning: a comparative study on profitability and carbon emissions. Sci Rep 16, 13635 (2026). https://doi.org/10.1038/s41598-026-44293-y

Keywords: inventory management, reinforcement learning, carbon emissions, sustainable supply chains, multi-objective optimization