Clear Sky Science

Assessment of artificial intelligence-based control algorithms to be implemented in an affordable transradial myoelectric prosthesis

New Ways to Make Artificial Hands More Accessible

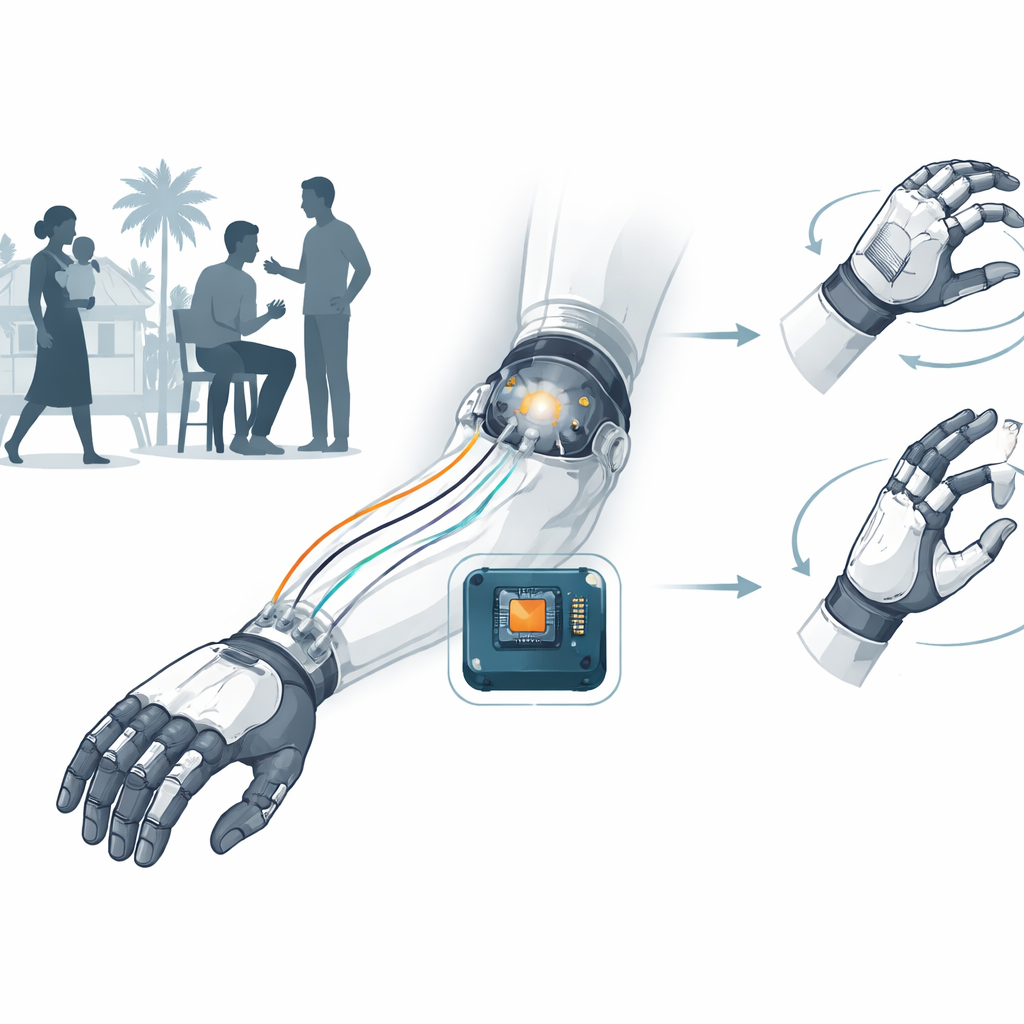

For millions of people living with forearm amputations, especially in low- and middle-income countries, advanced artificial hands remain out of reach due to cost and technical complexity. This study explores how smart computer programs can translate tiny electrical signals from muscles into simple hand movements, with the goal of building an affordable prosthetic hand that is both reliable and tailored to each user.

Why Muscle Signals Matter

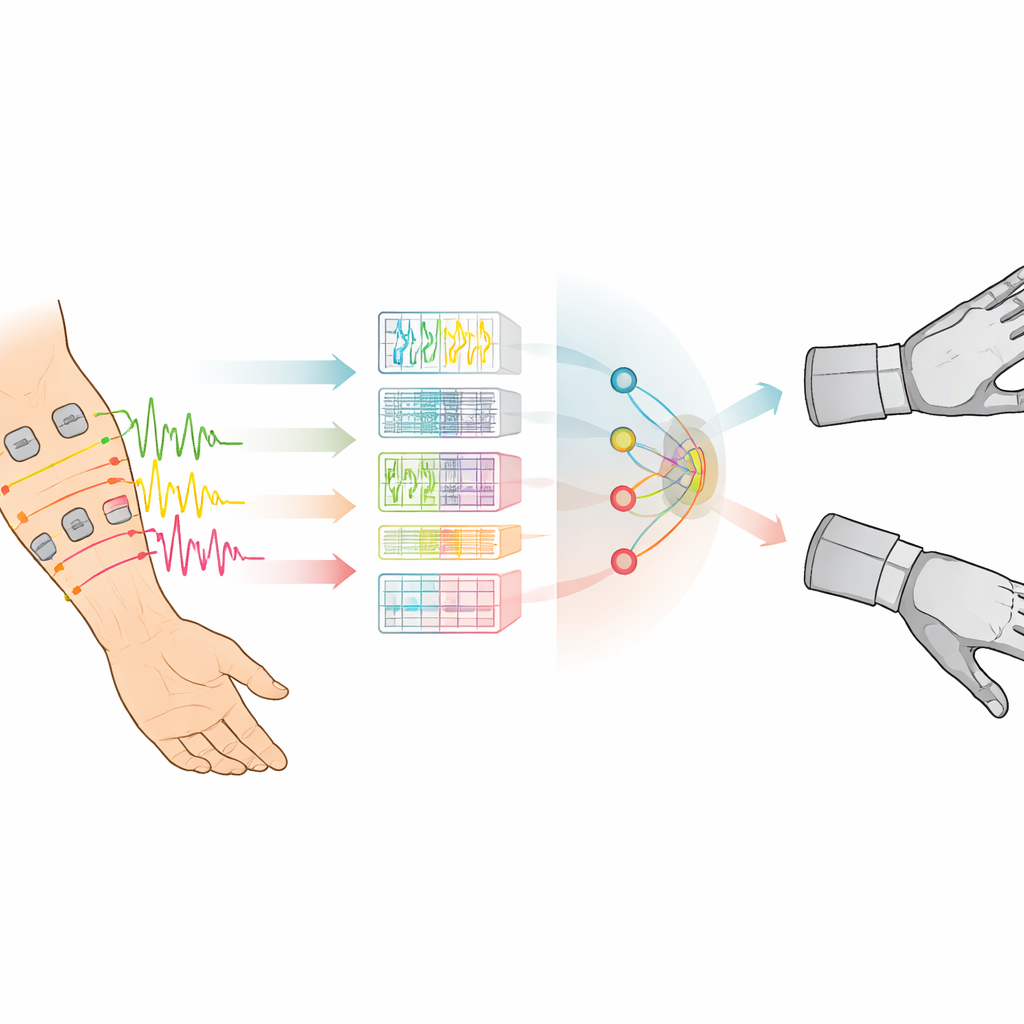

Whenever we move our hands, our muscles produce faint electrical signals that can be picked up at the skin surface. These signals, recorded with a technique known as surface electromyography (sEMG), can reveal what movement a person is trying to make, even if the hand is missing. Many modern prosthetic hands rely on these signals, but in real life they are messy and unstable. Sensor pads can shift, muscles may be weak or scarred, and arm position changes the signals. Systems that work well on people without amputations often fail when used by actual prosthesis users, who may have fewer functional muscles and more varied anatomy. This gap has made it difficult to design prosthetic control systems that are accurate, comfortable, and affordable for the people who need them most.

Building a Real-World Data Foundation

To tackle this problem, the researchers collected a new dataset from 20 adults in Peru who had forearm-level limb loss from either trauma or congenital conditions. Each person wore two small wireless sensor units on their residual forearm, with six sensing points spread over the main flexor and extensor muscle groups. Participants attempted three simple wrist and hand gestures (bending the wrist, extending it, and pinching with the thumb and middle finger) while seated and standing, and with different elbow and shoulder positions. In total, each person performed 240 gesture attempts, generating hundreds of files that capture how muscle signals change with posture and effort. By focusing only on people with amputations and standardizing how electrodes were placed, the team created a realistic, publicly available dataset specifically designed for prosthetic control research.

Teaching Algorithms to Read the Body

With this dataset in hand, the team tested four types of machine learning algorithms: neural networks, random forests, gradient boosting trees, and decision trees. They chopped each muscle signal into short overlapping time windows, mimicking how a real prosthetic hand would continuously listen to the body. From each window they extracted a small set of numerical features that capture signal strength, variation, and complexity across all six channels. To avoid redundancy and reduce computational load, they used a distance-based method to select the five most informative features. Instead of asking one algorithm to recognize several movements at once, they built a two-step "stacked" model. The first step decides whether the person is resting or moving; the second step, called only when movement is detected, decides whether the action is wrist flexion or extension.
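The windowing and two-step idea described above can be illustrated with a minimal Python sketch. It is not the authors' code. The two features shown (root-mean-square amplitude and zero-crossing count), the single-channel input, and the names `sliding_windows`, `window_features`, and `stacked_predict` are assumptions made for illustration, and the two stage functions are simple thresholds standing in for trained models such as random forests.

```python
import math
from typing import Callable, List, Sequence


def sliding_windows(signal: Sequence[float], size: int, step: int) -> List[Sequence[float]]:
    """Cut one channel into windows of `size` samples that start every `step` samples.

    Choosing step < size makes consecutive windows overlap.
    """
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, step)]


def rms(window: Sequence[float]) -> float:
    """Root-mean-square amplitude, a common measure of signal strength."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def zero_crossings(window: Sequence[float]) -> int:
    """Count sign changes between neighbouring samples, a rough complexity measure."""
    return sum(1 for a, b in zip(window, window[1:]) if a * b < 0)


def window_features(window: Sequence[float]) -> List[float]:
    """Illustrative feature vector for one window (assumed features, one channel)."""
    return [rms(window), float(zero_crossings(window))]


def stacked_predict(
    features: List[float],
    is_moving: Callable[[List[float]], bool],
    which_movement: Callable[[List[float]], str],
) -> str:
    """Two-step decision: stage 1 separates rest from movement,
    stage 2 runs only when movement was detected."""
    if not is_moving(features):
        return "rest"
    return which_movement(features)


# Hypothetical stand-ins for trained classifiers (thresholds chosen arbitrarily).
def toy_is_moving(f: List[float]) -> bool:
    return f[0] > 0.5


def toy_which_movement(f: List[float]) -> str:
    return "flexion" if f[1] > 1 else "extension"
```

In a real system each stage would be a model fitted to a user's recordings, and the feature vector would combine all six channels. The benefit of the split is that the second model only ever has to tell movements apart, never rest from movement.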

How Well the System Performed

The stacked models built from decision-tree families, especially random forests and gradient boosting, performed best. Using only five key features and slightly longer time windows, the combined approach reached average accuracies above 97% in distinguishing rest, flexion, and extension for individual users. Neural networks, by contrast, were less stable and more sensitive to differences between people. The study also examined which user characteristics influenced performance. People with congenital limb differences and those with more distal (farther from the elbow) amputations tended to achieve higher accuracy, likely because their residual muscles are healthier and better defined. Participants who had lived with limb loss for an intermediate length of time also showed particularly strong results, suggesting that long-term adaptation of muscles and movement habits matters.

From the Lab to a Low-Cost Device

To see whether these algorithms could run on affordable hardware, the team deployed them on a compact Raspberry Pi Zero 2 W computer, a platform small enough to fit inside a prosthetic hand. Models using shorter time windows and tree-based methods were able to classify wrist movements in near real time, though some larger configurations exceeded the device’s limits and will require further optimization. Initial tests with one participant showed that stacked models using gradient boosting could accurately identify intended movements in a variety of arm positions, while majority voting over recent predictions helped smooth out brief misreads.
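Majority voting over recent predictions can be sketched in a few lines of Python. This is an illustrative version only: the class name `MajorityVoter` and the history length of three predictions are assumptions, since the summary does not state how many recent predictions the team used.

```python
from collections import Counter, deque


class MajorityVoter:
    """Keep the last `history` predictions and report the most frequent one."""

    def __init__(self, history: int = 3) -> None:
        # A deque with maxlen discards the oldest label automatically.
        self.recent = deque(maxlen=history)

    def update(self, label: str) -> str:
        """Add the newest prediction and return the current majority label."""
        self.recent.append(label)
        return Counter(self.recent).most_common(1)[0][0]


# A single stray "flexion" in a stream of "rest" predictions is voted away.
voter = MajorityVoter(history=3)
stream = ["rest", "rest", "flexion", "rest", "rest"]
smoothed = [voter.update(label) for label in stream]
```

The trade-off is responsiveness: a longer history suppresses more misreads, but the hand takes more windows to react when the user really does change movement.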

What This Means for Future Artificial Hands

In plain terms, this study shows that a smart but relatively simple combination of algorithms can reliably interpret muscle signals from real prosthesis users and can be run on inexpensive electronics. By grounding their work in a dedicated dataset from people with amputations and carefully tuning the models for each individual, the authors outline a path toward low-cost, personalized prosthetic hands that respond naturally to a user’s intentions. The next steps will be to embed this system into physical devices and test it during everyday activities, moving closer to artificial hands that are not only technically advanced but also widely accessible and easier to live with.

Citation: Garcia, J.G., Luque, E.F., Romero, E. et al. Assessment of artificial intelligence-based control algorithms to be implemented in an affordable transradial myoelectric prosthesis. Sci Rep 16, 14382 (2026). https://doi.org/10.1038/s41598-026-43000-1

Keywords: myoelectric prosthesis, surface electromyography, machine learning, upper limb amputation, prosthetic control