Clear Sky Science

2D grid map creation based on RGBD-camera and LiDAR data

Smarter Robot Maps for Everyday Spaces

From warehouse robots dodging pallets to home robots weaving around furniture, machines that move through the world need good maps to stay safe. Yet the sensors they use to see—laser scanners and depth cameras—each have blind spots. This study shows how combining a simple laser scanner with an inexpensive depth camera, and automatically correcting the camera’s errors on the fly, can give small indoor robots clearer, safer maps of their surroundings.

Why Single Sensors Fall Short

Today’s robots often rely on either a depth camera (which sees color and distance) or a laser scanner (which measures distance using light pulses). Depth cameras are cheap and compact, but they struggle in dim light, bright glare, or beyond a few meters, and their distance readings slowly drift away from the truth as range increases. Laser scanners, by contrast, are extremely precise and work in the dark, but they usually sense only a thin horizontal slice of the world and can miss objects that sit above or below that plane, like low pallets, overhanging shelves, or hollow structures. On top of that, laser systems tend to be more expensive and computationally heavy. Alone, neither sensor type delivers the rich, trustworthy view needed for tight spaces and crowded indoor logistics hubs.

Blending Two Views into One Clear Picture

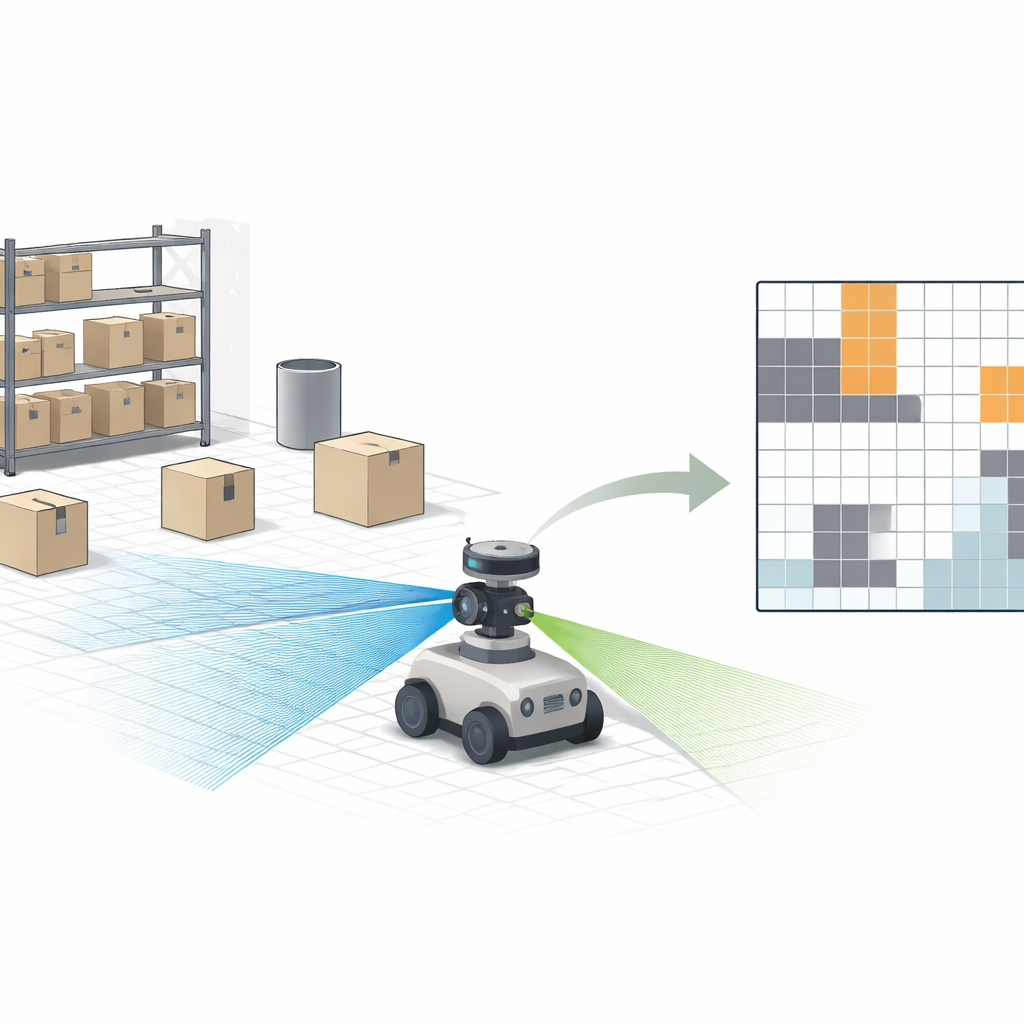

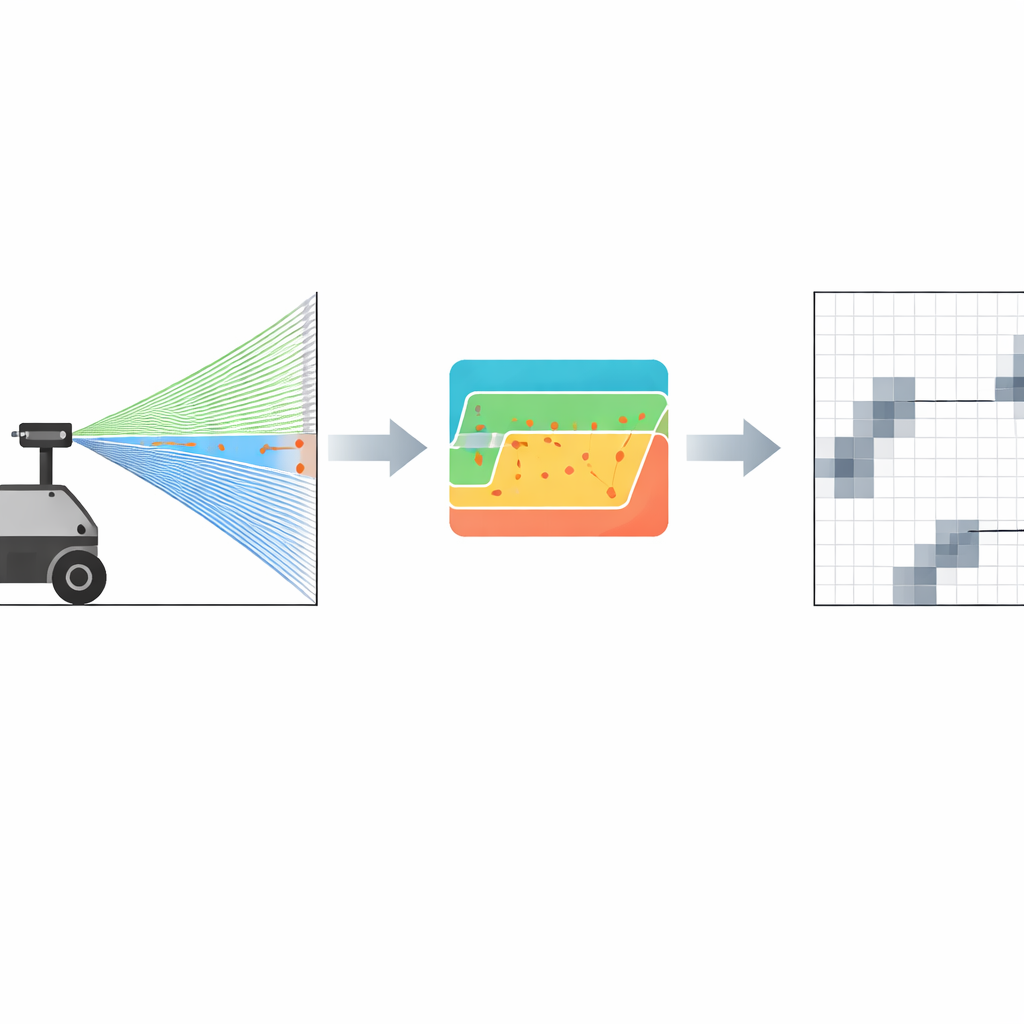

The authors tackle this gap by fusing the strengths of both sensors into a single 2D grid map—the kind of map where the floor is divided into small squares that are marked as either free or occupied. Their robot carries a low-cost RGBD (color plus depth) camera and a planar LiDAR scanner. First, they align all measurements into a common reference frame, so that a point seen by the camera or the laser ends up in the same place on the map. Then, instead of trying to keep a full 3D model, they project only the relevant 3D points down onto the floor plane, filtering out ceiling and floor clutter. A probabilistic scheme updates each grid cell based on how trustworthy each sensor is at a given distance: the laser readings are treated as highly reliable over the working range, while the camera’s influence gently fades with distance as its noise grows.
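The projection and weighting steps described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes points are already expressed in a common robot frame, uses a standard log-odds occupancy update, and marks only occupied cells (free-space ray tracing is omitted). The cell size, height limits, log-odds increment, and the linear fade in `camera_weight` are all hypothetical values chosen for the example.

```python
import numpy as np

# All constants are illustrative assumptions, not values from the study.
CELL_SIZE = 0.05          # metres per grid cell
GRID_SHAPE = (200, 200)   # rows (y), columns (x); the robot sits at the grid centre
Z_MIN, Z_MAX = 0.05, 1.5  # height band kept after dropping floor and ceiling points
L_OCC = 0.85              # log-odds increment for a fully trusted "occupied" hit
L_MIN, L_MAX = -4.0, 4.0  # clamp so cells can still change their state later


def camera_weight(distance, full_trust=1.0, zero_trust=4.0):
    """Camera trust: 1 up to full_trust metres, fading linearly to 0 at zero_trust."""
    return np.clip((zero_trust - distance) / (zero_trust - full_trust), 0.0, 1.0)


def project_to_cells(points_xyz):
    """Drop floor/ceiling points and map the remaining (x, y) to grid indices."""
    pts = np.asarray(points_xyz, dtype=float).reshape(-1, 3)
    pts = pts[(pts[:, 2] > Z_MIN) & (pts[:, 2] < Z_MAX)]
    cols = np.floor(pts[:, 0] / CELL_SIZE).astype(int) + GRID_SHAPE[1] // 2
    rows = np.floor(pts[:, 1] / CELL_SIZE).astype(int) + GRID_SHAPE[0] // 2
    inside = (rows >= 0) & (rows < GRID_SHAPE[0]) & (cols >= 0) & (cols < GRID_SHAPE[1])
    dist = np.hypot(pts[:, 0], pts[:, 1])  # horizontal range from the robot
    return rows[inside], cols[inside], dist[inside]


def update_grid(log_odds, points_xyz, sensor):
    """Add weighted occupied evidence for one scan; sensor is 'lidar' or 'camera'."""
    rows, cols, dist = project_to_cells(points_xyz)
    weight = np.ones_like(dist) if sensor == "lidar" else camera_weight(dist)
    np.add.at(log_odds, (rows, cols), L_OCC * weight)  # accumulates repeated hits
    np.clip(log_odds, L_MIN, L_MAX, out=log_odds)
    return log_odds


def occupancy_probability(log_odds):
    """Convert log-odds back to a probability in [0, 1]."""
    return 1.0 / (1.0 + np.exp(-log_odds))
```

With these assumed settings, a LiDAR hit adds the full increment to its cell, while a camera hit at about 2.5 m adds roughly half of it, so distant camera noise needs several agreeing observations before a cell is marked occupied.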

Letting the Laser Fix the Camera

A key innovation is a lightweight calibration step that lets the robot continuously correct the camera’s depth bias in the field. When the robot faces a flat wall, it uses the laser’s millimeter-accurate distance as “ground truth.” A robust fitting procedure estimates the precise distance to that wall from the laser data, even in the presence of noise. The system then compares this to what the camera reports and adjusts a single depth offset so that the camera’s average reading matches the laser’s. Crucially, this process does not require elaborate checkerboard patterns, motion-controlled arms, or full six-degree-of-freedom calibration; it can be triggered quickly in a real warehouse whenever a suitable flat surface is in view. This on-the-fly tuning directly reduces systematic camera errors that would otherwise cause the robot to misjudge where obstacles are.
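A rough sketch of this wall-based correction follows. The summary does not name the robust fitting procedure, so the example swaps in a basic RANSAC line fit with a least-squares refinement. It also assumes the offset is additive, that the robot faces the wall squarely, and that both sensors are expressed in the same frame. The function names and tolerances are hypothetical.

```python
import numpy as np


def wall_distance_ransac(points_xy, n_iter=200, inlier_tol=0.02, seed=0):
    """Perpendicular distance from the sensor origin to the dominant line in 2D laser points."""
    pts = np.asarray(points_xy, dtype=float)
    rng = np.random.default_rng(seed)
    best_inliers = None
    for _ in range(n_iter):
        i, j = rng.choice(len(pts), size=2, replace=False)
        direction = pts[j] - pts[i]
        norm = np.linalg.norm(direction)
        if norm < 1e-9:
            continue  # degenerate pair, cannot define a line
        normal = np.array([-direction[1], direction[0]]) / norm
        residuals = np.abs((pts - pts[i]) @ normal)
        inliers = residuals < inlier_tol
        if best_inliers is None or inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    if best_inliers is None:
        raise ValueError("no valid line hypothesis found")
    # Refine on the inliers: the direction of least variance is the wall normal.
    wall = pts[best_inliers]
    centroid = wall.mean(axis=0)
    _, _, vt = np.linalg.svd(wall - centroid)
    return float(abs(centroid @ vt[-1]))


def depth_offset(camera_depths, laser_distance):
    """Single additive correction that makes the camera's mean reading match the laser."""
    depths = np.asarray(camera_depths, dtype=float)
    depths = depths[np.isfinite(depths) & (depths > 0)]  # drop invalid pixels
    return laser_distance - depths.mean()


def correct_depth(camera_depths, offset):
    """Apply the stored offset to new camera depth readings."""
    return np.asarray(camera_depths, dtype=float) + offset
```

In use, the robot would call `wall_distance_ransac` on one laser scan of the wall, pass the result to `depth_offset` together with the camera depths sampled from the same wall patch, and then apply `correct_depth` to later frames until the next calibration.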

From Cleaner Depth to Safer Paths

The researchers evaluated their method on a small warehouse-style robot equipped with two low-cost laser scanners and an Intel RealSense depth camera. In controlled tests against the laser ground truth, the camera’s distance error dropped sharply after calibration; for example, at about 2.16 meters, the average error fell from 0.604 meters to 0.340 meters, a reduction of roughly 44 percent. When the team compared different mapping modes, a camera-only setup failed to build a stable map, and even a naive fusion of uncorrected camera data with LiDAR produced many false obstacles because patches of floor were wrongly marked as occupied. The calibrated fusion, however, produced sharper maps with lower uncertainty, detected thin and low-lying obstacles that the laser alone missed, and improved loop-closure accuracy, all while running fast enough for real-time navigation.
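The quoted reduction follows directly from the two error values reported for the 2.16-meter test. A quick check, using only those two numbers:

```python
error_before = 0.604  # metres, mean camera error at about 2.16 m before calibration
error_after = 0.340   # metres, mean camera error after calibration

# Relative reduction: the share of the original error removed by calibration.
reduction = (error_before - error_after) / error_before
print(f"{reduction:.1%}")  # prints 43.7%
```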

What This Means for Real-World Robots

In practical terms, the work offers a simple recipe for making indoor robots safer and more reliable without resorting to expensive, high-end sensors. By letting a precise but limited laser quietly “teach” a cheaper depth camera, and then combining their readings into a common 2D grid, the robot gains a more honest picture of its surroundings—especially for tricky features like hollow racks, wire mesh, and low objects. Although this extra intelligence costs some computing power and does not fully solve issues like extreme lighting, it moves low-cost robots closer to the kind of robust, trustworthy perception needed for busy warehouses, factories, and other indoor spaces where people and machines must safely share the floor.

Citation: Delhibabu, R., Zhukova, N.A. & Gizzatov, A. 2D grid map creation based on RGBD-camera and LiDAR data. Sci Rep 16, 10591 (2026). https://doi.org/10.1038/s41598-026-42698-3

Keywords: robot mapping, sensor fusion, LiDAR, depth camera, indoor navigation