Clear Sky Science

AI-integrated bionic fingertip E-Skin for precision slippage detection in wet environments

Smart touch for slippery everyday life

Anyone who has dropped a wet glass or struggled to hold an oily cooking utensil knows how tricky slippery objects can be. Humans usually manage thanks to the incredible sensitivity of our fingertips, which sense tiny vibrations just before something slips away. This study describes an artificial fingertip "skin" that gives robots and prosthetic hands a similarly refined sense of touch—even when objects are coated with water or oil—opening the door to safer, more agile machines in kitchens, factories, hospitals, and homes.

Why robots need a better sense of slip

Modern electronic skins already let machines feel pressure, temperature, and even humidity. But reliably sensing when an object is about to slip, especially under wet or greasy conditions, has remained a major blind spot. For robots preparing food, washing tools, or handling delicate items in the real world, this is a serious limitation: a secure grip must be firm enough to prevent dropping an object but gentle enough not to crush or damage it. The authors set out to build a wearable fingertip sensor that can detect the onset of sliding on dry, water-wet, and oil-coated surfaces alike, while being flexible, lightweight, and cheap enough for wide use.

Fingerprints as a design blueprint

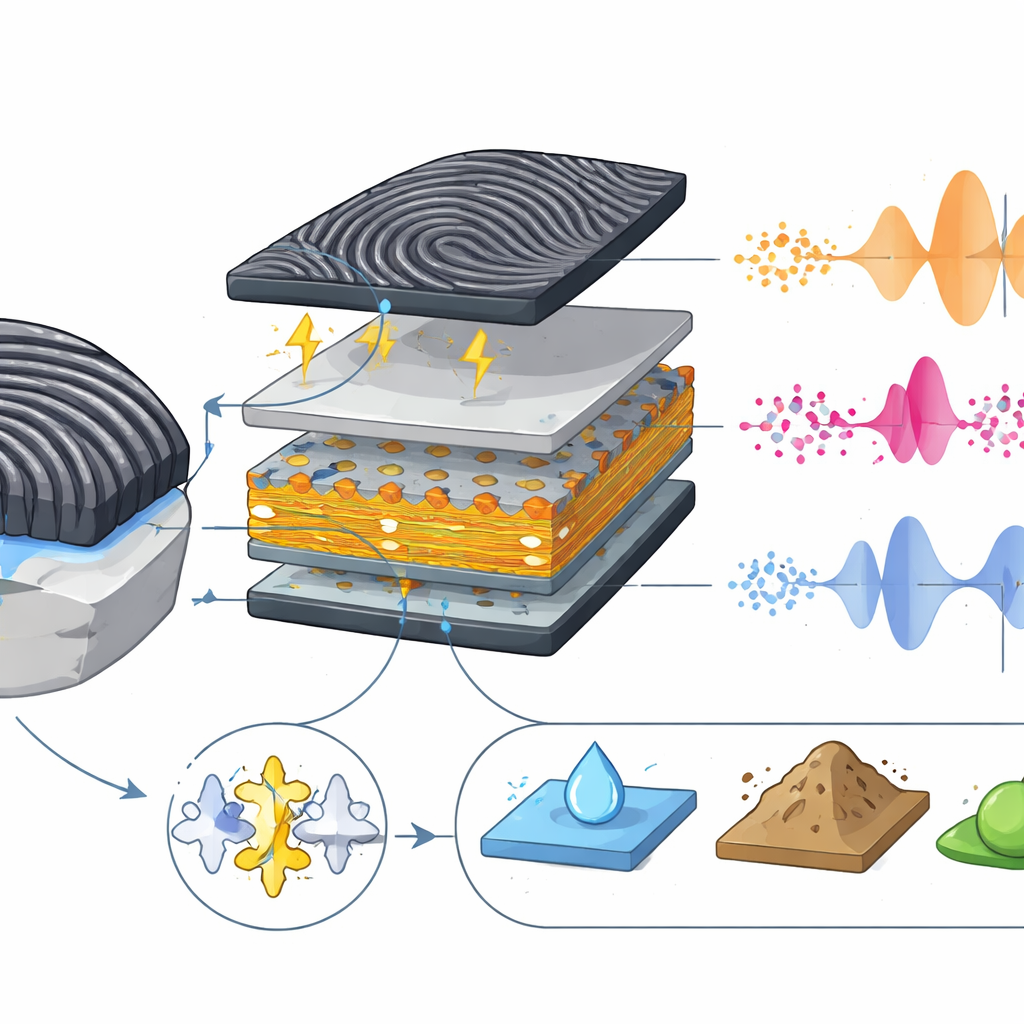

The team took direct inspiration from the human fingertip, whose ridged fingerprints help tune how vibrations travel through the skin. They fabricated a multilayer electronic film entirely by screen printing, a technique well suited to low-cost mass production. The core of the device is a thin layer of piezoelectric plastic, a material that generates electrical charges when it is bent or vibrated. On top of this, they added a soft rubber layer whose surface was carved with a random, fingerprint-like pattern using a carbon dioxide laser. When this patterned surface slides across another material, the ridges repeatedly stick and release, creating tiny vibration bursts that the inner sensing layer converts into voltage signals.

Making a tiny signal loud and durable

To ensure that these vibration signals would be strong and reliable, the researchers mixed a small amount of carbon nanotubes—nanometer-scale tubes of carbon—into the active plastic. This subtle change improved how well the molecules in the layer lined up, boosting its ability to generate charge when moved. They also fine-tuned the heating steps used during printing so the material would crystallize in its most responsive form. Tests showed that the sensor produced relatively large and stable electrical signals, and that its performance held up even after thousands of bending cycles, an important feature for a device meant to be worn on moving fingers or robotic grippers.

Feeling slip in water and oil

The real test was whether the fingertip could sense sliding on wet surfaces. Mounted on an artificial finger, the sensor was drawn across a stainless-steel plate under three conditions: completely dry, covered with water, and coated in oil. In every case, the fingerprint ridges generated regular, wave-like voltage patterns linked to the classic "stick–slip" behavior that occurs as a surface alternately grips and releases. Crucially, the patterned sensor produced clear, strong signals even when oil reduced friction, while an otherwise identical but smooth sensor largely failed under the same conditions. The grooves acted like channels that pushed liquid aside and restored enough contact to produce usable vibrations. The device also captured subtle differences in signals when sliding across materials such as ceramic, glass, and fabric, hinting at its ability to recognize not just slip, but surface type.
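To make the idea of spotting slip from stick-slip vibrations concrete, here is a minimal sketch in Python. It is not the authors' method: the trace is synthetic, and the sample rate, burst frequency, window length, and threshold are all assumed values chosen for illustration. The sketch flags slip onset as the first time window whose root-mean-square (RMS) voltage rises above a fixed threshold.

```python
import math
import random


def sliding_rms(signal, window):
    """RMS of each consecutive, non-overlapping window of samples."""
    values = []
    for start in range(0, len(signal) - window + 1, window):
        chunk = signal[start:start + window]
        values.append(math.sqrt(sum(v * v for v in chunk) / window))
    return values


def detect_slip_onset(signal, window, threshold):
    """Start index of the first window whose RMS exceeds threshold, else None."""
    for i, rms in enumerate(sliding_rms(signal, window)):
        if rms > threshold:
            return i * window
    return None


def synthetic_trace(n_samples, slip_start, sample_rate=1000.0,
                    burst_hz=50.0, seed=0):
    """Hypothetical sensor voltage: quiet noise, then a wave-like burst.

    All numbers here are illustrative assumptions, not measured values.
    """
    rng = random.Random(seed)
    trace = []
    for n in range(n_samples):
        value = rng.gauss(0.0, 0.02)  # baseline electrical noise
        if n >= slip_start:
            # Regular oscillation standing in for stick-slip vibration.
            t = (n - slip_start) / sample_rate
            value += 0.5 * math.sin(2 * math.pi * burst_hz * t)
        trace.append(value)
    return trace


trace = synthetic_trace(n_samples=2000, slip_start=1200)
onset = detect_slip_onset(trace, window=100, threshold=0.1)
print("slip detected at sample:", onset)
```

A fixed threshold is the simplest possible rule. A real gripper would likely need a threshold that adapts to the noise level and to the surface condition, since the text notes that oil weakens the vibrations a smooth sensor can pick up.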

Teaching AI to recognize what the fingertip feels

Because the sensor outputs rich streams of data, the researchers paired it with machine-learning software that could automatically identify what kind of surface it was touching. They extracted simple numerical features from each slip signal, such as how strong the signal was and how fast it fluctuated, and trained a model to tell stainless steel from ceramic, glass, or non-woven fabric under dry, water-wet, and oil-wet conditions. Using cross-validation, in which the model is scored on data held out from training, to guard against overfitting, the system correctly labeled more than 95 percent of the test cases in every setting, and almost all samples when all conditions were mixed together. In a final demonstration, the sensor was attached as electronic skin to a soft robotic hand that grasped everyday foods like cucumbers, potatoes, and carrots. Even when these objects were oiled, the system could still track slippage in real time by analyzing the characteristic vibration patterns.
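The pipeline described above (features from each signal, a trained classifier, and held-out testing) can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the study's model: the summary does not name the exact features or algorithm, so the sketch uses RMS amplitude for "how strong", zero-crossing rate for "how fast it fluctuated", and a nearest-centroid classifier as a stand-in. The signals are synthetic, and the amplitude and frequency assigned to each surface in `SURFACES` are invented.

```python
import math
import random

# Invented (amplitude, frequency in Hz) per surface, for illustration only.
SURFACES = {
    "stainless steel": (0.6, 40.0),
    "ceramic": (0.4, 80.0),
    "glass": (0.2, 20.0),
    "non-woven fabric": (0.3, 120.0),
}


def extract_features(signal):
    """Return (RMS amplitude, zero-crossing rate) for one slip signal."""
    rms = math.sqrt(sum(v * v for v in signal) / len(signal))
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if a * b < 0)
    return (rms, crossings / (len(signal) - 1))


def make_signal(amplitude, freq_hz, rng, n=500, sample_rate=1000.0):
    """Synthetic slip signal: a noisy sine wave with a random phase."""
    phase = rng.uniform(0.0, 2 * math.pi)
    return [amplitude * math.sin(2 * math.pi * freq_hz * t / sample_rate + phase)
            + rng.gauss(0.0, 0.01) for t in range(n)]


def centroids(samples):
    """Mean feature vector per label (the 'training' step)."""
    sums = {}
    for feats, label in samples:
        acc = sums.setdefault(label, [0.0, 0.0, 0])
        acc[0] += feats[0]
        acc[1] += feats[1]
        acc[2] += 1
    return {label: (s[0] / s[2], s[1] / s[2]) for label, s in sums.items()}


def predict(model, feats):
    """Label of the centroid closest to the feature vector."""
    return min(model, key=lambda label: math.dist(model[label], feats))


def cross_validate(samples, k=5):
    """k-fold cross-validation: each fold is scored by a model that never saw it."""
    folds = [samples[i::k] for i in range(k)]
    correct = 0
    for i, held_out in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        model = centroids(train)
        correct += sum(predict(model, f) == label for f, label in held_out)
    return correct / len(samples)


rng = random.Random(42)
samples = [
    (extract_features(make_signal(amp * rng.uniform(0.9, 1.1), freq, rng)), name)
    for name, (amp, freq) in SURFACES.items()
    for _ in range(30)
]
rng.shuffle(samples)
accuracy = cross_validate(samples, k=5)
print(f"cross-validated accuracy: {accuracy:.2f}")
```

Because the synthetic classes are deliberately well separated, the score here says nothing about real sensor data. The point is the structure: every prediction is made on a sample the model was not trained on, which is what keeps an accuracy figure from rewarding memorization.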

From artificial fingertips to digital touch

In simple terms, this work shows that copying the structure of human fingerprints and combining it with smart materials and AI can give machines a remarkably human-like sense of when things are about to slip—whether dry, wet, or oily. Because the sensor can be printed, bent, and wrapped around fingers or grippers, it is well suited for future prosthetic hands, collaborative robots, and other devices that must handle fragile, messy, or unpredictable objects. As more of these tactile signals are turned into digital data and analyzed automatically, our physical sense of touch could be mirrored in cyberspace, supporting a future in which robots and humans interact more safely and seamlessly with the slippery world around them.

Citation: Adachi, T., Ozawa, K., Kamanoi, S. et al. AI-integrated bionic fingertip E-Skin for precision slippage detection in wet environments. Sci Rep 16, 14179 (2026). https://doi.org/10.1038/s41598-026-41096-z

Keywords: electronic skin, robotic touch, slip detection, soft robotics, tactile sensing