Clear Sky Science · en

F-Transformer: a federated transformer for efficient and privacy-preserving sequence generation

Why Smaller, Safer Language Tools Matter

Every time you type a message, search the web, or dictate to your phone, software is quietly trying to predict and generate natural-sounding language. Today’s most powerful language models, however, are huge, hungry for computing power, and often require sending sensitive text to central servers. This paper presents a new way to build language models that are both light enough for modest hardware and respectful of user privacy, while still matching or beating the performance of some of today’s headline-making systems.

From Giant Models to Lean Machines

Modern language tools are typically based on transformers, a family of neural networks that excel at handling long sentences and subtle word relationships. Famous systems like GPT-2 and BERT rely on hundreds of millions to billions of internal parameters, which makes them powerful but also difficult to run on everyday devices. They usually train in centralized data centers that must collect large volumes of text, raising concerns about privacy and data ownership. The authors argue that many real-world uses, from hospitals to mobile phones, need language models that are much smaller, less demanding, and able to learn without pulling all the raw data into one place.

Learning Together Without Sharing Secrets

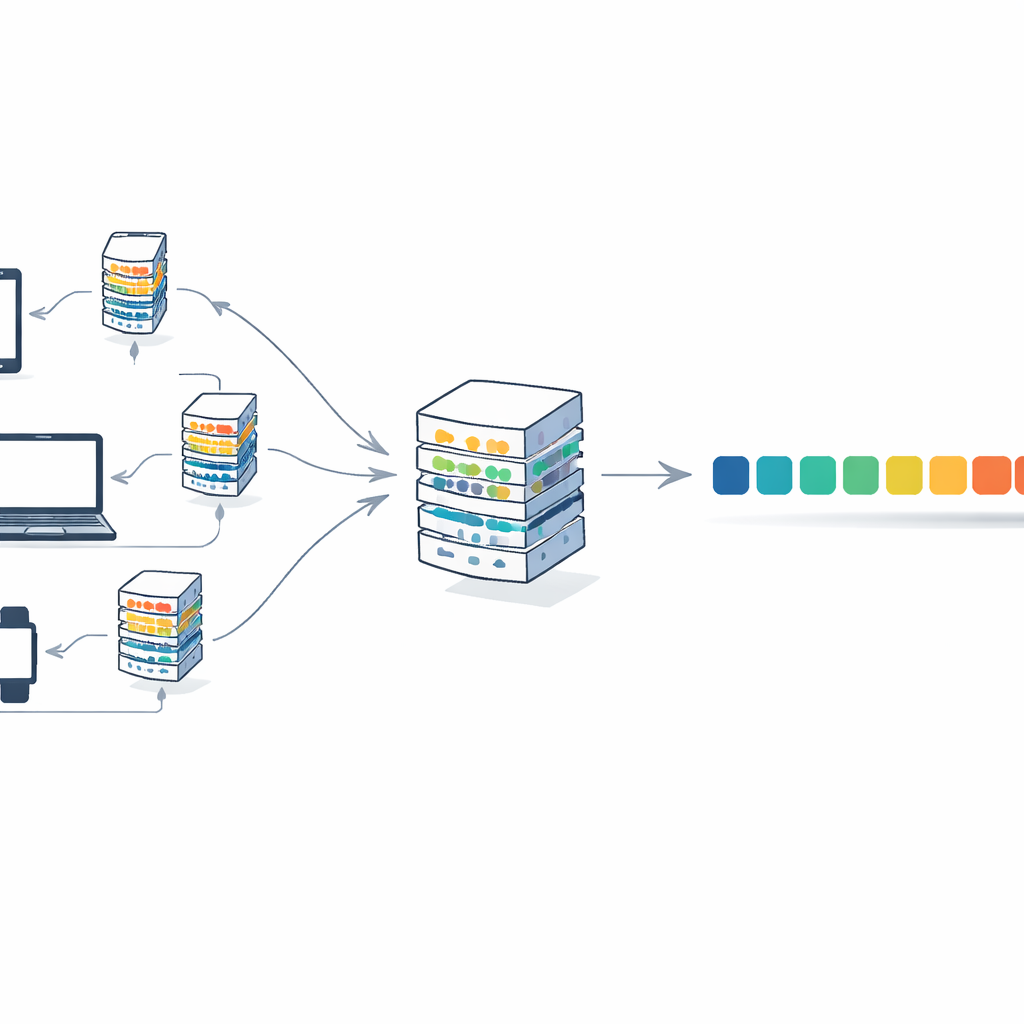

To address this, the paper combines transformers with a training strategy called federated learning. In this setup, many clients—such as institutions or devices—keep their text locally. Each client trains its own copy of the model on its private data and sends only the updated model weights, not the text itself, to a central server. The server averages these updates into a single global model and sends it back. Over many rounds, the model improves by pooling knowledge from diverse sources, yet no raw documents ever leave their original locations. This reduces privacy risks and also spreads the computational burden across clients instead of relying on one large machine.

A Compact Design Built for Sharing

The authors introduce F-Transformer, a transformer architecture designed from the ground up for this distributed, privacy-aware world. Instead of shrinking an existing giant model, they choose lean settings: just 4 attention heads, 4 layers, and 64-dimensional word representations, for a total of about 0.87 million trainable parameters. They outline a three-layer framework: a data layer that handles local text and tokenization, an artificial intelligence layer that runs the compact transformer and the federated averaging procedure, and an application layer that can support uses such as text generation, translation, and question answering across many organizations. They also present a mathematical objective that, at least in theory, blends accuracy and privacy into a single training goal by discouraging updates that reveal too much about individual records.

Performance With Less Power and Memory

To test F-Transformer, the authors use the widely studied WikiText-2 collection of Wikipedia articles, focusing on how well the model predicts the next word in a sequence. They evaluate not only prediction quality but also processor load and memory use, comparing centralized training to their federated setup. Despite its tiny size, F-Transformer achieves a validation perplexity—a standard measure of how well a model anticipates text—that beats several well-known, far larger models, including BERT-Large and GPT-2 variants, when numbers are normalized to the same scale. At the same time, the federated version cuts processor use by about 40 percent and memory consumption by about 34 percent relative to a comparable centralized baseline. The small model size also slashes communication costs: moving model updates between clients and server is over a hundred times cheaper than with GPT-2 small.

What This Means for Everyday AI Use

In plain terms, the study shows that it is possible to build language models that learn from many scattered data sources, keep raw text private, and run on modest hardware—without giving up performance. F-Transformer proves that a carefully designed, compact transformer can rival or surpass much larger systems while being easier to deploy on phones, edge devices, or sensitive institutional networks. Although the paper’s privacy guarantees are mainly architectural rather than formally measured, and real-world tests on more varied data are still to come, the results point toward a future where high-quality language generation does not require massive, centralized, and potentially intrusive AI infrastructure.

Citation: Patel, N., Brahmbhatt, S., Ramoliya, F. et al. F-Transformer: a federated transformer for efficient and privacy-preserving sequence generation. Sci Rep 16, 14093 (2026). https://doi.org/10.1038/s41598-026-40881-0

Keywords: federated learning, transformer language model, privacy-preserving AI, lightweight NLP, sequence generation