Clear Sky Science · en

Generative AI and LLMs in industry: a text-mining analysis and critical evaluation of guidelines and policy statements across 14 industrial sectors

Why This Matters to Everyday Life

Generative AI tools like ChatGPT are racing into offices, hospitals, banks, newsrooms, and even construction sites. They promise faster service, cheaper products, and new kinds of creativity—but also raise tough questions about privacy, safety, and fairness. This paper looks under the hood of how big companies in 14 different industries are actually writing the rules for these systems, revealing where businesses are being careful, where they are taking risks, and what that means for workers, consumers, and citizens.

How Companies Are Using AI at Work

Across sectors—from healthcare and finance to publishing, fashion, and game studios—firms are weaving generative AI and large language models into everyday operations. These tools help answer customer questions, draft documents, support doctors and scientists, flag fraud, translate content, and design products. The authors show that, while many organizations are excited about gains in speed and efficiency, only a minority have formal policies that go beyond experiments and pilots. Surveys cited in the paper reveal that many employees already use AI at work, often without permission, and sometimes pass off AI-written material as their own. This gap between rapid use and slow governance is the core tension the study investigates.

What the Researchers Did

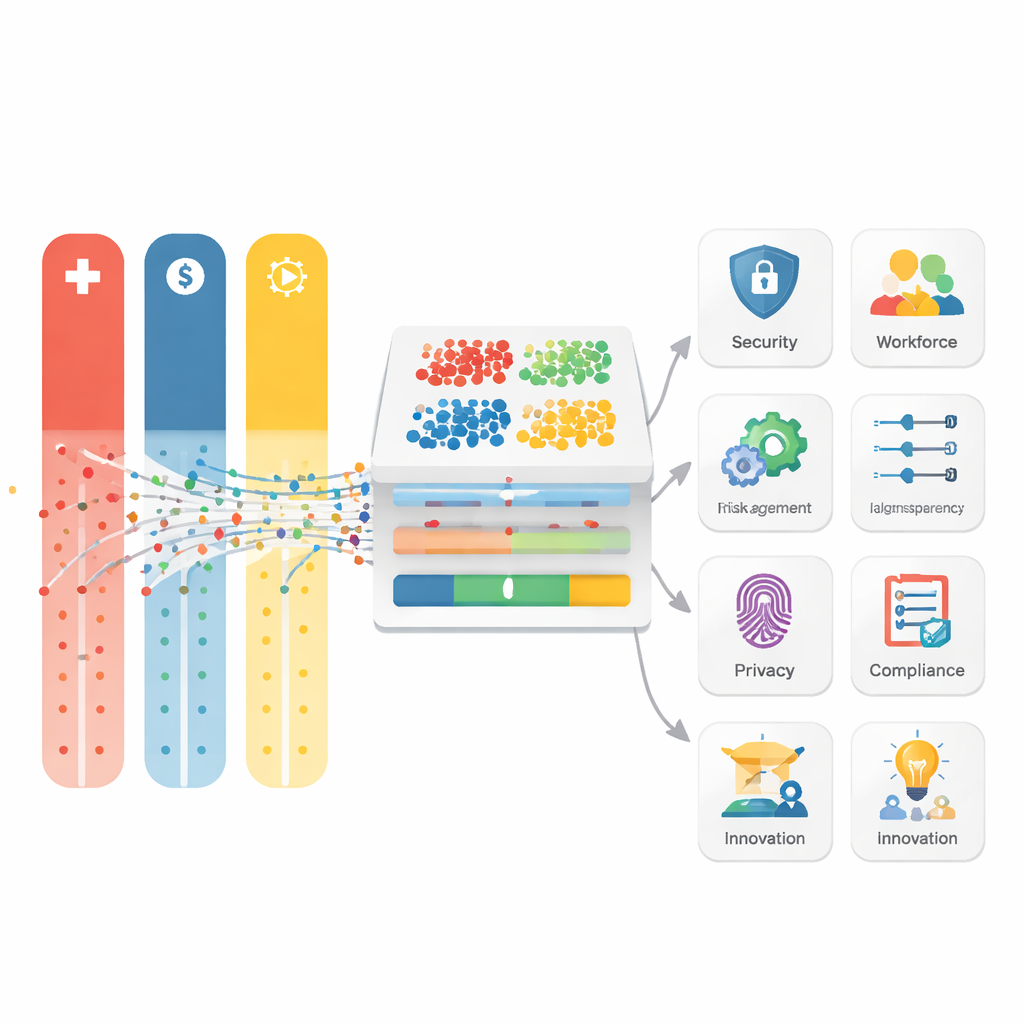

Instead of looking at the technology itself, the authors examined 160 public guidelines and policy statements from large companies in 14 industries around the world. They treated these documents as data, using text-mining techniques to see which ideas and concerns appear most often and how those patterns change by sector and region. They broke the policies into thousands of words and phrases, then applied TF–IDF (a weighting that highlights terms that are common in one document but rare across the whole collection) and K-Means clustering (a way of grouping texts with similar word profiles). This uncovered eight main themes that dominate corporate thinking: data and privacy, safety and human oversight, security and misuse, intellectual property and content integrity, transparency and explainability, risk and compliance, workforce and change management, and innovation through controlled experimentation or “sandboxes.”
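To make the two techniques concrete, here is a minimal Python sketch of TF–IDF weighting followed by K-Means clustering. It is not the authors' pipeline: their preprocessing and parameter settings are not described in this summary, so the simple tokenizer, the smoothed IDF formula, the deterministic seeding, and the four example snippets are all assumptions chosen for illustration. It uses only the standard library, and it groups the snippets into two clusters rather than the study's eight themes.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and keep runs of letters (a deliberately simple tokenizer)."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_vectors(docs):
    """Return the vocabulary and one L2-normalised TF-IDF vector per document."""
    tokenized = [tokenize(d) for d in docs]
    vocab = sorted({t for toks in tokenized for t in toks})
    n = len(docs)
    # Document frequency: how many documents contain each term at least once.
    df = Counter(t for toks in tokenized for t in set(toks))
    # Smoothed IDF (assumed variant, also scikit-learn's default): ln((1+n)/(1+df)) + 1.
    idf = {t: math.log((1 + n) / (1 + df[t])) + 1 for t in vocab}
    vectors = []
    for toks in tokenized:
        counts = Counter(toks)  # raw term frequency
        vec = [counts[t] * idf[t] for t in vocab]
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        vectors.append([x / norm for x in vec])
    return vocab, vectors


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors, k, iters=20):
    """Lloyd's algorithm, seeded with the first k vectors so the result is reproducible."""
    centroids = [list(v) for v in vectors[:k]]
    labels = []
    for _ in range(iters):
        # Assignment step: attach every document to its nearest centroid.
        labels = [min(range(k), key=lambda c: sq_dist(v, centroids[c])) for v in vectors]
        # Update step: move each centroid to the mean of its assigned documents.
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Hypothetical policy snippets, NOT taken from the study's corpus.
docs = [
    "patient consent and patient safety",
    "model risk audit and board oversight",
    "clinical safety and patient consent",
    "board audit of model risk",
]
vocab, vectors = tfidf_vectors(docs)
labels = kmeans(vectors, k=2)
print(labels)  # [0, 1, 0, 1]: healthcare-style vs finance-style snippets
```

Real analyses usually rely on a library implementation (for example scikit-learn's `TfidfVectorizer` and `KMeans`), remove stop words, and run K-Means from several random starting points instead of seeding it with the first k documents.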

Different Rules for Different Risks

The study finds that industries do not treat AI the same way, because their main worries differ. Healthcare and pharmaceuticals focus on consent, patient safety, and traceability across the whole life cycle of a tool, reflecting fears of physical harm to patients. Banks and financial firms stress model risk, audits, and board-level responsibility, worried that bad AI decisions could spread losses through the economy. Publishers and media houses zoom in on intellectual property, authorship, and clear disclosure when AI is used in writing or images. Social media and telecom companies wrestle with privacy and user trust at huge scale, while creative fields like design, fashion, and entertainment emphasize “AI as assistant,” insisting on human review so that automation does not undermine originality or brand identity. The authors argue that these differences are sensible, but they also create a patchwork that can confuse the public and regulators.

What the Text Mining Revealed

By quantifying how often key words appear and which ones appear together, the authors show that some ideas are heavily emphasized while others get surprisingly little attention. “Privacy” and “integrity” appear frequently across sectors, especially in legal and pharmaceutical documents, suggesting strong concern about data handling and ethical conduct. Finance-related texts give heavy weight to predictive analytics and markets, underscoring their appetite for data-driven decisions under tight controls. Yet terms tied to openness and user empowerment, such as disclosure, human-centric design, democratization, skepticism, and misinformation, are rarer, even in news and social media policies. This suggests that companies are more comfortable promising to protect data than promising to explain how systems work or to share power with users.
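Comparisons like these rest on simple counting. The Python sketch below is an illustration, not the authors' method. It counts how many documents mention each watched term and how many mention each pair of terms together. Treating a whole document as the co-occurrence window is an assumption (text-mining studies also use sentences or sliding windows), and the function name `term_stats`, the three policy sentences, and the watch list are hypothetical.

```python
import re
from collections import Counter
from itertools import combinations


def term_stats(docs, watch_terms):
    """Return (documents mentioning each watched term, documents mentioning each term pair)."""
    watch = set(watch_terms)
    doc_freq = Counter()
    pair_counts = Counter()
    for doc in docs:
        # Which watched terms occur at least once in this document?
        present = sorted(watch & set(re.findall(r"[a-z]+", doc.lower())))
        doc_freq.update(present)
        # combinations() over a sorted list yields each unordered pair once, alphabetically.
        pair_counts.update(combinations(present, 2))
    # Report every watched term, including ones that never appear.
    return {t: doc_freq[t] for t in sorted(watch)}, pair_counts


# Hypothetical policy sentences, NOT taken from the study's corpus.
policies = [
    "we protect privacy and uphold integrity in data handling",
    "privacy by design and integrity of research records",
    "disclosure of privacy practices to users",
]
freq, pairs = term_stats(policies, ["privacy", "integrity", "disclosure", "misinformation"])
print(freq)  # {'disclosure': 1, 'integrity': 2, 'misinformation': 0, 'privacy': 3}
print(pairs.most_common())
```

A term that scores zero, like “misinformation” here, is exactly the kind of gap the authors highlight; on a real corpus the counts would usually be normalized by the number of documents in each sector before comparing sectors.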

Toward Smarter, Fairer AI Rules

Drawing these findings together, the authors recommend a “modular” approach to AI governance. Every industry, they argue, should share a common baseline: build privacy, fairness, transparency, and continuous monitoring into AI systems from the start. On top of that, each sector can add its own risk modules—for example, strong safety trials for medical AI, stress tests for banking tools, clear labels and IP rules for creative work, and robust misinformation safeguards for news and social platforms. They also call for live testing environments (sandboxes), AI-assisted audits, and participatory design that involves end users and ethicists early on. For a layperson, the key message is that good AI policy is not just about stopping bad outcomes; it is about shaping these powerful tools so they genuinely serve human needs, share benefits widely, and remain understandable and accountable as they spread through everyday life.

Citation: Jiao, J., Afroogh, S., Chen, K. et al. Generative AI and LLMs in industry: a text-mining analysis and critical evaluation of guidelines and policy statements across 14 industrial sectors. Humanit Soc Sci Commun 13, 410 (2026). https://doi.org/10.1057/s41599-026-06598-1

Keywords: generative AI governance, industry AI policies, AI ethics, large language models, responsible AI