Clear Sky Science

Evaluating the accuracy of ChatGPT model versions for giving care-seeking advice

Why this study matters for everyday health choices

More and more people ask tools like ChatGPT a deceptively simple question: “Do I need the emergency room, a doctor’s visit, or can I stay home and wait?” This study puts 22 versions of ChatGPT to the test on exactly that decision. The findings matter to anyone tempted to rely on artificial intelligence instead of calling a clinic, a nurse hotline, or a trusted doctor.

How the researchers tested ChatGPT’s medical advice

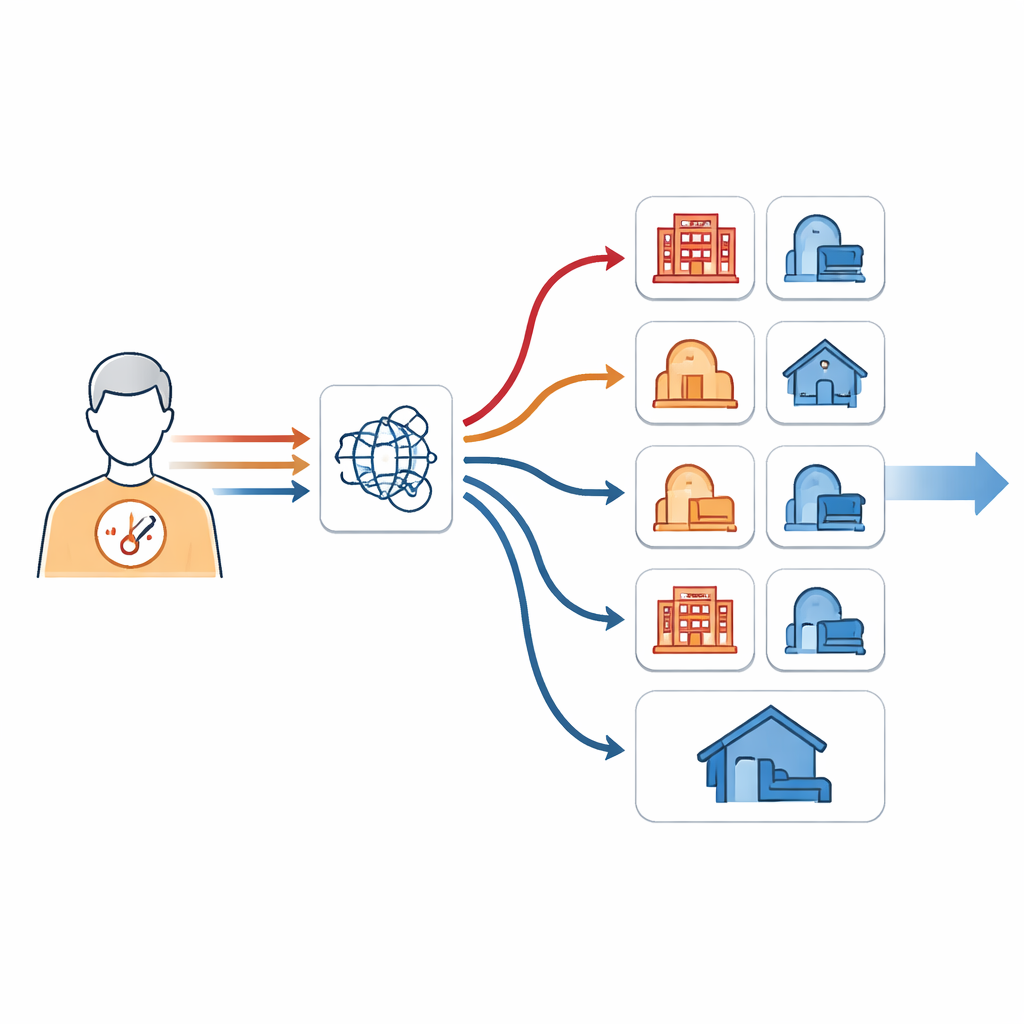

Instead of recruiting live patients, the team used 45 carefully designed patient stories, or vignettes, drawn from real people who had gone online to ask where they should seek care. Each story had already been judged by two physicians, who agreed on whether it called for emergency treatment, a non-urgent doctor visit, or simple self-care at home. The researchers then asked every available ChatGPT version, including older models and newer “reasoning” models, to pick one of these three options. To see how consistent the models were, they put each case to each model ten times, ending up with 9,900 separate recommendations.
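The total of 9,900 follows directly from the design: 45 vignettes, 22 model versions, and ten repetitions of each pairing. A minimal Python sketch of that bookkeeping is shown here; the case and model identifiers are placeholders invented for illustration, not the names used in the study.

```python
from itertools import product

# Counts taken from the study description above.
N_VIGNETTES = 45
N_MODELS = 22
N_REPEATS = 10

# Hypothetical identifiers: the real case and model names are not given here.
vignettes = [f"case_{i:02d}" for i in range(1, N_VIGNETTES + 1)]
models = [f"model_{i:02d}" for i in range(1, N_MODELS + 1)]
repeats = range(1, N_REPEATS + 1)

# One query per (vignette, model, repetition) combination.
queries = list(product(vignettes, models, repeats))

print(len(queries))  # 45 * 22 * 10 = 9900
```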

How accurate were the different versions of ChatGPT?

The best-performing model in the study, called o1-mini, chose the correct option about three-quarters of the time (74%). Some smaller or lighter-weight models did noticeably worse, with accuracy dropping to about 44%. Almost all models were very good at spotting emergencies: although the test set contained only a few emergency cases, the models almost always suggested emergency care when it was truly needed. They were also strong for typical non-emergency doctor visits. The biggest struggle came with self-care. Even the better models often recommended seeing a doctor when simply resting, watching symptoms, and waiting a few days would have been appropriate.

Why ChatGPT tends to “play it safe”

Across the board, the models leaned toward more urgent advice than doctors judged necessary. Older versions almost never suggested too little care, and many newer ones still erred heavily on the side of caution. From a safety standpoint, favoring caution may seem reassuring: it is generally better to send someone to a doctor than to miss a true emergency. But if a system nearly always advises some form of medical visit, its guidance becomes less useful for everyday decisions—and could add to crowding in clinics and emergency departments. It may also stoke anxiety in people who are already worried, teaching them that even mild symptoms always deserve professional attention.
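One way to picture this pattern is to compare each recommendation with the physicians' judgment and label it as correct, too urgent (over-triage), or not urgent enough (under-triage). The Python sketch below illustrates that idea under stated assumptions: the three labels, their ordering, and the example data are all invented for illustration and are not the study's own code or results.

```python
from collections import Counter

# Assumed ordering of the three options, from least to most urgent.
URGENCY = {"self-care": 0, "non-urgent": 1, "emergency": 2}


def classify(recommended, reference):
    """Compare a model's advice with the physicians' reference level."""
    diff = URGENCY[recommended] - URGENCY[reference]
    if diff > 0:
        return "over-triage"   # more urgent than the physicians judged necessary
    if diff < 0:
        return "under-triage"  # less urgent than the physicians judged necessary
    return "correct"


def error_profile(pairs):
    """Return the share of correct, over-triaged and under-triaged answers."""
    if not pairs:
        raise ValueError("need at least one (recommended, reference) pair")
    counts = Counter(classify(rec, ref) for rec, ref in pairs)
    return {label: counts[label] / len(pairs)
            for label in ("correct", "over-triage", "under-triage")}


# Made-up (recommended, reference) pairs, not data from the study.
pairs = [
    ("emergency", "emergency"),
    ("non-urgent", "non-urgent"),
    ("non-urgent", "self-care"),   # a doctor visit advised for a self-care case
    ("emergency", "non-urgent"),   # the emergency room advised for a routine visit
]

print(error_profile(pairs))
```

In this toy example half of the answers are correct and half are over-triaged, with no under-triage, which mirrors the cautious direction of error described above.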

Making use of inconsistency to improve advice

Surprisingly, the same ChatGPT model did not always give the same answer when shown the same patient story. Over multiple runs, some models shifted between different urgency levels for the identical case. Instead of treating this inconsistency purely as a flaw, the researchers tried to use it to their advantage. They combined the ten repeated answers for each case in several ways. When they picked the least urgent recommendation that appeared across those ten runs, overall accuracy rose by about four percentage points, and correct self-care suggestions jumped even more. In other words, when a model sometimes recommended self-care and sometimes a doctor’s visit for the same mild case, trusting the lowest-urgency suggestion often brought the advice closer to the doctors’ judgment.
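The "least urgent answer wins" rule can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' implementation: the urgency labels, the case names, and the repeated answers are invented, and the majority vote is included only as a contrast, since the summary does not say which other combination rules were tried.

```python
from collections import Counter

# Assumed ordering of the three options, from least to most urgent.
URGENCY = {"self-care": 0, "non-urgent": 1, "emergency": 2}


def least_urgent(answers):
    """Pick the lowest-urgency recommendation seen across repeated runs."""
    if not answers:
        raise ValueError("need at least one answer")
    return min(answers, key=URGENCY.__getitem__)


def majority(answers):
    """Pick the most frequent recommendation (shown only for contrast)."""
    if not answers:
        raise ValueError("need at least one answer")
    return Counter(answers).most_common(1)[0][0]


def accuracy(predicted, reference):
    """Share of cases where the combined answer matches the reference."""
    hits = sum(predicted[case] == reference[case] for case in reference)
    return hits / len(reference)


# Made-up reference levels and ten repeated answers per case.
reference = {"mild_cold": "self-care", "rash": "non-urgent", "chest_pain": "emergency"}
runs = {
    "mild_cold": ["non-urgent"] * 7 + ["self-care"] * 3,
    "rash": ["non-urgent"] * 9 + ["emergency"],
    "chest_pain": ["emergency"] * 10,
}

by_majority = {case: majority(answers) for case, answers in runs.items()}
by_least_urgent = {case: least_urgent(answers) for case, answers in runs.items()}

print(accuracy(by_majority, reference))      # 2 of 3 cases correct
print(accuracy(by_least_urgent, reference))  # 3 of 3 cases correct
```

In this toy data the mild case is rescued because three of the ten runs suggested self-care. The same rule lets a single low-urgency outlier decide the outcome, so it trades some protection against under-triage for better self-care advice.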

What this means for people using AI for health decisions

The study’s bottom line is that current ChatGPT models can be helpful for recognizing clear emergencies, but they are not yet reliable enough to guide care-seeking decisions on their own, especially when the choice is between seeing a doctor and staying home. Newer “reasoning” models are more willing to recommend self-care and somewhat better at recognizing when it is enough, but their performance is still far from perfect. Techniques that combine multiple answers from the same model may help developers build safer tools in the future. For now, people should treat ChatGPT’s triage-style advice as one input among many, not as a replacement for local emergency instructions, professional medical advice, or their own common sense.

Citation: Kopka, M., He, L. & Feufel, M.A. Evaluating the accuracy of ChatGPT model versions for giving care-seeking advice. Commun Med 6, 171 (2026). https://doi.org/10.1038/s43856-026-01466-0

Keywords: chatgpt, self-triage, emergency care, health advice, artificial intelligence