Clear Sky Science · en

Enhanced cybersecurity threat detection using novel tri-metaheuristic loss functions in generative adversarial networks with adaptive attention preservation for network traffic augmentation

Why smarter cyber defense matters

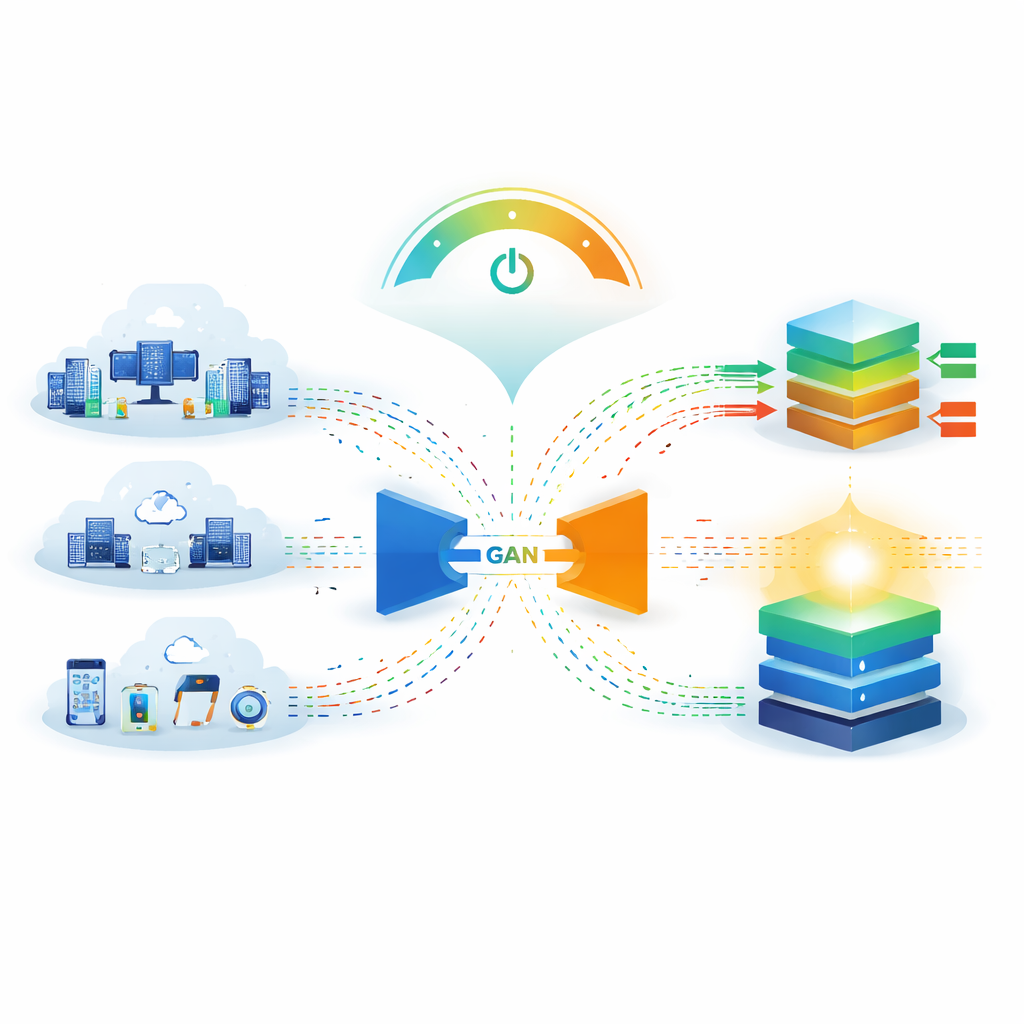

Every time you browse the web, shop online, or connect a gadget at home, silent battles unfold in the background as security systems try to spot malicious traffic among billions of harmless connections. Attackers are constantly inventing new tricks, while defenders struggle with scarce examples of real attacks and with the rising energy costs of ever-larger AI models. This paper explores a way to strengthen digital defenses while cutting the electricity needed to train them. The idea is to teach a specialized generative model to create realistic, diverse "practice attacks" for intrusion detection systems. Rather than inventing entirely new algorithms, the authors combine and adapt proven ideas in a carefully engineered way.

Making more realistic practice data

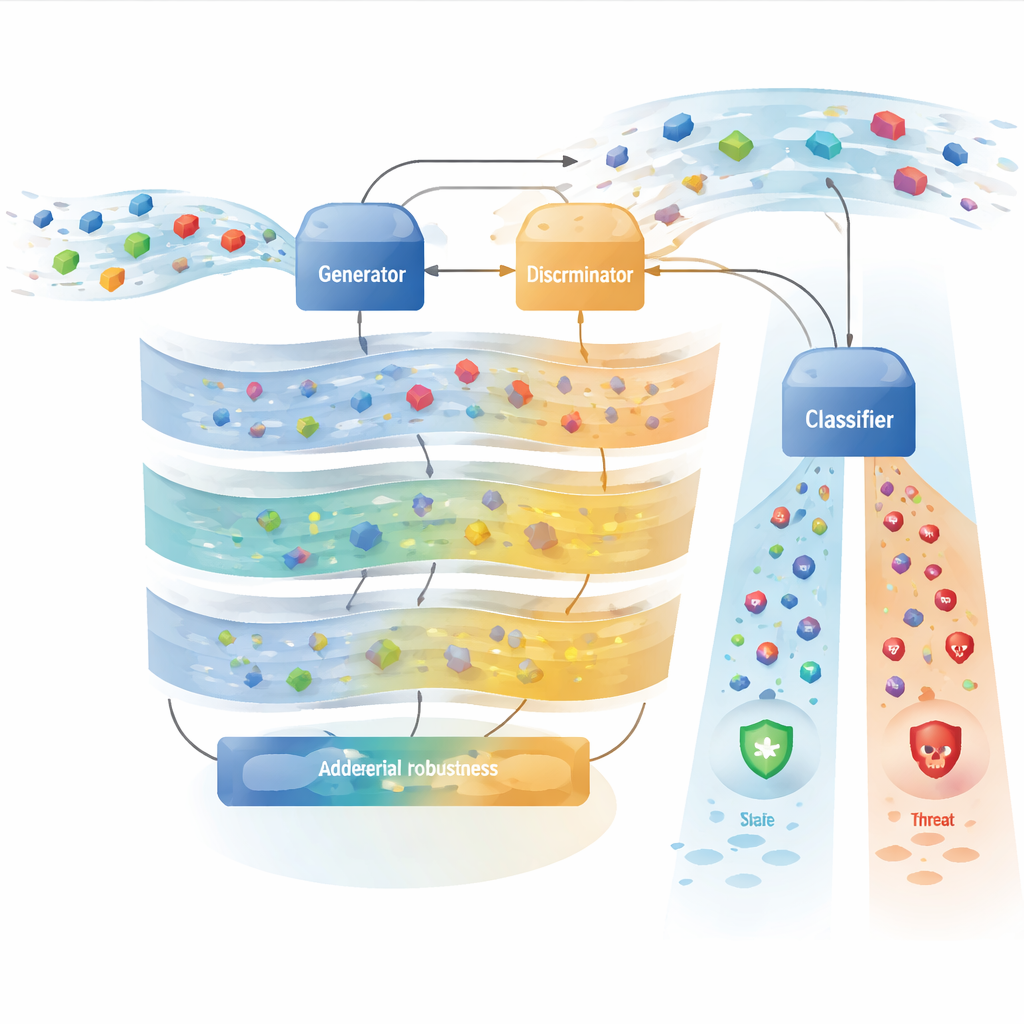

Modern cyber defenses often rely on deep-learning systems that scan network traffic for signs of intrusion. These systems perform best when trained on many examples of both normal behavior and attacks. In reality, normal traffic vastly outnumbers malicious traffic, and some attack types are extremely rare, so models trained on such data tend to ignore them. The authors use a Generative Adversarial Network (GAN), a kind of generative model in which a generator network learns to produce data that a competing discriminator network cannot tell apart from real examples, to create synthetic attack traffic that looks and behaves like real attacks. Instead of training the generator with a single loss function, they design a three-part ("tri-component") loss that rewards it for preserving the features that actually distinguish attacks, keeping the overall statistics of the traffic realistic, and producing a wide variety of attack patterns. The result is a richer, more balanced training set for downstream intrusion detection systems.
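To make the idea of a three-part loss concrete, here is a minimal sketch of how three such goals could be combined into one number. It is not the authors' loss: the per-feature importance weights, the use of mean and variance matching, the pairwise-distance diversity measure, and the weights (1.0, 1.0, 0.1) are all hypothetical choices, and a real GAN would compute these terms on tensors inside an automatic-differentiation framework rather than on plain Python lists.

```python
import math
from itertools import combinations


def column_means(rows):
    # Per-feature mean over a batch of equal-length feature vectors
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def column_vars(rows):
    # Per-feature (population) variance over a batch
    n = len(rows)
    means = column_means(rows)
    return [sum((x - m) ** 2 for x in col) / n for col, m in zip(zip(*rows), means)]


def feature_term(real, fake, importance):
    # Importance-weighted squared gap between real and synthetic per-feature means
    return sum(w * (mr - mf) ** 2
               for w, mr, mf in zip(importance, column_means(real), column_means(fake)))


def statistics_term(real, fake):
    # Squared gap between per-feature variances: a crude distribution-matching proxy
    return sum((vr - vf) ** 2 for vr, vf in zip(column_vars(real), column_vars(fake)))


def diversity_term(fake):
    # Mean pairwise Euclidean distance among synthetic samples (needs >= 2 samples);
    # larger values mean a more varied batch
    pairs = list(combinations(fake, 2))
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


def tri_component_loss(real, fake, importance, weights=(1.0, 1.0, 0.1)):
    # Hypothetical weighting; diversity is rewarded, so it is subtracted
    a, b, c = weights
    return (a * feature_term(real, fake, importance)
            + b * statistics_term(real, fake)
            - c * diversity_term(fake))
```

Lowering this value pushes the generator toward batches whose important features and spread match real attacks while staying varied; the balance between the three goals is set entirely by the weights.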

Borrowing ideas from nature, implemented in math

The framework is inspired by three families of so-called metaheuristic optimization methods named after fireflies, jellyfish, and mantis shrimp. Classic versions of these algorithms search with populations of candidate solutions and do not use gradients, which makes them a poor fit for deep networks trained by gradient descent. The authors therefore do not run those algorithms directly. Instead, they translate the underlying design ideas into smooth, differentiable loss components that can be optimized with standard backpropagation. One group of components, influenced by "firefly" behavior, encourages the synthetic traffic to align with important attack features and to match the statistical distribution of real data. Another group, inspired by "jellyfish" swarms, pulls the internal representations of similar attacks together and pushes those of different attacks apart, while gradually increasing task difficulty over the course of training. A third group, linked conceptually to "mantis shrimp" precision, focuses on robustness: it aims to ensure that generated attacks remain detectable even when small adversarial tweaks are applied.
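The "jellyfish" idea of clustering similar attacks while slowly raising the difficulty can be illustrated with a standard pairwise contrastive loss whose margin grows during training. This is a stand-in chosen for illustration, not the paper's formulation: the linear margin schedule, its endpoints (0.2 to 1.0), and the function names are hypothetical.

```python
import math


def curriculum_margin(step, total_steps, start=0.2, end=1.0):
    # Hypothetical linear schedule: the required separation grows from `start` to `end`
    progress = min(max(step / total_steps, 0.0), 1.0)
    return start + (end - start) * progress


def contrastive_loss(embeddings, labels, margin):
    """Classic pairwise contrastive loss over all pairs in a batch.

    Same-label pairs are penalized by their squared distance (pulled together);
    different-label pairs are penalized only if closer than `margin` (pushed apart).
    """
    total, count = 0.0, 0
    for i in range(len(embeddings)):
        for j in range(i + 1, len(embeddings)):
            d = math.dist(embeddings[i], embeddings[j])
            if labels[i] == labels[j]:
                total += d ** 2
            else:
                total += max(0.0, margin - d) ** 2
            count += 1
    return total / count
```

Early in training a small margin is easy to satisfy; as `curriculum_margin` rises, different attack families must sit further apart before the loss stops penalizing them, which is one simple way to "gradually increase task difficulty".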

Saving energy with focused attention

Beyond accuracy, the authors address an increasingly pressing issue: the energy required to train large security models. Data centers already consume 1–2% of global electricity, and security monitoring runs around the clock. To reduce this cost, the framework incorporates an energy-aware attention mechanism. Rather than spending equal computation on every network flow, a lightweight scoring module estimates how suspicious each sample is. Highly suspicious flows get full, high-resolution attention and high-precision arithmetic; moderately suspicious flows get a medium level of attention; and clearly benign traffic is processed with fewer attention heads, a shorter context window, sparser attention weights, and lower numerical precision. In the authors’ experiments, these design choices cut training energy by about 40% while maintaining detection performance on attack traffic.
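The tiered routing described above can be sketched as a simple lookup from a suspicion score to a compute budget, plus a top-k step that sparsifies attention weights. Everything specific here is hypothetical: the thresholds (0.7 and 0.3), the head counts, sequence lengths, and bit widths per tier, and the cost formula, which is only a rough proxy for self-attention work and not a measurement of energy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierConfig:
    name: str
    heads: int    # number of attention heads used
    max_len: int  # maximum sequence (context) length
    bits: int     # numerical precision in bits


# Hypothetical tier settings, chosen only for illustration
HIGH = TierConfig("high", heads=8, max_len=512, bits=32)
MEDIUM = TierConfig("medium", heads=4, max_len=256, bits=16)
LOW = TierConfig("low", heads=2, max_len=64, bits=8)


def route(suspicion, high_threshold=0.7, low_threshold=0.3):
    # Map a suspicion score in [0, 1] to a compute tier
    if suspicion >= high_threshold:
        return HIGH
    if suspicion >= low_threshold:
        return MEDIUM
    return LOW


def relative_cost(cfg, ref=HIGH):
    # Crude proxy: heads * len^2 (self-attention) * bits, relative to the full tier
    return (cfg.heads * cfg.max_len ** 2 * cfg.bits) / (ref.heads * ref.max_len ** 2 * ref.bits)


def top_k_sparsify(weights, k):
    # Keep the k largest (positive) attention weights, zero the rest, renormalize to 1
    keep = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)[:k]
    kept = {i: weights[i] for i in keep}
    total = sum(kept.values())
    return [kept.get(i, 0.0) / total for i in range(len(weights))]
```

Because attention cost grows with the square of sequence length, shrinking the context for the large volume of benign flows is where a scheme like this would save the most; the real savings depend on hardware and implementation, which is why the paper reports a measured figure rather than a formula.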

Putting the system to the test

The authors evaluate their approach on seven widely used intrusion detection datasets that span enterprise networks, cloud testbeds, and Internet-of-Things environments, covering everything from denial-of-service floods to stealthy infiltration attempts. They train several deep learning classifiers on three kinds of data: the original imbalanced data, data augmented by standard methods such as SMOTE (Synthetic Minority Over-sampling Technique), and data enriched using their GAN-based augmentation. With the new framework, a transformer-based intrusion detector reaches about 98.7% accuracy and an F1-score of 0.987 on the NSL-KDD benchmark, outperforming earlier GAN variants and energy-focused baselines. Careful ablation studies show that roughly half of the improvement comes from basic class rebalancing and traditional augmentation, while the other half is due to the specific combination of loss components and attention mechanisms. The system also shows improved resilience when subjected to common adversarial attack methods, and can transfer reasonably well across datasets without full retraining.
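For comparison with the GAN approach, it helps to see how simple the SMOTE baseline is. The sketch below follows the classic SMOTE recipe: pick a minority-class sample, pick one of its k nearest minority neighbors, and place a new point at a random position on the line between them. It is a plain-Python illustration, not the code used in the paper; in practice a library implementation such as the `SMOTE` class in imbalanced-learn would normally be used, and the default `k=5` and `seed` argument here are illustrative choices.

```python
import math
import random


def smote(minority, n_new, k=5, seed=0):
    """Classic SMOTE: create `n_new` synthetic minority samples, each placed at a
    random point on the segment between a minority sample and one of its k
    nearest minority-class neighbors."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest neighbors of `base` among the other minority samples
        others = [p for j, p in enumerate(minority) if j != i]
        neighbors = sorted(others, key=lambda p: math.dist(base, p))[:k]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # interpolation factor in [0, 1)
        synthetic.append([b + gap * (q - b) for b, q in zip(base, neighbor)])
    return synthetic
```

Every SMOTE sample lies on a straight line between two existing attacks, so it can only fill in gaps between known examples; a generative model trained with richer objectives can, in principle, produce more varied patterns, which is the gap the paper's GAN augmentation targets.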

Where it works and where it still struggles

Despite these gains, the method is not a silver bullet. The authors are explicit that some attack types remain very hard to detect—especially slow, stealthy infiltration attacks, where recall can drop below 30% even with augmentation. In addition, real-world deployment tests across five organizations rely on verified ground truth for only about 1.8% of the collected traffic, meaning operational accuracy estimates are still uncertain. The framework also depends on relatively powerful hardware, such as NVIDIA A100 GPUs, which may not be available in smaller organizations. These caveats underline that the results are promising but bounded by the specific datasets, hardware, and verification procedures studied.
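High overall accuracy and poor recall on a rare attack class can coexist, because accuracy is dominated by the plentiful benign traffic. The short example below uses made-up toy labels (not data from the paper) to show the two metrics side by side.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    # Fraction of all flows classified correctly
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    # Recall for class c = flows correctly predicted as c / all flows truly in c
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {c: hits[c] / n for c, n in totals.items()}


# Hypothetical toy labels (not from the paper): 990 benign flows and 10
# infiltration flows, of which the detector catches only 2
y_true = ["benign"] * 990 + ["infiltration"] * 10
y_pred = ["benign"] * 990 + ["infiltration"] * 2 + ["benign"] * 8

print(f"accuracy: {accuracy(y_true, y_pred):.3f}")
print(f"recall:   {per_class_recall(y_true, y_pred)}")
```

In this toy case accuracy is 99.2% while infiltration recall is only 20%, which is why per-class recall is the more telling number for rare, stealthy attacks.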

What this means for everyday security

In plain terms, this work suggests that carefully integrating several well-known deep-learning tricks—rather than inventing entirely new ones—can yield a cybersecurity system that both catches more attacks and uses less energy to train. By generating realistic synthetic attacks that preserve critical patterns, guiding the learning process with multiple complementary loss components, and dynamically focusing computing power where threats are most likely, the proposed framework offers a more data-efficient and environmentally conscious way to train intrusion detectors. It improves detection on benchmark datasets and shows encouraging robustness to evasive maneuvers by attackers, while openly acknowledging weak spots in rare, highly disguised attacks. For users and organizations, approaches like this move us closer to security tools that are not only smarter and more resilient, but also greener.

Citation: Khalil, H.M., Elrefaiy, A., Elbaz, M. et al. Enhanced cybersecurity threat detection using novel tri-metaheuristic loss functions in generative adversarial networks with adaptive attention preservation for network traffic augmentation. Sci Rep 16, 12074 (2026). https://doi.org/10.1038/s41598-026-46375-3

Keywords: intrusion detection, generative adversarial networks, network traffic augmentation, adversarial robustness, energy-efficient AI