Clear Sky Science

Enhancing educational assessment through automated question classification using a RoBERTa-based ensemble model

Smarter Tests for Modern Classrooms

Every year, teachers write thousands of exam questions to gauge how well students are learning: not just which facts they remember, but how deeply they can think. Deciding which questions test simple recall and which require real problem‑solving matters, yet doing this by hand is slow and often inconsistent. This paper introduces an artificial intelligence system that automatically sorts exam questions by how much thinking they demand of students, which could make tests fairer and leave more time for teaching.

Why the Levels of Thinking Matter

For decades, educators have relied on a framework known as Bloom’s Taxonomy to shape lessons and exams. It describes six layers of thinking, from remembering basic facts through understanding, applying, analyzing, and evaluating, up to creating something new. A good exam should span this full range instead of clustering at the easiest levels. But classifying each question into one of these levels is a judgment call, and different teachers may disagree. Automating this step can make assessments more objective and can quickly reveal whether a test truly challenges students’ minds rather than merely checking memory.

Teaching a Machine to Read Exam Questions

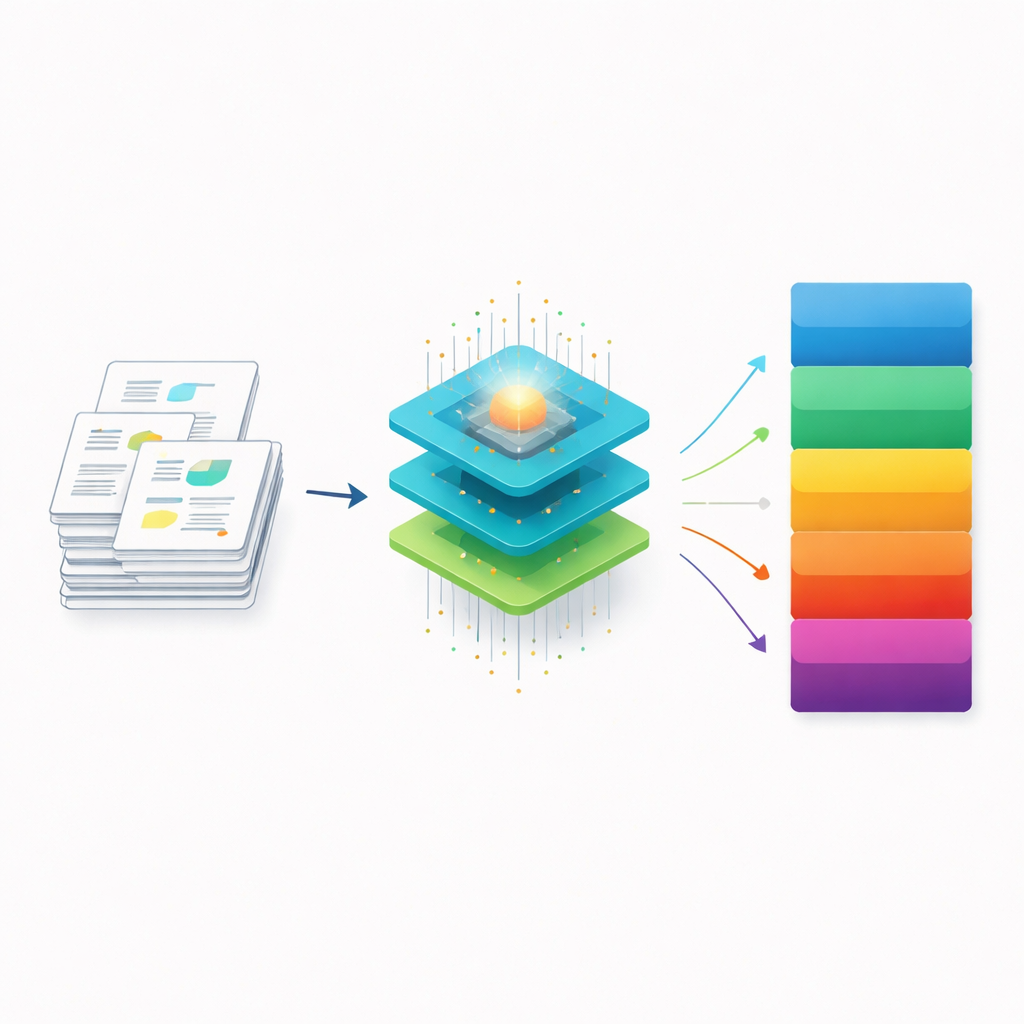

The authors built their system on a powerful language model called RoBERTa, which was pretrained on vast amounts of text to capture subtle shades of meaning. When the model reads an exam question, it converts each word (or word fragment) into a rich numerical representation that reflects how it relates to the words around it. These representations then flow into three specialized neural networks: one follows how information unfolds across the sentence in order, another tracks long‑range patterns, and a third looks for key local phrases. Together, they learn to detect the kinds of wording that signal which level a question targets, for example whether it asks students to recall a fact, explain an idea, apply a method, or create something new.
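The summary describes the three networks only by what they do. The sketch below shows what such classifier heads could look like in PyTorch, assuming (for illustration only) that the sequential reader is a GRU, the long‑range tracker an LSTM, and the phrase detector a one‑dimensional convolution. Each head reads RoBERTa's per‑token vectors and outputs one score per Bloom level. The class names, layer sizes and pooling choices are hypothetical, not the authors' configuration.

```python
import torch
from torch import nn

# Illustrative only: layer types, sizes and pooling are assumptions, not taken from the paper.
NUM_LEVELS = 6  # Remember, Understand, Apply, Analyze, Evaluate, Create


class RecurrentHead(nn.Module):
    """Reads the token vectors in order with a GRU or an LSTM, then scores the six levels."""

    def __init__(self, cell: str, hidden: int = 768, units: int = 128):
        super().__init__()
        rnn_cls = {"gru": nn.GRU, "lstm": nn.LSTM}[cell]
        self.rnn = rnn_cls(hidden, units, batch_first=True)
        self.out = nn.Linear(units, NUM_LEVELS)

    def forward(self, states: torch.Tensor) -> torch.Tensor:
        seq_out, _ = self.rnn(states)  # (batch, seq_len, units); works for both GRU and LSTM
        pooled = seq_out.mean(dim=1)  # average over tokens (padding is not masked here)
        return self.out(pooled)  # (batch, NUM_LEVELS) logits


class ConvHead(nn.Module):
    """Slides filters over short windows of tokens to pick up key local phrases."""

    def __init__(self, hidden: int = 768, filters: int = 128, kernel: int = 3):
        super().__init__()
        self.conv = nn.Conv1d(hidden, filters, kernel_size=kernel, padding=kernel // 2)
        self.out = nn.Linear(filters, NUM_LEVELS)

    def forward(self, states: torch.Tensor) -> torch.Tensor:
        x = torch.relu(self.conv(states.transpose(1, 2)))  # (batch, filters, seq_len)
        pooled = x.max(dim=2).values  # strongest match of each filter anywhere in the question
        return self.out(pooled)  # (batch, NUM_LEVELS) logits
```

In a full pipeline, `states` would be the encoder's per‑token output, for example the `last_hidden_state` field returned by Hugging Face's `RobertaModel` (768 dimensions per token for `roberta-base`). Keeping each head as its own model is what makes it possible to combine their separate guesses afterwards.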

Blending Different AI Viewpoints

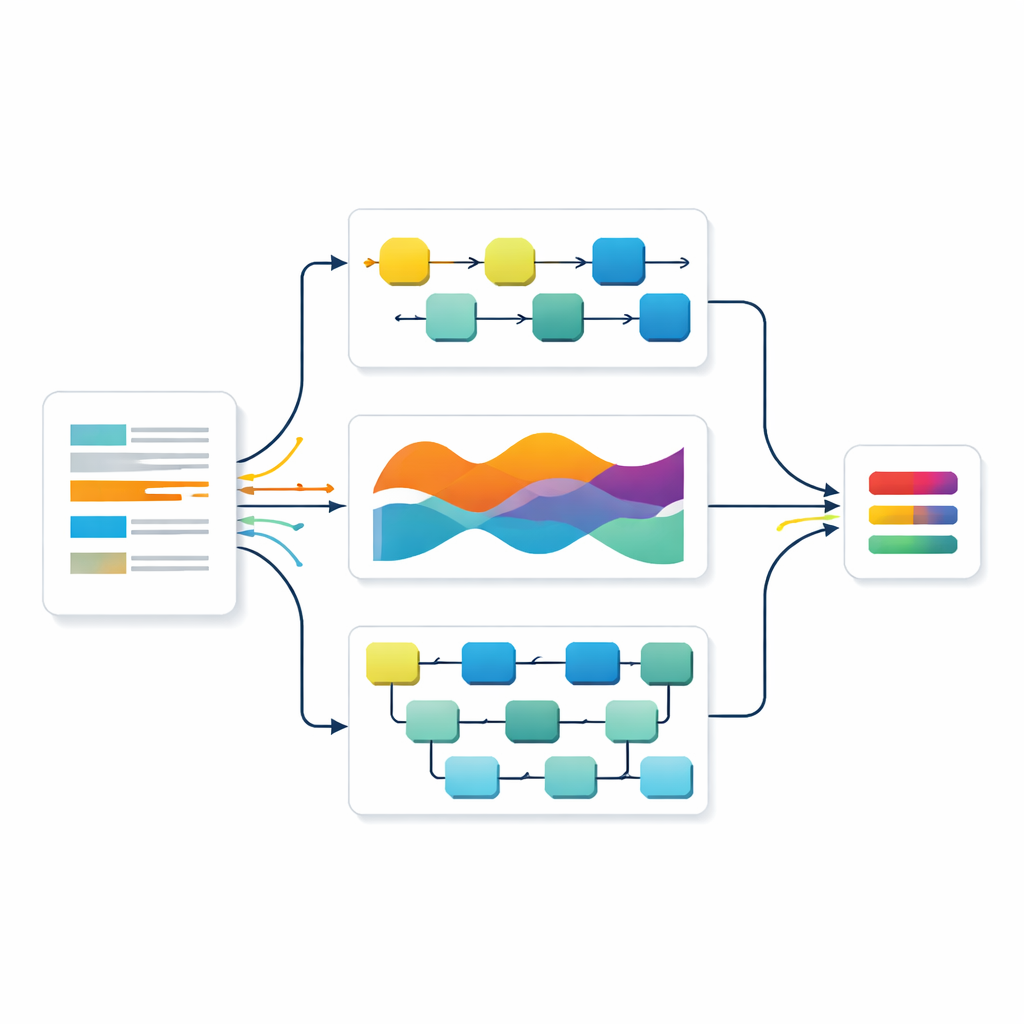

Rather than trusting a single network, the researchers combined all three using a strategy borrowed from voting systems. Each model produces its own guess about a question’s level, along with a measure of confidence. These confidence scores are then averaged, but not equally: models that prove more accurate on a separate validation set are given more weight. This “weighted ensemble” approach lets the strengths of one model offset the weaknesses of another. The team also prepared their data carefully, expanding a public dataset of exam questions with checked paraphrases so the system could learn from more examples while keeping label noise low.
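The weighting step can be illustrated with a short "soft voting" function. It is a minimal sketch that assumes each model outputs a probability for each of the six levels and that each model's weight is its validation accuracy divided by the sum of all the accuracies. The summary says only that more accurate models get more weight, so this normalisation and the function name `weighted_soft_vote` are assumptions.

```python
from typing import Sequence

LEVELS = ["Remember", "Understand", "Apply", "Analyze", "Evaluate", "Create"]


def weighted_soft_vote(
    probs: Sequence[Sequence[float]], val_acc: Sequence[float]
) -> tuple[str, list[float]]:
    """Average per-model class probabilities, weighting each model by validation accuracy.

    Hypothetical helper: the exact weighting used in the paper is not stated.
    """
    if len(probs) != len(val_acc) or not probs:
        raise ValueError("need one validation score per model")
    total = sum(val_acc)
    if total <= 0:
        raise ValueError("validation scores must sum to a positive number")
    weights = [a / total for a in val_acc]  # weights now sum to 1
    n_classes = len(probs[0])
    combined = [sum(w * p[c] for w, p in zip(weights, probs)) for c in range(n_classes)]
    best = max(range(n_classes), key=combined.__getitem__)  # index of the highest score
    return LEVELS[best], combined


# Two hypothetical models disagree; the one with higher validation accuracy tips the vote.
label, scores = weighted_soft_vote(
    [[0.0, 0.7, 0.3, 0.0, 0.0, 0.0],   # model A leans "Understand"
     [0.0, 0.3, 0.7, 0.0, 0.0, 0.0]],  # model B leans "Apply"
    val_acc=[0.9, 0.6],
)
print(label)  # → Understand
```

Here model A receives weight 0.6 and model B 0.4, so "Understand" scores 0.54 against 0.46 for "Apply". Averaging probabilities rather than counting hard votes lets a confident model outweigh a hesitant one.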

How Well Does It Work?

On a held‑out test set that the models never saw during training, each of the three individual networks classified questions with over 90 percent accuracy, already surpassing many earlier approaches in the research literature. The combined ensemble did even better, correctly labeling about 92 percent of questions while keeping performance balanced across all six thinking levels, including the more advanced ones. A statistical test indicated that this improvement over the best single model was unlikely to be due to chance. Error analysis showed that the ensemble reduced confusion between neighboring levels of thinking, the same pairs that humans often find hardest to tell apart.
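The summary does not say which significance test was used. McNemar's test is one common choice when two classifiers are scored on the same test questions, because it looks only at the questions where exactly one of them is right. Below is a sketch of its exact (binomial) form, plus a hypothetical helper that measures what share of errors land on a neighboring level, assuming levels are encoded as integers from 0 (Remember) to 5 (Create).

```python
from math import comb
from typing import Sequence


def mcnemar_exact(
    y_true: Sequence[int], pred_a: Sequence[int], pred_b: Sequence[int]
) -> tuple[int, int, float]:
    """Exact two-sided McNemar test for two classifiers evaluated on the same items."""
    if not (len(y_true) == len(pred_a) == len(pred_b)):
        raise ValueError("all sequences must have the same length")
    only_a = sum(1 for t, a, b in zip(y_true, pred_a, pred_b) if a == t and b != t)
    only_b = sum(1 for t, a, b in zip(y_true, pred_a, pred_b) if a != t and b == t)
    n = only_a + only_b  # discordant questions; items both got right or wrong are ignored
    if n == 0:
        return only_a, only_b, 1.0
    k = min(only_a, only_b)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n  # P(X <= k), X ~ Binomial(n, 0.5)
    return only_a, only_b, min(1.0, 2 * tail)


def adjacent_error_share(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of misclassified questions whose predicted level is one step away."""
    errors = [(t, p) for t, p in zip(y_true, y_pred) if t != p]
    if not errors:
        return 0.0
    return sum(1 for t, p in errors if abs(t - p) == 1) / len(errors)
```

A small p‑value from `mcnemar_exact` means one model wins the disagreements far more often than a coin flip would predict. A falling `adjacent_error_share` alone does not show improvement, so it is best read alongside the total number of errors.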

What This Means for Teachers and Students

By automatically sorting exam questions into levels of thinking, this system could help teachers quickly check whether their tests truly measure a range of skills, from basic recall to creative problem‑solving. It can flag gaps (for example, an exam with too many easy questions and too few that call for deeper reasoning) and help schools design more consistent assessments over time. The tool does not replace professional judgment, but it offers a fast, evidence‑based starting point that can reduce workload and individual bias. Looking ahead, the authors plan to integrate such systems into online learning platforms and extend them to new kinds of skills that will matter in an era where students increasingly work alongside AI.
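Once every question has a predicted level, flagging gaps is simple bookkeeping. The sketch below is hypothetical: the function name, the 60 percent limit, and the grouping of Remember, Understand and Apply as "lower‑order" levels (a common convention in education, not a rule from the paper) are all illustrative assumptions.

```python
from collections import Counter

LEVELS = ("Remember", "Understand", "Apply", "Analyze", "Evaluate", "Create")
LOWER_ORDER = LEVELS[:3]  # conventionally grouped as lower-order thinking skills


def coverage_report(predicted: list[str], max_lower_share: float = 0.6) -> list[str]:
    """Return warnings about how an exam's questions spread across Bloom's levels.

    `max_lower_share` is a hypothetical threshold chosen for illustration.
    """
    if not predicted:
        return ["exam has no questions"]
    counts = Counter(predicted)
    unknown = set(counts) - set(LEVELS)
    if unknown:
        raise ValueError(f"unknown levels: {sorted(unknown)}")
    # Levels the exam never touches.
    warnings = [f"no questions at level {lvl}" for lvl in LEVELS if counts[lvl] == 0]
    # Too much weight on the easiest levels.
    share = sum(counts[lvl] for lvl in LOWER_ORDER) / len(predicted)
    if share > max_lower_share:
        warnings.append(f"{share:.0%} of questions are lower-order (limit {max_lower_share:.0%})")
    return warnings


exam = ["Remember"] * 6 + ["Understand"] * 2 + ["Apply", "Analyze"]
for warning in coverage_report(exam):
    print(warning)
```

For this ten‑question example the report lists the missing Evaluate and Create levels and notes that 90% of questions sit at the lower‑order levels, the kind of imbalance a teacher might then correct by hand.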

Citation: Hamid, M., Malik, S., Saleem, M. et al. Enhancing educational assessment through automated question classification using a RoBERTa-based ensemble model. Sci Rep 16, 13754 (2026). https://doi.org/10.1038/s41598-026-45486-1

Keywords: educational assessment, Bloom’s Taxonomy, automated question classification, deep learning in education, language models