Clear Sky Science

Adaptive lightweight mask R-CNN model for underwater debris instance segmentation and classification towards sustainable marine waste management

Why cleaning up underwater trash matters

Far below the waves, plastic bottles, bags, fishing nets and other debris are piling up on the seafloor and drifting through coastal waters. This hidden garbage harms sea life and makes it harder to monitor the health of the oceans. Divers, underwater robots and remote cameras can help, but they first need to be able to see and recognize trash clearly in murky, tinted water. This study presents a new computer vision system that lets underwater robots spot, outline and classify debris in real time, even when visibility is poor, offering a powerful tool for future marine cleanup efforts.

Problems seeing clearly under the sea

Underwater scenes are far harder to interpret than pictures taken in air. As light travels through water, red is absorbed first, followed by orange and yellow, leaving images dominated by blue-green hues. Suspended particles scatter light, lowering contrast and adding a hazy veil. For a robot or camera trying to find a pale plastic bag against a bright, shifting seabed, this is a serious challenge. Traditional fixes such as simple contrast adjustments help a little, but they often fail when depth, turbidity or lighting changes. Many existing deep-learning detectors either struggle to outline debris accurately or are too computationally heavy to run on the small onboard computers typically carried by autonomous underwater vehicles.
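The color loss described above is commonly approximated with the Beer-Lambert law: the fraction of light left after a path of length d is exp(-βd), where the attenuation coefficient β differs by color. A minimal Python sketch, assuming illustrative coefficients for clear ocean water rather than values from the study:

```python
import math

# Illustrative attenuation coefficients (per meter) for clear ocean water.
# ASSUMPTION: values chosen only to show the usual ordering (red lost
# fastest, blue slowest); they are not taken from the study.
ATTENUATION_PER_M = {"red": 0.45, "green": 0.07, "blue": 0.03}


def transmitted_fraction(channel: str, distance_m: float) -> float:
    """Beer-Lambert law: fraction of a channel's light left after distance_m."""
    return math.exp(-ATTENUATION_PER_M[channel] * distance_m)


def distance_to_fraction(channel: str, fraction: float) -> float:
    """Path length at which only `fraction` of the channel's light remains."""
    return -math.log(fraction) / ATTENUATION_PER_M[channel]


if __name__ == "__main__":
    for ch in ATTENUATION_PER_M:
        d = distance_to_fraction(ch, 0.1)
        print(f"{ch}: 90% of light lost after about {d:.1f} m")
```

With these assumed coefficients, red falls to 10% of its original strength after roughly 5 m of water, while blue lasts roughly 77 m, which is why distant objects take on a blue-green cast.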

A faster, lighter way to spot underwater trash

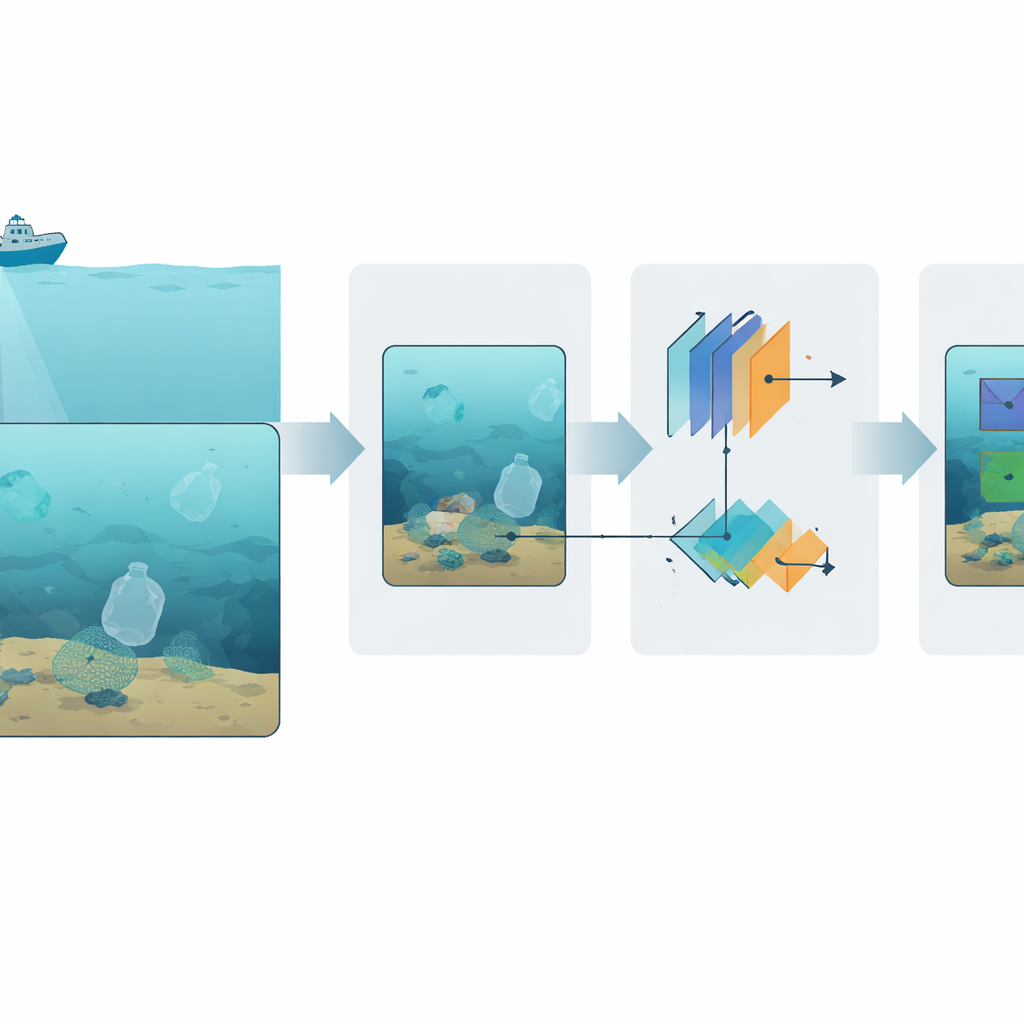

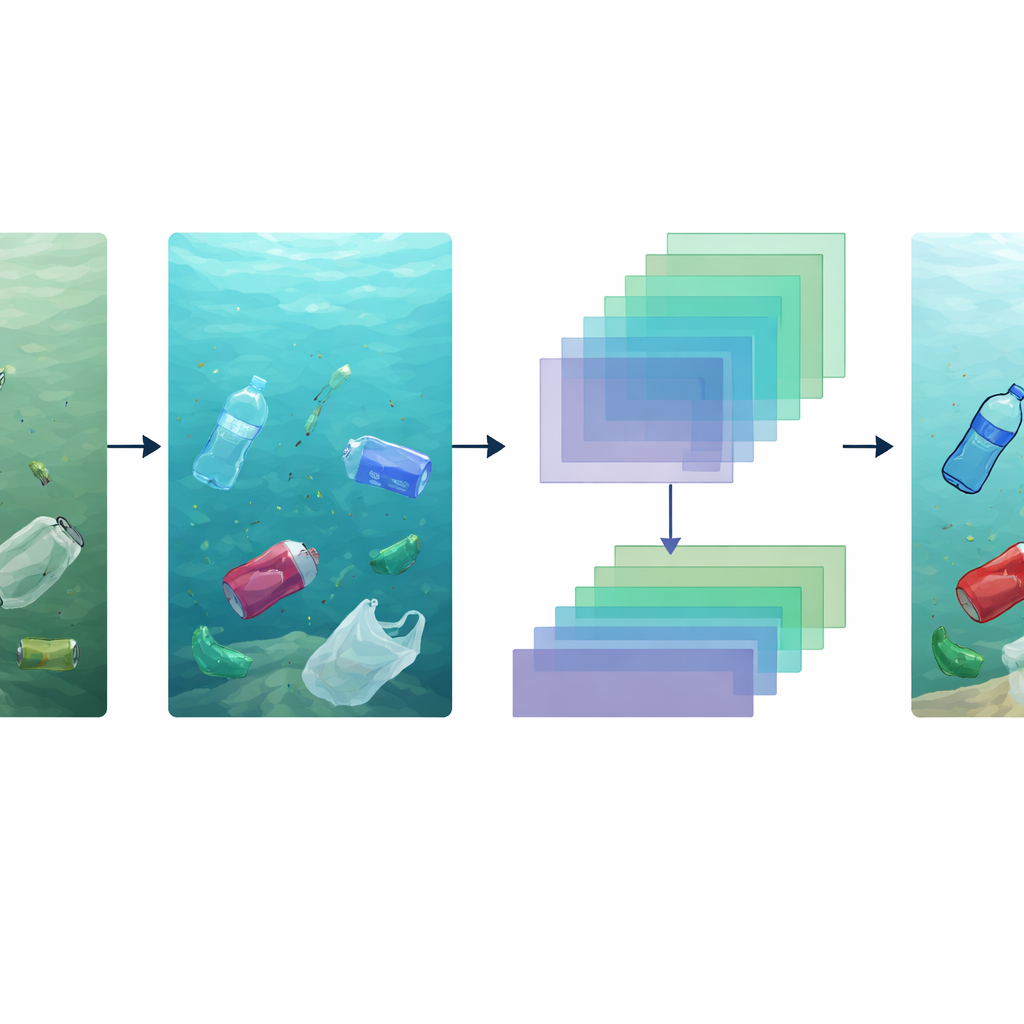

The authors propose an “adaptive lightweight Mask R-CNN” system tailored for this harsh environment. At a high level, the system follows a simple chain: it first cleans up the raw underwater image, then extracts key visual patterns, then proposes likely debris regions and finally draws precise silhouettes around each piece of trash while assigning it to a category such as bottle, bag or net. To keep the system fast enough for real-time use, it relies on MobileNetV3—a compact yet capable neural network—as its main feature extractor. This backbone is paired with an upgraded region proposal module that is tuned to find small, irregular and partially hidden debris, a common situation on cluttered seafloors.

Making murky images look more natural

A central ingredient is a dedicated enhancement module that operates before detection. This module is trained to reverse the physical effects of water on light, guided by a model of how different colors fade with depth. A convolutional neural network estimates how far light has traveled through the water and how strongly each color channel has been absorbed, then rebuilds a clearer, more natural-looking version of the scene. Additional steps adjust brightness, stretch contrast and gently reduce noise, yielding images with sharper edges and more realistic colors. These corrections make it much easier for later stages of the system to pick out bottles, bags and other items against busy, low-contrast backgrounds.
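Physics-guided enhancement of this kind is commonly based on a simplified underwater image formation model: each observed channel I is the true scene color J dimmed by a transmission t = exp(-βd), plus scattered background light B weighted by (1 - t). In the study a CNN estimates the distance and absorption; the Python sketch below instead takes t and B as given (hypothetical values), inverts the model for a single channel, and then applies a min-max contrast stretch. The function names, the clamp threshold `t_min` and all numbers are illustrative assumptions, not the authors' implementation.

```python
import math
from typing import List

Channel = List[float]  # one color channel, intensities in [0, 1]


def transmission(depth_m: List[float], beta: float) -> Channel:
    """t(x) = exp(-beta * d(x)): share of scene light that reaches the camera."""
    return [math.exp(-beta * d) for d in depth_m]


def degrade(clean: Channel, t: Channel, backlight: float) -> Channel:
    """Forward model: I = J * t + B * (1 - t), with B the water's background light."""
    return [j * ti + backlight * (1.0 - ti) for j, ti in zip(clean, t)]


def restore(observed: Channel, t: Channel, backlight: float,
            t_min: float = 0.1) -> Channel:
    """Inverse model: J = (I - B * (1 - t)) / t.

    t is clamped to t_min so that pixels seen through very thick water do not
    amplify noise without bound; for those pixels the result is approximate.
    """
    restored = []
    for i, ti in zip(observed, t):
        ti = max(ti, t_min)
        j = (i - backlight * (1.0 - ti)) / ti
        restored.append(min(max(j, 0.0), 1.0))  # keep a valid intensity
    return restored


def stretch_contrast(channel: Channel) -> Channel:
    """Min-max stretch so the channel spans the full [0, 1] range."""
    lo, hi = min(channel), max(channel)
    if hi - lo < 1e-9:  # flat channel: nothing to stretch
        return list(channel)
    return [(v - lo) / (hi - lo) for v in channel]


# Usage with made-up numbers for the red channel of four pixels.
depth = [1.0, 2.0, 3.0, 4.0]             # hypothetical per-pixel distances (m)
red_true = [0.8, 0.6, 0.4, 0.2]
t_red = transmission(depth, beta=0.45)   # assumed red attenuation coefficient
red_seen = degrade(red_true, t_red, backlight=0.1)
red_fixed = stretch_contrast(restore(red_seen, t_red, backlight=0.1))
```

The degraded channel has a visibly narrower range than the true one; inverting the model restores the original values, and the stretch then spreads them over the full intensity range.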

How the system learns to outline debris

Once the image is enhanced, MobileNetV3 converts it into stacks of feature maps that summarize shapes, textures and color patterns at multiple scales. An improved proposal module fuses information from several of these scales so it can handle both large nets and tiny bits of plastic. Instead of relying on hand-picked anchor boxes (the reference box sizes and shapes from which candidate regions are proposed), it learns anchor shapes from the training data and emphasizes those that best match the scene, cutting down on false alarms. For each promising region, a final branch refines the boundaries using a precise sampling method that preserves fine detail along edges. The result is a mask that traces each object's outline rather than just a rough bounding box, which is crucial when estimating how much trash is present or planning robotic grasping and collection.
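The summary does not say how the anchor shapes are learned. One widely used way, popularized by YOLOv2, is to run k-means clustering on the widths and heights of the training boxes, using 1 - IoU rather than Euclidean distance so that small and large boxes are treated fairly. The Python sketch below assumes that approach; it is a stand-in technique, not necessarily the authors' method, and the box sizes in the usage lines are invented.

```python
from typing import List, Tuple

Box = Tuple[float, float]  # (width, height) of a ground-truth box, in pixels


def shape_iou(a: Box, b: Box) -> float:
    """IoU of two boxes compared by shape only (centers aligned)."""
    inter = min(a[0], b[0]) * min(a[1], b[1])
    union = a[0] * a[1] + b[0] * b[1] - inter
    return inter / union


def kmeans_anchors(boxes: List[Box], k: int, iters: int = 50) -> List[Box]:
    """Cluster box shapes with distance 1 - IoU; cluster centers become anchors."""
    # Deterministic init: pick k boxes spread evenly through the area ordering.
    ordered = sorted(boxes, key=lambda b: b[0] * b[1])
    centers = [ordered[i * (len(ordered) - 1) // max(k - 1, 1)] for i in range(k)]
    for _ in range(iters):
        groups: List[List[Box]] = [[] for _ in range(k)]
        for b in boxes:
            # Highest IoU is the same as smallest 1 - IoU distance.
            best = max(range(k), key=lambda c: shape_iou(b, centers[c]))
            groups[best].append(b)
        new_centers = []
        for c, g in enumerate(groups):
            if not g:  # keep an empty cluster's center unchanged
                new_centers.append(centers[c])
                continue
            new_centers.append((sum(w for w, _ in g) / len(g),
                                sum(h for _, h in g) / len(g)))
        if new_centers == centers:  # assignments stable: converged
            break
        centers = new_centers
    return sorted(centers, key=lambda b: b[0] * b[1])


def mean_best_iou(boxes: List[Box], anchors: List[Box]) -> float:
    """Average IoU between each box and its best-matching anchor."""
    return sum(max(shape_iou(b, a) for a in anchors) for b in boxes) / len(boxes)


# Invented (width, height) boxes: small fragments, upright bottles, large nets.
boxes = [(18, 20), (22, 19), (20, 24), (30, 90), (28, 85), (32, 95),
         (200, 120), (210, 130), (190, 115)]
anchors = kmeans_anchors(boxes, k=3)
print(anchors, round(mean_best_iou(boxes, anchors), 3))
```

On this toy data the clusters settle on one small square anchor, one tall narrow anchor and one large wide anchor, the kind of data-driven anchor set the paragraph describes.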

Proving performance in realistic conditions

The team trained and tested the system on several underwater debris image sets, including challenging scenes with strong haze, low light and color distortion. To mimic real dives, they also used data augmentation such as contrast changes, flips and blurs. The enhanced model achieved a mean average precision of about 88% and an overlap score (a measure of how closely predicted outlines match the true ones) above 83%, outperforming both a standard Mask R-CNN and faster single-stage detectors such as YOLO variants, while still running at around 30 frames per second. It performed well across different types of plastics, from bottles and bags to microplastics, and maintained good accuracy on previously unseen datasets and in highly turbid water, suggesting that it can adapt to varied field conditions.
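Both headline numbers rest on standard overlap and ranking calculations. A minimal Python sketch, assuming detections have already been matched to ground-truth objects at some IoU threshold (the summary does not give the exact evaluation protocol, for example whether COCO-style averaging over several thresholds was used): `mask_iou` measures how well a predicted outline overlaps the true one, and `average_precision` computes one class's AP with all-point interpolation. Mean average precision is then the mean of AP over debris classes. Function names and the example data are illustrative.

```python
from typing import List, Sequence

Mask = List[List[int]]  # binary mask: 1 = object pixel, 0 = background


def mask_iou(pred: Mask, truth: Mask) -> float:
    """Intersection over union of two same-sized binary masks."""
    inter = union = 0
    for prow, trow in zip(pred, truth):
        for p, t in zip(prow, trow):
            inter += p & t
            union += p | t
    return inter / union if union else 1.0  # two empty masks agree perfectly


def average_precision(scores: Sequence[float], is_match: Sequence[bool],
                      num_truth: int) -> float:
    """AP for one class: area under the precision-recall curve.

    Detections are ranked by confidence; precision is made non-increasing
    (all-point interpolation) before summing precision * recall step.
    """
    if num_truth <= 0:
        raise ValueError("need at least one ground-truth object")
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    tp = fp = 0
    recalls, precisions = [], []
    for i in order:
        if is_match[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_truth)
        precisions.append(tp / (tp + fp))
    # Sweep right to left so each precision is the best achievable at >= recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


# Toy example: two 3x3 masks overlapping on two pixels out of six.
pred = [[1, 1, 0], [1, 1, 0], [0, 0, 0]]
truth = [[0, 1, 1], [0, 1, 1], [0, 0, 0]]
iou = mask_iou(pred, truth)

# Four ranked detections for one class, four true objects in total.
ap = average_precision([0.9, 0.8, 0.7, 0.6], [True, False, True, True], 4)
```

Here the masks give an IoU of 1/3 and the ranked detections an AP of 0.625; a reported mAP of about 88% means the model's ranked detections stay both precise and complete across classes.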

What this means for healthier oceans

In simple terms, the study shows that it is now possible to build compact vision systems that not only find underwater trash but also outline and classify it accurately in real time on board small underwater robots. By combining physics-aware image cleanup with an efficient detection and segmentation pipeline, the proposed approach strikes a practical balance between speed and precision. Such systems could power future fleets of autonomous vehicles that map debris hot spots, track how waste moves with currents and assist in targeted cleanup operations. While further work is needed to cope with rare debris types and a wider range of water conditions, this research marks a concrete step toward smarter, more sustainable marine waste management.

Citation: Deluxni, N., Sudhakaran, P., Alroobaea, R. et al. Adaptive lightweight mask R-CNN model for underwater debris instance segmentation and classification towards sustainable marine waste management. Sci Rep 16, 14057 (2026). https://doi.org/10.1038/s41598-026-44542-0

Keywords: underwater debris detection, marine pollution, computer vision, autonomous underwater vehicles, plastic waste