Clear Sky Science

Harnessing multi-modal deep learning for multi-drone navigation-based trajectory prediction system

Smarter Skies for Helpful Drones

From search-and-rescue missions to crop monitoring and package delivery, teams of drones are increasingly being asked to fly together through crowded, unpredictable airspace. Keeping the drones in a fleet from colliding while still getting them to their goals quickly is a complex juggling act. This paper presents a new artificial-intelligence system that learns to predict how multiple drones will move, so their paths can be coordinated in real time with high accuracy and low computing cost.

Why Guiding Many Drones Is So Hard

Planning a safe path for a single drone is already challenging: it must weave around buildings, trees, and bad weather while still reaching its target efficiently. When several drones share the same airspace, the problem becomes much tougher. Each machine’s decisions affect the others, and all must avoid both fixed obstacles and one another. Traditional planning methods often handle one goal at a time, such as covering an area or avoiding collisions, but struggle to balance safety, efficiency, and smooth motion together, especially when onboard hardware and computing power are limited.

A New Brain for Coordinated Drone Flight

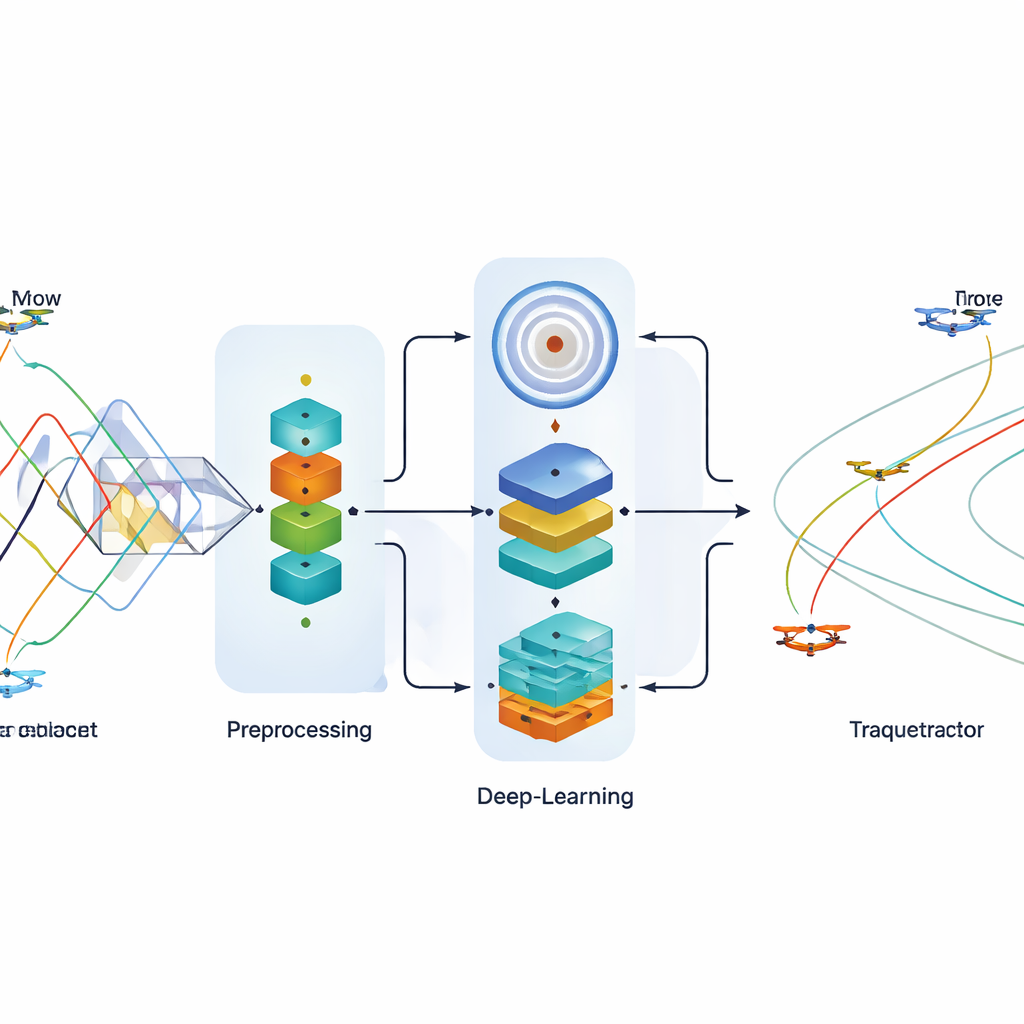

To tackle this, the author introduces a navigation-based Trajectory Prediction System using a Multi-Modal Deep Architecture, shortened to NTP-MMDA. In simple terms, the system acts as a shared “brain” that learns from past flights how drones tend to move through space and time. It receives streams of position and sensor data from several drones and predicts where each one is likely to fly next, seconds into the future. These predictions help a higher-level planner choose paths that are safe and well coordinated. Before learning begins, the system carefully cleans and standardizes the incoming data using a technique called quantile normalization, which makes different sensor readings comparable and reduces noise.
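
As a rough illustration of the cleaning step, the sketch below shows one common form of quantile normalization, in which every sensor channel is mapped onto a shared reference distribution built from the rank-wise mean of all channels. The summary does not say which variant the paper uses, so the function name `quantile_normalize`, the tie handling, and the sample readings are all assumptions made for this example.

```python
from typing import List


def quantile_normalize(channels: List[List[float]]) -> List[List[float]]:
    """Map every channel onto one shared reference distribution.

    `channels` holds one equal-length list of readings per sensor channel.
    Ties are broken by original order, a simplification for this sketch.
    """
    if not channels:
        return []
    n = len(channels[0])
    if any(len(ch) != n for ch in channels):
        raise ValueError("all channels must have the same length")

    # Reference value at each rank: mean of the sorted channels at that rank.
    sorted_channels = [sorted(ch) for ch in channels]
    reference = [
        sum(sc[rank] for sc in sorted_channels) / len(channels)
        for rank in range(n)
    ]

    normalized = []
    for ch in channels:
        # Indices of this channel's readings, from smallest to largest.
        order = sorted(range(n), key=lambda i: ch[i])
        out = [0.0] * n
        for rank, idx in enumerate(order):
            # The reading with this rank receives the reference value.
            out[idx] = reference[rank]
        normalized.append(out)
    return normalized


# Hypothetical readings on different scales (not taken from the paper).
altitude_m = [5.0, 2.0, 3.0]
speed_mps = [4.0, 1.0, 6.0]
print(quantile_normalize([altitude_m, speed_mps]))
# prints [[5.5, 1.5, 3.5], [3.5, 1.5, 5.5]]
```

After normalization both channels contain the same set of values (1.5, 3.5, 5.5), arranged according to each channel's original ordering, which is what makes readings from different sensors directly comparable.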

Three Learning Engines Working Together

The heart of NTP-MMDA is an ensemble of three deep-learning components that each “see” the problem from a different angle. The first, a bidirectional gated recurrent unit (BiGRU), is designed to capture how drone positions evolve over time, looking both backward and forward along a flight sequence to understand motion patterns. The second, a variational autoencoder (VAE), learns a compressed internal picture of typical trajectories and can represent uncertainty, which is useful when conditions are changing or data are incomplete. The third, an adaptive deep belief network (ADBN), is built to extract layered patterns and nonlinear relationships in the data, and in this work is adapted to output continuous coordinates rather than simple class labels. The outputs of the three components are fused into a single prediction of each drone’s future path.
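
To make the fusion idea concrete, here is a minimal sketch that combines three per-model position estimates into one by weighted averaging. The summary does not describe how NTP-MMDA actually fuses its components, so the averaging rule, the function name `fuse_predictions`, the weights, and the sample coordinates are all assumptions chosen for illustration.

```python
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) position of one drone


def fuse_predictions(
    predictions: Sequence[Point],
    weights: Optional[Sequence[float]] = None,
) -> Point:
    """Combine per-model position estimates by weighted averaging.

    This is an assumed fusion rule, not the one used in the paper.
    """
    if not predictions:
        raise ValueError("need at least one prediction")
    if weights is None:
        weights = [1.0] * len(predictions)  # equal trust in every model
    if len(weights) != len(predictions):
        raise ValueError("one weight per prediction is required")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")

    # Average each coordinate axis separately.
    x, y, z = (
        sum(w * p[axis] for w, p in zip(weights, predictions)) / total
        for axis in range(3)
    )
    return (x, y, z)


# Hypothetical next-position estimates from the three components.
bigru_estimate = (10.0, 4.0, 30.0)
vae_estimate = (11.0, 5.0, 31.0)
adbn_estimate = (12.0, 6.0, 32.0)
print(fuse_predictions([bigru_estimate, vae_estimate, adbn_estimate]))
# prints (11.0, 5.0, 31.0)
```

Passing unequal `weights` would let a planner lean more heavily on whichever component has been most reliable; a learned fusion layer is another plausible design, but nothing in the summary confirms either choice.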

Proving the System on Real Flight Data

The NTP-MMDA model was tested on an openly available drone trajectory dataset containing real flight paths from multiple drones. The study compared the new system against several established methods, including random forests, classic regression, standalone recurrent networks, and feature-matching techniques. On key measures of prediction error, such as mean squared error and mean absolute error, the new model consistently produced lower errors for three different drones, meaning its predicted positions were closer to the actual flight paths. It not only improved accuracy but also ran faster, with lower computing demands measured in operations, memory use, and inference time. Careful “ablation” tests, in which each of the three learning components was removed in turn, showed that all three contribute and that the full combination works best.
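
For readers unfamiliar with the two error measures named above, the sketch below computes mean squared error (MSE) and mean absolute error (MAE) over a short trajectory. The definitions are standard; the helper names and the sample coordinates are hypothetical, and averaging over every individual coordinate is one reasonable convention that the paper may or may not share.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) position at one time step


def _coordinate_errors(actual: Sequence[Point],
                       predicted: Sequence[Point]) -> List[float]:
    """Return predicted minus actual for every coordinate of every point."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("trajectories must be non-empty and equal in length")
    return [p - a
            for a_pt, p_pt in zip(actual, predicted)
            for a, p in zip(a_pt, p_pt)]


def mean_squared_error(actual: Sequence[Point],
                       predicted: Sequence[Point]) -> float:
    """Average of squared errors; penalizes large misses more heavily."""
    errors = _coordinate_errors(actual, predicted)
    return sum(e * e for e in errors) / len(errors)


def mean_absolute_error(actual: Sequence[Point],
                        predicted: Sequence[Point]) -> float:
    """Average of absolute errors, in the same units as the coordinates."""
    errors = _coordinate_errors(actual, predicted)
    return sum(abs(e) for e in errors) / len(errors)


# Hypothetical two-step trajectory (not data from the study).
actual_path = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
predicted_path = [(0.0, 0.0, 1.0), (1.0, 3.0, 1.0)]
print(mean_absolute_error(actual_path, predicted_path))  # prints 0.5
print(mean_squared_error(actual_path, predicted_path))
```

In this example the prediction misses by 1 unit on one coordinate and 2 units on another, so the MAE is 3/6 = 0.5 while the MSE is 5/6, showing how squaring gives the larger miss more influence.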

What This Means for Everyday Drone Use

For a layperson, the takeaway is that this research demonstrates a more reliable way to forecast where each drone in a flock will be, moments ahead. With better foresight, drones can automatically choose routes that are smooth, efficient, and free of collisions, even in busy or hazardous environments such as disaster zones or complex farmland. Because the system achieves high accuracy while using relatively little computing power, it is well suited to practical, real-time use on resource-limited platforms. As multi-drone operations become more common in emergency response, environmental monitoring, and commercial services, approaches like NTP-MMDA could help keep our skies safer and our autonomous helpers more capable.

Citation: Alzahrani, A. Harnessing multi-modal deep learning for multi-drone navigation-based trajectory prediction system. Sci Rep 16, 12670 (2026). https://doi.org/10.1038/s41598-026-42180-0

Keywords: multi-drone navigation, trajectory prediction, deep learning, collision avoidance, autonomous UAVs