Clear Sky Science

Sensory-motor control with large language models via iterative policy refinement

Teaching Machines to Move on Their Own

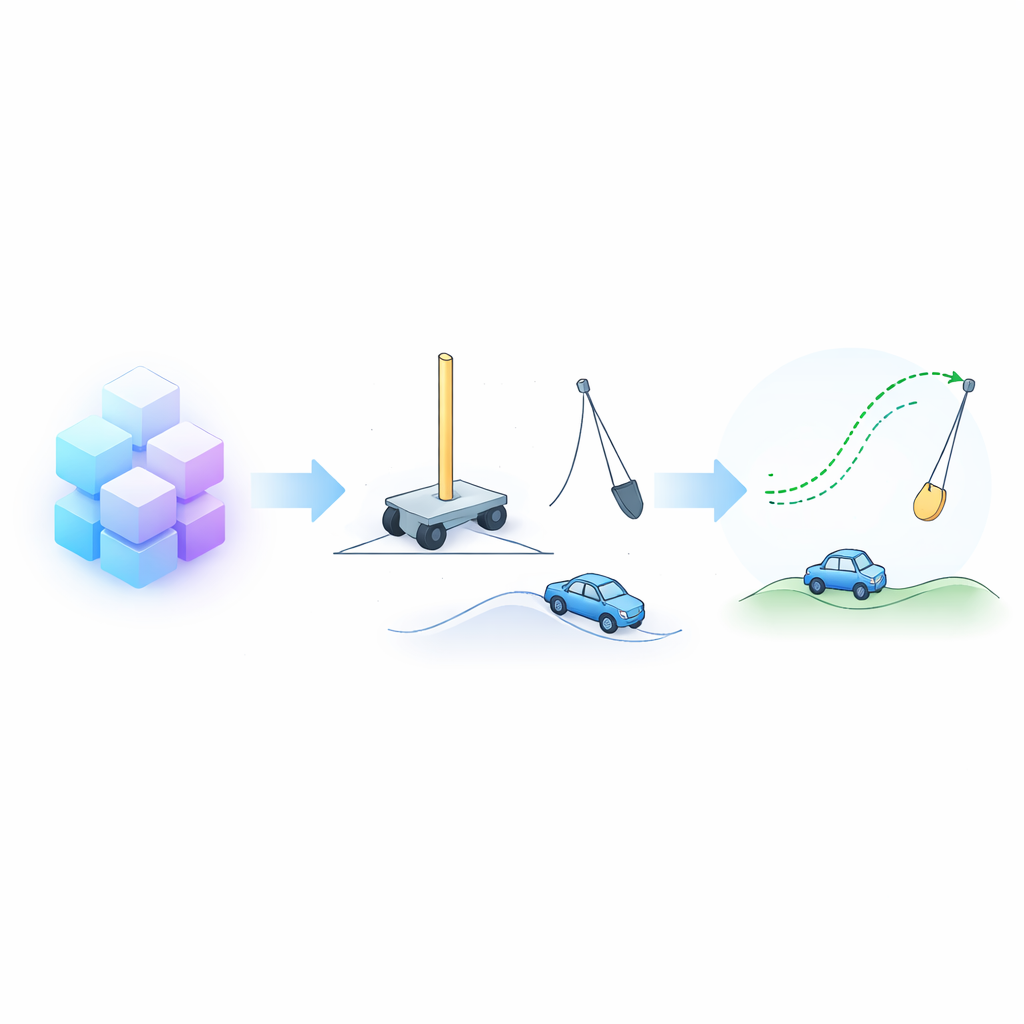

Imagine a robot that learns to balance a pole, swing a pendulum upright, or drive out of a valley—without human engineers painstakingly programming every little movement or collecting thousands of example demonstrations. This paper explores how large language models (LLMs)—the same kind of systems used for chatbots—can be turned into "brains" that design and improve control strategies for such moving machines, relying largely on text descriptions and a bit of trial and error.

From Words to Motions

Traditional robot control often breaks movement into fixed building blocks, such as predefined walking steps or reaching motions. A higher-level program then selects and sequences these pieces. While this works in simple settings, it struggles in more fluid situations where movements blend into one another and must be finely tuned moment by moment. The authors instead ask LLMs to create full control rules that directly map what the robot senses—its position, speed, angles, and so on—into continuous motor commands. The only starting information the model receives is a natural-language description of the robot’s body, its sensors and motors, the surrounding environment, and what it is supposed to achieve.
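To make the idea of a control rule concrete, here is a minimal sketch of a controller that maps sensor readings directly to a continuous motor command for a cart with a pole. It is an illustration only, not a controller from the paper: the function name `cartpole_policy`, the thresholds, the gains, and the sign convention (a positive angle means the pole leans right, a positive force pushes the cart right) are all assumptions.

```python
def cartpole_policy(cart_pos, cart_vel, pole_angle, pole_vel):
    """Map sensed state to a continuous force in [-1, 1].

    All thresholds and gains are illustrative assumptions,
    not values reported by the authors.
    """
    if abs(pole_angle) > 0.1:
        # Pole is leaning noticeably: push hard in the direction of the lean.
        force = 3.0 * pole_angle + 0.5 * pole_vel
    else:
        # Pole is nearly upright: apply a gentler correction.
        force = 1.0 * pole_angle + 0.3 * pole_vel
    # Clip to the range the (hypothetical) motor accepts.
    return max(-1.0, min(1.0, force))


# Example: a pole leaning right and falling further right saturates the motor.
command = cartpole_policy(0.0, 0.0, 0.5, 2.0)
```

The point of the sketch is the shape of the solution: one function, evaluated at every time step, that turns numbers from the sensors into a number for the motor, with no predefined movement building blocks in between.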

A Loop of Reflection and Refinement

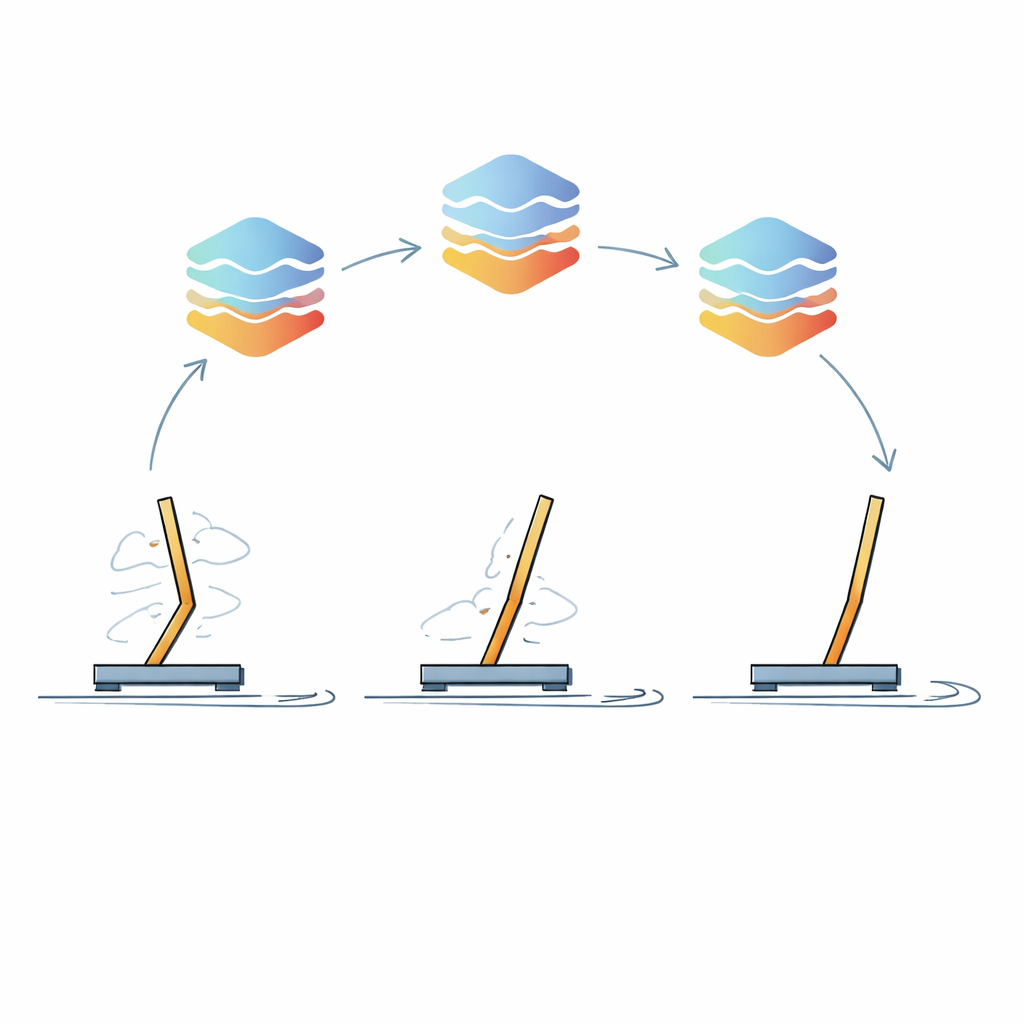

The heart of the approach is an iterative learning loop the authors call Iterative Policy Refinement. In the first step, the LLM is prompted to think through the problem in stages: it first outlines a high-level strategy in plain language, then turns that into clear IF–THEN–ELSE style rules, and finally converts those rules into executable code. That initial controller is run inside a simulated environment—such as a cart with a pole that must be kept upright—and the robot’s performance is measured. Crucially, short snippets of the robot’s sensory readings and corresponding actions are then fed back to the LLM, together with a summary of how well the strategy worked. The LLM is asked to analyze these traces, spot weaknesses, and generate an improved controller. This cycle repeats many times, gradually polishing the behavior.
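The loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not the authors' implementation: `simulate` is a simplified stand-in for a balancing task, `format_feedback` is a hypothetical way of turning a score and a short trace into text, and `propose_controller` replaces the LLM call with a fixed schedule of gains so that the example runs on its own. In a real setup, that function would send the feedback text to a language model and execute the code it returns.

```python
def simulate(controller, steps=200, dt=0.02):
    """Toy unstable balancing task (not one of the paper's benchmarks)."""
    angle, velocity = 0.05, 0.0
    trace, survived = [], 0
    for _ in range(steps):
        obs = (angle, velocity)
        action = max(-1.0, min(1.0, controller(obs)))
        trace.append((obs, action))
        # Gravity pushes the angle away from zero; the action can oppose it.
        velocity += (9.8 * angle + 20.0 * action) * dt
        angle += velocity * dt
        if abs(angle) > 0.5:  # the pole has fallen
            break
        survived += 1
    return survived, trace


def format_feedback(score, trace, n_steps=5):
    """Summarize performance plus a short snippet of readings and actions."""
    lines = [f"Steps survived: {score}"]
    for (angle, velocity), action in trace[-n_steps:]:
        lines.append(
            f"angle={angle:.3f} velocity={velocity:.3f} action={action:.3f}"
        )
    return "\n".join(lines)


# Hypothetical stand-in for the LLM: each call yields a better pair of gains.
GAIN_SCHEDULE = [(0.0, 0.0), (0.3, 0.0), (2.0, 0.5)]


def propose_controller(feedback, iteration):
    """Placeholder for 'ask the LLM for an improved controller'.

    The feedback text is ignored here; a real system would put it in the prompt.
    """
    k_angle, k_velocity = GAIN_SCHEDULE[min(iteration, len(GAIN_SCHEDULE) - 1)]

    def controller(obs):
        angle, velocity = obs
        return -(k_angle * angle + k_velocity * velocity)

    return controller


def refine(iterations=3):
    """Propose, test, summarize, repeat; keep track of the best score."""
    feedback = "No previous attempt."
    best_score, history = -1, []
    for i in range(iterations):
        controller = propose_controller(feedback, i)
        score, trace = simulate(controller)
        history.append(score)
        best_score = max(best_score, score)
        feedback = format_feedback(score, trace)
    return best_score, history


best, history = refine()
```

Here `history` records how long each successive controller kept the toy system balanced, and `feedback` is the text that would accompany the next request to the model. The design choice worth noticing is that the model never sees the simulator itself, only a score and a handful of recent readings and actions.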

Putting the Idea to the Test

To check whether this method really works, the researchers tried it on a set of classic benchmark tasks used in reinforcement learning: balancing a cart–pole system, swinging and stabilizing a pendulum, driving a car up a steep hill, and solving an acrobot task where a two-link system must be swung up to a target height. They also tackled an inverted pendulum task from a popular physics simulator. These tasks are simple enough to study in detail but still capture key challenges: the robot does not see everything at once, rewards come with delay, and the physics can be highly unstable. The team compared several modern open-source language models of roughly 70–120 billion parameters, varied how much randomness they allowed in the model’s outputs, and repeated each experiment multiple times to get reliable statistics.

How Well Do Language Models Control Machines?

The best-performing model, a 120-billion-parameter system called GPT-oss, consistently discovered high-quality control strategies across most tasks, often reaching optimal or near-optimal scores. Another model, Qwen2.5, performed particularly well on some problems, even outperforming GPT-oss on the inverted pendulum, though it struggled on others such as the standard pendulum task. Importantly, the first controllers the LLMs produced were often mediocre, which suggests that the models were not merely recalling canned solutions from training data. Performance improved markedly over iterations as the models used feedback to adjust which sensor signals mattered most and how they should influence actions. The authors also probed how many time steps of sensor data to include in each refinement prompt and which pieces of feedback were most critical, finding that an intermediate amount of data and rich information about previous strategies worked best.

Why This Matters for Future Robots

For a non-specialist, the main takeaway is that language models can do more than talk: they can help design the fine-grained motor rules that make machines move intelligently. Instead of starting from random behavior and requiring huge amounts of trial-and-error data, an LLM can propose a reasonable control plan from a verbal description, then steadily improve it by reading short records of what happened when the robot tried it. This blend of prior knowledge and experiential learning could reduce the cost and effort of building capable robots and other autonomous systems. While there are still hurdles—such as the heavy computation needed to run large models and the challenge of scaling to very complex, long-duration tasks—the study suggests a path toward robots whose low-level movements are shaped, at least in part, by systems originally trained simply to predict the next word in a sentence.

Citation: Carvalho, J.T., Nolfi, S. Sensory-motor control with large language models via iterative policy refinement. Sci Rep 16, 13575 (2026). https://doi.org/10.1038/s41598-026-42091-0

Keywords: large language models, robot control, reinforcement learning, embodied agents, iterative policy refinement