Clear Sky Science · en

Visualising backward information propagation in deep reinforcement learning from a variational data assimilation perspective

Why this matters beyond computer science

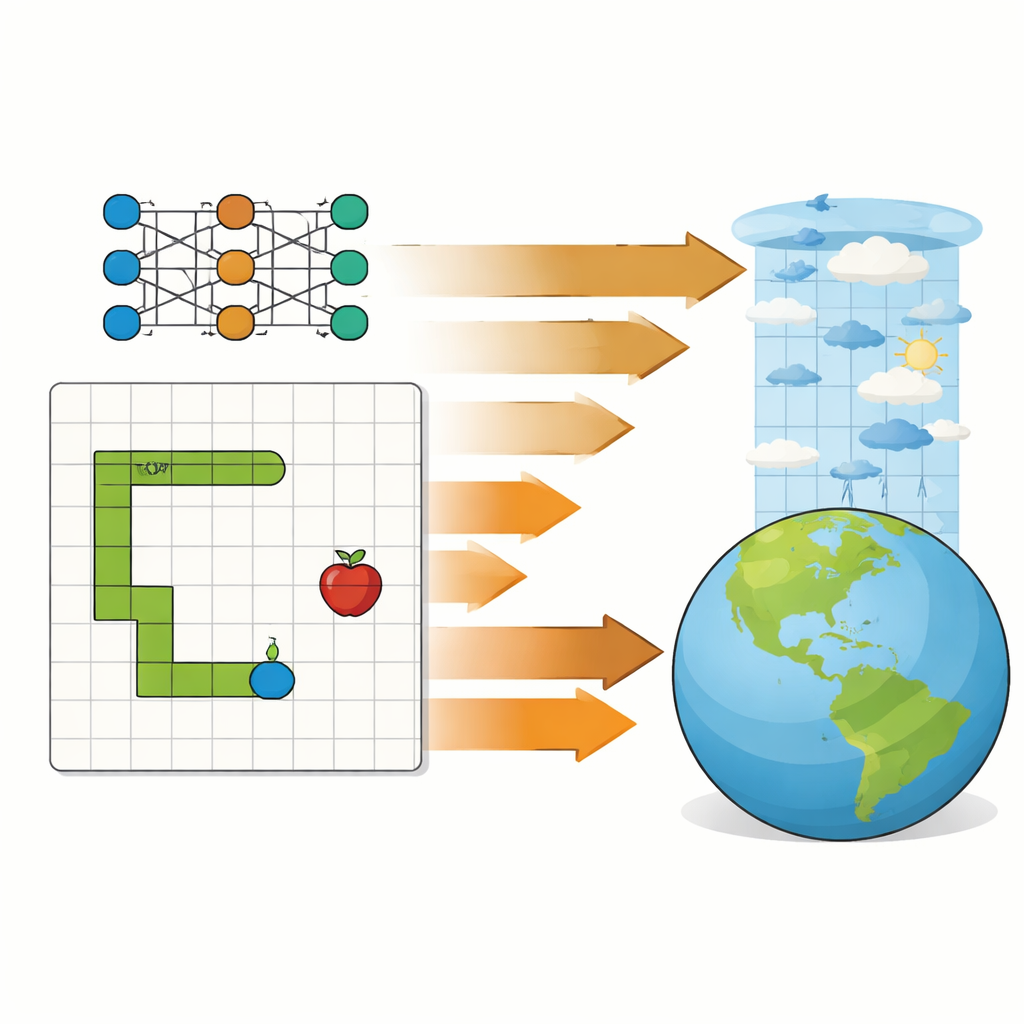

Weather forecasts, climate models, and game-playing artificial intelligence may seem worlds apart, yet they rely on the same hidden engine: repeatedly nudging a model so that it better matches what actually happens. This paper opens that black box. Using a simple Snake video game as a test bed, the author shows—visually and step by step—that the way a learning algorithm improves its play closely mirrors how meteorologists refine weather forecasts using observational data. The result is a clear, intuitive bridge between modern AI and longstanding methods in atmospheric science.

Two worlds with a shared hidden engine

In numerical weather prediction, variational data assimilation is used to combine a physical model of the atmosphere with real-world observations. Scientists run the forecast model forward in time, compare it with measurements, and then propagate the information from those mismatches backward to adjust the model’s starting conditions. Deep reinforcement learning, which powers systems that learn to play games or control robots, also runs a model forward: an agent takes actions, receives rewards or penalties, and then sends information backward through a neural network to tweak its internal parameters. The paper argues that, beneath the different jargon, both processes are doing the same kind of work—minimizing a single score that measures how well the system is doing over an entire sequence of events.

A simple game as a clean laboratory

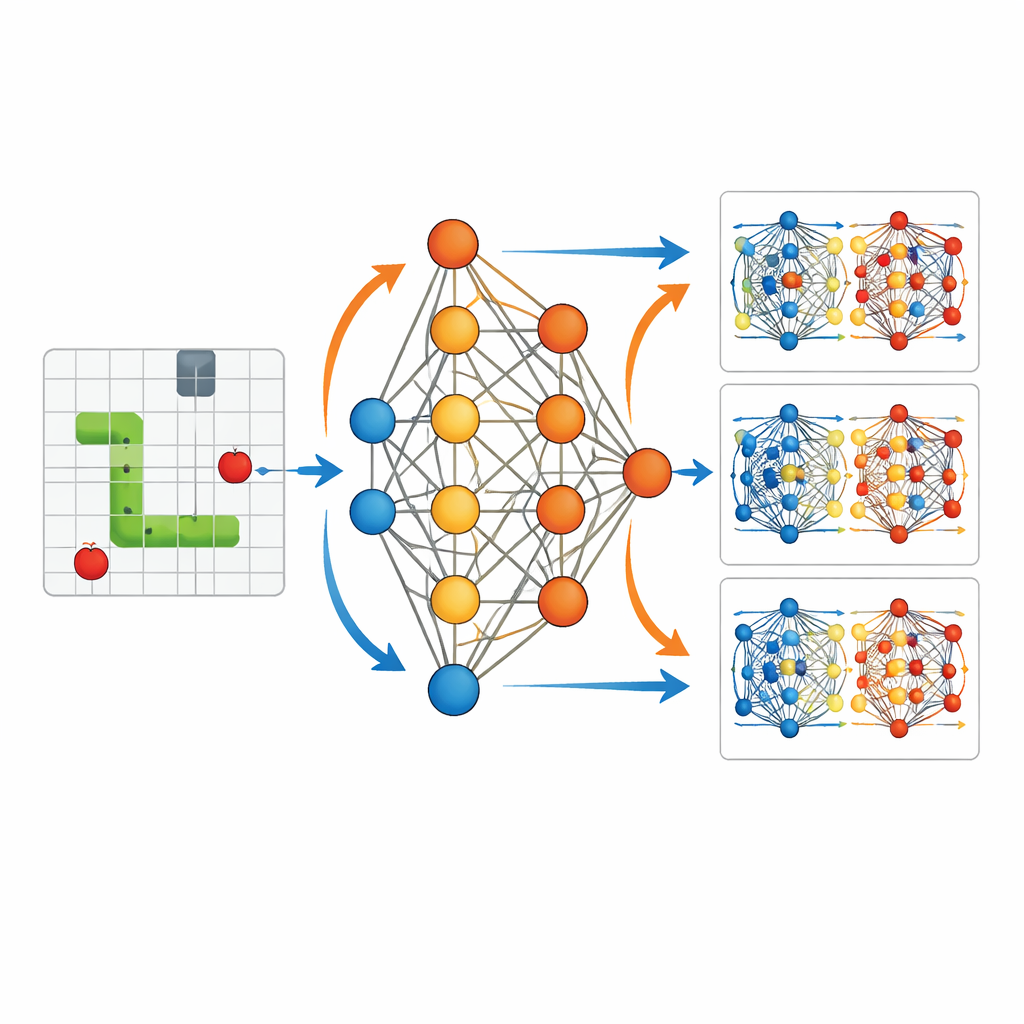

To make this connection concrete, the study uses a stripped-down setting: an AI agent learning to play the classic Snake game. The agent sees a compact description of its surroundings—where food, walls, and its own body lie relative to its head—and feeds these 11 bits of information into a small neural network with one hidden layer. The network outputs three options: turn left, go straight, or turn right. Each time the snake eats food, the agent receives a positive reward; if it crashes into a wall or itself, it gets a negative reward and the game ends. Crucially, the author records every single parameter of this network—3,584 in total—at every training step, so that the entire learning process can be replayed and inspected in detail.

Watching learning happen from the inside

With this full record, the paper visualizes how the network’s internal “weights” change as the snake learns. Early on, the actions are almost random, and the weight updates are scattered and small. Over many games, as the agent explores the grid and experiences many successes and failures, those updates begin to form structured patterns. The snake’s paths become longer and more directed toward food. The study shows that each small burst of learning after a move is like a short-range correction: information from immediate rewards ripples backward to adjust the parameters that produced that move. Periodically, the system also reuses past game experiences, recomputing how good or bad those old choices look under the latest parameters. This resembles how weather centers repeatedly linearize and re-optimize around an updated forecast in four-dimensional variational data assimilation.

From random wandering to purposeful motion

The contrast between trained and untrained agents makes the effect of these hidden updates easy to see. Without training, the snake meanders near its starting point, bumping into walls or itself with no apparent strategy. After training, the same network structure produces smooth, purposeful trajectories that actively home in on food while avoiding danger. Visualizations of parameter changes show that rewards at specific times selectively strengthen or weaken particular connections in the network, organizing its behavior. This mirrors how observational information in data assimilation gradually reshapes model initial conditions so that forecasts follow trajectories more consistent with reality.

What the study really shows

The work does not introduce a new learning algorithm or a new way to forecast the weather. Instead, it offers a clear, didactic picture of a shared principle: both deep reinforcement learning and variational data assimilation repeatedly run a model forward, measure how well it did, and then send that information backward to improve some set of adjustable quantities. In Snake, those quantities are neural-network weights that encode a strategy; in weather prediction, they are the atmospheric states that seed a forecast. By making the backward flow of information visible in a small, fully observable system, the paper gives atmospheric scientists a more intuitive feel for modern machine-learning dynamics and helps AI researchers appreciate the long history of similar ideas in geoscience.

Citation: Wang, KY. Visualising backward information propagation in deep reinforcement learning from a variational data assimilation perspective. Sci Rep 16, 11581 (2026). https://doi.org/10.1038/s41598-026-42086-x

Keywords: reinforcement learning, data assimilation, neural networks, weather prediction, optimization