Clear Sky Science

Learning-aided observer design for improving autonomous vehicle safety

Why smarter safety matters for self-driving cars

As cars take over more of the driving, they must stay in control even during sudden swerves, slippery roads, or tire problems. Today’s systems can tell if a car is clearly out of control, but they often struggle to notice the grey zone where the car is technically stable yet no longer following the intended path well. This paper presents a new way for self-driving cars to monitor their own behavior in real time, spotting both outright loss of stability and subtle loss of performance before they turn into accidents.

Watching how a car really moves

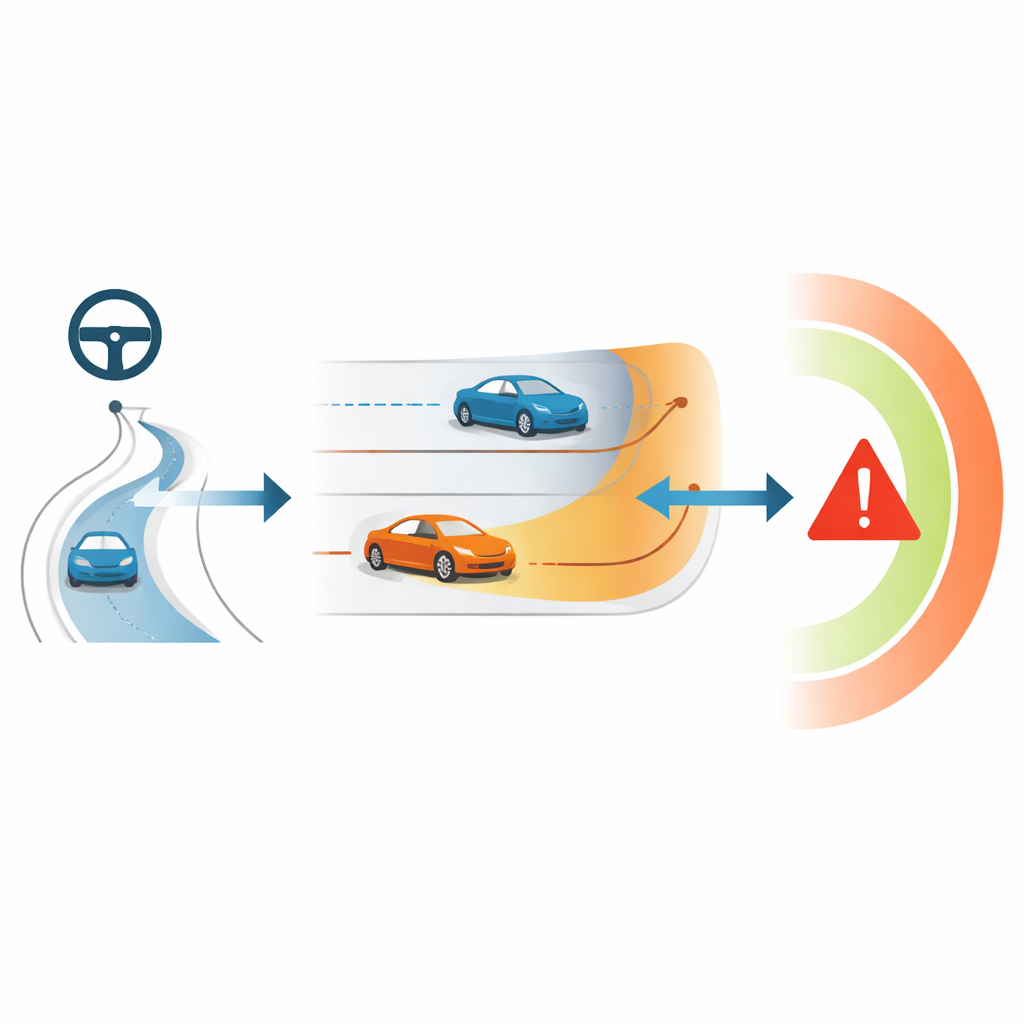

The core idea is to focus on how the car actually moves sideways when it turns or changes lanes. Engineers track quantities like how fast the car is rotating, how quickly it drifts sideways, and how far it strays from the planned path. These are not all directly measured by sensors, so they must be estimated. The authors build an "observer"—a piece of software that watches steering input, speed, and position and then reconstructs the hidden motion of the vehicle. If this observer can follow the true motion closely, it can serve as the car’s inner eye for safety.
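
To make the observer idea concrete, here is a minimal sketch on a toy system rather than the vehicle model from the paper. It assumes a point moving along a line where only position is measured, and it reconstructs the hidden velocity with a Luenberger-style correction. The function names, the time step, and the gain values are all illustrative assumptions, not the authors' design.

```python
def observer_step(x_hat, u, y, dt=0.1, gains=(1.0, 2.5)):
    """One update of a discrete-time Luenberger-style observer (toy example).

    Assumed toy plant: p[k+1] = p[k] + dt * v[k], v[k+1] = v[k] + dt * u[k],
    where only the position p is measured and the velocity v is hidden.
    """
    p_hat, v_hat = x_hat
    l1, l2 = gains
    innovation = y - p_hat  # measured output minus predicted output
    p_next = p_hat + dt * v_hat + l1 * innovation
    v_next = v_hat + dt * u + l2 * innovation
    return (p_next, v_next)


def estimate_hidden_velocity(true_velocity=2.0, steps=60, dt=0.1):
    """Run the observer against a simulated plant with zero input."""
    p, v = 0.0, true_velocity   # true state, unknown to the observer
    x_hat = (0.0, 0.0)          # observer starts with a wrong velocity guess
    for _ in range(steps):
        x_hat = observer_step(x_hat, u=0.0, y=p, dt=dt)
        p = p + dt * v          # the plant moves on (v stays constant here)
    return x_hat[1]
```

With these assumed gains the estimation error decays geometrically, so `estimate_hidden_velocity()` ends up very close to the true velocity even though velocity is never measured. The observer in the paper applies the same principle to the vehicle's lateral motion.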

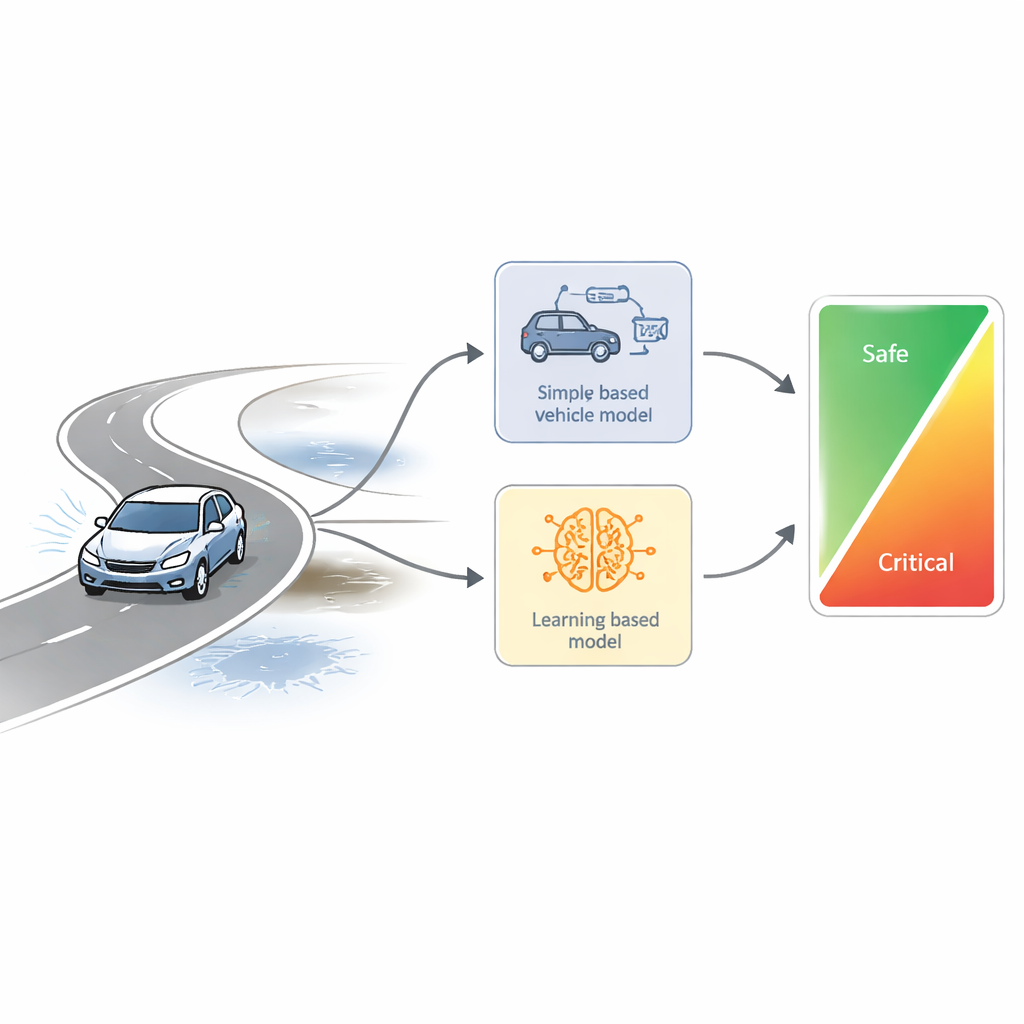

Blending physics and learning

Instead of relying on a single model, the new observer combines two very different views of the car. One view is a traditional physics-based model that treats the vehicle like a simplified bicycle: it uses mass, inertia, and tire stiffness to predict how the car should behave. This model is robust and well understood but less accurate when the car is pushed to its limits. The second view is a learning-based model trained with reinforcement learning, which learns from many driving examples how the car actually responds, especially in hard turns and other nonlinear situations. By running both observers in parallel, the system compares what the simple physics predicts with what the learning model expects under real-world conditions.
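
The physics-based view can be sketched with the textbook linear single-track ("bicycle") model. The code below is an illustration under stated assumptions, not the authors' implementation: the parameter values are invented for the example, the tires are assumed to be linear (force proportional to slip angle), and integration uses a plain Euler step.

```python
from dataclasses import dataclass


@dataclass
class BicycleParams:
    # Illustrative values only; not taken from the paper.
    mass: float = 1500.0        # vehicle mass [kg]
    inertia_z: float = 2500.0   # yaw moment of inertia [kg m^2]
    l_front: float = 1.2        # centre of gravity to front axle [m]
    l_rear: float = 1.6         # centre of gravity to rear axle [m]
    c_front: float = 80000.0    # front cornering stiffness [N/rad]
    c_rear: float = 80000.0     # rear cornering stiffness [N/rad]


def bicycle_step(state, steer, speed, p, dt):
    """Advance (lateral velocity, yaw rate) by one explicit Euler step."""
    v_y, yaw_rate = state
    # Linear tire model: lateral force is proportional to the slip angle.
    alpha_f = steer - (v_y + p.l_front * yaw_rate) / speed
    alpha_r = -(v_y - p.l_rear * yaw_rate) / speed
    f_front = p.c_front * alpha_f
    f_rear = p.c_rear * alpha_r
    # Newton's second law for lateral motion and for rotation about the z axis.
    v_y_dot = (f_front + f_rear) / p.mass - speed * yaw_rate
    yaw_acc = (p.l_front * f_front - p.l_rear * f_rear) / p.inertia_z
    return (v_y + dt * v_y_dot, yaw_rate + dt * yaw_acc)


def simulate_constant_steer(steer=0.02, speed=20.0, dt=0.01, steps=500):
    """Hold a small steering angle [rad] at constant speed [m/s]."""
    state = (0.0, 0.0)
    p = BicycleParams()
    for _ in range(steps):
        state = bicycle_step(state, steer, speed, p, dt)
    return state
```

The linear tire assumption is what makes this model simple and robust, and it is also why it loses accuracy near the limits of grip, where real tire forces saturate. That gap is what the learning-based model is meant to cover.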

Turning disagreement into a safety signal

The key safety measure in this work is the difference between the two observers. When the car is operating in a gentle, predictable regime, the learning model and the physics model agree closely, and this difference stays small. As the car approaches more extreme conditions—such as sharp lane changes at high speed, sudden loss of grip, or steering saturation—the learning model detects behavior that the simple model cannot capture, and the difference grows. The authors gather these differences into a single value, which can be pictured as the length of a vector inside a safe "bubble". As long as the value stays within a chosen inner limit, the motion is considered safe; when it crosses that limit, the system flags a safety-critical situation.
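
The "length of a vector inside a bubble" picture can be sketched as a norm followed by a threshold check. This is a minimal sketch: the summary does not give the exact formula, any state scaling, or the limit value used in the paper, so the Euclidean norm, the function names, and the `inner_limit` parameter are assumptions made for illustration.

```python
import math


def safety_index(x_physics, x_learned):
    """Euclidean length of the disagreement between two state estimates."""
    if len(x_physics) != len(x_learned):
        raise ValueError("estimates must have the same number of states")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x_physics, x_learned)))


def is_safety_critical(index, inner_limit):
    """True once the disagreement leaves the assumed inner 'bubble'."""
    return index > inner_limit
```

For example, `safety_index([0.0, 0.0], [3.0, 4.0])` gives 5.0, which `is_safety_critical` would flag for any limit below 5. In practice the states have different units (for instance a yaw rate and a lateral offset), so some scaling before taking the norm would likely be needed.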

Putting the method to the test

The researchers first test their approach in computer simulations where a virtual car follows a curved road at changing speeds. They show that the combined observer keeps estimation errors small while clearly signaling moments when the car is pushed hard and its lateral tracking degrades. Next, they move to real-world experiments using an autonomous test vehicle on a proving ground, including a standardized "moose test" with a sudden lane change. By carefully choosing which sensor signals and how much recent history to feed into the learning model, they improve its estimation accuracy and demonstrate that the safety index reliably lights up during risky motions and stays calm otherwise. Statistical measures confirm that the improved learning-aided observer cuts estimation errors by more than half compared with a simpler setup.

What this means for everyday driving

For a non-specialist, the takeaway is that this method gives self-driving cars a more nuanced sense of when they are getting close to the edge. Rather than only asking, "Is the car still stable?", the system also asks, "Is it still performing as expected, or starting to slide away from the plan?" By comparing a sturdy physics model with a flexible learning model, the car can detect danger earlier, even when its motion still looks acceptable on the surface. The authors show that this safety evaluation can run alongside existing controllers today and could, in future work, feed directly back into steering and braking decisions to keep automated vehicles safer in sudden, real-world emergencies.

Citation: Mihály, A., Németh, B., Kopasz, M. et al. Learning-aided observer design for improving autonomous vehicle safety. Sci Rep 16, 11858 (2026). https://doi.org/10.1038/s41598-026-35378-9

Keywords: autonomous driving safety, vehicle lateral dynamics, reinforcement learning, state estimation, driver assistance systems