
Breaking through safety performance stagnation in autonomous vehicles with dense learning

Why safer self-driving cars matter

Self-driving cars promise fewer crashes, less traffic, and more mobility for everyone. Yet after years of hype and billions of dollars invested, truly driverless cars that can handle all conditions are still rare on public roads. The main roadblock is safety: today’s systems struggle with unusual, high‑stakes situations such as a sudden cut‑in, an aggressive driver, or a confusing intersection. This paper introduces a new way to train autonomous vehicles that targets those rare but crucial moments, aiming to push safety close to human levels and unlock wider deployment.

The hidden problem of rare dangers

Most of the time, driving is uneventful: cars follow lanes, keep distance, and nothing bad happens. For learning algorithms, this is surprisingly bad news. Modern autonomous vehicles rely on deep learning, which improves by spotting patterns across huge amounts of data. But serious crashes and near‑crashes are very rare in that sea of normal driving. As vehicles get a bit safer, the most dangerous events become even rarer, starving the learning process of what it most needs. The authors call this the “curse of rarity.” It leads to high uncertainty in training and, in practice, to a kind of safety stagnation: fixing performance in one situation can make it worse in another, a tradeoff they describe as a “seesaw effect.”
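To make the rarity problem concrete, here is a small statistical sketch. It is a generic illustration, not the authors' analysis. It assumes each driving episode ends in a crash independently with probability `crash_prob`, a simplification, and computes how noisy the measured crash rate is for a fixed number of episodes. The function name and the numbers are hypothetical.

```python
import math


def relative_std_error(crash_prob: float, n_episodes: int) -> float:
    """Relative standard error of a crash rate estimated from n_episodes.

    Assumes independent episodes that each crash with probability
    crash_prob (a simplifying assumption for illustration only).
    """
    return math.sqrt((1.0 - crash_prob) / (n_episodes * crash_prob))


n_episodes = 1_000_000  # hypothetical, fixed data budget
for crash_prob in (1e-3, 1e-5, 1e-7):
    error = relative_std_error(crash_prob, n_episodes)
    print(f"crash probability {crash_prob:g}: relative error {error:.2f}")
```

With the data budget held fixed, the relative error grows as crashes become rarer, so a safer vehicle yields a noisier signal about what remains to be fixed.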

Why learning only from crashes backfires

Many developers try to beat this rarity problem by focusing on failures: they replay the worst crashes and troublesome edge cases, then train their systems to avoid those particular mistakes. The study shows that this intuitive strategy can be misleading. Concentrating just on crash data introduces bias: the system may become very good at a small set of scenarios while unknowingly becoming worse in other, equally important ones. In other words, the learning process is pushed off course. Rule‑based safety layers, which use hand‑crafted rules to prevent obvious dangers, help in some situations but struggle with the enormous variety and complexity of real‑world traffic. Together, these approaches have not been enough to continually improve overall safety.

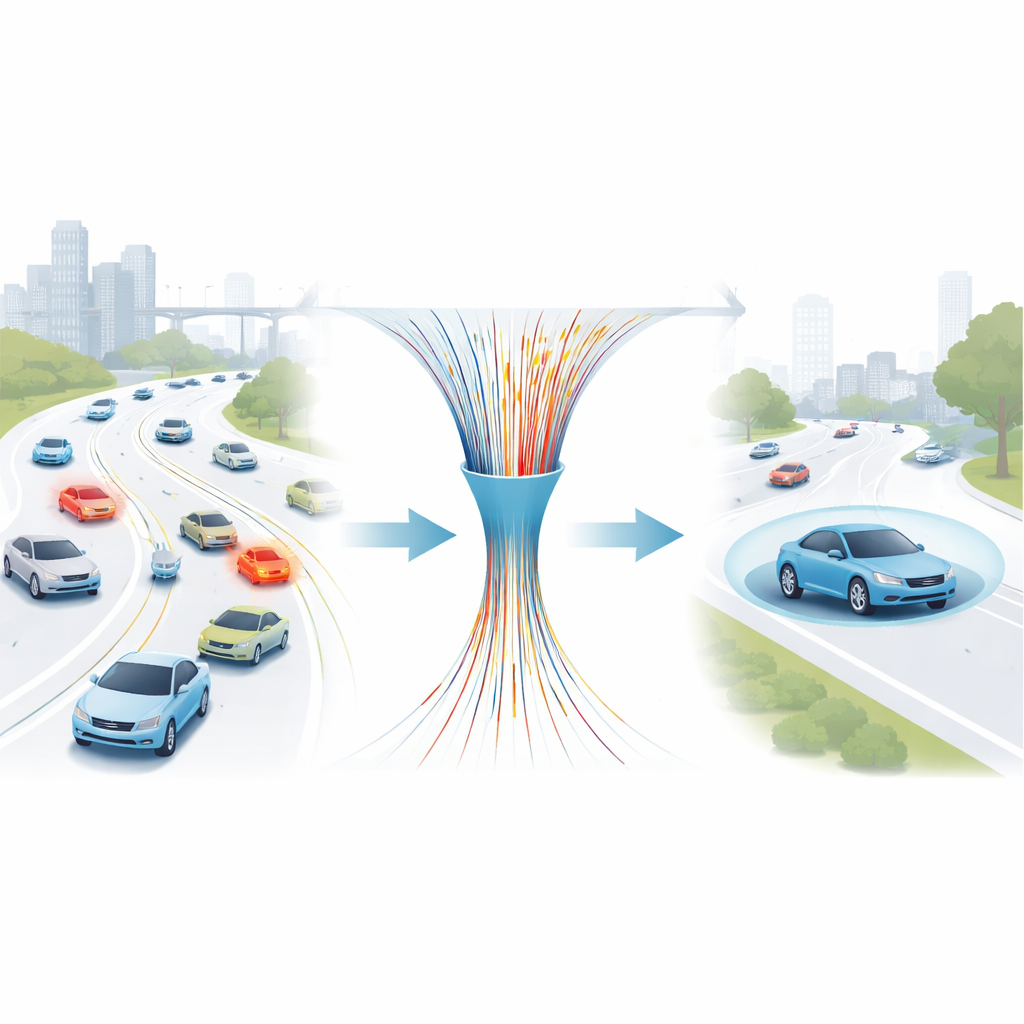

Making every useful moment count

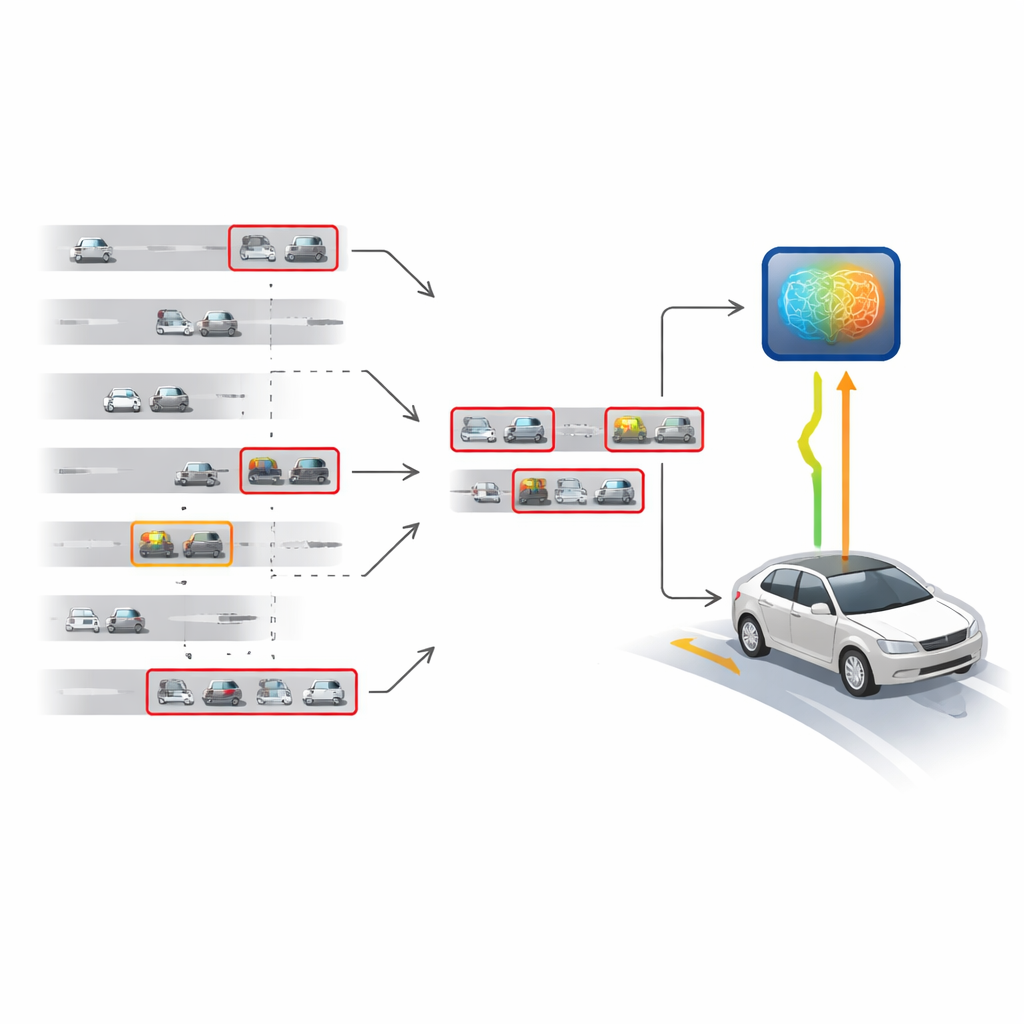

The authors propose a “dense learning” strategy that reshapes the training data instead of simply adding more of it. Rather than treating all driving moments equally, they sift through both simulated and real‑world episodes to keep only the most informative ones. These include not only avoidable crashes, where a better decision would have prevented impact, but also “near‑misses,” where a collision almost occurred but was successfully avoided. Long driving episodes are then trimmed to retain only the safety‑critical slices of time, and these slices are reconnected to form a compact, information‑rich training set. A learned safety score helps automatically flag risky states, and a retrospective step re‑checks past data against the latest driving policy using counterfactual simulation. This three‑layer densification—episode‑level, state‑level, and retrospective—greatly reduces randomness in learning while keeping the training signal honest.
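The first two densification layers described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Step` and `Episode` types, the `risk` field standing in for the learned safety score, and the 0.5 threshold are all hypothetical. The retrospective layer, which re-checks old data against the latest policy, is not shown.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    t: int
    risk: float  # hypothetical stand-in for the learned safety score, in [0, 1]


@dataclass
class Episode:
    steps: List[Step]
    avoidable_crash: bool = False
    near_miss: bool = False


def densify(episodes: List[Episode], risk_threshold: float = 0.5) -> List[Step]:
    """Keep informative episodes, then keep only their safety-critical steps."""
    dense: List[Step] = []
    for episode in episodes:
        # Episode-level filter: drop episodes with no avoidable crash or near-miss.
        if not (episode.avoidable_crash or episode.near_miss):
            continue
        # State-level filter: keep only steps flagged as risky, then join them.
        dense.extend(s for s in episode.steps if s.risk >= risk_threshold)
    return dense


episodes = [
    Episode([Step(0, 0.1), Step(1, 0.2)]),  # uneventful, dropped entirely
    Episode([Step(0, 0.1), Step(1, 0.7), Step(2, 0.9)], near_miss=True),
    Episode([Step(0, 0.6), Step(1, 0.3)], avoidable_crash=True),
]
dense = densify(episodes)
print(len(dense))  # 3 of the original 7 steps remain
```

In this toy data set, seven recorded steps shrink to three, all of them from moments the safety score marks as risky.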

A safety co‑driver for many kinds of cars

Using this dense learning pipeline, the team trains a safety‑focused driving agent called “SafeDriver.” Rather than replacing an existing autonomous driving system, SafeDriver acts like a protective co‑driver: during normal conditions, the base system is in charge, but when the learned safety score detects a dangerous situation, SafeDriver briefly takes over braking and steering to guide the car out of trouble. The researchers test this idea across a variety of conditions: high‑speed multi‑lane highways, complex roundabouts, and urban street networks built from large real‑world driving datasets. In simulations, adding SafeDriver cuts crash rates by about one to two orders of magnitude compared with the underlying systems alone, and reduces “avoidable” crashes even more sharply.
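The co‑driver handover can be pictured as a simple switch on the safety score. The sketch below is only an assumption about how such arbitration might look. The `Control` type, the `arbitrate` function, and the 0.8 takeover threshold are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Control:
    brake: float  # 0.0 (none) to 1.0 (full braking)
    steer: float  # steering command, sign gives direction


def arbitrate(risk: float, base_cmd: Control, safe_cmd: Control,
              takeover_threshold: float = 0.8) -> Control:
    """Return the safety agent's command only when the risk score is high."""
    if risk >= takeover_threshold:
        return safe_cmd  # dangerous state: the co-driver takes over
    return base_cmd      # normal driving: the base system stays in charge


base = Control(brake=0.0, steer=0.0)   # hypothetical base-system command
safe = Control(brake=1.0, steer=-0.2)  # hypothetical evasive command
print(arbitrate(0.10, base, safe))  # base command is used
print(arbitrate(0.95, base, safe))  # safety command is used
```

Keeping the base system in control by default means the safety agent only has to be good at the rare dangerous moments it was trained on.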

From simulation to the test track

To see if the approach holds up outside the computer, the team equips a real Lincoln sedan running the open‑source Autoware system with SafeDriver and evaluates it on the Mcity test track using a mixed‑reality setup. Virtual cars and traffic lights are blended into the real camera view, allowing repeatable, high‑risk scenarios without endangering human road users. After carefully tuning the simulator to match the physical car’s behavior, they show that SafeDriver reduces the overall crash rate in track tests by roughly 90 percent, and avoidable crashes by nearly 99 percent. The same densified training also improves performance on a large, diverse urban planning benchmark spanning four cities.

What this means for everyday drivers

In plain terms, this work shows that the path to safer self‑driving cars is not just more data, but smarter data. By concentrating training on the rare moments when safety hangs in the balance—both the close calls and the crashes that could have been avoided—the dense learning method provides a clearer, more stable signal for improvement without sacrificing performance elsewhere. While more research is needed to extend the idea to other safety‑critical machines, such as medical robots or aircraft, these results suggest that autonomous vehicles can break out of their current safety plateau. If widely adopted, approaches like this could bring self‑driving technology much closer to the level of reliability the public expects before trusting cars to drive themselves.

Citation: Feng, S., Zhu, H., Sun, H. et al. Breaking through safety performance stagnation in autonomous vehicles with dense learning. Nat Commun 17, 3163 (2026). https://doi.org/10.1038/s41467-026-69761-x

Keywords: autonomous vehicles, self-driving safety, reinforcement learning, rare events, machine learning training data