Clear Sky Science

The effect of medical explanations from large language models on diagnostic accuracy in radiology

Why this study matters

Modern hospitals are rapidly adopting powerful text-based artificial intelligence tools to help doctors make sense of complex medical information. Radiologists, who interpret medical scans such as CT and MRI images, are under constant pressure to give fast and accurate answers. This study asks a simple but important question: if an AI system not only gives a diagnosis but also explains its thinking, does that actually help doctors make better decisions—and what style of explanation works best?

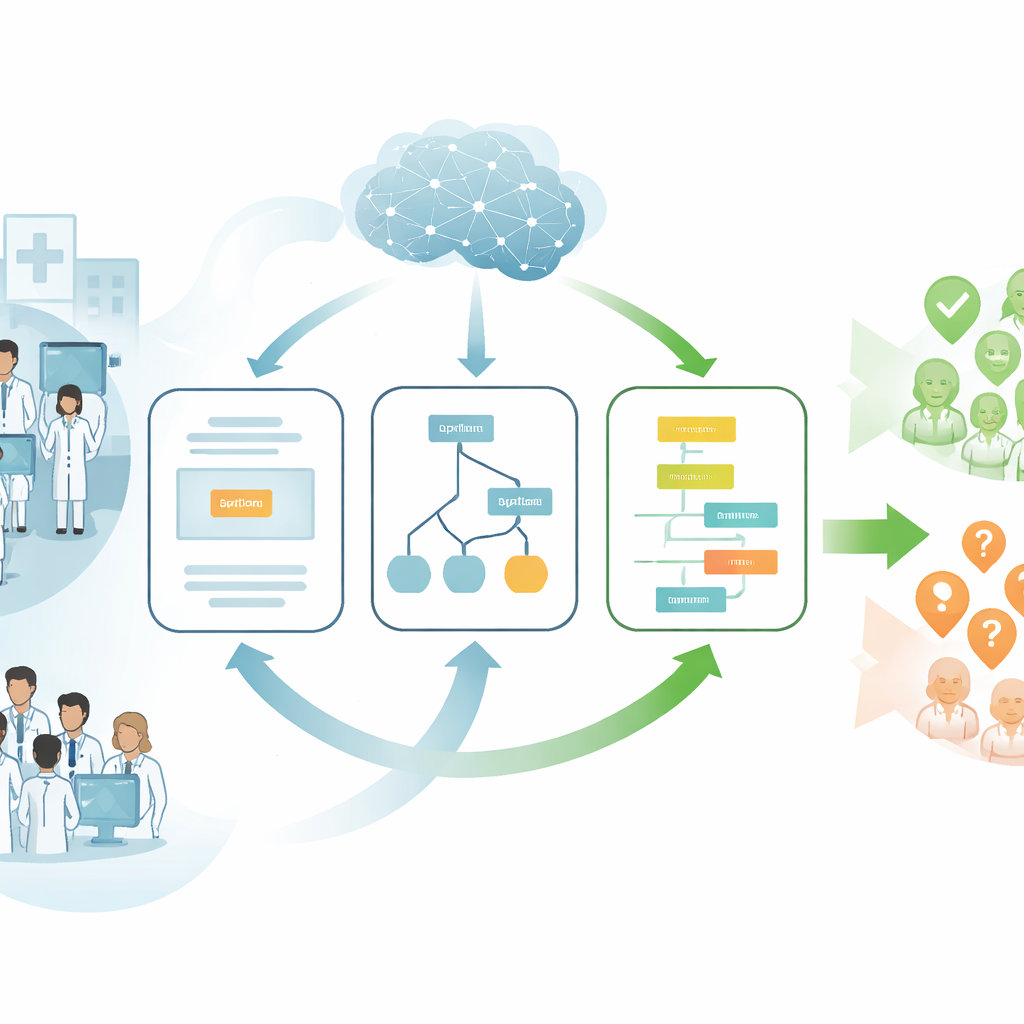

Different ways an AI can "talk" to doctors

The researchers focused on large language models, or LLMs—AI systems that can read and write natural language and, in this case, also look at medical images. Instead of treating the AI as a black box that spits out a single answer, they tested three different ways it could present its advice to radiologists. In one format, the AI simply named its best-guess diagnosis. In another, it listed several possible diagnoses, much like a doctor’s mental checklist. In the third, it walked through its reasoning step by step, laying out how details from the scan and the patient’s story led to its conclusion. The team wanted to know which of these explanation styles would best support human judgment rather than replace it.

A large test with real-world radiology cases

To explore this, the authors ran a randomized experiment with 101 practicing radiologists in the United States. Each radiologist reviewed 20 real patient cases drawn from an education series published by a leading medical journal. Every case included a short clinical description plus one or more CT or MRI images, and doctors had to type in a free-text diagnosis, just as they would in real life. Some radiologists received no AI help at all. Others saw AI advice in one of the three formats: a bare diagnosis, a ranked list of five possible diagnoses, or a detailed step-by-step explanation. The AI used was a multimodal version of GPT-4 that can handle both text and images. All its outputs—including mistakes—were shown as-is to mimic real-world usage.

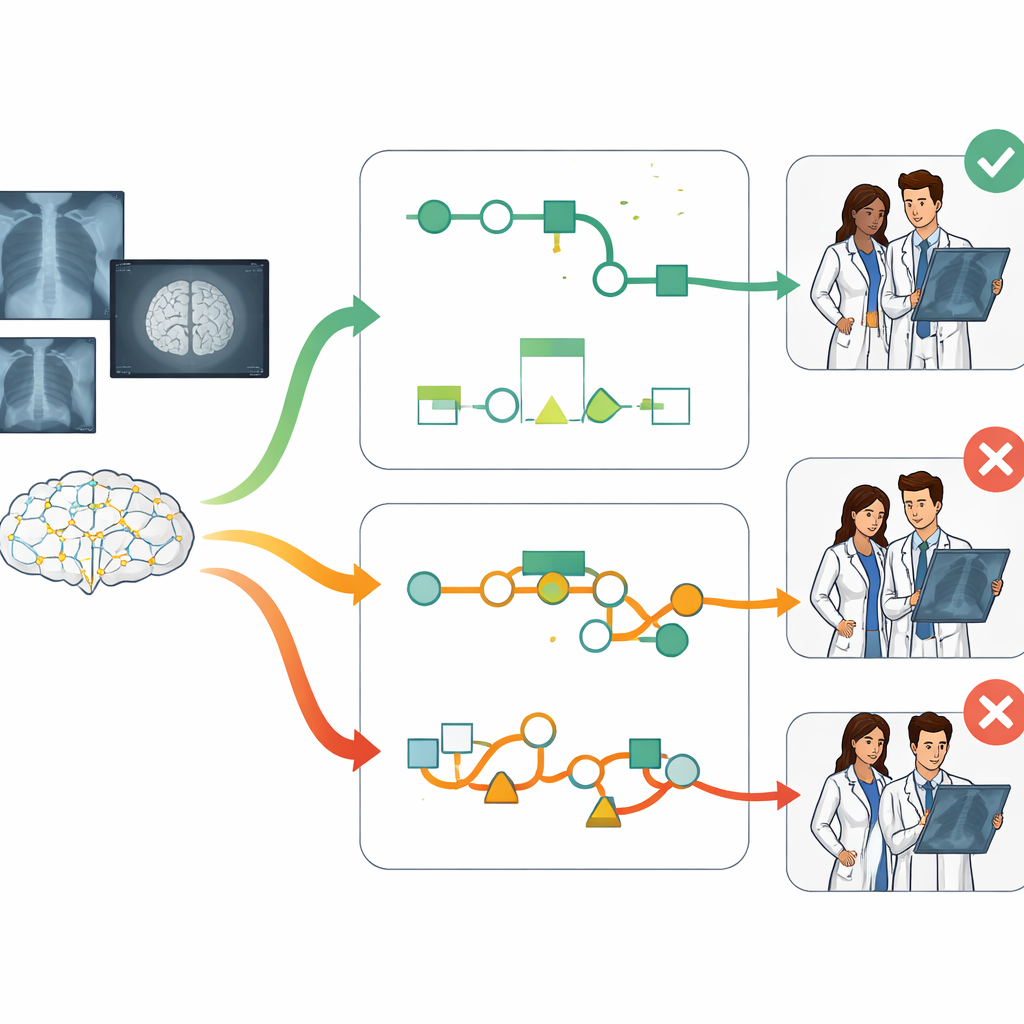

Step-by-step reasoning boosts accuracy

The central finding is that explanation style had a measurable effect on accuracy. Radiologists who saw the chain-of-thought style, meaning the step-by-step reasoning, were noticeably more accurate than those who worked without AI, and also more accurate than those who saw only a single diagnosis or a list of alternatives. On average, chain-of-thought support improved diagnostic accuracy by more than 12 percentage points over no AI help and by 7 to 10 percentage points over the other AI formats. These gains held up even after accounting for factors such as years of experience, subspecialty training, and how long doctors spent on each case, suggesting that the way information is presented can meaningfully change how well doctors perform.
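For readers who want to see what a gain "in percentage points" means in practice, here is a minimal sketch of the arithmetic. The records, the arm labels, and the helper names `accuracy_by_arm` and `percentage_point_gain` are all invented for illustration; they are not the study's data or the authors' analysis, which also adjusted for factors such as experience and time spent per case.

```python
from collections import defaultdict

# Toy records: (study arm, whether the radiologist's diagnosis was correct).
# These values are made up for illustration and are NOT data from the study.
toy_responses = [
    ("no_ai", True), ("no_ai", False), ("no_ai", False), ("no_ai", False),
    ("chain_of_thought", True), ("chain_of_thought", True),
    ("chain_of_thought", False), ("chain_of_thought", False),
]


def accuracy_by_arm(responses):
    """Return the share of correct diagnoses in each arm, as a fraction."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for arm, is_correct in responses:
        total[arm] += 1
        correct[arm] += int(is_correct)
    return {arm: correct[arm] / total[arm] for arm in total}


def percentage_point_gain(accuracy, arm, baseline):
    """Absolute difference between two accuracies, in percentage points."""
    return (accuracy[arm] - accuracy[baseline]) * 100


accuracy = accuracy_by_arm(toy_responses)
gain = percentage_point_gain(accuracy, "chain_of_thought", "no_ai")
print(f"Toy gain over no AI: {gain:.1f} percentage points")
```

A percentage-point gain is an absolute difference between two accuracy rates. It is not the same as a relative percent increase: in the toy data, accuracy doubles from 25% to 50%, yet the gain is 25 percentage points.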

Following good advice and rejecting bad advice

The study also examined how doctors responded when the AI was right and when it was wrong. With the ranked list of possible diagnoses (the differential diagnosis format), radiologists tended to follow the AI’s top suggestion even when it was incorrect, a pattern of excessive trust known as automation bias. In contrast, the chain-of-thought format encouraged more selective reliance. When the AI’s diagnosis was correct, doctors were very likely to agree with it. But when something in the step-by-step reasoning seemed off, they were more willing to override the AI and choose a different answer. In other words, detailed reasoning helped doctors judge when to lean on the machine and when to trust their own expertise.
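One way to make "selective reliance" concrete is to split how often doctors agree with the AI by whether the AI was actually right. The sketch below does this on a handful of invented cases. The data, the function name `reliance_rates`, and the two metric names are assumptions made for illustration, not the measures or results reported by the authors.

```python
# Toy records: (AI diagnosis was correct, radiologist's final answer matched the AI).
# These values are made up for illustration and are NOT data from the study.
toy_cases = [
    (True, True), (True, True), (True, False),
    (False, True), (False, False), (False, False),
]


def reliance_rates(cases):
    """Split agreement with the AI by whether the AI was right or wrong."""
    followed_when_right = [followed for ai_ok, followed in cases if ai_ok]
    followed_when_wrong = [followed for ai_ok, followed in cases if not ai_ok]

    def share(flags):
        # Fraction of True values; None when there are no cases of this kind.
        return sum(flags) / len(flags) if flags else None

    return {
        # High values are desirable: good advice is being used.
        "followed_correct_advice": share(followed_when_right),
        # High values suggest automation bias: bad advice is being accepted.
        "followed_incorrect_advice": share(followed_when_wrong),
    }


rates = reliance_rates(toy_cases)
for name, value in rates.items():
    print(name, value)
```

Under this framing, a format that supports good judgment would show a large gap between the two rates: doctors follow the AI often when it is right and rarely when it is wrong.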

Robust results across skills and specialties

The advantages of step-by-step explanations were seen in a wide range of situations. Radiologists with both shorter and longer careers benefited, as did those with basic or advanced computer skills. The pattern held for easier and harder cases and for both general radiologists and those working in specialized areas, such as neuroradiology or abdominal imaging. The authors also ran numerous statistical checks—controlling for the AI’s own accuracy, the length of its outputs, and different modeling assumptions—and found that the superiority of chain-of-thought explanations was remarkably stable.

What this means for patients and future AI tools

For patients, the message is cautiously optimistic: AI can help radiologists, but how it communicates its reasoning is crucial. Simply listing possibilities or giving a confident-sounding answer is not enough and may even nudge doctors toward incorrect choices. In this controlled experiment, AI that “thinks out loud” in a clear, stepwise fashion helped physicians better spot when the machine was right and when it was wrong, leading to fewer diagnostic errors overall. As hospitals continue to integrate AI into clinical workflows, designing systems that prioritize transparent, reasoning-focused explanations could play a key role in making medical diagnoses safer and more reliable.

Citation: Spitzer, P., Hendriks, D., Rudolph, J. et al. The effect of medical explanations from large language models on diagnostic accuracy in radiology. npj Digit. Med. 9, 333 (2026). https://doi.org/10.1038/s41746-026-02619-0

Keywords: radiology diagnosis, medical AI, large language models, chain-of-thought explanations, clinical decision support