Clear Sky Science

PsychiatryBench: a multi-task benchmark for LLMs in psychiatry

Why this work matters for mental health and AI

Mental health problems touch hundreds of millions of people worldwide, yet many never receive timely, high-quality care. At the same time, powerful chatbots based on large language models (LLMs) are rapidly entering doctors’ offices, therapy apps, and even everyday search engines. This article introduces PsychiatryBench, a new way to test how well these systems really understand psychiatric medicine. It asks a simple but urgent question: can today’s AI tools think through complex mental health cases in a way that is safe, reliable, and close to expert standards?

A new test built from real clinical knowledge

Most previous attempts to gauge AI in mental health relied on social media posts, small interview transcripts, or even conversations made up by other AI systems. These are far from the carefully reasoned case histories and exam questions used to train psychiatrists. PsychiatryBench takes a different route. The authors built a 5,188‑item question set drawn only from trusted psychiatric textbooks, casebooks, and self‑exam guides. The benchmark covers eleven types of tasks, from naming a diagnosis and choosing a treatment to planning ongoing care, answering foundational knowledge questions, and following a case over time. The focus is adult and geriatric psychiatry in outpatient settings, where doctors must weigh overlapping symptoms, medical side effects, and long‑term management rather than only dramatic emergencies.

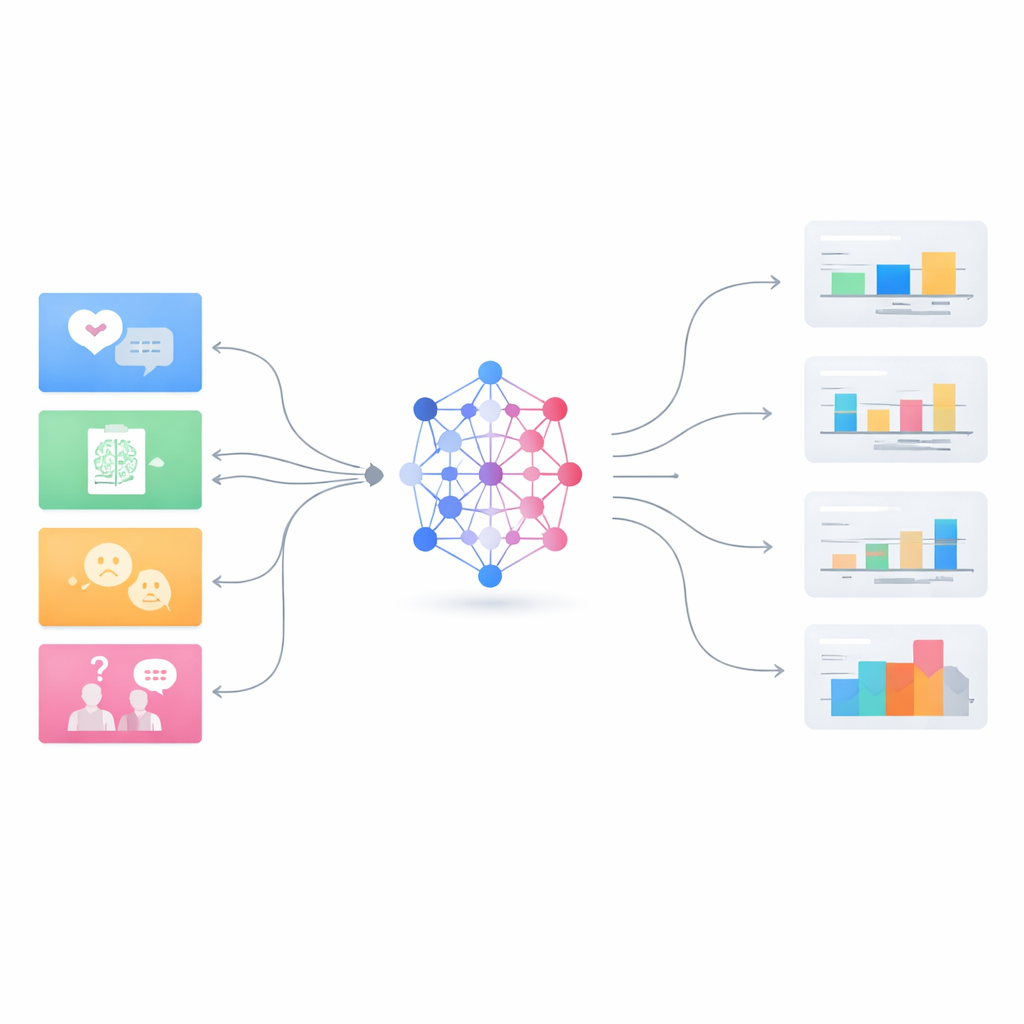

How the AI models were put to the test

The team evaluated fifteen leading LLMs, including general‑purpose systems from major tech companies and several models tailored specifically to medicine. For structured tasks like multiple‑choice questions, they scored answers against the answer key in the usual way. For open‑ended responses, such as “How would you manage this case?”, they used another strong language model as a judge, which rated how closely each answer matched an expert reference on a 0–100 similarity scale. This allowed them to probe not just fact recall but also the quality of reasoning and how closely the AI’s clinical logic matched that of experienced psychiatrists. Special scoring methods were used for complex exam formats, such as extended matching items, where a single list of options must be applied correctly to several vignettes.
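To make these scoring ideas concrete, here is a minimal Python sketch. It is not the authors' code: all function names are hypothetical, the judge model's 0–100 rating is assumed to be already available as a number, and the extended matching rule (credit for each vignette matched correctly) is an assumed partial-credit scheme, since the summary does not say which special scoring method the study actually used.

```python
from statistics import mean


def score_mcq(predicted: str, correct: str) -> float:
    """Exact-match scoring for one multiple-choice item: 1.0 if right, else 0.0."""
    return 1.0 if predicted.strip().upper() == correct.strip().upper() else 0.0


def score_open_ended(judge_similarity: float) -> float:
    """Rescale a judge model's 0-100 similarity rating to the 0-1 range.

    Assumption: the rating has already been obtained from the judge model.
    """
    if not 0 <= judge_similarity <= 100:
        raise ValueError("judge similarity must be between 0 and 100")
    return judge_similarity / 100.0


def score_emi(predicted: dict[str, str], key: dict[str, str]) -> float:
    """Hypothetical partial-credit rule for an extended matching item.

    Returns the fraction of vignettes whose chosen option matches the key.
    """
    if not key:
        raise ValueError("answer key is empty")
    hits = sum(1 for vignette, option in key.items() if predicted.get(vignette) == option)
    return hits / len(key)


def task_average(scores: list[float]) -> float:
    """Average item scores (each in 0-1) for one task, as a percentage."""
    return 100.0 * mean(scores)
```

For example, `task_average([score_mcq("b", "B"), score_mcq("c", "A")])` gives 50.0, and an extended matching item with one of two vignettes matched scores 0.5 under this assumed rule.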

What today’s systems can do—and where they fall short

Across the full benchmark, a clear top group of frontier models emerged. Newer general‑purpose systems such as GPT‑5 Medium and Claude Sonnet 4.5 in “thinking” mode reached average scores in the mid‑80% range and performed strongly on demanding tasks like diagnosis, treatment planning, and multi‑step follow‑up questions. They also showed relatively stable performance across very different task formats, suggesting robust reasoning rather than narrow trick‑learning. In contrast, smaller or older models lagged well behind, and some medical‑specific models swung widely between high scores on factual exams and weak performance on open‑ended clinical reasoning. Even the leaders struggled with the hardest tasks: fine‑grained classification of specific disorders with overlapping symptoms, and exam items that require choosing among many closely similar options.

The generalist–specialist paradox in medical AI

One of the most striking findings is that models trained broadly on many types of text often outperformed models trained specifically on biomedical literature when it came to complex psychiatric reasoning. Specialized medical models like MedGemma excelled at knowledge‑heavy tasks such as multiple‑choice questions and detailed disorder labels, but they generally fell behind on flexible, narrative tasks that mirror real clinic visits. This “generalist–specialist paradox” suggests that sheer exposure to medical text is not enough; the ability to integrate context, juggle uncertainty, and revise hypotheses—as strong general models do—is crucial for psychiatry. At the same time, the study shows that adding more “thinking” steps helps some architectures but not others, hinting that useful deliberation in AI must be carefully designed, not simply forced.

Limits, safeguards, and what comes next

Despite encouraging scores, the authors stress that these systems are not ready to make unsupervised clinical decisions. The benchmark draws on polished textbook cases, not messy real‑world records, crises, or culturally diverse presentations. It does not test whether a chatbot might mishandle an actively suicidal person, reinforce delusions, or respond in ways that erode trust. The scoring itself relies on another AI judge, which introduces its own biases. As a result, PsychiatryBench should be seen as a foundational laboratory test, not a certificate of safety. The authors argue that, for now, LLMs are best suited to supporting education, documentation, and early brainstorming under careful human oversight.

What this means for patients and clinicians

For lay readers, the takeaway is both hopeful and cautionary. Modern language models are beginning to approximate parts of expert psychiatric reasoning, especially in structured, textbook‑like settings. They can already help students practice, assist clinicians with summaries, and surface guideline‑based options. But they also show predictable blind spots in subtle diagnosis, multi‑label classification, and handling ambiguous cases—exactly the areas where mistakes can be most harmful. PsychiatryBench shines a light on these strengths and weaknesses, offering a transparent way to track progress and design safer systems. In plain terms, the study suggests that AI can become a useful assistant in mental healthcare, but only if its abilities are measured honestly and its role is kept firmly under the guidance of trained professionals.

Citation: Fouda, A.E., Hassan, A.A., Hanafy, R.J. et al. PsychiatryBench: a multi-task benchmark for LLMs in psychiatry. npj Digit. Med. 9, 320 (2026). https://doi.org/10.1038/s41746-026-02582-w

Keywords: psychiatry benchmark, large language models, mental health AI, clinical reasoning, medical evaluation datasets