Clear Sky Science

Few-shot android malware classification with quantum-enhanced prototypical learning and drift detection

Stopping Bad Apps Before They Spread

Most of us carry a powerful computer in our pockets, and that convenience comes with a hidden race: security teams trying to spot new Android malware as fast as criminals invent it. Traditional defenses need thousands of known bad apps to learn what to block, which is far too slow when entirely new malware families appear every week. This paper introduces a smarter detector that can learn from only a handful of examples, keep up as attacks evolve over time, and still explain why it flags a given app—offering a blueprint for more resilient protection on everyday phones.

Why New Threats Are So Hard to Catch

Android now dominates the global phone market, making it a lucrative target for malware authors who churn out hundreds of thousands of new samples daily. Real-world datasets are skewed: a few malware families contain huge numbers of apps, while many emerging families have fewer than ten known samples. On top of that, attackers constantly change their tactics, causing the statistical “shape” of the data to drift over months and years. Classic machine-learning systems trained once on high-dimensional technical features struggle in this setting: they need many labeled examples of each family, they become brittle when the threat landscape shifts, and retraining them from scratch is costly and slow.

Learning From Just a Few Bad Examples

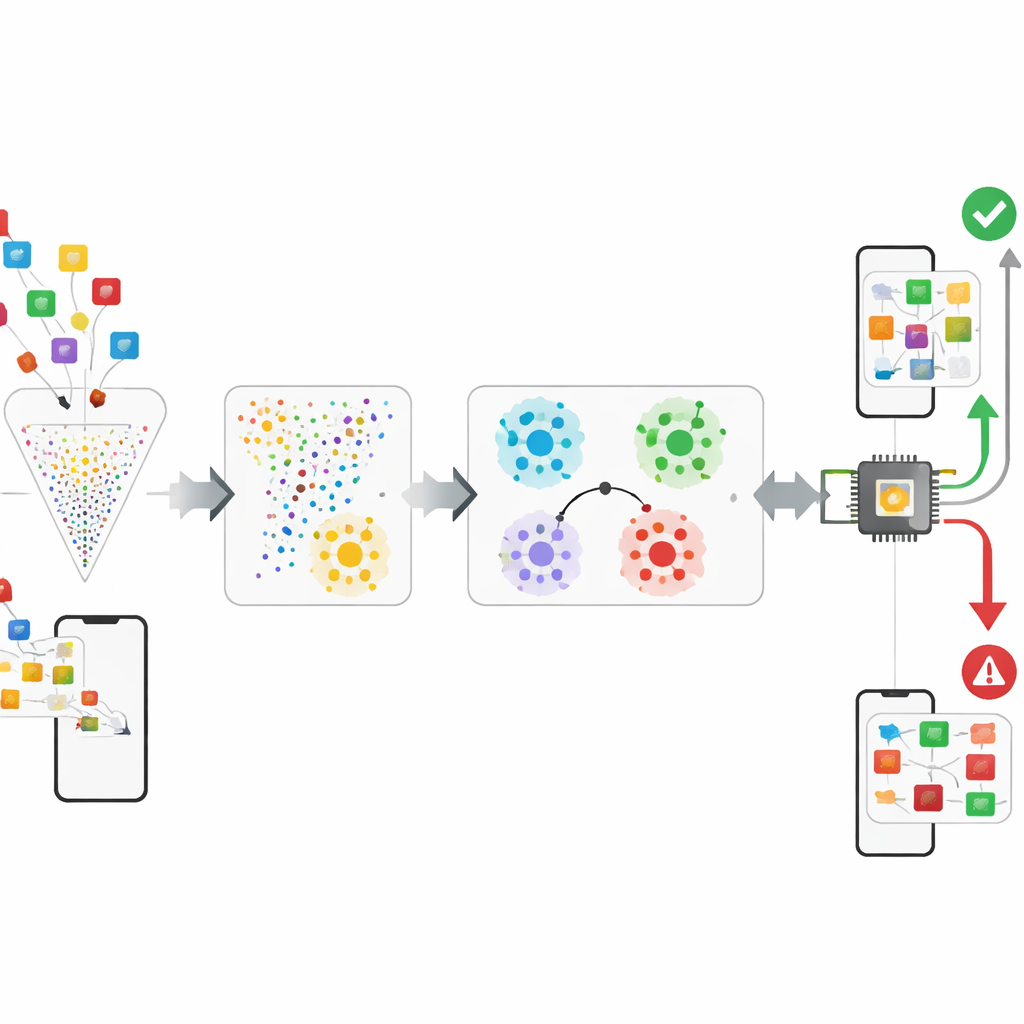

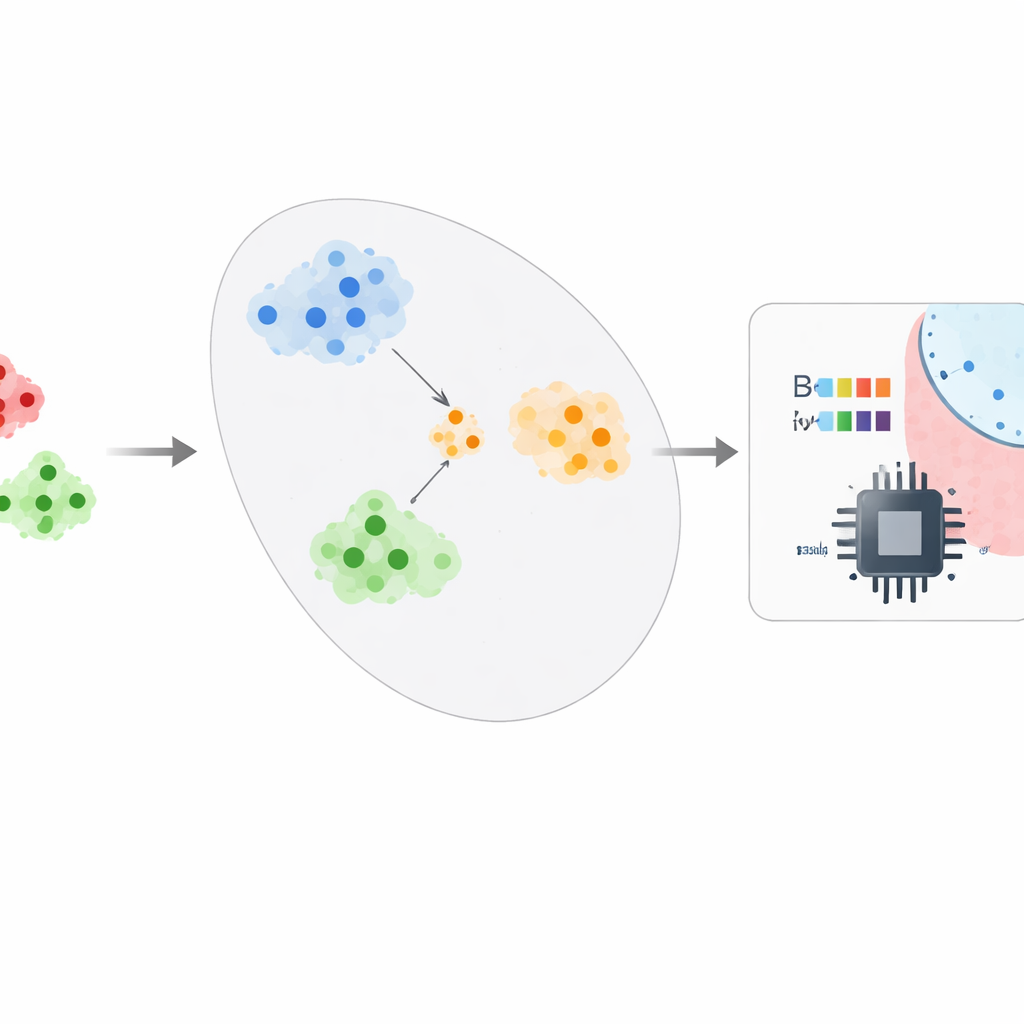

The authors propose a framework that treats malware detection more like learning a sense of "similarity" than memorizing labels. After pruning the raw Android features down by about 95–99% using CatBoost, a gradient-boosting method that can rank features by how much they contribute to its predictions, the system feeds these compact descriptions into a "prototypical" network. During training, the network repeatedly solves small practice tasks where it must tell apart a few classes using only a few examples of each. Over time, it learns an internal map where apps from the same family end up close together, and different families form well-separated clusters. At deployment, security analysts only need around five confirmed samples of a new malware family: the system averages their positions to form a prototype and classifies new apps by checking which prototype they are closest to, turning a data-hungry problem into a few-shot one.
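The prototype step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the two-dimensional embeddings, the family names, and the choice of Euclidean distance are all assumptions made for the example. In the real system the embeddings would come from the trained network.

```python
import math

def prototype(embeddings):
    """Average a list of equal-length embedding vectors into one prototype."""
    n = len(embeddings)
    return [sum(column) / n for column in zip(*embeddings)]

def classify(query, prototypes):
    """Return the family whose prototype is nearest to the query embedding.

    Euclidean distance is an assumption; the article only says "closest".
    """
    return min(prototypes, key=lambda name: math.dist(query, prototypes[name]))

# Hypothetical embeddings: five confirmed samples per family.
support = {
    "family_a": [[0.9, 1.1], [1.0, 0.8], [1.2, 1.0], [0.8, 0.9], [1.1, 1.2]],
    "family_b": [[4.0, 3.9], [4.2, 4.1], [3.8, 4.0], [4.1, 4.2], [3.9, 3.8]],
}
prototypes = {name: prototype(vectors) for name, vectors in support.items()}

# A new app lands near family_a's cluster, so it receives that label.
label = classify([1.05, 0.95], prototypes)
```

Adding a newly discovered family then amounts to computing one more prototype from its handful of samples, with no retraining of the embedding network in this sketch.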

Adding Quantum Nuance and Watching for Change

To squeeze more insight from the already compressed features, the framework experiments with a small quantum-inspired classification layer. A four-qubit circuit encodes a tiny vector of features into a quantum state, entangles the qubits, and then measures them; a simple classical layer then turns those measurements into a decision. In simulation, this hybrid step adds a modest but statistically significant bump in accuracy, hinting that quantum devices may one day help capture subtle relationships between behaviors inside an app. At the same time, the system explicitly monitors how well it performs over chronological slices of data drawn from a time-stamped Android dataset. By training on earlier slices and testing on later ones, it can measure how much accuracy erodes as malware behavior drifts and flag when retraining becomes necessary.
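To make the encode, entangle, and measure steps concrete, here is a small pure-Python simulation of a four-qubit circuit. It is a sketch under stated assumptions: the article does not specify the gates, so the RY angle encoding, the chain of CNOT gates, the Pauli-Z readout, and the logistic output layer are all illustrative choices, and the weights are hypothetical. A trainable circuit would normally also contain adjustable rotation gates, which are omitted here for brevity.

```python
import math

N_QUBITS = 4

def apply_ry(state, q, theta):
    """Rotate qubit q about the Y axis by theta (amplitudes stay real)."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    out = state[:]
    for i in range(len(state)):
        if not (i >> q) & 1:              # visit each |..0..>, |..1..> pair once
            j = i | (1 << q)
            out[i] = c * state[i] - s * state[j]
            out[j] = s * state[i] + c * state[j]
    return out

def apply_cnot(state, control, target):
    """Flip the target qubit wherever the control qubit is 1."""
    out = state[:]
    for i in range(len(state)):
        if (i >> control) & 1 and not (i >> target) & 1:
            j = i | (1 << target)
            out[i], out[j] = state[j], state[i]
    return out

def expect_z(state, q):
    """Expectation value of Pauli-Z on qubit q, between -1 and +1."""
    return sum(a * a * (-1 if (i >> q) & 1 else 1) for i, a in enumerate(state))

def circuit(features):
    """Encode four features as rotation angles, entangle, and measure."""
    state = [0.0] * (2 ** N_QUBITS)
    state[0] = 1.0                        # start in |0000>
    for q, x in enumerate(features):
        state = apply_ry(state, q, x)     # assumed angle encoding
    for q in range(N_QUBITS - 1):
        state = apply_cnot(state, q, q + 1)   # assumed entangling chain
    return [expect_z(state, q) for q in range(N_QUBITS)]

def classify(features, weights, bias):
    """Classical layer: logistic function of the weighted measurements."""
    score = sum(w * z for w, z in zip(weights, circuit(features))) + bias
    return 1 / (1 + math.exp(-score))

# Hypothetical feature values and weights, for illustration only.
measurements = circuit([0.3, 1.2, 0.7, 2.0])
probability = classify([0.3, 1.2, 0.7, 2.0], [0.5, -0.4, 0.3, -0.2], 0.1)
```

Because the CNOT gates link neighbouring qubits, each measurement depends on several input features at once, which is the kind of feature interaction the hybrid layer is meant to capture.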

Putting the Approach to the Test

The researchers evaluate their framework on two large public datasets. One, CCCS-CIC-AndMal-2020, contains hundreds of thousands of Android apps across many malware families and benign programs, each described by over 9,000 code and behavior features. The other, KronoDroid, offers fewer features but includes time stamps from 2008 to 2020, making it ideal for tracking change over time. After feature selection, the system uses only 51 features on CCCS-CIC-AndMal-2020 and 29 on KronoDroid, yet still reaches around 99–100% accuracy, with very low false-alarm and miss rates. The evaluation also shows that the framework can classify entirely held-out malware families with only a small drop in performance, and that its accuracy degrades only slightly across simulated time periods when periodic retraining is allowed.
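The chronological evaluation, training on earlier slices, testing on later ones, and retraining when accuracy erodes, can be sketched as a simple loop. Everything specific here is an assumption made for illustration: the one-feature threshold model stands in for the real classifier, the 5-point `max_drop` tolerance is arbitrary, and the toy slices are invented rather than drawn from KronoDroid.

```python
def accuracy(predict, samples):
    """Fraction of (feature, label) pairs that predict() labels correctly."""
    return sum(predict(x) == y for x, y in samples) / len(samples)

def train_threshold(samples):
    """Toy stand-in model: split one feature midway between the class means."""
    benign = [x for x, y in samples if y == 0]
    malware = [x for x, y in samples if y == 1]
    cut = (sum(benign) / len(benign) + sum(malware) / len(malware)) / 2
    return lambda x: int(x > cut)

def monitor_drift(train, slices, max_drop=0.05):
    """Train on the first slice, then test on each later slice in time order.

    Flags drift when accuracy falls more than max_drop below the reference,
    and retrains on all data seen so far. Returns (slice, accuracy, drifted).
    """
    history = list(slices[0])
    predict = train(history)
    reference = accuracy(predict, history)
    log = []
    for t, batch in enumerate(slices[1:], start=1):
        acc = accuracy(predict, batch)
        drifted = reference - acc > max_drop
        log.append((t, acc, drifted))
        history.extend(batch)
        if drifted:
            predict = train(history)              # retrain after a flagged drop
            reference = accuracy(predict, history)
    return log

# Invented slices: malware (label 1) shifts toward benign values at slice 2.
slices = [
    [(1.0, 0), (1.2, 0), (0.8, 0), (5.0, 1), (5.2, 1), (4.8, 1)],
    [(1.1, 0), (0.9, 0), (4.9, 1), (5.1, 1)],
    [(1.0, 0), (1.1, 0), (2.8, 1), (2.9, 1)],
    [(0.9, 0), (1.0, 0), (2.9, 1), (3.0, 1)],
]
log = monitor_drift(train_threshold, slices)
```

In this toy run the third slice is where the old threshold starts missing the shifted malware, so the loop flags drift there and the retrained model recovers on the final slice.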

Seeing Inside the Black Box

Beyond raw scores, the authors use modern explanation tools to see which behaviors most strongly influence decisions. They find that low-level actions on files—such as how apps manipulate file descriptors or create and rename directories—are especially telling signals of malicious intent. By highlighting, for each flagged app, which behaviors pushed the prediction toward "malware" or "benign," the system gives human analysts a way to audit and trust its judgments, and to understand where stealthy samples still slip through. This analysis also exposes edge cases: for instance, some legitimate file managers resemble malware because they perform intensive file operations.
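One simple way to see which behaviors push a prediction toward "malware" or "benign" is occlusion: replace one feature at a time with a typical baseline value and record how much the score changes. The sketch below uses that technique as a stand-in, since the article does not name the explanation tools the authors used. The feature names, weights, and linear scoring function are hypothetical.

```python
def occlusion_attribution(score, sample, baseline):
    """Per-feature contribution to the score for one sample.

    Each feature is swapped for its baseline value in turn; the resulting
    drop in score is that feature's contribution. Positive values push
    toward "malware", negative values toward "benign".
    """
    full = score(sample)
    contributions = {}
    for name in sample:
        occluded = dict(sample)
        occluded[name] = baseline[name]
        contributions[name] = full - score(occluded)
    return contributions

# Hypothetical linear malware score over three illustrative features.
WEIGHTS = {"dir_rename_calls": 0.5, "fd_dup_calls": 0.25, "ui_events": -0.125}

def malware_score(features):
    return sum(WEIGHTS[name] * value for name, value in features.items())

flagged_app = {"dir_rename_calls": 8, "fd_dup_calls": 4, "ui_events": 8}
typical_benign = {"dir_rename_calls": 2, "fd_dup_calls": 4, "ui_events": 0}

contributions = occlusion_attribution(malware_score, flagged_app, typical_benign)
top_feature = max(contributions, key=contributions.get)
```

A legitimate file manager would show the same large positive contribution from file operations, which is why an analyst reviewing these attributions can spot the edge cases mentioned above.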

What This Means for Everyday Security

In simple terms, this work shows that it is possible to build an Android malware detector that learns a general "sense" of bad behavior, can be quickly updated with just a few confirmed samples of a new threat, and stays reliable even as attackers gradually change their tricks. While the quantum part is still exploratory and the tests rely on curated datasets, the overall framework points toward future phone security tools that are lighter, faster to adapt, and more transparent about their reasoning—helping defenders keep pace with a rapidly evolving mobile threat landscape.

Citation: Tawfik, M., Tarazi, H., Dalalah, A. et al. Few-shot android malware classification with quantum-enhanced prototypical learning and drift detection. Sci Rep 16, 10744 (2026). https://doi.org/10.1038/s41598-026-45738-0

Keywords: Android malware, few-shot learning, quantum machine learning, concept drift, cybersecurity