Clear Sky Science

Analyzing the effect of reasoning-based supervision on face anti-spoofing

Why fake faces matter to everyday security

Face recognition unlocks phones, opens office doors, and guards sensitive data. But these systems can be fooled by simple tricks, like holding up a printed photo, playing a video on a tablet, or wearing a realistic mask. This study looks at a new way to make such systems both harder to fool and easier for humans to understand by teaching them to “explain” their decisions in plain language while they learn to spot fake faces.

Turning face checks into explainable stories

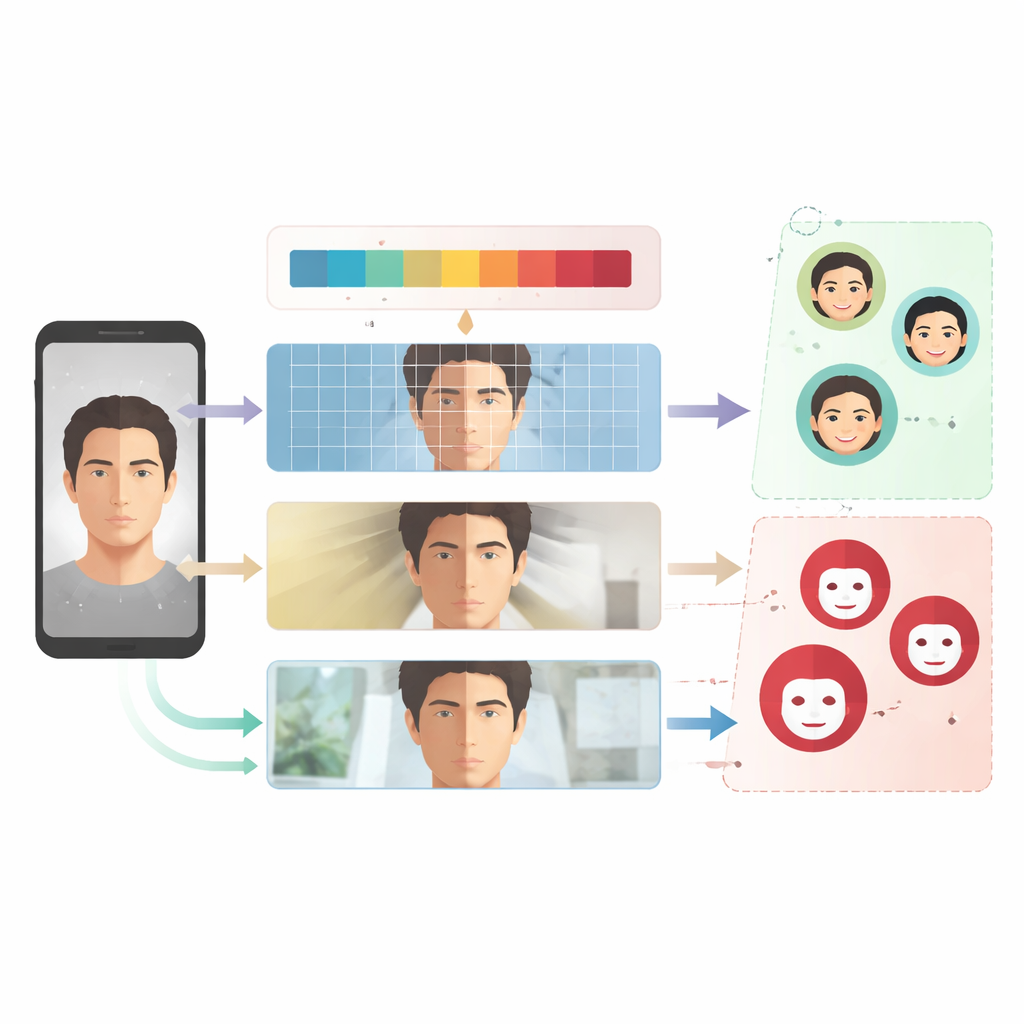

Most current anti-spoofing tools act like black boxes: they output “real” or “fake” but give little clue why. The authors instead build on a vision–language model, a type of AI that can both look at images and generate text. During training, the model not only decides whether a face is live or spoofed but also produces a short explanation describing the visual clues it used, such as odd textures, flat lighting, or reflections that do not look natural. These explanations are not just for show; they become part of the teaching signal that shapes what the model pays attention to.

Building a benchmark that thinks out loud

To study this idea in a controlled way, the team enriches four widely used face anti-spoofing datasets—covering common attacks like printed photos and replayed videos—with detailed text descriptions. Using GPT-4o, they generate two kinds of captions for each image. “Vanilla” captions offer a short, general justification, while “reasoning-style” captions walk through six clear steps: initial look, artifact detection, feature analysis, lighting and shadows, context, and final judgment. By keeping the image data and the underlying neural network fixed and changing only the style of these captions, the researchers can isolate how explanation structure affects what the model ultimately learns.
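The two caption styles can be pictured as two prompt templates applied to the same image. The sketch below is illustrative only: the six step names come from the description above, while `CaptionRequest`, `build_prompt`, and the prompt wording are hypothetical and are not the prompts the authors sent to GPT-4o.

```python
from dataclasses import dataclass

# Step names are taken from the article; everything else here is hypothetical.
REASONING_STEPS = [
    "initial look",
    "artifact detection",
    "feature analysis",
    "lighting and shadows",
    "context",
    "final judgment",
]


@dataclass
class CaptionRequest:
    label: str  # "live" or "spoof"
    style: str  # "vanilla" or "reasoning"


def build_prompt(req: CaptionRequest) -> str:
    """Return an instruction string for a captioning model (illustrative wording)."""
    if req.label not in ("live", "spoof"):
        raise ValueError(f"unknown label: {req.label!r}")
    if req.style == "vanilla":
        # Short, general justification.
        return f"In one or two sentences, explain why this face image is {req.label}."
    if req.style == "reasoning":
        # Structured justification that walks through the six steps in order.
        steps = "\n".join(f"{i}. {name}" for i, name in enumerate(REASONING_STEPS, 1))
        return (
            f"Explain why this face image is {req.label}, "
            f"covering these steps in order:\n{steps}"
        )
    raise ValueError(f"unknown style: {req.style!r}")
```

Because only `style` changes between the two requests, the image and its label stay fixed, which mirrors the controlled comparison described above.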

How teaching with reasons changes the model

Training the system now becomes a dual task. One loss term rewards correct live/spoof decisions, and another rewards accurate explanation generation, with classification treated as the main goal. The authors also use a lightweight fine-tuning method so that only small adapter layers and output heads are updated, leaving the large pre-trained backbone largely untouched. They compare models trained only with vanilla captions to models trained with a mix of vanilla and reasoning captions across several challenging “train on some datasets, test on a different one” protocols. This setup mimics real-world conditions, where future attacks may not look exactly like past ones.
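A minimal sketch of such a two-term objective follows, assuming a simple weighted sum in which the explanation term is scaled down so that classification dominates. The function names and the `caption_weight` value of 0.5 are assumptions made for illustration; the article does not state the exact form or weighting the authors used.

```python
import math


def classification_loss(p_spoof: float, is_spoof: bool) -> float:
    """Binary cross-entropy for one image, given the predicted spoof probability."""
    eps = 1e-12
    p = min(max(p_spoof, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -math.log(p if is_spoof else 1.0 - p)


def caption_loss(token_probs: list[float]) -> float:
    """Mean negative log-likelihood the model assigns to the reference caption tokens."""
    eps = 1e-12
    return -sum(math.log(max(p, eps)) for p in token_probs) / len(token_probs)


def total_loss(
    p_spoof: float,
    is_spoof: bool,
    token_probs: list[float],
    caption_weight: float = 0.5,  # hypothetical value; below 1 keeps classification primary
) -> float:
    """Weighted sum of the live/spoof term and the explanation term."""
    return classification_loss(p_spoof, is_spoof) + caption_weight * caption_loss(token_probs)
```

With a weight below 1, a poor explanation still raises the loss, but a wrong live/spoof decision costs more, which is one way to treat classification as the main goal.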

When explanations help—and when they hurt

Across many tests, especially the standard MCIO leave-one-out protocols (train on three of the four datasets, test on the held-out fourth), models exposed to reasoning-style captions detect spoofs more accurately and make fewer mistakes on unfamiliar datasets. In some cases, they even outperform specialized state-of-the-art defenses designed for cross-dataset robustness. The reasoning-guided models appear to focus more consistently on spoof-specific clues such as texture irregularities, pixelation, or unnatural lighting. However, the study also uncovers a downside: if the explanations repeatedly emphasize features that are not important for a new kind of attack (for example, print-like textures when the new attack uses 3D masks), the model can inherit this bias and stumble. The “teaching script” can mislead as well as help.
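The leave-one-out idea is easy to state in code. In this sketch the single letters are just shorthand taken from the protocol name "MCIO" for the four datasets, and `leave_one_out` is a hypothetical helper rather than anything from the authors' codebase.

```python
def leave_one_out(datasets):
    """Yield (train_sets, test_set) pairs, holding out each dataset exactly once."""
    for held_out in datasets:
        train = [d for d in datasets if d != held_out]
        yield train, held_out


# One protocol per held-out dataset: four datasets give four train/test splits.
protocols = list(leave_one_out(["M", "C", "I", "O"]))

for train, test in protocols:
    print(f"train on {'+'.join(train)}, test on {test}")
```

Each split keeps the test dataset entirely out of training, so a good score reflects generalization to unseen capture conditions rather than memorization.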

Limits of measuring explanations

The authors note that they judge explanation quality mainly by whether the text implies the correct live or spoof label, using another language model to read and interpret the generated explanations. This does not establish whether the reasoning is faithful to what the vision system actually saw, or whether it is truly helpful to human operators monitoring security systems. They also highlight that using powerful language models to create and interpret explanations may introduce subtle biases, such as overemphasizing frequently mentioned visual patterns, that could affect fairness or performance across demographic groups and recording conditions.
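The kind of label-consistency check described here can be sketched as follows. The judge is deliberately pluggable: the study used a language model for that role, whereas `keyword_judge` below is a toy stand-in invented for this example so the code runs on its own. It is not the authors' method, and the cue words are assumptions.

```python
from typing import Callable, Sequence


def keyword_judge(explanation: str) -> str:
    """Toy stand-in for a language-model judge (NOT the authors' method)."""
    text = explanation.lower()
    spoof_cues = ("spoof", "fake", "printed", "replay", "mask")  # hypothetical cue list
    return "spoof" if any(cue in text for cue in spoof_cues) else "live"


def label_consistency(
    explanations: Sequence[str],
    labels: Sequence[str],
    judge: Callable[[str], str] = keyword_judge,
) -> float:
    """Fraction of explanations whose implied label matches the ground truth."""
    if len(explanations) != len(labels):
        raise ValueError("explanations and labels must have the same length")
    if not labels:
        raise ValueError("need at least one example")
    hits = sum(judge(e) == y for e, y in zip(explanations, labels))
    return hits / len(labels)
```

A score of this kind only says that the text points to the right answer. It cannot tell whether the explanation describes what the model actually relied on, which is the gap the authors acknowledge.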

What this means for safer face recognition

In everyday terms, this work shows that getting an AI system to “say why” during training can change how it learns to spot fake faces—and often improves its ability to handle new, unseen attacks—without making the model bigger or more complex. At the same time, the kind of reasoning we teach matters: structured explanations can act like a steering wheel, but if they point toward the wrong clues, the model may veer off course when attacks change. The study proposes viewing explanations not just as user-friendly add-ons, but as powerful dials that engineers can tune to trade off robustness, interpretability, and bias in future security systems.

Citation: Min, J., Lim, K., Kim, M. et al. Analyzing the effect of reasoning-based supervision on face anti-spoofing. Sci Rep 16, 13360 (2026). https://doi.org/10.1038/s41598-026-43800-5

Keywords: face anti-spoofing, explainable AI, vision-language models, biometric security, presentation attacks