Clear Sky Science · en

AI-powered BlindSpot VisionGuide system on raspberry Pi for enhancing independence of visually impaired users

Helping People Rely Less on Sight

For millions of people with limited or no vision, everyday tasks that sighted people take for granted—recognizing a friend’s face, understanding what is in a room, or simply catching up on the news—can be exhausting or impossible without help. This paper introduces BlindSpot‑VisionGuide, a compact system built on a low‑cost Raspberry Pi computer that listens for voice commands, looks through a camera, and responds with spoken guidance. By turning visual information into sound in real time, it aims to give visually impaired users more independence at home, at work, and on the move.

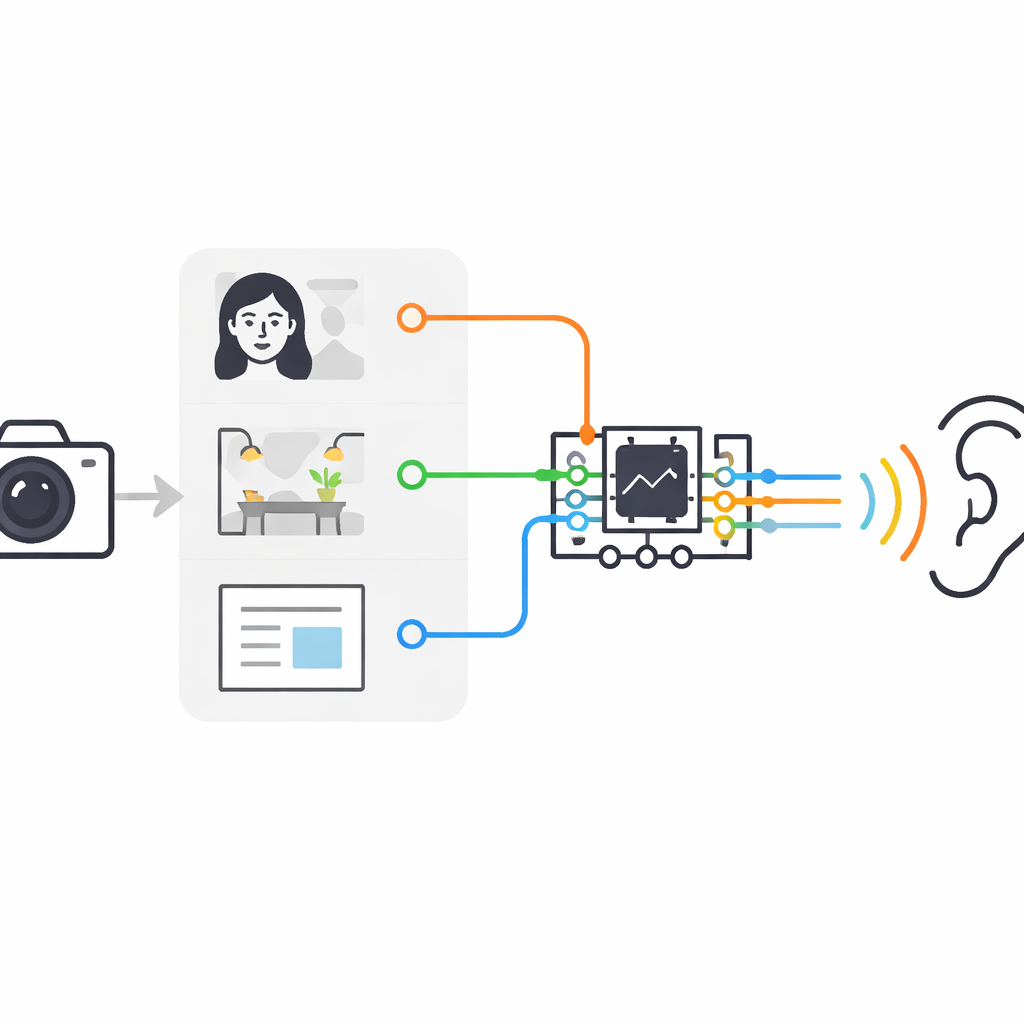

One Small Box, Three Helpful Abilities

BlindSpot‑VisionGuide bundles three main abilities into a single device. First, it can recognize familiar faces, so a user can tell who has entered the room without waiting for an introduction. Second, it can describe what the camera sees in plain language, such as a person sitting at a table or a bird resting on a railing. Third, it can fetch headlines and short summaries from online newspapers and read them aloud. All of this runs on a Raspberry Pi 5, a credit‑card‑sized computer often used in hobby projects, paired with a small camera, microphone, and speaker or headphones.

Talking Instead of Tapping

Instead of screens, buttons, or touch gestures, the system relies almost entirely on voice. The Raspberry Pi continuously listens for simple spoken commands like “run the face module” or “run the newspaper module.” When the user triggers face recognition, the camera captures live video, the software isolates any faces, compares them to a small on‑device gallery of known people, and then speaks the closest match. For scene description, the user gets a brief pause to aim the camera; the system then takes a picture and passes it through an advanced image‑to‑text model that creates a natural‑sounding sentence, which is turned into speech. For news, the system contacts an online service, filters recent articles—by country, date, and other options—and then reads each title and summary in a steady, clear voice.
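
The voice-command routing described above can be sketched roughly as follows. This is only an illustration, not the authors' code: it assumes the speech recognizer has already produced a text transcript, and the keyword table and the three `run_*` functions are hypothetical stand-ins for the real modules.

```python
from typing import Callable, Dict, Optional

# Hypothetical module entry points (assumptions for illustration only).
# Real ones would drive the camera, the captioning model, or the news service.
def run_face_module() -> str:
    return "face recognition started"

def run_scene_module() -> str:
    return "scene description started"

def run_newspaper_module() -> str:
    return "news reading started"

# Keyword -> handler table. The keywords are assumed from the example
# phrases quoted in the article ("run the face module", ...).
COMMANDS: Dict[str, Callable[[], str]] = {
    "face": run_face_module,
    "scene": run_scene_module,
    "newspaper": run_newspaper_module,
}

def dispatch(transcript: str) -> Optional[str]:
    """Run the first module whose keyword appears in the recognized text."""
    # Lowercase and strip simple punctuation so "Face," still matches "face".
    words = [w.strip(".,!?") for w in transcript.lower().split()]
    for keyword, handler in COMMANDS.items():
        if keyword in words:
            return handler()
    return None  # unrecognized command; the caller could ask the user to repeat
```

For example, `dispatch("Run the face module")` calls the face handler, while an unrelated sentence returns `None`. Because each call starts exactly one handler, this design also fits the one-module-at-a-time approach the article describes.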

How the Smart Pieces Work Together

Behind the scenes, BlindSpot‑VisionGuide leans on modern artificial intelligence tools but uses them in a very practical, engineering‑focused way. For face recognition, it converts each face into a compact numerical “fingerprint” using a deep network and then compares this fingerprint to stored examples. In tests with 20 volunteers and 300 images, it correctly recognized people about 94% of the time and typically answered in under a quarter of a second per face. For image captions, it uses a powerful model called BLIP, which combines a vision module and a language module. This produces rich descriptions, but on the small Raspberry Pi it needs around 4.5 seconds to speak a caption—fast enough for understanding a static scene, but not yet for split‑second decisions like crossing a busy street. The newspaper module relies on web programming interfaces rather than fragile web scraping, allowing reliable access to up‑to‑date news while limiting the amount of personal data sent over the network.
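
To make the "fingerprint" comparison concrete, here is a minimal sketch of matching one face embedding against a small gallery. The article does not say which distance measure or cut-off the system uses, so the cosine similarity, the `threshold` value, and the toy three-number vectors are all assumptions chosen for illustration. Real embeddings from a deep network would be much longer.

```python
import math
from typing import Dict, List, Optional, Tuple

def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)

def best_match(
    query: List[float],
    gallery: Dict[str, List[float]],
    threshold: float = 0.8,  # assumed cut-off, not taken from the paper
) -> Tuple[Optional[str], float]:
    """Return the most similar gallery name, or None if nothing is close enough."""
    best_name: Optional[str] = None
    best_score = -1.0
    for name, embedding in gallery.items():
        score = cosine_similarity(query, embedding)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score  # unknown face: better to say so than to guess
    return best_name, best_score

# Toy gallery with made-up embeddings (illustrative values only).
gallery = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}
name, score = best_match([0.9, 0.1, 0.0], gallery)
```

The threshold is a design choice rather than something the article reports. Without one, the system would always announce some name, even for a stranger.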

Balancing Speed, Power, and Privacy

A key challenge is fitting all three abilities into a tiny, low‑power computer without relying on distant cloud servers. The authors treat this as a systems‑engineering problem rather than a race for ever larger neural networks. Only one module runs at a time, and the modules share the camera, microphone, and speech engine, which keeps memory use and battery drain under control. Face recognition and scene description work fully offline once the models are stored on the device, which helps protect user privacy. The only regular internet use is for fetching fresh news, and even there the system can cache articles so that they can be replayed later without a connection. In user tests, 15 visually impaired participants rated the overall usability as “excellent” on a standard questionnaire, with high task‑success rates and relatively low mental workload.
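
The news caching mentioned above could look something like the sketch below. It is a guess at the general pattern, not the system's actual code: the `load_articles` helper, the JSON cache file, and the `fetch` callable (standing in for whichever news service is used) are all hypothetical.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List

Article = Dict[str, str]  # e.g. {"title": ..., "summary": ...}

def load_articles(
    fetch: Callable[[], List[Article]],
    cache_file: Path,
) -> List[Article]:
    """Fetch fresh articles when online; fall back to the cached copy when not."""
    try:
        articles = fetch()
    except OSError:
        # Network failures (ConnectionError, urllib's URLError, timeouts)
        # are subclasses of OSError, so this branch covers "no connection".
        if cache_file.exists():
            return json.loads(cache_file.read_text(encoding="utf-8"))
        return []  # offline and nothing cached yet
    # Online: remember the fresh articles so they can be replayed later.
    cache_file.write_text(json.dumps(articles), encoding="utf-8")
    return articles
```

With this arrangement, the last successfully downloaded headlines stay available for reading aloud even when the device later has no connection.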

What This Means for Everyday Life

In simple terms, BlindSpot‑VisionGuide shows that a low‑cost, pocket‑sized computer can offer a suite of useful “eyes and ears” for someone who cannot rely on vision. It does not invent new learning algorithms; instead, it shows that existing face, language, and speech tools can be carefully combined to run locally, respond quickly enough for many everyday situations, and respect user privacy. The system is not yet suited to fast, safety‑critical navigation, it still needs an internet connection for live news, and its speech interface is English‑only. But as hardware accelerators, faster models, and multilingual voices become more common, this kind of integrated, voice‑driven box could become a practical companion for visually impaired users, helping them recognize people, understand their surroundings, and stay informed with far less dependence on others.

Citation: Sudha, M., Swaminathan, S., Suba, M. et al. AI-powered BlindSpot VisionGuide system on raspberry Pi for enhancing independence of visually impaired users. Sci Rep 16, 11316 (2026). https://doi.org/10.1038/s41598-026-39724-9

Keywords: assistive technology, visual impairment, Raspberry Pi, computer vision, text-to-speech