Clear Sky Science

Evaluating LLMs' divergent thinking capabilities for scientific idea generation with minimal context

Why This Matters for Everyday Science Fans

Much of the excitement around modern AI comes from its apparent brilliance on tests and exams. But scientific breakthroughs rarely come from answering quiz questions; they start with odd, half-formed ideas sparked by a single word or hunch. This paper asks a down-to-earth question with big consequences: when you give today’s large language models just a tiny hint—a lone scientific keyword—can they actually brainstorm fresh, plausible research ideas, and how does that "creative spark" relate to the usual measures of AI intelligence?

From Test-Solving Machines to Idea Companions

Most current benchmarks treat AI as a super student: models are fed rich context—like full abstracts or problem descriptions—and then graded on whether they find the right answer. That setup mainly measures convergent thinking: narrowing options down to a single solution. The authors argue that early stages of science look very different. A scientist often starts with almost nothing but a topic word, then free-associates dozens of possible questions and directions. To capture this kind of divergent thinking in machines, they introduce LiveIdeaBench, a new benchmark that deliberately strips context down to just one scientific keyword—such as “microscopy” or “weather forecasting”—and asks models to suggest short, concrete research ideas.

How the New Benchmark Works

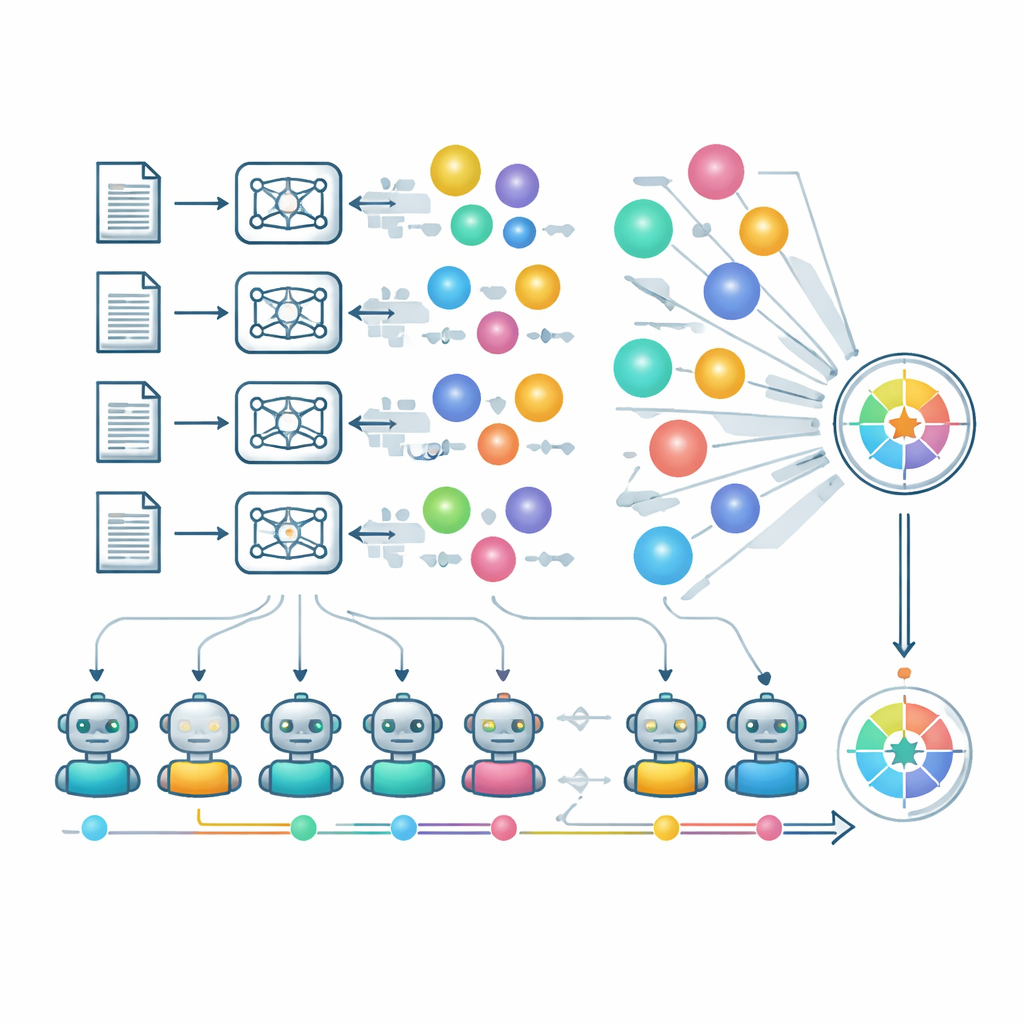

LiveIdeaBench spans 1180 popular scientific keywords across 22 fields, from physics to medicine and social science. For each keyword, more than 40 leading language models are prompted to generate compact scientific ideas. A dynamic panel of top-performing models then serves as "judges," and the output is assessed along five creativity-inspired dimensions: how original each idea is, whether it seems feasible, how clearly it is expressed, how many distinct ideas the model can produce from the same cue (fluency), and how consistently the model performs across very different topics (flexibility). Multiple judges score each idea, and scores are averaged to reduce individual model bias. The benchmark is updated regularly, both in the keywords it uses and the models it evaluates, so it follows the moving frontier of current science and AI capability.
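
The averaging step can be illustrated with a minimal Python sketch. Everything specific here is an assumption made for illustration, not the benchmark's actual code: the function name `average_judge_scores`, the judge names, the record layout, and the 1 to 10 score scale are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def average_judge_scores(ratings):
    """Average the scores that several judges gave one idea, per dimension.

    `ratings` is a list of dicts with keys 'judge', 'dimension', 'score'.
    This record layout is a hypothetical one chosen for the example.
    """
    by_dimension = defaultdict(list)
    for rating in ratings:
        by_dimension[rating["dimension"]].append(rating["score"])
    # One averaged score per dimension, so no single judge dominates.
    return {dim: mean(scores) for dim, scores in by_dimension.items()}


# Made-up ratings for a single idea (assumed 1-10 scale, hypothetical judges).
ratings = [
    {"judge": "judge_a", "dimension": "originality", "score": 7},
    {"judge": "judge_b", "dimension": "originality", "score": 5},
    {"judge": "judge_c", "dimension": "originality", "score": 6},
    {"judge": "judge_a", "dimension": "feasibility", "score": 8},
    {"judge": "judge_b", "dimension": "feasibility", "score": 6},
    {"judge": "judge_c", "dimension": "feasibility", "score": 7},
]

averaged = average_judge_scores(ratings)
print(averaged)
```

In this toy case one judge rates originality noticeably higher than another, and the average lands between them, which is the sense in which pooling several judges dampens any one model's bias.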

What the Results Reveal About AI Creativity

The authors’ large-scale tests show that performance on LiveIdeaBench looks strikingly different from rankings on standard "general intelligence" leaderboards. Some well-known models that excel at math, coding, and reasoning do not shine at generating varied, novel scientific ideas from minimal prompts. Others with modest general scores, including comparatively small models, show surprisingly strong divergent thinking, sometimes matching or even beating leading systems on creativity-related measures. The study also finds a trade-off between how bold and how safe ideas are: models that suggest very original directions may be weaker on feasibility, while others favor more practical but less surprising ideas. Importantly, longer, more elaborate answers do not reliably produce better ideas; sheer volume of words is only weakly related to quality.

Peeking Inside the Evaluation Mechanics

To approximate expert review at scale, the authors lean heavily on "LLMs as judges." A curated group of strong models independently rates originality, feasibility, and clarity, and a separate process checks whether multiple ideas from the same model and keyword are truly different or just rephrasings. Flexibility is captured by looking at how a model’s scores hold up in its weaker areas, not only in familiar domains. The team also analyzes how architecture, training strategies, and safety policies influence creative output. Models with stricter safety filters sometimes refuse to answer for certain sensitive keywords, which hurts their scores even though the behavior is responsible. The authors note that using AI judges comes with risks, such as sycophancy and blind spots in unfamiliar scientific territory, but they report preliminary agreement with human experts in a specialized mathematics domain.
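
One way to picture the "weaker areas" idea behind flexibility is to read off a low percentile of a model's per-keyword scores instead of its average. The sketch below is only an illustration under stated assumptions: the function name `weak_area_score`, the choice of the 30th percentile, the nearest-rank method, and all the scores are hypothetical and are not taken from the paper.

```python
def weak_area_score(per_keyword_scores, percentile=30):
    """Return the score at a low percentile of a model's per-keyword scores.

    Uses the nearest-rank method. The 30th percentile is an assumed
    illustrative choice, not a value confirmed by the source.
    """
    ordered = sorted(per_keyword_scores.values())
    if not ordered:
        raise ValueError("need at least one keyword score")
    # Integer ceiling of percentile * n / 100, converted to a 0-based index.
    rank = -(-percentile * len(ordered) // 100)
    return ordered[max(0, rank - 1)]


# Made-up per-keyword averages for two hypothetical models.
specialist = {"k1": 9, "k2": 9, "k3": 9, "k4": 9, "k5": 9,
              "k6": 8, "k7": 8, "k8": 3, "k9": 2, "k10": 2}
generalist = {"k1": 6, "k2": 6, "k3": 6, "k4": 6, "k5": 6,
              "k6": 6, "k7": 7, "k8": 7, "k9": 7, "k10": 7}

for name, scores in [("specialist", specialist), ("generalist", generalist)]:
    average = sum(scores.values()) / len(scores)
    print(name, "mean:", average, "weak-area score:", weak_area_score(scores))
```

In this toy data the specialist has the higher mean but the lower weak-area score, which shows why a measure focused on a model's weakest topics can rank models differently from a plain average.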

Implications for the Future of AI-Assisted Discovery

To a non-specialist, the core takeaway is simple but powerful: being good at tests does not automatically make an AI a good partner for brainstorming new science. Divergent thinking—the ability to spin off many different, meaningful research ideas from a single hint—emerges as a partly independent skill that current benchmarks largely ignore.

Citation: Ruan, K., Wang, X., Hong, J. et al. Evaluating LLMs' divergent thinking capabilities for scientific idea generation with minimal context. Nat Commun 17, 3625 (2026). https://doi.org/10.1038/s41467-026-70245-1

Keywords: AI creativity, divergent thinking, scientific idea generation, large language models, benchmarking