Clear Sky Science

Event-based lunar optical flow egomotion estimation challenge: design and results of the ELOPE competition

Why smarter eyes matter for moon landings

As space agencies prepare to send robots and eventually people back to the Moon, landing safely in rough, shadowy terrain—especially near the poles where ice may hide in permanent darkness—becomes a major challenge. This paper explores a new kind of “smart eye” for spacecraft, called an event-based camera, and reports on a worldwide competition that tested how well different teams could use it to guide a lunar lander toward a safe touchdown.

A new way to see motion in the dark

Unlike ordinary cameras that take full pictures at fixed intervals, event-based cameras only react when the brightness at a tiny pixel changes. Each change triggers a pinpoint “event” with a precise time tag, creating a stream of sparse, rapid updates instead of bulky images. This design mimics how biological eyes work and brings key advantages for spaceflight: extremely fast response, very wide brightness range, and low power use. Those strengths are especially useful near the Moon’s South Pole, where the Sun skims the horizon, craters sit in deep shadow, and bright, reflective dust can easily overwhelm traditional sensors.
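
The per-pixel behavior described above can be sketched in a few lines of Python. This is a minimal illustration under a commonly used idealized model, in which a pixel fires whenever its log-brightness has moved by a fixed contrast threshold since its last event. The function name `generate_events`, the threshold of 0.2, and the sample values are assumptions made for this example; they are not taken from the paper or from any particular sensor.

```python
import math

def generate_events(timestamps, brightness, threshold=0.2):
    """Emit (time, polarity) events for ONE pixel under an idealized model.

    Assumption: an event fires each time the log-brightness has changed by
    `threshold` relative to the level stored at the previous event.
    """
    events = []
    reference = math.log(brightness[0])  # level remembered by the pixel
    for t, value in zip(timestamps[1:], brightness[1:]):
        delta = math.log(value) - reference
        # A large jump can cross the threshold several times in one sample.
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1  # +1 brighter, -1 darker
            events.append((t, polarity))
            reference += polarity * threshold
            delta = math.log(value) - reference
    return events

# Hypothetical brightness trace: steady, then brighter, then darker.
timestamps = [0.000, 0.001, 0.002, 0.003, 0.004]
brightness = [100.0, 100.0, 150.0, 150.0, 90.0]
events = generate_events(timestamps, brightness)
print(events)
```

With these sample values the pixel stays silent while the brightness is constant, reports two "brighter" events at the jump to 150, and two "darker" events at the drop to 90. An unchanging scene therefore costs no data at all, which is the source of the sparse stream described above.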

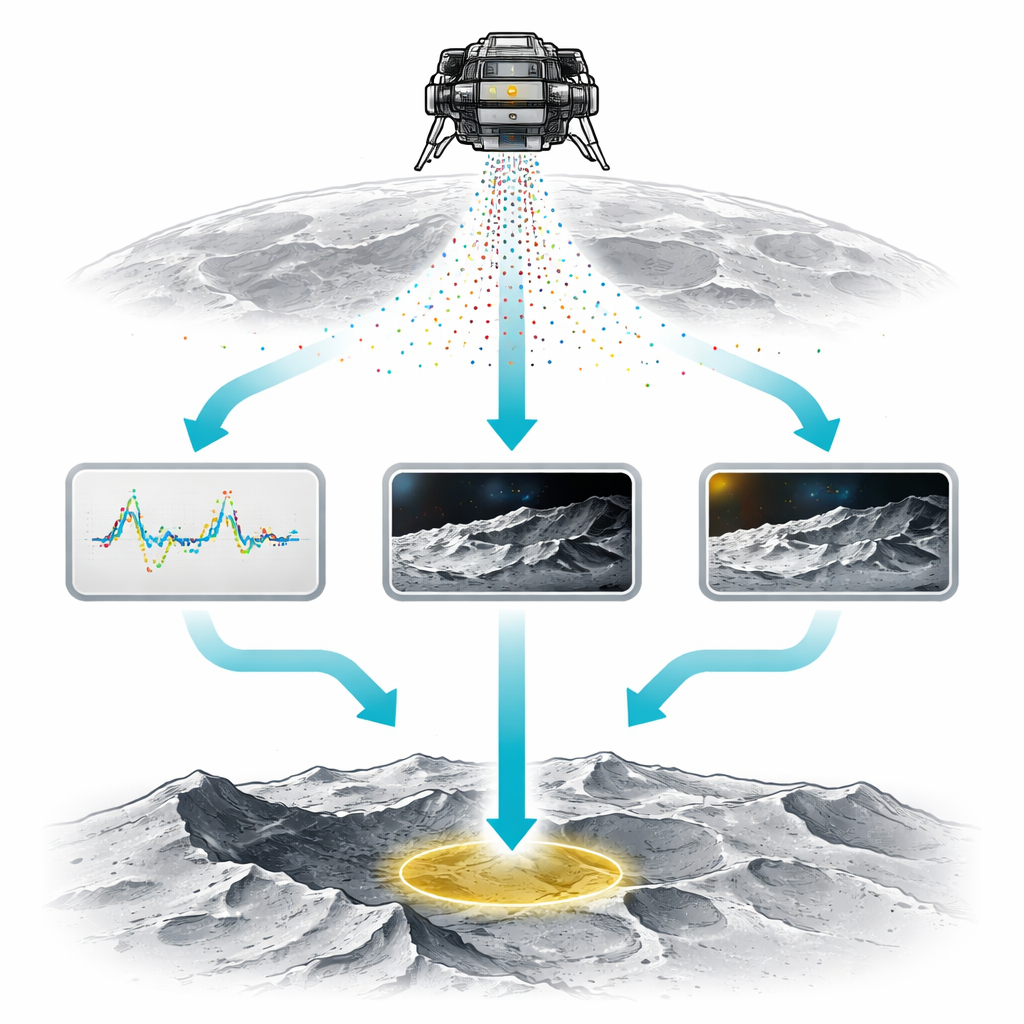

Building a realistic digital moon playground

Because real event-based data from the Moon do not yet exist, the researchers created the ELOPE dataset and challenge. Using specialized software, they first simulated fuel-efficient landing paths for a spacecraft similar to the Apollo lander, including both ordinary descents and more complex “divert” maneuvers where the touchdown site changes late in the approach. A planetary rendering tool then generated detailed, photo-like views of the surface along these paths under different lighting conditions, and an event-camera simulator converted the image sequences into streams of events. Each sequence came with perfect “answer keys” for the lander’s true motion, plus virtual readings from a motion sensor and a laser rangefinder, allowing competitors to test how accurately their methods could reconstruct the lander’s speed and direction.

A global contest of smart navigation ideas

The ELOPE competition drew 44 teams from universities, industry, and independent researchers, with 21 reaching the final scoreboard and submitting 132 solutions. All teams had to estimate the lander’s three‑dimensional velocity over time from the same synthetic sensor data. The organizers also provided a strong frame-based reference method that first converted events into images and then applied standard optical flow techniques, challenging competitors to do better by exploiting the full potential of event-based sensing. Only three teams beat this baseline, revealing both the promise and current limits of the technology.
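
The first step of a frame-based approach, turning events into images, can be sketched as follows. This is only an illustration of the general idea, not the organizers' baseline: the function name `events_to_frames`, the `(t, x, y, polarity)` tuple layout, the integer microsecond timestamps, and the 10 ms window are all assumptions made for this example.

```python
def events_to_frames(events, width, height, window_us):
    """Accumulate time-sorted (t_us, x, y, polarity) events into signed count images.

    Each frame covers one fixed-duration window of `window_us` microseconds.
    """
    if not events:
        return []
    t0 = events[0][0]
    n_frames = (events[-1][0] - t0) // window_us + 1
    frames = [[[0] * width for _ in range(height)] for _ in range(n_frames)]
    for t, x, y, polarity in events:
        # Integer division picks the window; polarity adds or subtracts one.
        frames[(t - t0) // window_us][y][x] += polarity
    return frames

# Hypothetical events on a tiny 4x2 sensor.
events = [
    (0, 1, 0, +1), (4_000, 1, 0, +1), (9_000, 2, 1, -1),  # first 10 ms
    (12_000, 2, 0, +1), (19_999, 2, 0, +1),               # second 10 ms
]
frames = events_to_frames(events, width=4, height=2, window_us=10_000)
for frame in frames:
    print(frame)
```

Once events are in this image form, standard optical flow tools can be applied to consecutive frames. The trade-off is that the precise timing of each event inside a window is discarded.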

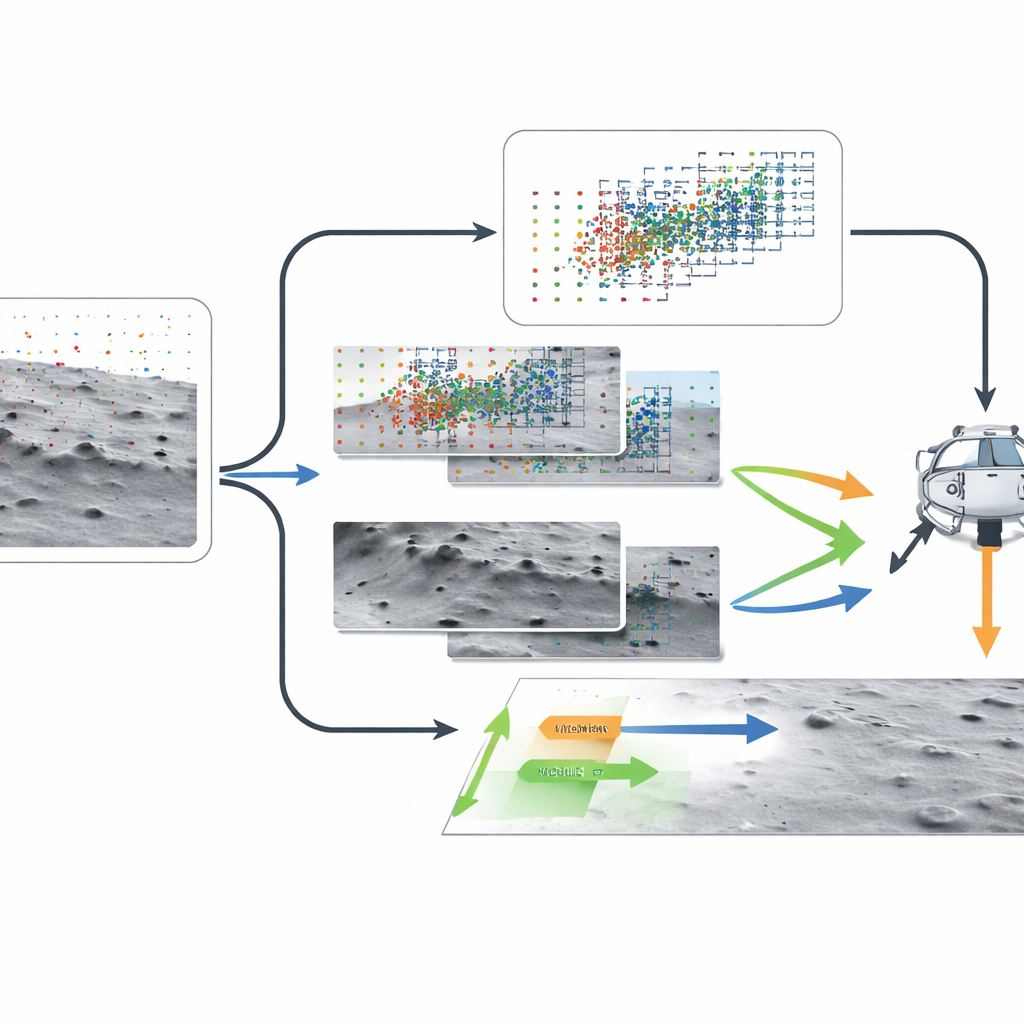

Three winning paths to reading motion

The top team, SOMIS-LAB, leaned fully into the event-only view of the world. Instead of bundling events into fixed time slices, their method adjusted the time window on the fly based on how many events arrived, then warped the events according to trial motion guesses and judged how “sharp” the resulting pattern looked in the frequency domain. By seeking the motion that made edges in the scene as crisp as possible, they achieved the best accuracy, though at a computing cost that would currently be too high for use during a real landing. The second- and third-place teams, HRI and LUNARIS, took a more traditional route: they binned events into short-duration frames and used established computer vision tools. One of the two treated the lunar surface as nearly flat and found the best overall transformation between successive frames; the other estimated detailed per-pixel motion, removed the part caused by the lander’s rotation using motion-sensor data, and combined the remainder with distance information to infer the lander’s speed. Both approaches were fast enough for real-time use and still outperformed the baseline.
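
The "warp, then score sharpness" idea can be illustrated with a toy example. This is a simplified sketch and not the winning team's method: it assumes a single image-plane translation for all events, scores sharpness by the variance of the event-count image rather than in the frequency domain, and tries candidates by brute-force grid search. The names `warp_and_accumulate`, `sharpness`, and `best_flow`, as well as the synthetic data, are hypothetical.

```python
from itertools import product

def warp_and_accumulate(events, flow, width, height, t_ref=0.0):
    """Shift each (x, y, t) event back along a trial flow (pixels/s) and count hits per pixel."""
    vx, vy = flow
    image = [[0] * width for _ in range(height)]
    for x, y, t in events:
        xw = round(x - vx * (t - t_ref))
        yw = round(y - vy * (t - t_ref))
        if 0 <= xw < width and 0 <= yw < height:  # drop events warped off the image
            image[yw][xw] += 1
    return image

def sharpness(image):
    """Variance of the count image: higher when events pile up on few pixels."""
    values = [v for row in image for v in row]
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def best_flow(events, candidates, width, height):
    """Return the candidate flow whose warped image scores the highest sharpness."""
    return max(candidates,
               key=lambda f: sharpness(warp_and_accumulate(events, f, width, height)))

# Synthetic data: three scene points drifting at (20, -10) pixels/s for 0.5 s.
# Sub-pixel positions are kept for simplicity; a real sensor reports integer pixels.
true_flow = (20.0, -10.0)
points = [(5, 20), (12, 25), (20, 18)]
times = [i * 0.01 for i in range(50)]
events = [(px + true_flow[0] * t, py + true_flow[1] * t, t)
          for px, py in points for t in times]

candidates = list(product([-20.0, -10.0, 0.0, 10.0, 20.0], repeat=2))
estimate = best_flow(events, candidates, width=40, height=40)
print(estimate)
```

When the trial motion matches the true one, every event from a given scene point lands on the same pixel and the count image becomes as concentrated as possible. Wrong guesses smear the events into streaks and lower the score. Searching over many candidates in this way also hints at why such methods can be computationally expensive.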

What we learn from difficult cases

By studying where all methods struggled, the authors found that the hardest trajectories were those with strong lighting problems—oversaturated or poorly exposed source images, which produced few meaningful events once converted. They also saw that complex divert maneuvers, with sharper turns and attitude changes, were generally more difficult to reconstruct than straightforward descents. Interestingly, many teams experimented with deep learning but often reverted to classical vision methods for their final submissions, citing challenges such as sparse events, limited training data, and the need for robust generalization. A post-competition survey also revealed that most participants converted events into frames with fixed timing, underscoring how rare the fully event-driven strategy of the winning team still is.
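
The difference between fixed-timing frames and an event-driven window can be made concrete with a small sketch. The two helper functions, the integer microsecond timestamps, and the slice sizes below are assumptions chosen for illustration; the article does not specify the exact rule the winning team used to adapt its window.

```python
from itertools import groupby

def slice_by_time(timestamps_us, window_us):
    """Split sorted timestamps into fixed-duration slices (empty slices are skipped)."""
    t0 = timestamps_us[0]
    return [list(group)
            for _, group in groupby(timestamps_us, key=lambda t: (t - t0) // window_us)]

def slice_by_count(timestamps_us, events_per_slice):
    """Split sorted timestamps into slices holding a fixed number of events."""
    return [timestamps_us[i:i + events_per_slice]
            for i in range(0, len(timestamps_us), events_per_slice)]

# Hypothetical stream: a quiet start followed by a burst of activity.
timestamps = [0, 3_000, 7_000, 10_000, 10_100, 10_200, 10_300, 10_400, 10_500]

by_time = slice_by_time(timestamps, window_us=10_000)
by_count = slice_by_count(timestamps, events_per_slice=3)

print([len(s) for s in by_time])            # events per fixed-time slice
print([s[-1] - s[0] for s in by_count])     # duration of each fixed-count slice
```

With fixed timing, the number of events per slice swings with scene activity (3 and then 6 here). With fixed counts, every slice carries the same amount of information while its duration shrinks from 7,000 to 200 microseconds as activity picks up.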

What this means for future lunar missions

The study concludes that event-based cameras are strong contenders for future lunar landing systems, particularly in demanding polar regions. Even with a synthetic dataset and the added handicap of missing camera calibration details, three teams beat a carefully crafted frame-based reference method. The most accurate solution showed that fully embracing the event stream can yield excellent motion estimates, while fast frame-based hybrids demonstrated that practical, real-time systems are already within reach. Together, these results mark an important step toward landers that can “see” rapid motion and harsh lighting more like a living eye, improving the chances of safe, precise touchdowns on the Moon and beyond.

Citation: Fanti, P., Williams, L.B.S., Dvořák, O. et al. Event-based lunar optical flow egomotion estimation challenge: design and results of the ELOPE competition. npj Space Explor. 2, 18 (2026). https://doi.org/10.1038/s44453-026-00033-0

Keywords: event-based vision, lunar landing, egomotion estimation, neuromorphic sensors, autonomous navigation