Clear Sky Science

From computation to environmental cost: the resource burden of artificial intelligence

Why the metals inside our smart machines matter

Behind every friendly chatbot or image generator lies a vast hidden world of metal, mining, and manufacturing. This paper peels back the digital curtain to show that artificial intelligence (AI) is not just about electricity and data. It is also about the physical hardware that makes it possible, from powerful chips to the many chemical elements they contain. As AI models grow larger and more capable, the study asks a simple but pressing question: what is the material cost of this progress for the planet and for people living near mines and factories?

From rising demand to real-world limits

The authors begin by placing AI inside a broader story of resource use. In recent years, data centers and specialized chips have become central to everything from streaming to cloud services, and now to AI. Technology giants are pouring hundreds of billions of dollars into new data centers, and AI workloads are expected to dominate their use by the end of the decade. So far, public debate has focused mainly on how much electricity and water these centers consume. But the machines at their core rely on a long list of metals and minerals, some of which are scarce, hard to mine, or toxic when mishandled. The study argues that to judge whether AI is truly sustainable, we must move beyond counting kilowatt-hours and start counting kilograms of material as well.

What a single AI chip is really made of

To ground this abstract issue in hard facts, the researchers took apart a widely used graphics processing unit, the Nvidia A100, and chemically analyzed its contents. They identified 32 different elements, including common metals like copper, iron, and tin, along with smaller amounts of nickel, barium, zinc, and several precious metals such as gold and silver. Strikingly, about 90 percent of the chip’s mass is made up of heavy metals, and roughly 93 percent consists of elements classified as hazardous under typical exposure conditions. Different parts of the chip have distinct roles and recipes: the heatsink is almost pure copper for cooling, the circuit board mixes copper and iron with other additives, while the tiny computing core concentrates silicon and a variety of other metals.

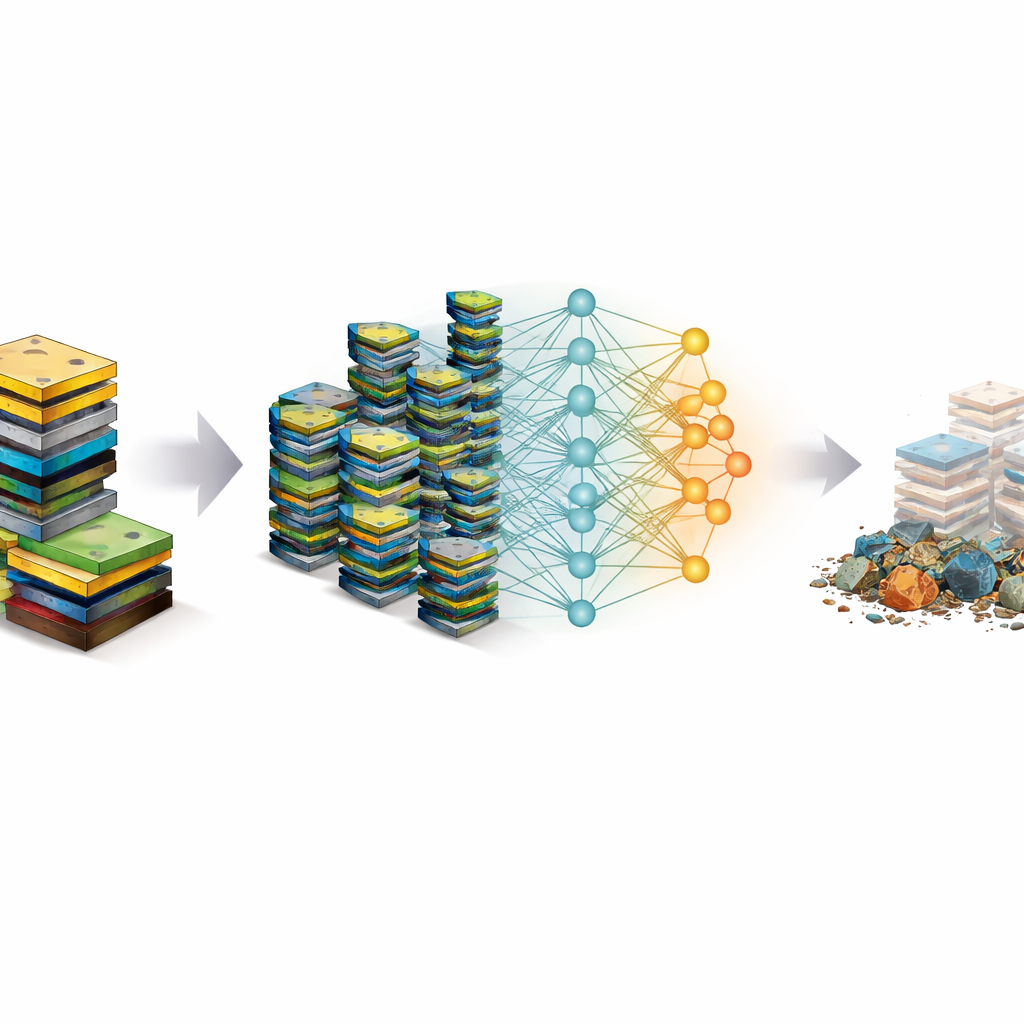

How many chips it takes to teach an AI to talk

Next, the study links the digital workload of training large AI models to the physical wear and tear on hardware. Training effort is measured in FLOPs (floating-point operations), a standard count of how many basic calculations a model performs. By estimating how many FLOPs the Nvidia A100 can deliver over its useful life, and comparing this with the compute budgets reported or inferred for major models, the authors calculate how many GPUs are effectively “used up” by a single training run. Under realistic assumptions about chip lifetime and efficiency, training a state-of-the-art language model like GPT-4 consumes the equivalent of thousands of A100 units. For a set of nine prominent models, the total material demand falls in the range of several tonnes of extracted resources, most of them hazardous metals that pose risks during mining and disposal.
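The arithmetic behind this kind of estimate can be sketched in a few lines of Python. This is a minimal illustration, not the authors' actual calculation: the function names are hypothetical, and the training budget, utilization, and lifetime are assumed values chosen only to show the order of magnitude. The one fixed input is the A100's peak throughput of 312 teraFLOP/s, which is Nvidia's quoted figure for dense BF16 tensor-core operations.

```python
# Back-of-the-envelope sketch: how many GPU lifetimes a training run consumes.
# Inputs marked "assumed" are illustrative and are not taken from the study.

SECONDS_PER_YEAR = 365 * 24 * 3600


def lifetime_flops(peak_flops_per_s: float, utilization: float,
                   lifetime_years: float) -> float:
    """Total floating-point operations one GPU delivers over its service life."""
    return peak_flops_per_s * utilization * lifetime_years * SECONDS_PER_YEAR


def gpus_used_up(training_flops: float, peak_flops_per_s: float,
                 utilization: float, lifetime_years: float) -> float:
    """Training compute divided by the compute one GPU delivers in its lifetime."""
    return training_flops / lifetime_flops(peak_flops_per_s, utilization, lifetime_years)


A100_PEAK = 312e12        # Nvidia's quoted dense BF16 tensor-core peak, in FLOP/s
TRAINING_FLOPS = 2.1e25   # assumed: a public third-party estimate for a GPT-4-scale run
UTILIZATION = 0.35        # assumed: fraction of peak throughput actually achieved
LIFETIME_YEARS = 3.0      # assumed: service life in a data center

n_gpus = gpus_used_up(TRAINING_FLOPS, A100_PEAK, UTILIZATION, LIFETIME_YEARS)
print(f"Equivalent A100 units consumed: {n_gpus:,.0f}")
```

With these assumed inputs the result is roughly two thousand units, which matches the "thousands of A100 units" scale described above. Shorter lifetimes or lower utilization push the figure higher.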

Small performance gains, big material bills

The paper then compares how model performance improves relative to this growing hardware burden. Using standard tests of math skills, general knowledge, coding, science questions, and everyday reasoning, the authors show that newer, much larger models often deliver only modest score increases compared with their predecessors, despite using tens or even hundreds of times more compute. Some abilities, such as advanced mathematical problem solving, do improve dramatically but appear especially resource-hungry. Others, like basic commonsense reasoning, show only slight gains even when training workloads soar. This pattern suggests that simply scaling up models and hardware is an increasingly costly path to progress, not only in terms of energy but also in terms of metal extraction and future e-waste.

Ways to stretch each chip further

The study also explores how better engineering might ease the material load. Two levers stand out. First, improving how fully each GPU is used during training can reduce the number of units needed; raising utilization from low to high levels can shrink GPU demand by more than half. Second, extending the typical lifetime of chips in data centers, from around one year to three or even five years, further cuts the total number that must be produced and eventually discarded. Combining these measures, the authors estimate that the effective GPU demand for training a model like GPT-4 could, in principle, be reduced by more than 90 percent. However, they caution that real-world gains depend on cooling, maintenance, and system design, and that efficiency improvements can sometimes spur more AI use overall, offsetting some of the savings.
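A short sketch shows why the two levers compound. It assumes a simplified model in which the compute needed for a training run is fixed, so the number of GPUs consumed is inversely proportional to utilization multiplied by lifetime. That model, the function name, and the specific utilization and lifetime values are illustrative assumptions, not figures from the study.

```python
# Sketch: relative GPU demand under a simple inverse-proportional model.
# Assumption: demand scales as 1 / (utilization * lifetime) for a fixed compute budget.


def relative_gpu_demand(utilization: float, lifetime_years: float,
                        base_utilization: float, base_lifetime_years: float) -> float:
    """GPU demand as a fraction of the baseline scenario's demand."""
    return (base_utilization * base_lifetime_years) / (utilization * lifetime_years)


BASE_UTIL, BASE_LIFE = 0.20, 1.0   # assumed baseline: low utilization, one-year lifetime
HIGH_UTIL, LONG_LIFE = 0.60, 5.0   # assumed improved case: high utilization, five years

# Reduction from raising utilization alone, then from both levers together.
util_only = 1 - relative_gpu_demand(HIGH_UTIL, BASE_LIFE, BASE_UTIL, BASE_LIFE)
combined = 1 - relative_gpu_demand(HIGH_UTIL, LONG_LIFE, BASE_UTIL, BASE_LIFE)

print(f"Reduction from utilization alone: {util_only:.0%}")
print(f"Reduction from both levers:       {combined:.0%}")
```

Under these assumed values, tripling utilization cuts demand by about two thirds, and adding a fivefold lifetime extension brings the combined reduction above 90 percent, in line with the ranges described above. The sketch ignores the rebound effect the authors warn about.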

What this means for the future of AI

In closing, the authors argue that AI’s environmental footprint cannot be judged by electricity meters alone. Each new generation of powerful models is built on a foundation of copper, nickel, arsenic, lead, and many other elements dug from the ground, often in regions with limited capacity to control pollution and protect workers. As performance gains from brute-force scaling begin to taper off, the study suggests a shift in priorities: toward smarter architectures, better algorithms, higher-quality data, and longer-lasting hardware, rather than simply more of the same chips. For everyday readers, the message is that our digital assistants and creative tools are not weightless. They carry a hidden material cost that must be acknowledged and managed if AI is to grow without overwhelming the planet’s resource and pollution limits.

Citation: Falk, S., Kluge Corrêa, N., Luccioni, S. et al. From computation to environmental cost: the resource burden of artificial intelligence. Commun Earth Environ 7, 397 (2026). https://doi.org/10.1038/s43247-026-03537-5

Keywords: AI hardware materials, GPU environmental impact, large language model training, electronic waste, sustainable computing