Clear Sky Science

Evaluating large language models for pharmacotherapy simulations: a mixed-methods study

Why this matters for future pharmacists

As powerful chatbots become more common in classrooms and clinics, educators are asking a pressing question: can these tools safely help train future pharmacists who will manage high‑risk cancer treatments? This study dives into how four large language models (LLMs) perform when asked to run realistic drug‑therapy simulations for two serious blood cancers, offering an early safety check on technology that may soon shape how health professionals learn.

Practice without putting patients at risk

Simulation-based learning lets pharmacy students rehearse complex treatment decisions in a safe environment before they ever write a real prescription. Traditionally, these simulations are designed and run by expert faculty, which is effective but time‑consuming and hard to scale. LLMs promise something new: automatically generated, interactive cases that can adapt to a student’s answers and provide instant feedback. The authors set out to test whether this promise holds up in a demanding area: pharmacotherapy for acute myeloid leukemia (AML) and chronic myeloid leukemia (CML), two related cancers that are treated very differently.

A tough test using twin blood cancers

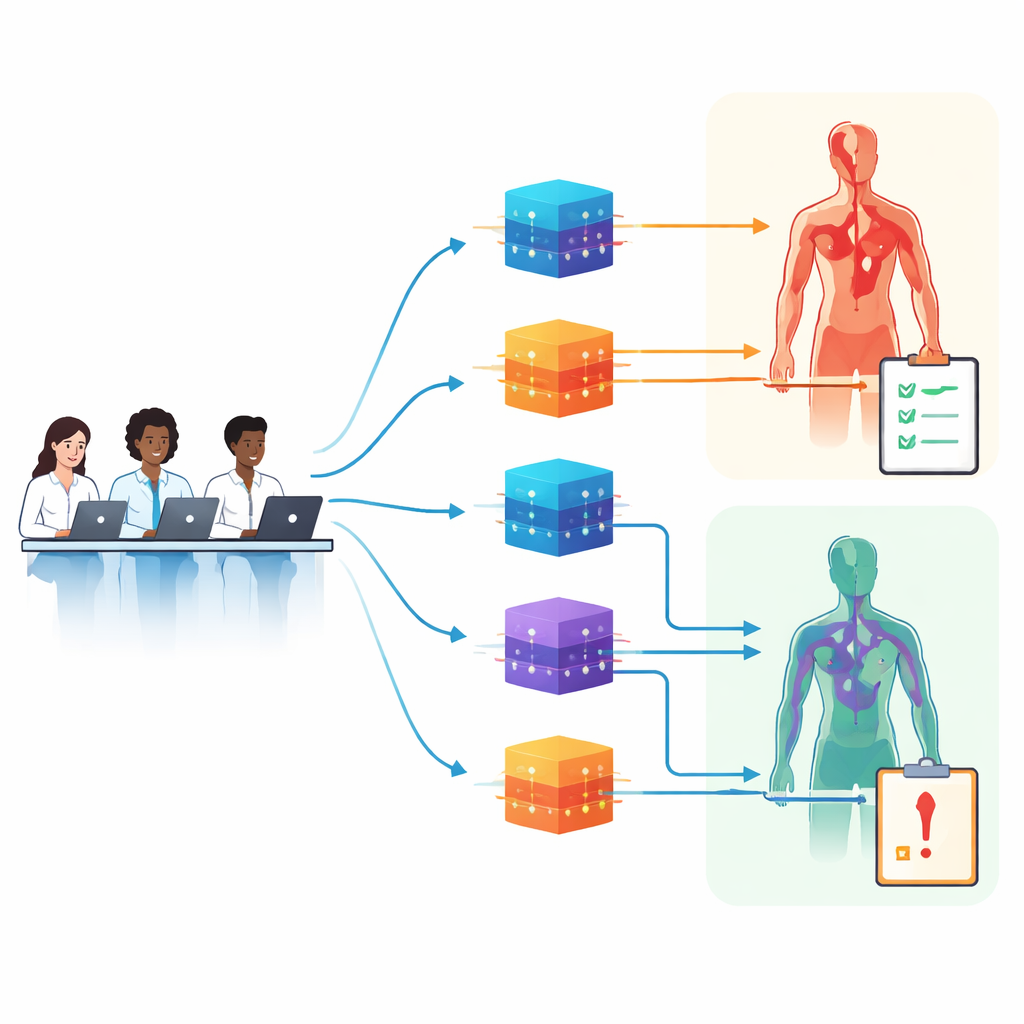

The researchers chose AML and CML because they look similar on paper but require sharply different drug strategies. That similarity creates a “stress test” for LLMs: can the models keep the diseases straight, or will they blur them together and suggest the wrong therapy? Using a carefully engineered master prompt, they asked four major platforms to generate full teaching sessions, including patient cases, questions, and step‑by‑step reasoning. One hundred four PharmD students interacted naturally with these AI‑built simulations, while panels of oncology and education experts rated each session on three fronts: how realistic and guideline‑aligned the clinical content was, how well the reasoning was modeled, and how sound the instructional design appeared.

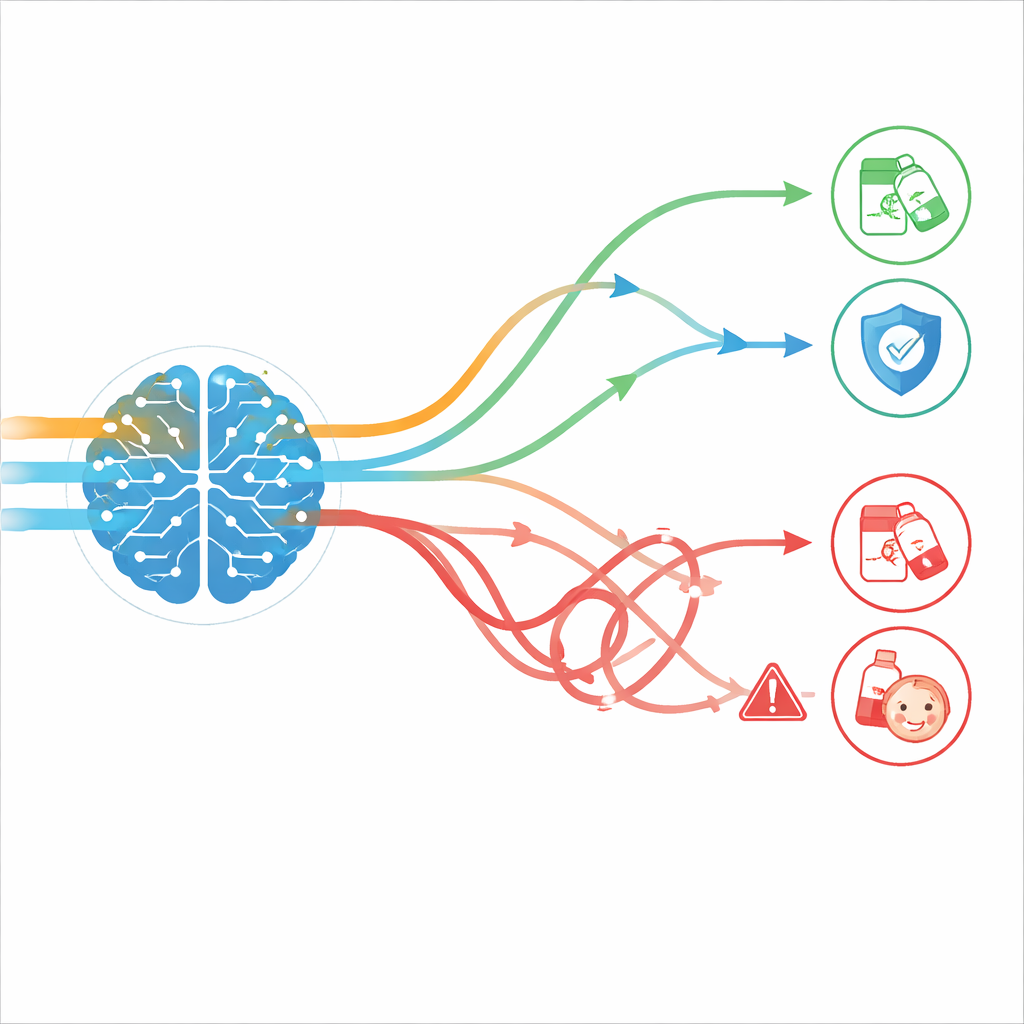

Where the chatbots did well—and where they failed

Across 103 usable sessions, just over half (about 52%) met the expert bar in all three domains at once. The strongest areas were lesson structure and modeled reasoning: more than 80% of sessions provided clear objectives, useful scaffolding, and plausible clinical thought processes. In other words, the LLMs were quite good at telling a believable story and walking through decisions in a way that looked like expert reasoning. The weak spot was accuracy and safety of the actual drug recommendations, which passed only about 58% of the time. Errors included outdated or off‑guideline choices, incorrect dose‑related decisions, invented clinical trials with realistic‑sounding details, and “domain entanglement,” where treatments meant for one leukemia—or even for a different blood cancer altogether—were applied to another. Notably, this kind of cross‑disease mixing happened only in the more complex AML cases.
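For readers who want to see why the overall pass rate can sit below every individual domain's rate, here is a minimal sketch in Python. It assumes, as the summary above describes, that a session counts as passing overall only if it passes all three domains at once. The class name, field names, and the five toy sessions are hypothetical illustrations; they are not the study's data or its scoring instrument.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionRating:
    """Hypothetical per-session expert verdicts, one flag per domain."""
    clinical_ok: bool   # realistic, guideline-aligned clinical content
    reasoning_ok: bool  # well-modeled clinical reasoning
    design_ok: bool     # sound instructional design

    @property
    def passes_all(self) -> bool:
        # Assumption: overall pass requires passing every domain.
        return self.clinical_ok and self.reasoning_ok and self.design_ok


def pass_rate(flags) -> float:
    """Fraction of True values; 0.0 for an empty input."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0


# Invented toy ratings, chosen only to illustrate the arithmetic.
toy_sessions = [
    SessionRating(True, True, True),
    SessionRating(True, True, False),
    SessionRating(False, True, True),
    SessionRating(False, True, True),
    SessionRating(True, False, True),
]

clinical_rate = pass_rate(s.clinical_ok for s in toy_sessions)
reasoning_rate = pass_rate(s.reasoning_ok for s in toy_sessions)
design_rate = pass_rate(s.design_ok for s in toy_sessions)
overall_rate = pass_rate(s.passes_all for s in toy_sessions)

print(f"clinical={clinical_rate:.0%} reasoning={reasoning_rate:.0%} "
      f"design={design_rate:.0%} overall={overall_rate:.0%}")
```

Because a session has to clear every domain, the overall rate can never exceed the weakest domain's rate, and it drops further whenever failures in different domains land on different sessions. That is consistent with the pattern reported above, where the overall figure is lower than the clinical-accuracy figure.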

Different cancers, different models, different results

CML simulations fared better overall than AML simulations, with roughly three out of five CML sessions passing versus only two out of five AML sessions. The authors suggest that CML’s more linear treatment rules may be easier for LLMs to follow than AML’s branching, multi‑factor choices. Performance also varied across platforms: some models produced safer drug plans but slightly weaker lesson design, while others offered beautifully structured teaching with more frequent clinical mistakes. Yet students tended to like all of them about equally. They reported higher satisfaction than the “neutral” benchmark, especially praising ease of use and time savings, and nearly half said they preferred LLM-based learning to traditional cases. Crucially, their satisfaction did not track with expert‑judged safety or accuracy—students were just as happy with flawed sessions as with high‑quality ones.

Why expert oversight still matters

For educators and health systems, the message is nuanced. LLMs already appear capable of building engaging, well‑structured simulations that feel realistic and help students practice reasoning through cancer therapy. But the same sessions often hide subtle or serious treatment errors that learners are unlikely to spot on their own. The authors argue that, at least for now, AI should be used to draft simulations that are then carefully reviewed and edited by clinical experts, especially in complex, fast‑changing fields like oncology. With better guardrails—such as real‑time guideline access, checks against fabricated evidence, and stronger safeguards against mixing related diseases—LLMs may eventually deliver safe, scalable training. Until then, human judgment remains the critical safety net between a polished AI case and the real patients students will one day treat.

Citation: Farrag, A.N., El-Zeiny, A. & Ali, A.M. Evaluating large language models for pharmacotherapy simulations: a mixed-methods study. npj Digit. Med. 9, 355 (2026). https://doi.org/10.1038/s41746-026-02626-1

Keywords: pharmacy education, large language models, cancer pharmacotherapy, medical simulation, AI safety