Clear Sky Science

A suite of large language models for public health infoveillance

Why online chatter matters for public health

During outbreaks and health scares, many people turn first to social media instead of official websites or doctors. These posts reveal what people fear, what they misunderstand, and how they respond to advice. The study described here introduces PH-LLM, a family of artificial intelligence models built specifically to read this constant stream of online messages in many languages, helping health officials see problems early and respond before rumors and worries spiral out of control.

Watching health conversations in real time

Public health agencies have long relied on surveys, clinic reports, and lab data to guide decisions. But these traditional tools are slow and often miss the fast-changing mood online, where beliefs about vaccines, testing, and new diseases can spread within hours. Researchers call efforts to track this digital pulse "infoveillance": monitoring information instead of infections. Until now, most such efforts depended on smaller machine learning models trained for one task at a time, such as spotting vaccine hesitancy in English tweets. These systems struggled to keep up when a new issue, language, or platform became important.

An AI suite built for public health questions

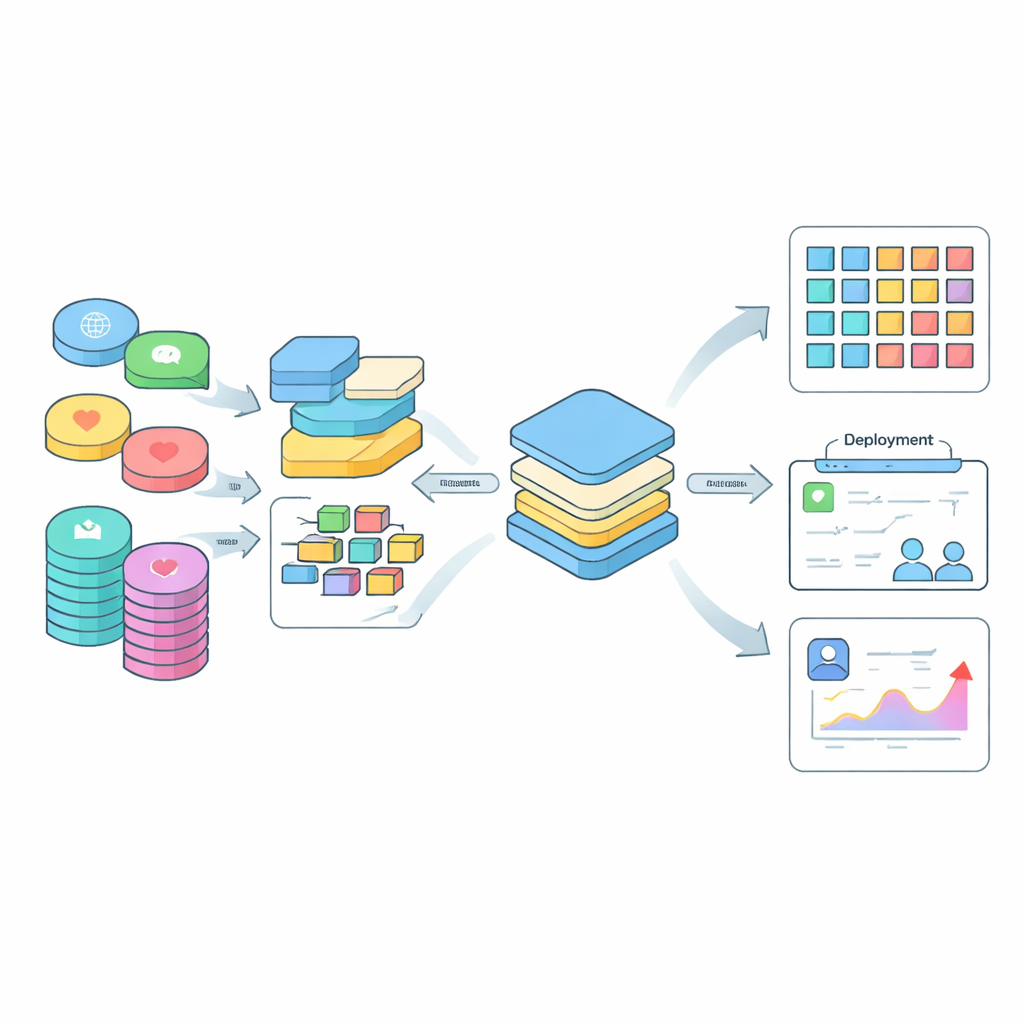

To close this gap, the authors created PH-LLM, a suite of large language models tuned specifically for public health infoveillance. Starting from a powerful multilingual base model, they fine-tuned six versions of different sizes, from lightweight to larger models, on nearly 600,000 examples drawn from 30 social media datasets and several health question–answer resources. These examples covered a wide mix of topics, including vaccine attitudes, testing hesitancy, mental health signals, hate speech, and misinformation about COVID-19 and other issues. Rather than translating every post into English, they kept the posts in their original languages and combined them with instruction templates translated into 29 languages, so the models could naturally handle mixed-language and non-English content.
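For readers who want to see what pairing an untranslated post with a translated instruction template might look like, here is a minimal sketch. The template wording, the label names, and the `build_example` helper are illustrative assumptions; the summary does not give the study's actual templates or data format.

```python
from dataclasses import dataclass

# Hypothetical instruction templates, one per language code. The study's
# actual template wording is not given in this summary.
TEMPLATES = {
    "en": "Classify the post below as one of: {labels}.\nPost: {post}\nAnswer:",
    "id": (
        "Klasifikasikan unggahan di bawah ini sebagai salah satu dari: {labels}.\n"
        "Unggahan: {post}\nJawaban:"
    ),
}


@dataclass
class TrainingExample:
    prompt: str  # instruction plus the untranslated post
    target: str  # label the model should learn to produce


def build_example(
    post: str, label: str, labels: list[str], template_lang: str
) -> TrainingExample:
    """Pair a post in its original language with a translated instruction."""
    prompt = TEMPLATES[template_lang].format(labels=", ".join(labels), post=post)
    return TrainingExample(prompt=prompt, target=label)


# An Indonesian post stays untranslated, whichever template language is used.
post = "Saya masih ragu untuk divaksin."
labels = ["hesitant", "not hesitant"]
examples = [build_example(post, "hesitant", labels, lang) for lang in TEMPLATES]
```

The point of the sketch is that only the instruction changes language; the post itself is passed through as written, which is how mixed-language inputs arise naturally.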

Putting PH-LLM to a tough test

To find out how well PH-LLM really works, the team built a demanding benchmark of 39 unseen tasks drawn from 10 separate datasets in English, Arabic, Chinese, and Indonesian. These tasks asked the models to do things like separate personal stories from news, flag hate speech, identify false claims about cures, and detect changing feelings toward COVID-19 vaccines. PH-LLM had to answer in a "zero-shot" setting, meaning it received no extra training for any specific task. The researchers compared PH-LLM’s accuracy against a wide range of well-known systems, including open-source models such as Llama, Mistral, BLOOMZ, and Qwen, as well as commercial models like GPT-4o and GPT-4o mini.
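The zero-shot setup described above can be sketched in a few lines: the model sees only an instruction and a post, and its answer is compared with a human label. Everything here is an assumption for illustration, including the prompt wording, the toy posts, and `keyword_model`, a deliberately crude stand-in for a real model call. The study's exact scoring procedure is not described in this summary.

```python
from typing import Callable


def zero_shot_accuracy(
    model_fn: Callable[[str], str],
    examples: list[tuple[str, str]],
    labels: list[str],
) -> float:
    """Fraction of posts whose predicted label matches the human label."""
    if not examples:
        raise ValueError("examples must not be empty")
    options = ", ".join(labels)
    correct = 0
    for post, gold in examples:
        # No task-specific training: the instruction is all the model gets.
        prompt = f"Classify the post as one of: {options}.\nPost: {post}\nAnswer:"
        prediction = model_fn(prompt).strip().lower()
        correct += prediction == gold
    return correct / len(examples)


def keyword_model(prompt: str) -> str:
    """Toy stand-in for a real model call; this is not PH-LLM."""
    post = prompt.split("Post: ", 1)[1]
    return "misinformation" if "cure" in post.lower() else "other"


toy_examples = [
    ("Garlic is a guaranteed cure for COVID-19!", "misinformation"),
    ("The clinic opens at 9 am for booster shots.", "other"),
    ("Officials say no cure has been approved yet.", "other"),
]
accuracy = zero_shot_accuracy(keyword_model, toy_examples, ["misinformation", "other"])
```

The keyword stand-in gets the third post wrong because it reacts to the word "cure" without understanding the sentence, which is the kind of shortcut that task-specific keyword systems tend to take and that a language model is meant to avoid.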

Smaller yet stronger across languages

Across both English-only and multilingual tasks, PH-LLM models not only held their own but frequently outperformed alternatives of similar or even much larger size. The 14-billion and 32-billion parameter versions delivered the best overall performance, beating larger open-source models and even the commercial GPT-4o family on average in the benchmark. Importantly, the stronger multilingual scores mean PH-LLM can track health-related conversations in Arabic, Chinese, Indonesian, and other languages that are often overlooked. Because PH-LLM reaches this level of accuracy with fewer parameters than many competitors, it can run on more modest computing hardware, lowering costs for health agencies, especially in low- and middle-income countries.

From online signals to real-world decisions

The authors envision PH-LLM as a practical tool that non-technical public health workers can use through simple interfaces. When combined with information about time, location, and local conditions, PH-LLM’s classifications can reveal where vaccine doubts are rising, which communities are targeted by hate speech, or when dangerous rumors start to spread. The models still have limits, such as uneven performance on very hard or imbalanced tasks and possible biases inherited from social media data, but they mark a major step toward real-time, multilingual monitoring of public health conversations. In plain terms, PH-LLM turns the noisy stream of social media into clearer signals that can help authorities act faster and more fairly during both everyday campaigns and future pandemics.
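As a rough illustration of how per-post classifications might be combined with time and location, the sketch below groups labeled posts by region and calendar week and reports the share carrying a given label. The record layout, the region names, the label strings, and the `weekly_label_share` helper are all hypothetical; the summary does not describe a specific aggregation method.

```python
from collections import defaultdict
from datetime import date


def weekly_label_share(records, target_label):
    """Share of posts per (region, ISO year, ISO week) carrying target_label."""
    counts = defaultdict(lambda: [0, 0])  # key -> [matching posts, all posts]
    for day, region, label in records:
        iso_year, iso_week, _ = day.isocalendar()
        key = (region, iso_year, iso_week)
        counts[key][1] += 1
        if label == target_label:
            counts[key][0] += 1
    return {key: hits / total for key, (hits, total) in counts.items()}


# Hypothetical records: (post date, region, label assigned by a classifier).
records = [
    (date(2021, 1, 4), "north", "hesitant"),
    (date(2021, 1, 5), "north", "not hesitant"),
    (date(2021, 1, 11), "north", "hesitant"),
    (date(2021, 1, 12), "north", "hesitant"),
    (date(2021, 1, 12), "south", "not hesitant"),
]
shares = weekly_label_share(records, "hesitant")
```

In this toy data the share of hesitant posts in the "north" region rises from one week to the next, the sort of shift an analyst might want flagged. Real use would need far more posts per group before a change could be trusted.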

Citation: Zhou, X., Zhou, J., Wang, C. et al. A suite of large language models for public health infoveillance. npj Digit. Med. 9, 270 (2026). https://doi.org/10.1038/s41746-026-02435-6

Keywords: public health surveillance, social media misinformation, vaccine hesitancy, multilingual AI, large language models