Clear Sky Science

BGC-LiteNet: BeiDou grid code embedded lightweight neural architecture for real-time UAV fire detection and localization

Watching the Woods from Above

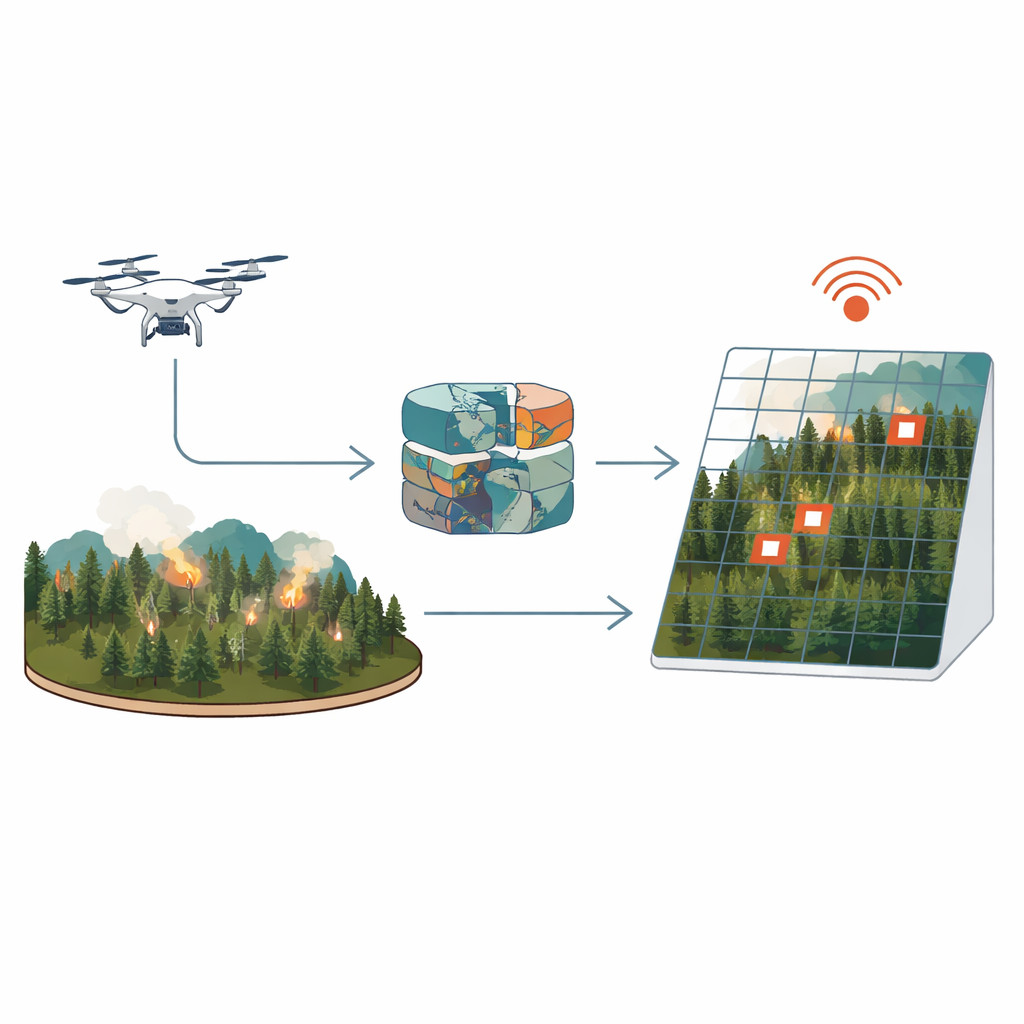

Forest fires can turn from a small spark into a catastrophe in minutes. Drones equipped with heat‑sensing cameras promise to spot these fires early, but today’s systems face a tough trade‑off: fast, lightweight software usually misses tiny, early flames, while heavier, more accurate programs respond too slowly to be useful in an emergency. This paper introduces a new approach called BGC‑LiteNet that aims to do both at once—detect small fires quickly and pinpoint their locations on a map in nearly real time, even on modest onboard computers.

Why Early Fire Detection Is So Hard

Early‑stage forest fires appear in drone images as just a few glowing pixels, often hidden by smoke, dim light, or cluttered terrain. Traditional methods that rely on simple thresholds or handcrafted image features are fast but easily fooled by other heat sources and changing conditions. Newer deep‑learning systems are better at recognizing fires, but they usually report only positions within the image, not real map coordinates. Converting those positions into real‑world locations requires extra steps involving camera geometry, satellite positioning, and online mapping services. Each added step costs time, hundreds of milliseconds or more, and the online services depend on reliable communications, which are often unavailable in remote mountains.
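To make the conventional "extra steps" concrete, here is a minimal Python sketch of the camera-geometry part: projecting one pixel to a ground position. It assumes a pinhole camera pointing straight down, flat terrain, a known altitude above ground, and a heading measured clockwise from north. The function name and parameters are illustrative, not taken from the paper, and a real pipeline would also correct for gimbal tilt, lens distortion, and terrain height.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def pixel_to_ground(u, v, *, lat, lon, alt_m, fx, fy, cx, cy, yaw_deg):
    """Project pixel (u, v) to a ground latitude/longitude.

    Assumes a nadir-pointing pinhole camera over flat terrain, with the top
    of the image aligned to the drone heading (yaw clockwise from north).
    fx, fy are focal lengths in pixels; (cx, cy) is the principal point.
    """
    # Ground offsets in the camera frame (metres): right of and ahead of the drone.
    right_m = (u - cx) * alt_m / fx
    forward_m = -(v - cy) * alt_m / fy  # image rows grow downward
    # Rotate the camera-frame offsets into east/north using the heading.
    yaw = math.radians(yaw_deg)
    east_m = right_m * math.cos(yaw) + forward_m * math.sin(yaw)
    north_m = -right_m * math.sin(yaw) + forward_m * math.cos(yaw)
    # Small-offset (equirectangular) conversion from metres to degrees.
    dlat = math.degrees(north_m / EARTH_RADIUS_M)
    dlon = math.degrees(east_m / (EARTH_RADIUS_M * math.cos(math.radians(lat))))
    return lat + dlat, lon + dlon


# A pixel 100 rows above the image centre, drone at 120 m heading north.
cam = dict(alt_m=120.0, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0, yaw_deg=0.0)
print(pixel_to_ground(640, 260, lat=30.0, lon=110.0, **cam))  # about 12 m north
```

Even this simplified step needs accurate altitude, attitude, and camera calibration for every frame, which is part of the overhead BGC‑LiteNet tries to fold into the network.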

Putting the Map Inside the Neural Network

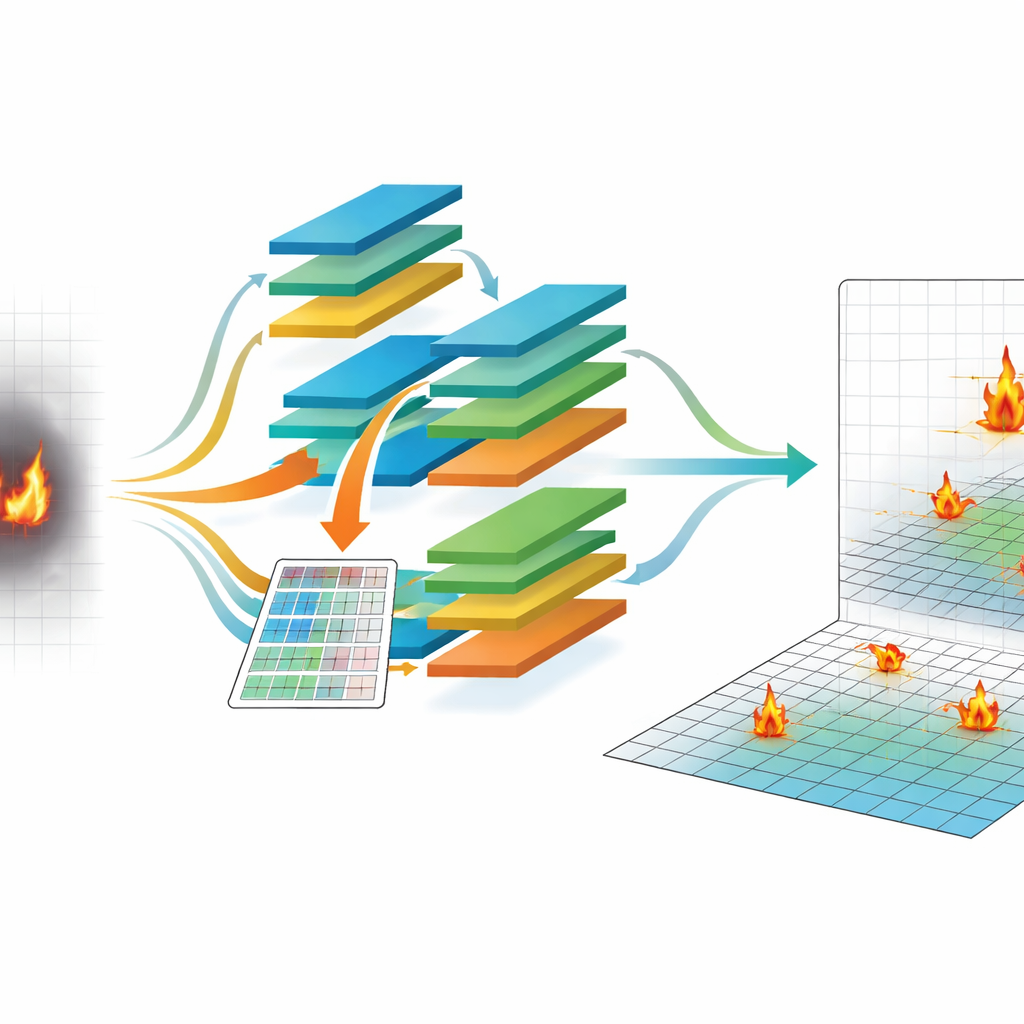

Instead of treating mapping as an afterthought, BGC‑LiteNet bakes geography directly into the detection process. It uses China’s BeiDou Grid Code (BGC), a national standard that divides the Earth into nested grid cells at several levels of detail; the cells used here are about 100 meters across. For every pixel in a drone’s infrared image, the system computes which ground grid cell it corresponds to and builds a matching grid map. This grid map is then converted into learnable numeric codes (embeddings) that enter the neural network alongside the image itself. In effect, each pixel carries both a visual clue and a geographic hint, so the model can learn where fires are likely to appear and can output standardized grid identifiers directly, without calling external mapping services.
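The pixel-to-grid idea can be sketched in Python with NumPy. This is a simplified stand-in, not the BeiDou encoding: it assumes fixed cells of 0.001 degrees (about 111 m north-south), hashes each cell into a hypothetical embedding table, and stacks the result with the infrared channel. The constants, function names, and hashing scheme are assumptions for illustration; in the real network the embedding table would be trained along with the other weights.

```python
import numpy as np

CELL_DEG = 0.001    # hypothetical cell size (~111 m north-south), not a BGC level
NUM_BUCKETS = 4096  # hypothetical embedding-table size
EMBED_DIM = 8       # hypothetical embedding width


def grid_cell_ids(lat, lon):
    """Map per-pixel latitude/longitude arrays to integer (row, col) cell indices."""
    row = np.floor((lat + 90.0) / CELL_DEG).astype(np.int64)
    col = np.floor((lon + 180.0) / CELL_DEG).astype(np.int64)
    return row, col


def grid_embedding(lat, lon, table):
    """Look up one embedding vector per pixel from its grid cell.

    Cells are hashed into a fixed-size table; in a trained network `table`
    would be a learnable parameter updated by backpropagation.
    """
    row, col = grid_cell_ids(lat, lon)
    bucket = (row * 73_856_093 + col * 19_349_663) % table.shape[0]
    return table[bucket]  # shape (H, W, EMBED_DIM)


def fuse(ir_image, lat, lon, table):
    """Stack the infrared channel with the per-pixel grid embedding."""
    emb = grid_embedding(lat, lon, table)
    return np.concatenate([ir_image[..., None], emb], axis=-1)


rng = np.random.default_rng(0)
table = rng.normal(size=(NUM_BUCKETS, EMBED_DIM)).astype(np.float32)
# Toy 4x5 image whose pixels span about 0.003 degrees of latitude.
lat = np.linspace(30.0, 30.003, 4)[:, None] * np.ones((1, 5))
lon = np.full((4, 5), 110.0005)
ir = rng.random((4, 5)).astype(np.float32)
print(fuse(ir, lat, lon, table).shape)  # (4, 5, 9)
```

Pixels that fall in the same cell share one embedding vector, which is what lets a network associate particular places with higher or lower fire likelihood.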

Designing a Brain That Thinks Fast

Embedding geography is only half the story; the rest is making it run fast on a small onboard computer. The authors use a technique called neural architecture search, which automatically tests many possible network designs to find those that balance accuracy against speed. They measure how long basic building blocks—such as compact convolutions and attention modules—take to run on an embedded device, then guide the search so that slow combinations are discouraged. The final BGC‑LiteNet design uses under one million parameters, relies heavily on efficient convolutions, and prunes unnecessary paths, resulting in an average processing time of about 38 milliseconds per image at standard resolution on an NVIDIA Jetson Xavier NX module carried by a drone.
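For scale, 38 milliseconds per image is roughly 26 images per second. The latency-aware search itself can be illustrated with a toy Python sketch: block latencies come from a lookup table (as if profiled on the device), and each candidate's accuracy is scaled by a penalty of the form (latency / target) ** w, in the spirit of the MnasNet reward. All latencies, accuracy proxies, exponents, and the 20 ms budget are hypothetical, and exhaustive enumeration stands in for whatever search strategy the paper actually uses.

```python
import itertools

# Hypothetical per-block latencies (ms), standing in for values profiled on the board.
LATENCY_MS = {"dwconv3x3": 2.0, "dwconv5x5": 3.5, "mbconv": 5.0, "attention": 11.0}
# Hypothetical accuracy proxy per block (toy numbers, not measured results).
ACC_PROXY = {"dwconv3x3": 0.20, "dwconv5x5": 0.22, "mbconv": 0.25, "attention": 0.28}

NUM_STAGES = 4
TARGET_MS = 20.0  # hypothetical latency budget
ALPHA = -0.07     # mild pressure when under budget (MnasNet-style soft term)
BETA = -1.0       # strong penalty when over budget (hypothetical choice)


def latency(arch):
    """Predicted latency: sum of the per-block lookup-table entries."""
    return sum(LATENCY_MS[op] for op in arch)


def accuracy_proxy(arch):
    """Toy accuracy estimate; a real search would train and evaluate candidates."""
    return sum(ACC_PROXY[op] for op in arch) / len(arch)


def reward(arch):
    """Accuracy scaled by (latency / target) ** w, with w set by the budget."""
    lat = latency(arch)
    w = ALPHA if lat <= TARGET_MS else BETA
    return accuracy_proxy(arch) * (lat / TARGET_MS) ** w


def search():
    """Score every per-stage choice of block (4 ** 4 = 256 candidates)."""
    candidates = itertools.product(LATENCY_MS, repeat=NUM_STAGES)
    return max(candidates, key=reward)


best = search()
print(best, latency(best), round(reward(best), 4))
```

With these toy numbers the search settles on four `mbconv` blocks that exactly meet the 20 ms budget, while attention-heavy designs, though individually more accurate, are penalized for exceeding it.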

Putting the System to the Test

To see whether this approach works in the real world, the team built a dataset of nearly 13,000 drone images collected over mountains, plains, forest edges, and mixed terrain across several Chinese provinces. Each image is paired with highly accurate drone position data and fire annotations linked to BeiDou grid cells. Across this diverse collection of day, night, smoky, and clear scenes, BGC‑LiteNet detects fires with about 89 percent mean average precision and achieves more than 92 percent accuracy in geolocating them within 50 meters. It outperforms both traditional methods and popular compact deep‑learning models, especially when fires are small, lighting is low, or smoke is dense. The system also scales well across different image sizes and stays responsive even at higher resolutions.
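A "within 50 meters" localization score can be computed by measuring great-circle distances between predicted and true fire positions and counting how many fall under the threshold. The sketch below uses the haversine formula with a mean Earth radius; the function names are hypothetical, and the paper's exact matching and distance rules may differ.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def geolocation_accuracy(pred, truth, threshold_m=50.0):
    """Fraction of predicted fire positions within threshold_m of ground truth."""
    hits = sum(haversine_m(*p, *t) <= threshold_m for p, t in zip(pred, truth))
    return hits / len(truth)


# One estimate about 33 m off, one about 67 m off: half are within 50 m.
pred = [(30.0003, 110.0), (30.0006, 110.0)]
truth = [(30.0, 110.0), (30.0, 110.0)]
print(geolocation_accuracy(pred, truth))  # 0.5
```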

From Single Drones to Firefighting Networks

Accurate, standardized locations are crucial when multiple drones and ground teams must work together. Because BGC‑LiteNet outputs locations directly in a national grid system, different drones automatically “speak the same language,” reducing disagreements in reported positions. Tests with simulated multi‑drone patrols show that its location estimates for the same fire are tightly clustered, much more so than for methods that rely on separate mapping steps for each platform. The researchers also show that the system keeps working under challenging lighting, smoke, rain, and fog, and that its grid outputs can be converted quickly to the standard latitude and longitude coordinates used by GPS and existing mapping tools.
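Why a shared grid helps can be shown with a simplified cell scheme (fixed 0.001‑degree cells, a hypothetical stand-in for the multi-level BeiDou encoding). Slightly different raw estimates from several drones fall into the same cell, so they report the same ID, and decoding that ID yields a single latitude/longitude pair that ordinary mapping tools accept. The functions `encode` and `decode_center` are illustrative names, not the standard's API.

```python
import math

CELL_DEG = 0.001  # hypothetical fixed cell size standing in for a BGC level


def encode(lat, lon):
    """Hypothetical cell ID: (row, col) of the fixed-size latitude/longitude cell."""
    return (math.floor((lat + 90.0) / CELL_DEG), math.floor((lon + 180.0) / CELL_DEG))


def decode_center(cell):
    """Convert a cell ID back to the latitude/longitude of the cell centre."""
    row, col = cell
    return ((row + 0.5) * CELL_DEG - 90.0, (col + 0.5) * CELL_DEG - 180.0)


# Three drones report slightly different raw positions for the same fire.
reports = [(30.1234, 110.5671), (30.12345, 110.56712), (30.12338, 110.56705)]
cells = {encode(lat, lon) for lat, lon in reports}
print(cells)                             # one shared cell ID
print(decode_center(next(iter(cells))))  # its centre as latitude/longitude
```

One caveat of any grid: estimates that straddle a cell boundary receive neighboring IDs, so a practical system would likely still treat adjacent cells as possibly the same fire.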

What This Means for Firefighting

BGC‑LiteNet demonstrates that it is possible to build a drone‑based fire detection system that is both fast and precise by treating mapping as part of the intelligence rather than an add‑on. By embedding a national grid reference directly into the neural network and automatically designing the model for low‑power hardware, the authors achieve quick, accurate fire alerts that can guide responders to within tens of meters of a new blaze. Beyond forest fires, the same idea—teaching neural networks to understand where as well as what—could help in other tasks such as wildlife surveys, disaster assessment, and precision agriculture.

Citation: Yin, H., Yu, Y., Hong, A. et al. BGC-LiteNet: BeiDou grid code embedded lightweight neural architecture for real-time UAV fire detection and localization. Sci Rep 16, 14456 (2026). https://doi.org/10.1038/s41598-026-44728-6

Keywords: UAV fire detection, geospatial deep learning, edge AI, BeiDou Grid Code, real-time localization