Clear Sky Science

Assessing the risk of bias of clinical trials with large language models and ROBUST-RCT: a feasibility study

Why this matters for patients and doctors

Modern medicine relies on clinical trials to decide which treatments work, but even well-designed studies can be misleading if they are biased. Checking each trial carefully for hidden problems is slow, complicated work that can delay updated medical guidelines for years. This study explores whether large language models—advanced AI systems that read and analyze text—can help humans more quickly and consistently judge how trustworthy clinical trials are, using a newer, simpler tool called ROBUST-RCT.

How trial quality is judged today

Clinical trials are often called the gold standard, yet they can still be distorted by design flaws, poor reporting, or selective analysis. To spot these issues, reviewers commonly use Cochrane’s Risk of Bias 2 (RoB 2) checklist. While rigorous, RoB 2 is notoriously time-consuming, difficult to apply even for experts, and yields only modest agreement between different reviewers. Meanwhile, the number of trials published each year keeps rising, but the number of studies that actually make it into systematic reviews has not kept pace, and many reviews are already out of date at publication. This growing gap has spurred interest in tools that are easier to use and in technological help from AI.

A new tool and a role for AI

ROBUST-RCT is a recently developed alternative to RoB 2. Instead of trying to capture every possible source of bias, it focuses on six core items that are both common and strongly linked to distorted treatment effects. The tool was designed by epidemiologists to strike a balance between simplicity and scientific rigor, and it was tested for usability with junior reviewers. Because ROBUST-RCT is newer and less familiar than RoB 2, the authors saw an opportunity: combine this streamlined checklist with large language models to see whether AI could reliably assist in judging trial bias alongside human reviewers.

What the researchers actually tested

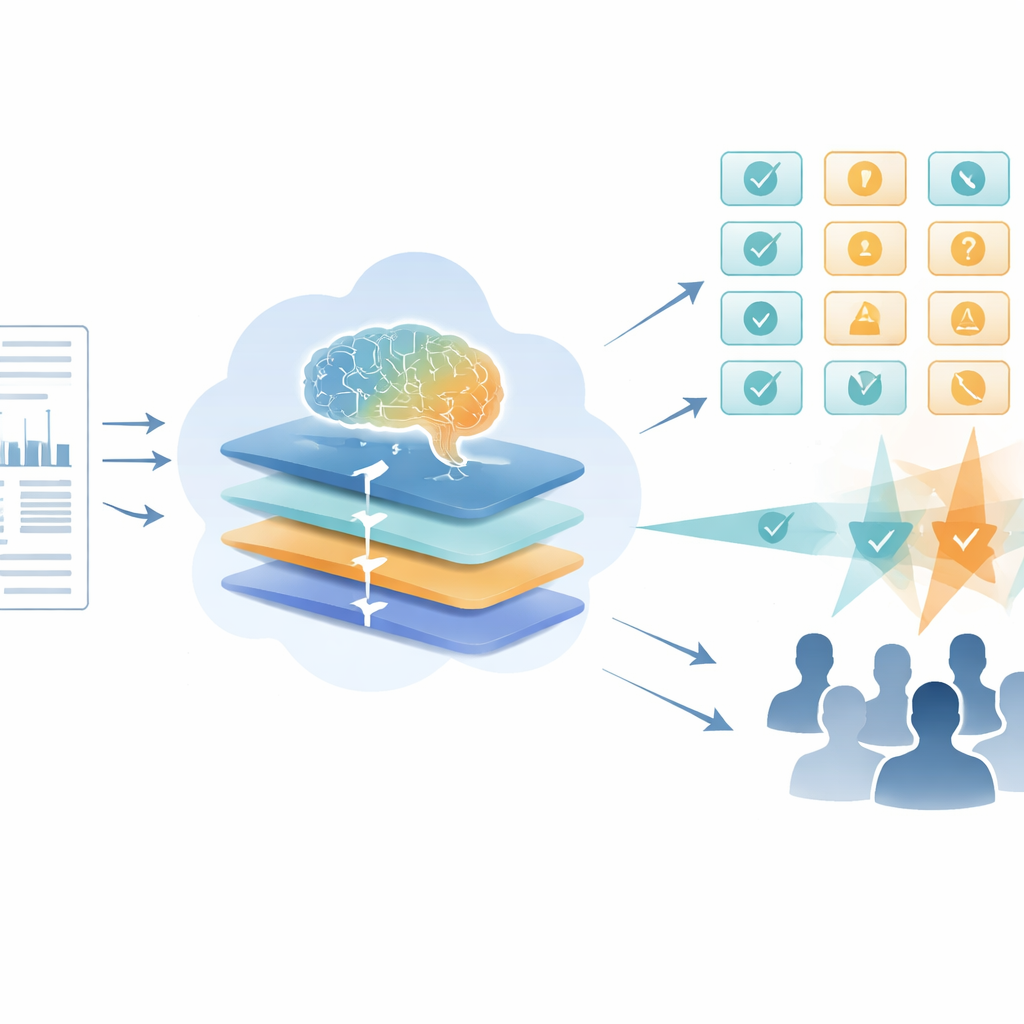

The team randomly selected 20 drug trials indexed in PubMed Central and, after exclusions, ended up with 9 randomized controlled trials for detailed analysis. Three early-career medical researchers independently used the ROBUST-RCT manual to rate the primary outcome of each trial, then resolved any disagreements in consensus meetings. In parallel, four different large language models—GPT-4-turbo, Gemini 2.5 Pro Preview, DeepSeek-R1, and Qwen3-235B-A22B—were given the full trial PDFs plus a detailed, step-by-step instruction prompt explaining how to apply ROBUST-RCT. The core question was: how closely did each AI’s final ratings match the human consensus across the six main items of the tool?

How well the AIs agreed with humans

To quantify agreement, the authors used a statistic called Gwet’s AC2, a chance-corrected agreement coefficient that gives partial credit for near-misses on an ordered scale and is less distorted than the more familiar kappa scores when most ratings fall into one or two categories. For each model, rating six items in each of nine trials gave 54 paired human–AI comparisons. Three of the four models reached at least “moderate” reliability when benchmarked probabilistically, that is, when the reliability label took the statistical uncertainty of the estimate into account rather than the point value alone. Their ratings were often similar to those of the human consensus, and big disagreements were relatively rare. Gemini 2.5 Pro Preview performed best (AC2 of 0.69), followed by Qwen3-235B-A22B (0.65) and GPT-4-turbo (0.60). DeepSeek-R1 was the weakest (0.46) and tended to rate trials as more biased than humans did, possibly because it relied on text-only extraction and could not fully use tables and figures. Notably, when the authors looked only at the human reviewers’ ratings before they met to discuss, their own agreement (Fleiss’ kappa of 0.49) was similar to what has been reported for the older RoB 2 tool.
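For readers curious how a coefficient like Gwet’s AC2 is computed, the following is a minimal sketch for two raters (for example, the human consensus and one model). It is not the authors’ code: the function name `gwet_ac2`, the choice of linear ordinal weights, the four-category scale, and the example ratings are all assumptions made for illustration.

```python
from typing import Sequence


def gwet_ac2(rater_a: Sequence[int], rater_b: Sequence[int], n_categories: int) -> float:
    """Gwet's AC2 for two raters, using linear ordinal weights (an assumption).

    Ratings are integers from 0 to n_categories - 1, one per rated item.
    """
    a, b = list(rater_a), list(rater_b)
    if len(a) != len(b) or not a:
        raise ValueError("both raters need the same non-zero number of ratings")
    if n_categories < 2:
        raise ValueError("at least two categories are required")
    q, n = n_categories, len(a)

    # Linear weights: full credit for identical ratings, partial credit
    # that shrinks as the two ratings move further apart on the scale.
    w = [[1 - abs(k - l) / (q - 1) for l in range(q)] for k in range(q)]

    # Weighted observed agreement across all rated items.
    p_a = sum(w[x][y] for x, y in zip(a, b)) / n

    # Average share of ratings falling in each category, over both raters.
    pi = [(a.count(k) + b.count(k)) / (2 * n) for k in range(q)]

    # Chance agreement as defined for AC2: the weight total scales the
    # probability that two random ratings land in different categories.
    t_w = sum(sum(row) for row in w)
    p_e = t_w / (q * (q - 1)) * sum(p * (1 - p) for p in pi)

    return (p_a - p_e) / (1 - p_e)


# Hypothetical ratings on an assumed 4-level scale (0 = lowest risk of bias).
human_consensus = [0, 1, 1, 3, 2, 0, 1, 2]
model_ratings = [0, 1, 2, 3, 2, 1, 1, 2]
print(round(gwet_ac2(human_consensus, model_ratings, n_categories=4), 3))
```

With only two categories the linear weights reduce to exact-match scoring, so the same function then returns the unweighted variant of the coefficient (AC1).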

What this means for future evidence reviews

Despite its small sample size, this feasibility study shows that several current large language models can reach at least moderate agreement with human reviewers when applying ROBUST-RCT, a simpler risk-of-bias tool for clinical trials. In practice, such models could eventually serve as a “third reviewer” to break ties, flag likely errors, or pre-screen studies so that human experts can focus on the most complex or disputed cases. The authors stress that AI will not replace human judgment and that ethical issues—such as data privacy, training on copyrighted material, and the risk of overreliance on automated tools—must be addressed. Still, the findings suggest that carefully guided AI could help keep systematic reviews more up to date, allowing clinicians and guideline panels to spend less time on technical scoring and more time interpreting what the totality of evidence means for patient care.
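The “third reviewer” idea can be pictured as a simple triage rule. The sketch below is purely illustrative and is not a workflow described by the authors: the function name `triage`, the returned labels, and the integer rating scale are hypothetical.

```python
def triage(human_1: int, human_2: int, model: int) -> str:
    """Suggest a next step for one checklist item (hypothetical rule).

    Each argument is an ordinal risk-of-bias rating for the same item.
    """
    if human_1 == human_2:
        # Humans agree: the model only serves as an error check.
        return "accept" if model == human_1 else "flag_for_recheck"
    if model in (human_1, human_2):
        # Humans disagree and the model matches one of them: a
        # suggested tie-break that a person would still confirm.
        return "model_sides_with_one_reviewer"
    # All three differ: this item needs a full consensus discussion.
    return "escalate_to_consensus"


print(triage(1, 1, 1))
print(triage(1, 2, 2))
print(triage(0, 2, 3))
```

In every branch the final decision stays with a human reviewer, in line with the authors’ point that AI assists rather than replaces human judgment.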

Citation: Vidor, P.R., Casiraghi, Y., de Souza, A.M. et al. Assessing the risk of bias of clinical trials with large language models and ROBUST-RCT: a feasibility study. Sci Rep 16, 13723 (2026). https://doi.org/10.1038/s41598-026-44303-z

Keywords: risk of bias, clinical trials, systematic reviews, large language models, evidence-based medicine