Clear Sky Science · en

A lightweight feature fusion network for weak and small target detection in remote sensing

Why finding tiny objects from the sky matters

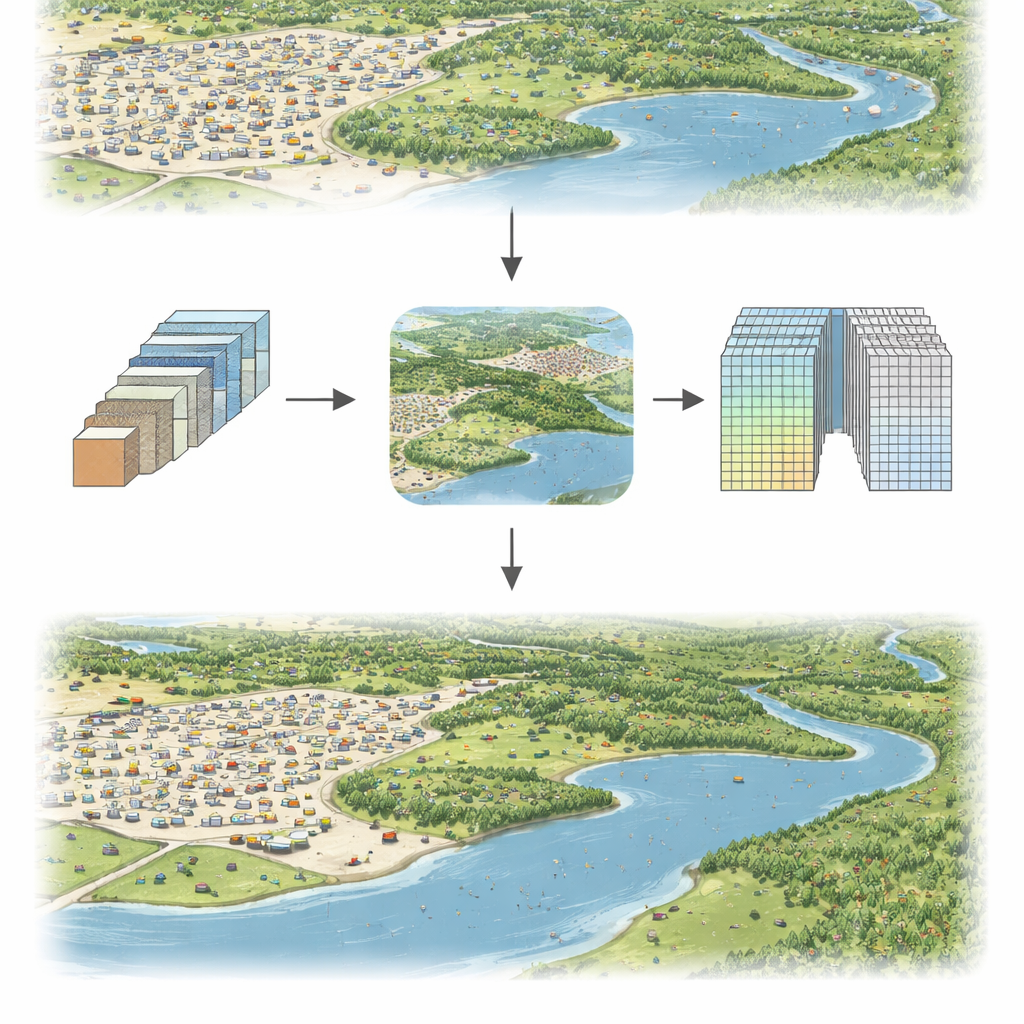

From monitoring traffic and ships to guiding disaster response, today’s satellites and drones constantly scan Earth’s surface. Yet many of the things we care about most—small vehicles, boats, or pieces of infrastructure—appear in images as just a few pixels across, easily lost in cluttered city blocks, forests, or shorelines. This paper introduces GSS‑YOLO, a new, lightweight computer vision system designed to reliably spot these weak, tiny targets in remote‑sensing images, even when the pictures are blurry, dim, or full of distracting background detail.

The challenge of seeing needles in a haystack

Remote‑sensing cameras mounted on aircraft and satellites capture huge areas at once. That wide view is useful, but it shrinks each object: small targets may cover only 10×10 pixels or less. At the same time, backgrounds are complex—clouds, rooftops, trees, rivers, shadows, and seasonal changes in lighting or weather all add noise. Traditional detection systems either miss these tiny objects or require heavy, slow models that are hard to run in real time on drones or edge devices. The authors set out to build a model that is both accurate for small objects and efficient enough to run quickly on limited hardware.

A compact system tuned for small details

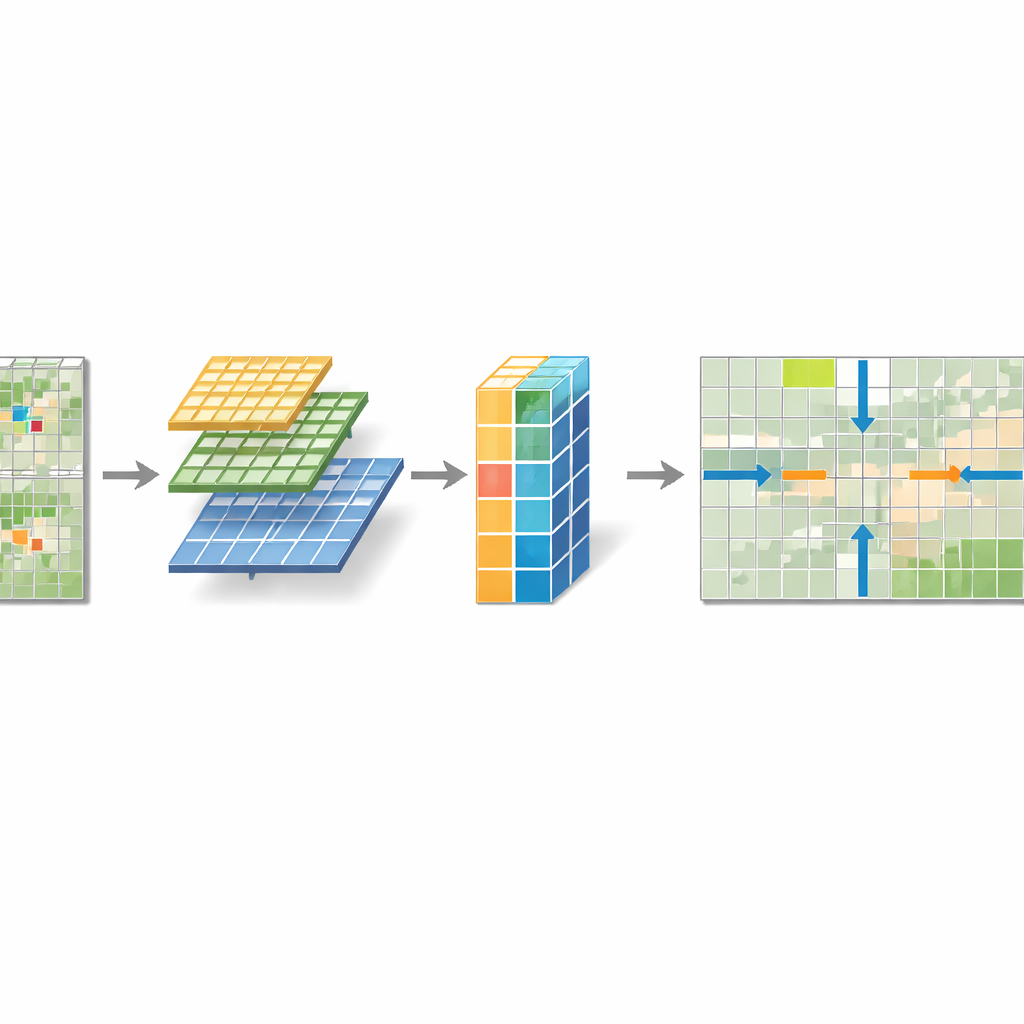

The researchers start from a popular real‑time detector called YOLOv5 and redesign key parts to create GSS‑YOLO. They introduce three main building blocks that work together. First, a Shallow‑Deep Information Aggregation (SIA) module blends information from small and slightly larger neighborhoods in the image, helping the network combine fine edges with broader context without bloating the model. Second, an SPD‑Conv block changes how the system reduces image size: instead of simply throwing away pixels during downsampling, it rearranges them so that fine details are preserved in extra channels before gentle compression. Third, a Global Context‑Aware Module (GCAM) sits just before the final detector and looks across the whole image to highlight positions that are likely to contain small targets while toning down background clutter.

How the new modules work together

SIA tackles a core weakness of many vision networks: ordinary convolutions only see local patches and struggle with global context. By running parallel filters that look at slightly different scales, then passing the result through lightweight layers that mix and regularize features, SIA produces richer descriptors of small objects without adding many parameters. SPD‑Conv addresses a different problem—information loss from aggressive downsampling. It slices the feature map into interleaved sub‑grids and stacks them depth‑wise, so that no pixel is discarded; a simple 1×1 filter then compacts this richer representation. GCAM adds a global “spotlight” effect. It pools information separately along horizontal and vertical directions to keep track of rows and columns where tiny objects appear, and combines this with a streamlined attention mechanism over channels. The result is a multi‑dimensional mask that strengthens signals at likely target locations and suppresses confusing textures elsewhere.

Putting the model to the test

To see whether these ideas translate into real‑world gains, the team evaluated GSS‑YOLO on three demanding datasets. USOD contains dim, ultra‑small grayscale targets in low‑light scenes; VisDrone2019 offers busy urban scenes shot from drones, full of tiny pedestrians and vehicles; and DIOR is a diverse satellite collection featuring airplanes, bridges, ships, sports fields, and more. Across all three, GSS‑YOLO consistently achieved higher precision, recall, and average detection quality than a suite of modern competitors, including recent YOLO versions and several specialized small‑object models. On the USOD dataset, for example, it not only delivered the best accuracy but did so with the fewest parameters—about 5 million—and the highest processing speed, reaching hundreds of frames per second. Visual examples show it avoiding both missed detections and false alarms in crowded, cluttered scenes where other systems struggle.

What this means for everyday applications

For non‑experts, the key message is that GSS‑YOLO makes it more feasible to run sharp‑eyed detection on small, hard‑to‑see targets directly on drones, satellites, or other compact devices, without relying on massive data centers. By better preserving fine image details and using global context to guide attention, the model turns faint specks into confidently recognized objects. While it can still fail under extreme conditions—such as when most of a target is hidden or motion blur is severe—the work marks a practical step toward real‑time, wide‑area monitoring for traffic management, environmental observation, security, and emergency response, where seeing tiny details quickly can make a big difference.

Citation: Wu, Z., Li, N., Tian, Z. et al. A lightweight feature fusion network for weak and small target detection in remote sensing. Sci Rep 16, 13295 (2026). https://doi.org/10.1038/s41598-026-43560-2

Keywords: remote sensing, small object detection, lightweight neural network, drone imagery, Earth observation