Clear Sky Science

Transparent AI for mathematics: transformer-based large language models for mathematical entity relationship extraction with XAI

Why turning word problems into data matters

Many of us remember struggling with word problems in math class: stories about mangoes, teams, or packets of chocolates that secretly describe equations. Computers find these problems even harder, because they must recognize which numbers are involved and how they are connected. This paper shows how modern language technology can read such everyday math stories, figure out the hidden operation—addition, division, or even square roots—and, crucially, explain why it reached that conclusion. The result is a step toward smarter, more transparent tools for education and scientific work.

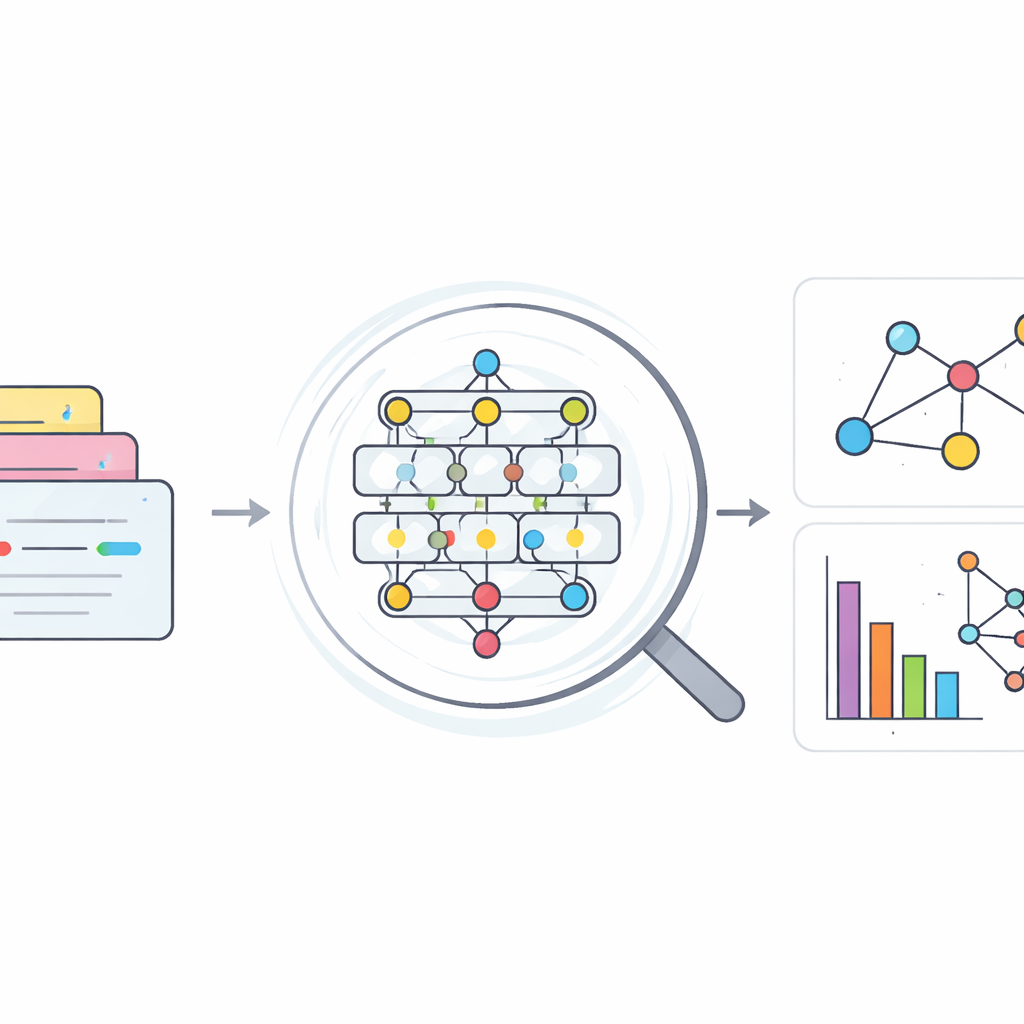

From stories to structured math

At the heart of this study is the idea that each math word problem can be seen as a small network of "things" and "links." The things are the quantities (like "twelve mangoes" or "three children"), and the link is the operation that connects them (such as dividing, adding, or taking a square root). The author treats these problems as an "entity–relationship" task: the numbers are entities, and the operation is the relationship between them. By turning messy sentences into this simple structure, computers can move closer to how humans internally organize a math problem before solving it. This structure can also power search tools, knowledge maps, and automatic helpers that understand mathematical text in textbooks or research articles.
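
To make the "things and links" idea concrete, here is a minimal Python sketch of how one labeled problem could be stored as a record. The class and field names (`Operation`, `MathRelation`, `as_triple`) are hypothetical and are not taken from the paper. The sketch also assumes that one-operand operations such as square root leave the second quantity empty; the study's actual schema may differ.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Operation(Enum):
    """The six relation labels named in the study."""
    ADDITION = "addition"
    SUBTRACTION = "subtraction"
    MULTIPLICATION = "multiplication"
    DIVISION = "division"
    FACTORIAL = "factorial"
    SQUARE_ROOT = "square root"


@dataclass(frozen=True)
class MathRelation:
    """One labeled word problem: quantities (entities) plus the operation linking them.

    Hypothetical schema for illustration only.
    """
    text: str
    first: str              # first quantity phrase, e.g. "twelve mangoes"
    second: Optional[str]   # assumption: None for one-operand operations
    relation: Operation

    def as_triple(self):
        """Return a (head, relation, tail) triple, the usual shape for knowledge graphs."""
        return (self.first, self.relation.value, self.second)


example = MathRelation(
    text="Twelve mangoes are divided equally among three children.",
    first="twelve mangoes",
    second="three children",
    relation=Operation.DIVISION,
)
root_example = MathRelation(
    text="Find the square root of eighty-one.",
    first="eighty-one",
    second=None,
    relation=Operation.SQUARE_ROOT,
)
print(example.as_triple())       # ('twelve mangoes', 'division', 'three children')
print(root_example.as_triple())  # ('eighty-one', 'square root', None)
```

Storing each problem as a triple is what lets the same records feed a search index or a knowledge map, since every problem then has the same three slots.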

Building a dataset of everyday math stories

To train an artificial intelligence system to recognize these relationships, the study first needed examples. The author assembled a new dataset by combining two earlier collections of Bangla and English mathematical texts. From these sources, the author selected English sentences and carefully identified number phrases like "five thousand and forty" as single mathematical entities, not just scattered words. Each problem was matched with its corresponding equation to label the relationship between two key quantities as one of six basic operations: addition, subtraction, multiplication, division, factorial, or square root. After removing duplicates, cleaning stray symbols, and simplifying the language through steps like stop-word removal and lemmatization, the final dataset contained 3,284 distinct math statements with clearly marked entities and relationships.
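
The cleaning steps can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the author's pipeline: the tiny stop-word list, the lemma lookup table (standing in for a real lemmatizer such as those in NLTK or spaCy), and the helper names are all hypothetical. Number phrases are merged into one token before stop words are removed, so that the "and" inside "five thousand and forty" survives as part of the entity.

```python
import re

# Number words that may form one multi-word quantity (illustrative, not exhaustive).
NUMBER_WORDS = {
    "zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
    "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
    "sixteen", "seventeen", "eighteen", "nineteen", "twenty", "thirty",
    "forty", "fifty", "sixty", "seventy", "eighty", "ninety",
    "hundred", "thousand", "million",
}
# Hypothetical, deliberately tiny stop-word list; cue words like "each" are kept.
STOP_WORDS = {"a", "an", "the", "and", "are", "is", "was", "of", "to"}
# Hypothetical lookup table standing in for a real lemmatizer.
LEMMAS = {"mangoes": "mango", "children": "child", "divided": "divide",
          "packets": "packet", "chocolates": "chocolate"}


def tokenize(text):
    """Replace stray symbols with spaces, lowercase, and split on whitespace."""
    return re.sub(r"[^A-Za-z0-9\s]", " ", text).lower().split()


def merge_number_phrases(tokens):
    """Join runs such as 'five thousand and forty' into a single entity token."""
    merged, run = [], []
    for i, tok in enumerate(tokens):
        nxt = tokens[i + 1] if i + 1 < len(tokens) else None
        # "and" only counts as part of a number when it sits between number words.
        joins = tok == "and" and bool(run) and nxt in NUMBER_WORDS
        if tok in NUMBER_WORDS or joins:
            run.append(tok)
            continue
        if run:
            merged.append("_".join(run))
            run = []
        merged.append(tok)
    if run:
        merged.append("_".join(run))
    return merged


def preprocess(text):
    """Tokenize, merge number phrases, drop stop words, then lemmatize."""
    tokens = merge_number_phrases(tokenize(text))
    kept = [t for t in tokens if t not in STOP_WORDS]
    return " ".join(LEMMAS.get(t, t) for t in kept)


def build_dataset(raw_texts):
    """Preprocess every text and keep only the first copy of each cleaned statement."""
    seen, dataset = set(), []
    for text in raw_texts:
        cleaned = preprocess(text)
        if cleaned and cleaned not in seen:
            seen.add(cleaned)
            dataset.append(cleaned)
    return dataset


print(preprocess("A shop sold five thousand and forty packets of chocolates!"))
# shop sold five_thousand_and_forty packet chocolate
```

A rule this simple would also glue "five and six" into a single token, so a real pipeline would need more context to split coordinated numbers correctly. The stop-word list here leaves out cue words such as "each" and "from" by design of the sketch; the summary does not say which list the study used.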

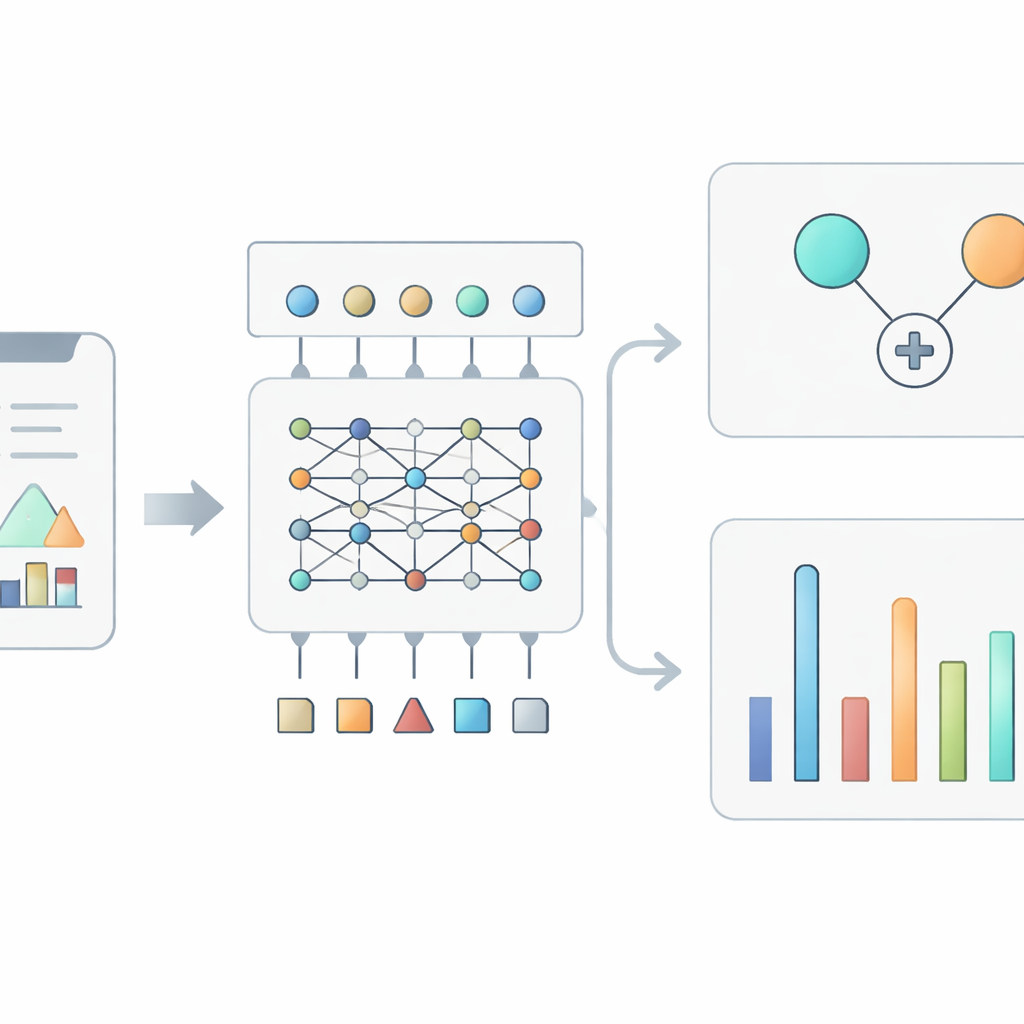

Teaching transformers to read math problems

With the dataset prepared, the next step was to test powerful transformer-based language models—the same family of models that has transformed modern natural language processing. Several contenders were evaluated: BERT, ELECTRA, RoBERTa, ALBERT, DistilBERT, and XLNet. Each model received the cleaned text and was fine-tuned so that its output was not a full solution to the problem, but simply the type of relationship connecting the two main quantities. Evaluation used common measures such as accuracy and F1 scores on held-out test data. BERT emerged as the clear winner, correctly identifying the relationship in almost every case, with an accuracy of 99.39%. The learning curves showed that the model improved steadily without overfitting, and a confusion matrix revealed only a handful of mix-ups, mostly between similar operations like addition and subtraction or division and multiplication.
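
Accuracy, F1, and the confusion matrix can all be read off a model's predictions on the test set. The sketch below computes them in plain Python for a handful of made-up predictions (not the study's data). It assumes macro-averaged F1, the unweighted mean of per-class F1 over the classes that occur; the summary does not say which averaging the study reported. The function names are illustrative.

```python
from collections import Counter

LABELS = ["addition", "subtraction", "multiplication",
          "division", "factorial", "square root"]


def accuracy(y_true, y_pred):
    """Fraction of test problems whose relation label was predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over the classes present in y_true or y_pred."""
    pairs = list(zip(y_true, y_pred))
    scores = []
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)


def confusion_matrix(y_true, y_pred, labels=LABELS):
    """Rows are true labels, columns are predicted labels, cells are counts."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in labels] for t in labels]


# Made-up predictions for illustration only.
y_true = ["addition", "subtraction", "division", "division", "multiplication"]
y_pred = ["addition", "addition", "division", "division", "multiplication"]
print(accuracy(y_true, y_pred))            # 0.8
print(round(macro_f1(y_true, y_pred), 3))  # 0.667
```

The single subtraction problem mislabeled as addition costs only one point of accuracy but drags macro F1 down sharply, because the subtraction class scores zero and counts as much as any other class. In practice, `accuracy_score`, `f1_score(..., average="macro")`, and `confusion_matrix` from scikit-learn's `sklearn.metrics` compute the same quantities.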

Opening the black box with explanations

High accuracy alone is not enough when AI systems are expected to support learning or research. Users need to understand why a particular prediction was made. To address this, the author applied SHAP (SHapley Additive exPlanations), a popular method from explainable AI, to the trained BERT model. SHAP assigns each word in a sentence a contribution score that reflects how much it pushes the model toward or away from a specific operation. By feeding unseen math problems through a SHAP explainer, the study produced visualizations in which words supporting the predicted operation appear as positive contributions and misleading ones as negative contributions. For example, words such as "divided," "equally," and "each" strongly support a division prediction, while contextual words that do not point to division reduce that confidence. Across many examples, the analysis showed that the model relied more on operation-indicating words than on the raw numbers themselves, mirroring how humans skim for cues like "total," "from," or "square root" when interpreting a problem.
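
SHAP's word scores are based on Shapley values from game theory: a word's score is its average change in the model's output when it is added to every possible subset of the other words, weighted by the standard Shapley coefficients. The brute-force sketch below computes these values exactly for a toy scorer. The scorer, its cue-word weights, and the bonus for "divided" together with "equally" are invented for illustration and stand in for the fine-tuned BERT model. Exact enumeration needs on the order of 2^n evaluations for n words, which is why libraries such as SHAP approximate the values rather than enumerating them.

```python
from itertools import combinations
from math import factorial

# Invented cue weights for a toy "division score"; not learned and not from the paper.
BASE = 0.1
CUE_WEIGHTS = {"divided": 0.4, "equally": 0.2, "each": 0.3, "total": -0.3}
PAIR_BONUS = 0.2  # extra evidence when "divided" and "equally" appear together


def make_toy_division_score(words):
    """Return f(kept): the toy score when only the word positions in `kept` are visible."""
    def score(kept):
        present = sorted({words[i] for i in kept})  # sorted for a deterministic sum
        value = BASE + sum(CUE_WEIGHTS.get(w, 0.0) for w in present)
        if "divided" in present and "equally" in present:
            value += PAIR_BONUS
        return value
    return score


def shapley_values(n, value_fn):
    """Exact Shapley value of each of n positions, by enumerating all coalitions."""
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # k = size of the coalition that excludes i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


words = ["total", "twelve", "mangoes", "divided", "equally", "each", "child"]
score = make_toy_division_score(words)
phi = shapley_values(len(words), score)
for word, contribution in zip(words, phi):
    print(f"{word:>8} {contribution:+.2f}")
```

The scores are additive: they sum to the score for the full sentence minus the score with every word hidden (here 0.9 minus 0.1). By construction, "divided" receives the largest positive share, the pair bonus is split evenly between "divided" and "equally", the numbers contribute nothing, and "total" comes out negative, the same qualitative pattern the study reports for its real model.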

What this means for future math tools

In plain terms, this work demonstrates that a modern language model can be trained to read short math stories and almost perfectly identify the core operation tying the numbers together, while also showing its reasoning in a human-readable way. By combining careful dataset construction, a strong transformer model, and SHAP-based explanations, the study offers a transparent framework for turning narrative math problems into structured relationships. This approach could underpin future systems that not only solve problems, but also highlight the key phrases that matter, support automated checking of mathematical texts, and build rich, navigable maps of mathematical knowledge for students and researchers alike.

Citation: Aurpa, T.T. Transparent AI for mathematics: transformer-based large language models for mathematical entity relationship extraction with XAI. Sci Rep 16, 13038 (2026). https://doi.org/10.1038/s41598-026-43507-7

Keywords: math word problems, transformer language models, explainable AI, entity relation extraction, SHAP explanations