Clear Sky Science

MetaCAM as an ensemble-based class activation mapping framework improves model explainability

Why seeing inside AI decisions matters

Modern artificial intelligence can spot tumors, recognize faces, and classify photos with superhuman accuracy, yet often cannot explain why it reached a particular decision. This “black box” behavior is troubling in high‑stakes settings like medicine or self‑driving cars, where people need to know which parts of an image actually influenced a prediction. This paper introduces MetaCAM, a new way to combine many existing visualization techniques into a single, clearer picture of what an image‑recognizing neural network is looking at, aiming to make AI decisions more transparent and trustworthy.

How machines show what they “look at”

Convolutional neural networks, the workhorses of image recognition, process pictures through many layers and then output a label such as “cat” or “tumor.” Class Activation Maps (CAMs) were created so we could peek inside these networks. A CAM overlays a heatmap on the original image, highlighting regions that contributed most to a chosen prediction. Over the years, many CAM variants have appeared, differing in how they use gradients, feature maps, or image perturbations to estimate importance. However, their performance is inconsistent: a method that works well for one image, class, or model can perform poorly for another, and there is no universal agreement on which CAM is “best.”
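To make the heatmap idea concrete, here is a minimal sketch of the simplest CAM recipe: a weighted sum of a network's final feature maps, using one weight per channel for the chosen class. The function name, the toy arrays, and the display conventions (clipping negative values and rescaling to the range 0 to 1) are assumptions made for illustration, not details taken from the paper.

```python
import numpy as np

def class_activation_map(feature_maps, class_weights):
    """Weighted sum of feature maps (channels, H, W), clipped at zero and scaled to [0, 1]."""
    cam = np.tensordot(class_weights, feature_maps, axes=1)  # result has shape (H, W)
    cam = np.maximum(cam, 0.0)  # keep only positive evidence for the class (assumed convention)
    peak = cam.max()
    return cam / peak if peak > 0 else cam

# Toy input: two 3x3 feature maps, each firing at a single location.
feature_maps = np.zeros((2, 3, 3))
feature_maps[0, 0, 0] = 2.0  # channel 0 responds in the top-left corner
feature_maps[1, 2, 2] = 4.0  # channel 1 responds in the bottom-right corner

# Hypothetical class weights: channel 0 supports the class, channel 1 argues against it.
class_weights = np.array([1.0, -0.5])

heatmap = class_activation_map(feature_maps, class_weights)
print(heatmap)
```

In this toy case only the top-left corner lights up, because the other channel carries a negative weight for the chosen class. Later CAM variants mainly differ in how they arrive at the per-channel weights.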

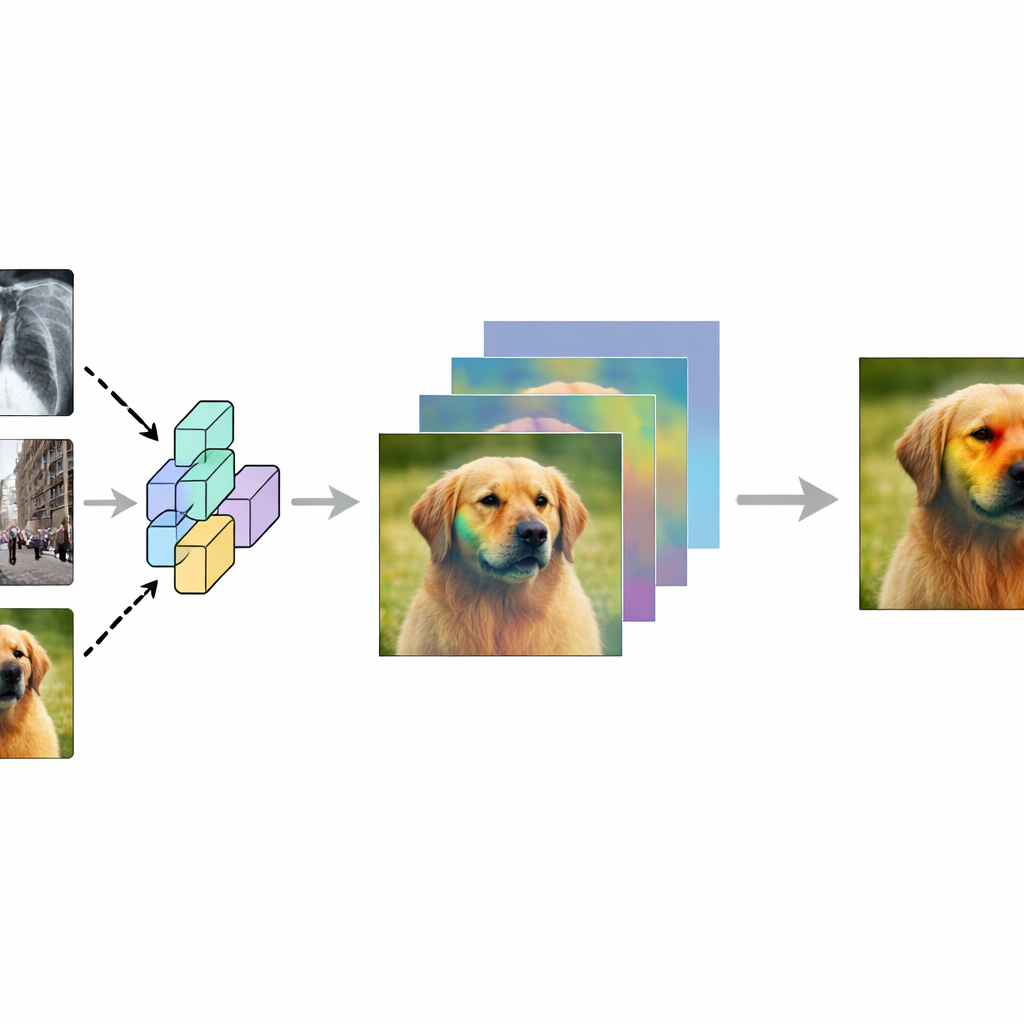

A team approach to clearer heatmaps

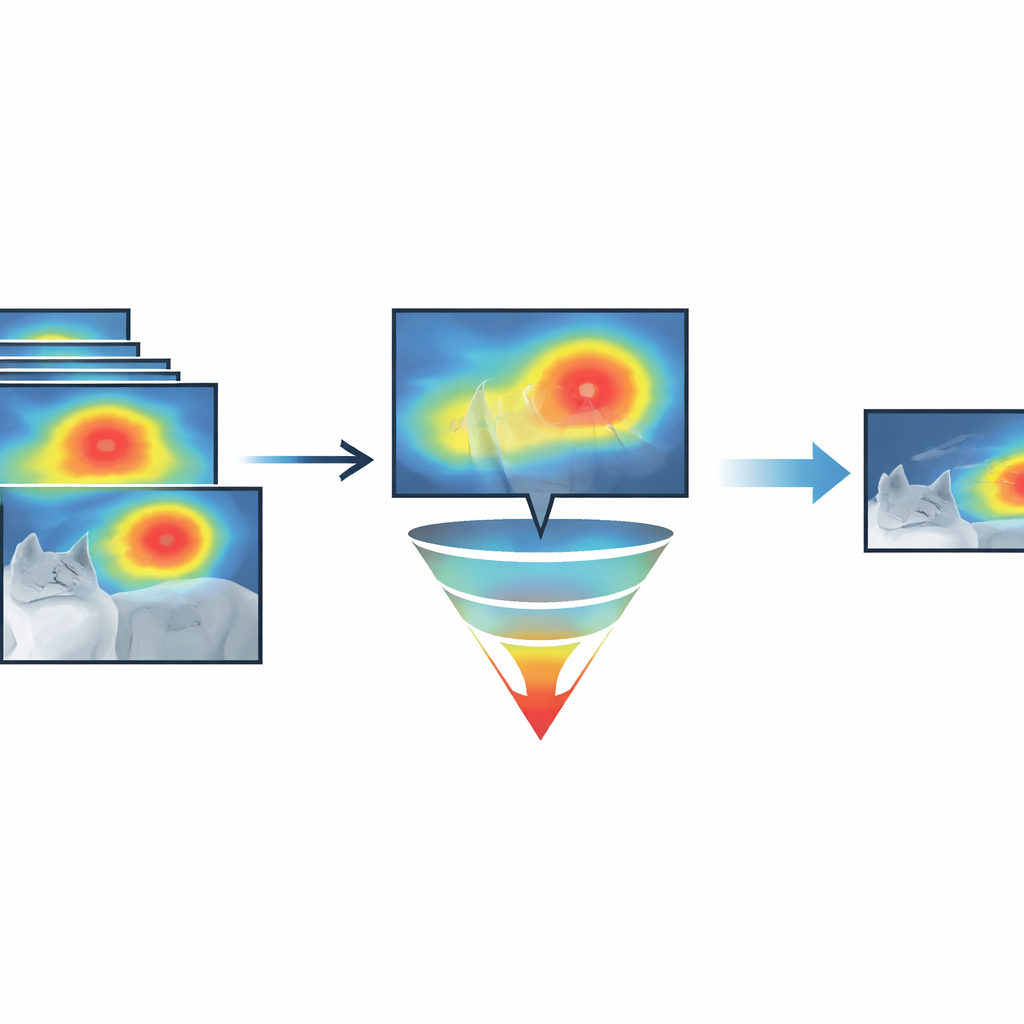

The authors propose MetaCAM, which treats different CAM methods like members of a committee rather than rivals. Instead of trusting a single heatmap, MetaCAM starts by generating several maps for the same image and target class using diverse techniques, including gradient‑based methods, perturbation‑based methods, and even related tools like FullGrad. It then looks for consensus: pixels that are repeatedly highlighted across methods are considered more reliable. By keeping only the top percentage of pixels that receive the strongest, most consistent attention across the ensemble, MetaCAM produces a refined heatmap that is less influenced by the quirks or failures of any single method.
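The committee idea can be sketched in a few lines. The version below rescales each map to a common range, averages them, and keeps only the top k percent of pixels. The averaging rule, the function names, and the toy maps are assumptions chosen for illustration; the paper's exact consensus formula may differ.

```python
import numpy as np

def normalize(cam):
    """Min-max scale a heatmap to [0, 1]; a constant map becomes all zeros."""
    cam = np.asarray(cam, dtype=float)
    span = cam.max() - cam.min()
    if span == 0:
        return np.zeros_like(cam)
    return (cam - cam.min()) / span

def keep_top_percent(cam, k):
    """Zero out every pixel below the (100 - k)th percentile of the map."""
    cutoff = np.percentile(cam, 100 - k)
    return np.where(cam >= cutoff, cam, 0.0)

def consensus_map(cams, k=30.0):
    """Average the normalized maps (an assumed consensus rule), then keep the top k percent."""
    mean_map = np.mean([normalize(c) for c in cams], axis=0)
    return keep_top_percent(mean_map, k)

# Three toy 4x4 maps: two agree on the top-left block, one points elsewhere.
a = np.zeros((4, 4)); a[0:2, 0:2] = 1.0
b = np.zeros((4, 4)); b[0:2, 0:2] = 0.8
c = np.zeros((4, 4)); c[2:4, 2:4] = 1.0  # the dissenting map

result = consensus_map([a, b, c], k=25.0)
print(result)
```

Here the region backed by two of the three maps survives, while the lone dissenter's region is filtered out. When many pixels share the same value, a percentile cutoff can keep slightly more than k percent of them.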

Picking only the most convincing pixels

A key innovation is “adaptive thresholding,” which decides how many of the most active pixels to keep. Rather than always highlighting, say, the top 30 percent of pixels, the authors systematically test different cutoffs and choose the one that best matches how the network’s predictions change when those pixels are gently blurred. They rely on a quantitative test called Remove and Debias (ROAD), which measures how much the model’s confidence drops when its most “important” pixels are perturbed and how little it changes when the least important pixels are disturbed. By scanning across many thresholds, MetaCAM finds the level that gives the strongest separation between truly crucial and largely irrelevant regions, and this same idea can also sharpen individual CAM methods on their own.
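The threshold scan can be illustrated with a toy example. The sketch below is a simplified stand-in for ROAD rather than the metric itself: it overwrites pixels with the image mean instead of using ROAD's imputation scheme, and it scores each candidate cutoff by the gap between the model's confidence after disturbing the least important pixels and after disturbing the most important ones. The toy classifier, the image, the heatmap, and all function names are hypothetical.

```python
import numpy as np

def perturb(image, mask):
    """Overwrite masked pixels with the image mean (a crude stand-in for ROAD's imputation)."""
    out = image.copy()
    out[mask] = image.mean()
    return out

def separation_score(model, image, cam, k):
    """Half the gap between confidence after perturbing the least vs. the most important k percent."""
    most = cam >= np.percentile(cam, 100 - k)
    least = cam <= np.percentile(cam, k)
    return (model(perturb(image, least)) - model(perturb(image, most))) / 2

def best_threshold(model, image, cam, candidates):
    """Scan the candidate percentages and return the one with the largest separation."""
    scores = {k: separation_score(model, image, cam, k) for k in candidates}
    return max(scores, key=scores.get), scores

# Toy 4x4 image: a bright 2x2 object on a dark background.
image = np.zeros((4, 4))
image[1:3, 1:3] = 1.0
object_mask = image > 0.5

def toy_model(img):
    """Hypothetical classifier: confident when the object is bright and the background is dark."""
    return img[object_mask].mean() * (1.0 - img[~object_mask].mean())

# Toy heatmap with 16 distinct values that ranks the four object pixels highest.
cam = np.arange(16, dtype=float).reshape(4, 4) / 100.0
cam[object_mask] += 1.0

best_k, scores = best_threshold(toy_model, image, cam, candidates=(10, 25, 50, 90))
print(best_k, scores)
```

In this toy setup the scan settles on 25 percent, which matches the object's share of the image: a smaller cutoff disturbs only part of the object, while a larger one sweeps in background pixels or lets object pixels slip into the "least important" set.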

Discovering which methods help, and which surprisingly do too

To understand which ingredients make the best MetaCAM, the researchers ran large‑scale experiments that turned groups of CAM methods on or off in different combinations. Using high‑performance computing, they evaluated 64 such combinations across many images, classes, and neural network architectures. They then introduced a summary statistic called the Cumulative Residual Effect, which captures how much each group tends to raise or lower performance relative to the median experiment. Surprisingly, even “bad” or noisy maps—such as a CAM that often highlights the wrong object, or a map made from random noise—can sometimes improve MetaCAM, because their presence forces the consensus to tighten around pixels that multiple methods agree on, reducing spurious highlights elsewhere.
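One plausible reading of this statistic can be sketched as follows. The count of 64 is consistent with six groups that are each switched on or off (2 to the power 6), so the sketch assumes six groups. It also assumes that a group's effect is the sum, over every experiment that includes the group, of that experiment's score minus the median score. The group names, the synthetic scores, and the formula itself are assumptions for illustration and may not match the paper's definition.

```python
from itertools import product
from statistics import median

GROUPS = ["A", "B", "C", "D", "E", "F"]  # hypothetical placeholder names for six method groups

def all_experiments(groups):
    """Every on/off assignment over the groups: 2**6 = 64 combinations."""
    return [dict(zip(groups, flags)) for flags in product([False, True], repeat=len(groups))]

def cumulative_residual_effect(experiments, scores):
    """Per group: sum of (score - median score) over the experiments where the group is on."""
    mid = median(scores)
    effect = {group: 0.0 for group in experiments[0]}
    for config, score in zip(experiments, scores):
        for group, enabled in config.items():
            if enabled:
                effect[group] += score - mid
    return effect

def synthetic_score(config):
    """Made-up scores: group A helps, group B hurts, the other groups do nothing."""
    return 0.5 + 0.10 * config["A"] - 0.05 * config["B"]

experiments = all_experiments(GROUPS)
scores = [synthetic_score(config) for config in experiments]
effect = cumulative_residual_effect(experiments, scores)
print(effect)
```

With these made-up scores, group A ends up with a positive total, group B with a negative one, and the remaining groups land near zero, which is the kind of ranking such a statistic is meant to surface.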

What this means for safer, more transparent AI

Across a wide variety of test images and models, MetaCAM, with adaptive thresholding, consistently outperformed all individual visualization methods according to the ROAD metric. In practical terms, its heatmaps more precisely mark the regions that actually drive a network’s decision, while filtering out much of the clutter and misdirection seen in single‑method maps. For fields like medical imaging, autonomous vehicles, or biometric screening—where understanding why a system said “yes” or “no” can be as important as the answer itself—MetaCAM offers a more stable, evidence‑based window into AI reasoning, helping experts judge whether a model is focusing on the right features before they trust it in the real world.

Citation: Dick, K., Kaczmarek, E., Miguel, O.X. et al. MetaCAM as an ensemble-based class activation mapping framework improves model explainability. Sci Rep 16, 10613 (2026). https://doi.org/10.1038/s41598-026-42879-0

Keywords: explainable AI, class activation maps, deep learning, model interpretability, ensemble methods