Clear Sky Science · en

Zero-shot performance of selected large language and multimodal models on the 2023 Brazilian Portuguese medical residency exam

Why this matters for doctors and patients

Artificial intelligence is quickly moving into hospitals and clinics, but most tests of these systems are done in English. This study asked a simple, high‑stakes question: how well do today’s large AI models handle real medical exam questions written in Brazilian Portuguese, including those that use images such as X‑rays? The answer helps doctors, educators, and policymakers judge whether these tools are ready to assist care in countries where English is not the main language.

Putting AI through a real medical entrance exam

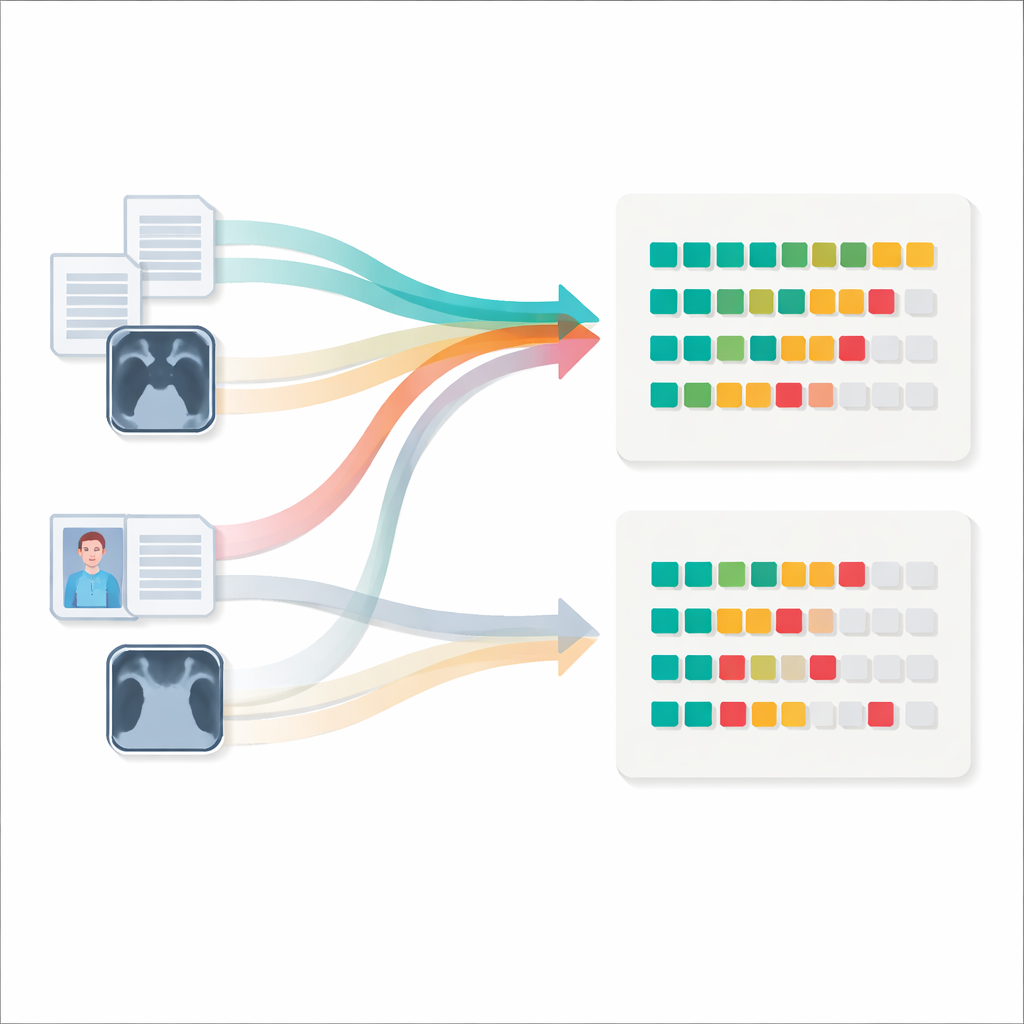

The researchers took the 2023 medical residency entrance exam from one of Brazil’s leading teaching hospitals, an exam that thousands of young doctors sit each year. It contains 117 multiple‑choice questions covering internal medicine, surgery, pediatrics, gynecology and obstetrics, and public health. Most questions are text only, but more than a third include images such as radiology scans, clinical photos, and diagnostic tracings. Six text‑only AI models and four multimodal models that can also see images were challenged to answer the exam in a “zero‑shot” setting: they were given no prior examples or fine‑tuning specific to this test, only standard instructions to pick an answer and explain their reasoning.
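A zero‑shot run of this kind can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the prompt wording, the `Answer: <letter>` convention, the option letters A to E, and the function names `build_zero_shot_prompt`, `extract_choice`, and `accuracy` are all assumptions made here for clarity.

```python
import re
from typing import Dict, List, Optional


def build_zero_shot_prompt(question: str, options: Dict[str, str]) -> str:
    """Format one multiple-choice question with no worked examples (zero-shot).

    The instruction text is hypothetical; the study's exact wording is not
    given in this summary.
    """
    lines = [
        "Answer the following medical exam question.",
        "Pick exactly one option and explain your reasoning.",
        "Finish with a line of the form 'Answer: <letter>'.",
        "",
        question,
    ]
    # List options in alphabetical order of their letters.
    lines += [f"{letter}) {text}" for letter, text in sorted(options.items())]
    return "\n".join(lines)


def extract_choice(reply: str) -> Optional[str]:
    """Pull the chosen letter out of a model reply, or None if it is missing."""
    match = re.search(r"Answer:\s*([A-E])\b", reply)
    return match.group(1) if match else None


def accuracy(replies: List[str], answer_key: List[str]) -> float:
    """Fraction of replies whose extracted letter matches the official key.

    Replies with no parseable answer count as wrong.
    """
    correct = sum(extract_choice(r) == k for r, k in zip(replies, answer_key))
    return correct / len(answer_key)
```

Counting unparseable replies as wrong is one possible design choice; a real evaluation might instead re-prompt the model or report such cases separately.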

How smart were the models on written questions?

On text‑only questions, performance ranged widely. The weakest system got just over one in five questions right, while the best models answered roughly seven out of ten correctly. The Claude family of models topped the chart, with scores around 70 percent, slightly above GPT‑4.0 Turbo and clearly ahead of several open‑source and commercial competitors. One large open‑source model, with many billions of parameters, nevertheless came close to the leaders, hinting that strong performance need not be limited to proprietary systems. When the researchers compared these AI scores with the distribution of human candidates’ grades, the best models clustered near the middle of the applicant pool: not star students, but roughly on par with an average new doctor taking the exam.
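One simple way to place a model's score within the human distribution is a percentile rank. The sketch below is only an illustration of that idea: the function name `percentile_rank` and the half‑weight treatment of ties are assumptions, and the summary does not say how the authors made the comparison.

```python
from bisect import bisect_left, bisect_right
from typing import List


def percentile_rank(model_score: float, human_scores: List[float]) -> float:
    """Percent of candidates scoring below the model, counting ties as half."""
    ordered = sorted(human_scores)
    below = bisect_left(ordered, model_score)          # strictly lower scores
    ties = bisect_right(ordered, model_score) - below  # identical scores
    return 100.0 * (below + 0.5 * ties) / len(ordered)
```

A result near 50 would correspond to the "middle of the applicant pool" described above, while a value near 100 would mark a star student.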

Images still trip up today’s AI

Things changed when images were added. For the four multimodal models tested, accuracy dropped once image‑based questions were included, often falling below 50 percent correct, especially for radiology‑heavy items. Only the most advanced model maintained almost the same score on mixed text‑and‑image questions as on text alone. Across domains, the systems did best in public health and pediatrics, and worst in radiology and other image‑focused questions, suggesting that current training data and model design favor written material over medical pictures. Clinicians involved in the study did not feel that the image questions were inherently harder for humans, but the available data did not allow a direct, question‑by‑question human comparison, so it remains unclear how much of the performance gap comes from image reasoning versus question difficulty.

Peeking inside the explanations

To move beyond right‑or‑wrong scoring, the team asked three experienced physicians to review the explanations produced by one multimodal model. They judged whether the AI had interpreted the question correctly, whether its reasoning lined up with the chosen answer, and whether following its advice could harm a patient. For questions the model answered correctly, its explanations were usually coherent and considered safe. For questions it missed, however, misleading or invented reasoning (often called hallucinations) was common. The doctors sometimes disagreed on which explanations were problematic, reflecting the inherent gray areas of medical judgment, but they agreed more when the AI’s answer was clearly wrong and potentially unsafe.
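Agreement among several reviewers is often summarized with a chance‑corrected statistic. The summary does not say which measure the authors used; the sketch below assumes Fleiss' kappa, a common choice when a fixed number of raters (here three) label every item. The function name and the label strings in the usage note are hypothetical.

```python
from collections import Counter
from typing import List


def fleiss_kappa(ratings: List[List[str]]) -> float:
    """Fleiss' kappa for items that were each labeled by the same number of raters.

    `ratings[i]` holds the labels given to item i, one per rater.
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    counts = [Counter(item) for item in ratings]
    categories = {label for item in ratings for label in item}

    # Observed agreement: share of rater pairs that agree, averaged over items.
    per_item = [
        (sum(v * v for v in c.values()) - n_raters) / (n_raters * (n_raters - 1))
        for c in counts
    ]
    observed = sum(per_item) / n_items

    # Expected agreement if raters labeled at random with the overall label rates.
    shares = [sum(c[cat] for c in counts) / (n_items * n_raters) for cat in categories]
    expected = sum(s * s for s in shares)

    if expected == 1.0:  # every rating used the same label
        return 1.0
    return (observed - expected) / (1.0 - expected)
```

For example, `fleiss_kappa([["safe", "safe", "safe"], ["unsafe", "unsafe", "unsafe"]])` describes perfect agreement, while values near or below zero indicate agreement no better than chance.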

What this means for AI in everyday care

Overall, the study shows that today’s large AI models can approach average human performance on a demanding medical exam written in Brazilian Portuguese, at least for text‑only questions. Yet they still struggle with medical images and can offer confident but faulty explanations that might mislead clinicians if used uncritically. The findings underline both the promise and the limits of current systems: they may become valuable assistants in Portuguese‑speaking healthcare, especially for reading and summarizing text, but they are not ready to replace trained doctors or to handle complex multimodal diagnosis without careful oversight and continued improvement.

Citation: Truyts, C.A.M., Rabelo, A.G., Souza, G.M.d. et al. Zero-shot performance of selected large language and multimodal models on the 2023 Brazilian Portuguese medical residency exam. Sci Rep 16, 11756 (2026). https://doi.org/10.1038/s41598-026-42829-w

Keywords: medical AI, large language models, Portuguese medicine, multimodal diagnostics, medical education