Clear Sky Science

Comparative analysis of large language models as decision support tools in oral pathology

Why smart chatbots in mouth medicine matter

Most people now carry powerful artificial intelligence in their pockets, packaged as friendly chatbots that answer questions in seconds. But can these tools safely help doctors interpret the tiny tissue changes that reveal whether a spot in the mouth is harmless or the start of something serious? This study asks exactly that, comparing four widely used chatbots to see how well they support specialists who diagnose diseases from microscope descriptions of oral tissues.

How the study put chatbots to the test

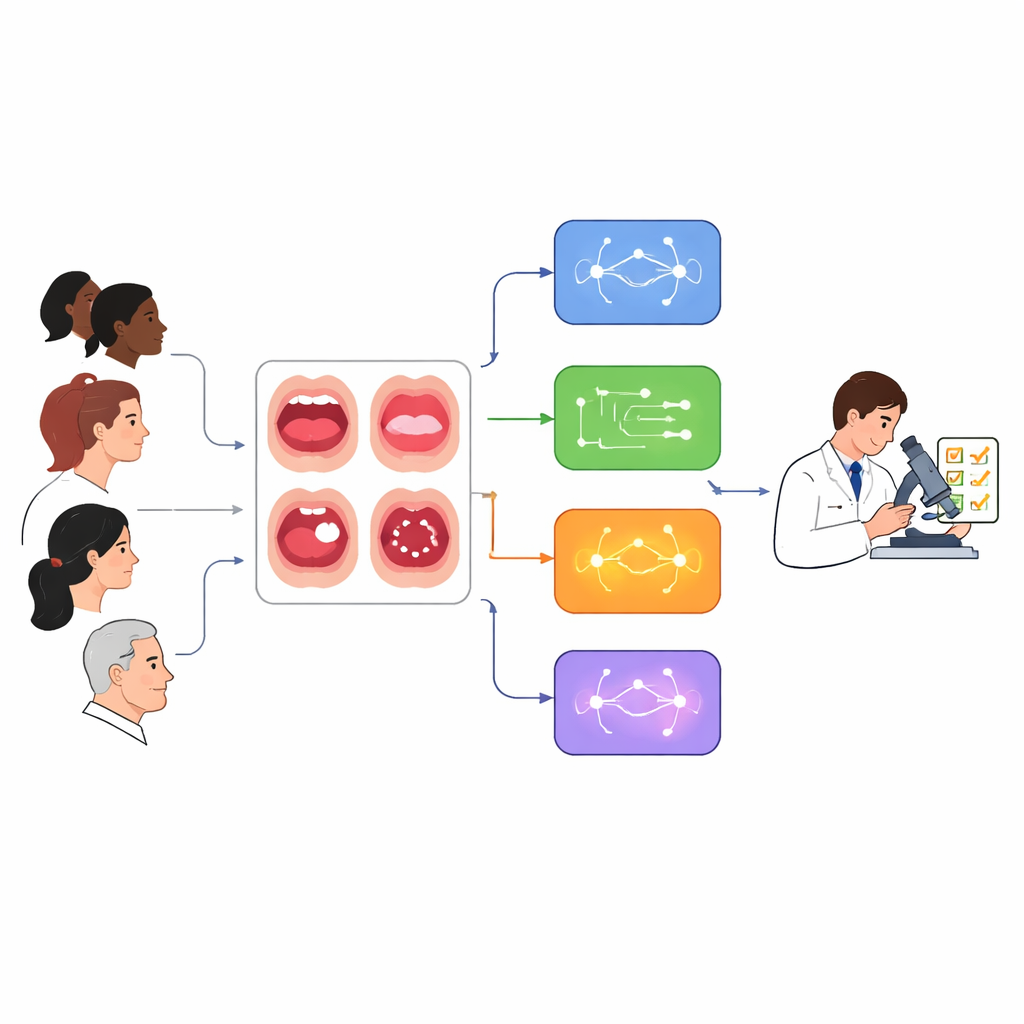

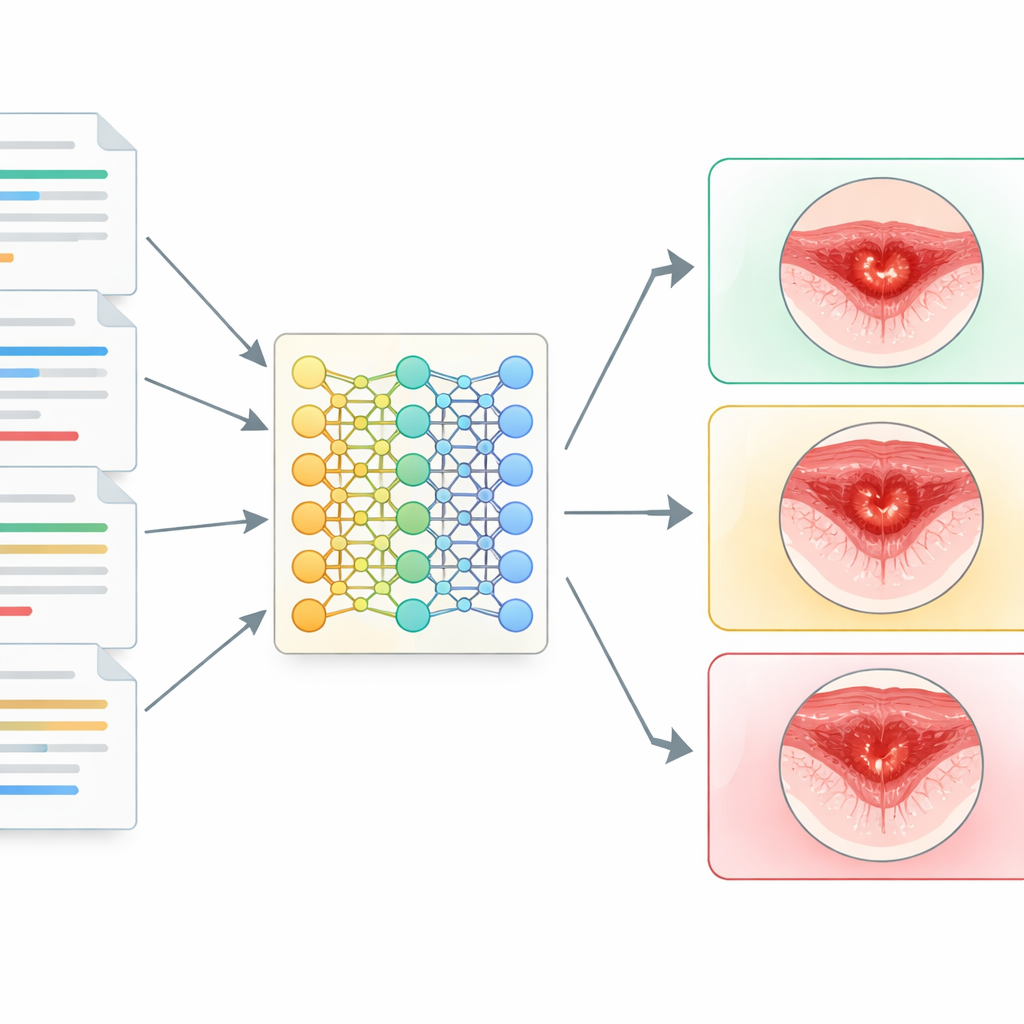

The researchers gathered 102 real-world reports describing what pathologists saw under the microscope in biopsies from the mouth and jaws. These reports covered a wide range of problems, from simple mucus-filled swellings and fibromas to potentially cancerous changes such as oral epithelial dysplasia and full-blown squamous cell carcinoma. For each case, the team fed the same text report, plus basic patient details like age, sex, and lesion location, into four chatbots: ChatGPT-4.0, the reasoning-focused ChatGPT o1-preview, Meta AI (based on LLaMA-3), and Google’s Gemini. Each chatbot was asked for one main diagnosis and three possible alternatives, mimicking how a clinician might seek a quick second opinion.

Scoring answers against human experts

Two certified oral pathologists, working independently and then in consensus, compared each chatbot’s main suggestion with the original diagnosis in the hospital record. They sorted the answers into three groups: clearly wrong; similar or partially right (for example, catching only part of a combined diagnosis or using different but clinically equivalent wording); or fully correct. The team also checked whether a chatbot that missed the main diagnosis might still list the right answer among its three alternatives. Using standard statistical methods, they compared how often each system agreed with the human experts and examined whether results shifted with patient age or sex.
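To make the scoring scheme concrete, here is a minimal Python sketch of how such graded answers could be tallied. It is an illustration and not the authors' code: the label names, the `ScoredCase` record, and the `summarize` helper are hypothetical, the toy data are invented, and treating only "clearly wrong" answers as misses is an assumption.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical labels for the three scoring groups described above.
WRONG, PARTIAL, CORRECT = "wrong", "partial", "correct"


@dataclass
class ScoredCase:
    grade: str             # consensus grade given to the chatbot's main diagnosis
    alternative_hit: bool  # True if one of the three alternatives was the right answer


def summarize(cases):
    """Tally grades and count misses that the alternatives would have caught."""
    n = len(cases)
    counts = Counter(case.grade for case in cases)
    # Assumption: a "miss" means the main diagnosis was graded clearly wrong.
    missed = [case for case in cases if case.grade == WRONG]
    rescued = sum(case.alternative_hit for case in missed)
    percent_correct = round(100 * counts.get(CORRECT, 0) / n, 1) if n else 0.0
    return {
        "n": n,
        "counts": {g: counts.get(g, 0) for g in (WRONG, PARTIAL, CORRECT)},
        "percent_correct": percent_correct,
        "missed": len(missed),
        "rescued_by_alternatives": rescued,
    }


# Invented toy data, NOT results from the study.
toy_cases = [
    ScoredCase(CORRECT, False),
    ScoredCase(PARTIAL, False),
    ScoredCase(WRONG, True),
    ScoredCase(WRONG, False),
]
summary = summarize(toy_cases)
print(summary)
```

Keeping the "partially right" group separate from both the correct and the wrong answers mirrors the three-way sorting described above, so a partially right answer is never silently counted as a hit or as a miss.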

Which chatbot came closest to specialists

The reasoning-focused ChatGPT o1-preview gave the most reliable support: its main diagnosis matched the experts in about two out of three cases (68.6 percent), with Meta AI close behind (65.7 percent). ChatGPT-4.0 performed moderately well (59.8 percent), while Gemini trailed with correct answers in only about a quarter of cases (27.5 percent). When agreement was measured more strictly, ChatGPT o1-preview and Meta AI reached what statisticians call “substantial” agreement with oral pathologists, ChatGPT-4.0 reached “moderate” agreement, and Gemini showed “poor” agreement. All chatbots were better at common, clearly defined benign problems like mucoceles and fibromas, and consistently struggled with trickier conditions such as oral epithelial dysplasia or rare lesions.
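The percentages above can be turned back into approximate case counts. The short Python sketch below does this under one assumption: that each percentage is the share of fully correct main diagnoses out of all 102 reports. The counts it produces are inferred from the rounded percentages and are not stated in this summary; the helper name `implied_count` is hypothetical.

```python
TOTAL_CASES = 102  # number of reports, as given in the article

# Percent of cases with a correct main diagnosis, as reported above.
reported_percent = {
    "ChatGPT o1-preview": 68.6,
    "Meta AI": 65.7,
    "ChatGPT-4.0": 59.8,
    "Gemini": 27.5,
}


def implied_count(percent, total=TOTAL_CASES):
    """Nearest whole number of cases consistent with a rounded percentage."""
    return round(percent * total / 100)


implied = {name: implied_count(p) for name, p in reported_percent.items()}

for name, count in implied.items():
    # Recompute the percentage from the inferred count as a consistency check.
    recomputed = round(100 * count / TOTAL_CASES, 1)
    print(f"{name}: about {count} of {TOTAL_CASES} cases ({recomputed} percent)")
```

Under that assumption, the figures correspond to roughly 70, 67, 61, and 28 correct cases, and each inferred count rounds back to the percentage reported above.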

Where the machines still fall short

Even when the chatbots were allowed to list three alternative diagnoses, they often failed to include the correct one; this was especially true of Gemini and Meta AI. The study also found that, for most models, performance dipped slightly in older patients, possibly because age-related tissue changes make the microscopic picture more complex. By contrast, none of the systems performed differently for men and women. The authors highlight several reasons for caution: the “black box” nature of commercial AI, unknown training data, uneven representation of rare diseases, and the fact that the chatbots saw only text descriptions without the microscope images that human pathologists routinely use.

What this means for future care

For lay readers, the key message is that today’s conversational AIs can sometimes echo expert judgment in oral pathology, but they are far from dependable enough to stand on their own. The best-performing chatbot matched specialists in roughly two cases out of three and did worse in the very situations where mistakes matter most: unusual or early-stage disease. The authors conclude that, at present, these tools should be used only as helpers that may support education, reduce workload, and offer a rough second look, never as replacements for trained pathologists. With better data, clearer oversight, and careful testing, such systems may one day become safer partners in diagnosis, but for now, human expertise remains essential.

Citation: Alvarez-Silberberg, V.I., Alvarez-Silberberg, C.P., Galletti, C. et al. Comparative analysis of large language models as decision support tools in oral pathology. Sci Rep 16, 11272 (2026). https://doi.org/10.1038/s41598-026-41533-z

Keywords: oral pathology, artificial intelligence, clinical decision support, large language models, digital dentistry