Clear Sky Science

The emergence of trust under conditions of distrust

Why trust online strangers at all?

Every day people send money, secrets, or game items to people they have never met, often without any company or platform stepping in to guarantee fair play. Common sense says this is risky, yet online communities, games, and marketplaces still run on countless small acts of trust. This study asks a deceptively simple question: when there are strong reasons to be suspicious, why and how does trust between strangers still emerge?

A dangerous game of give and take

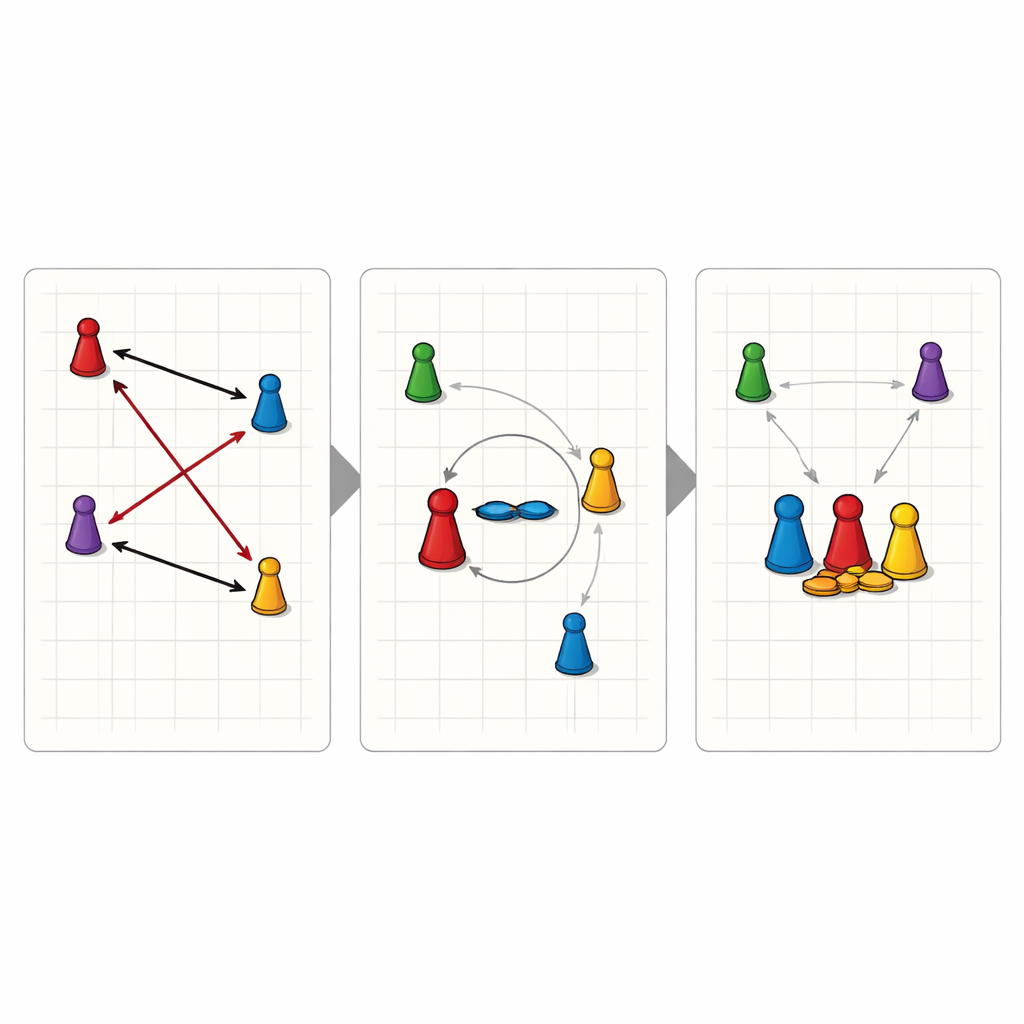

To explore this puzzle, the researcher built a custom online game inspired by social deduction titles like Mafia or Among Us. Over nine sessions, 101 internet users—recruited from Reddit and initially unknown to one another—played a text-based strategy game called “Tank Turn Tactics.” Each player controlled a token on a grid and could move, attack others, or transfer valuable resources such as life points and action points. The twist was that only one player could ultimately win, and killing others was usually the easiest path to victory. The rules were deliberately designed so that betrayal was tempting and trust was costly, closely mirroring risky corners of the internet where scams and social engineering flourish.

How trust was spotted in the chaos

In many laboratory studies, trust is measured with very simple games or with short survey questions about attitudes toward strangers. Here, the researcher combined several methods to capture trust as it actually unfolded over time. First, in-game actions were logged and classified as friendly (like gifting resources), hostile (like attacking), or neutral (like upgrading one’s own range). Trust was defined strictly: a player had to intentionally give a valuable resource or perform a cooperative action that helped another player and carried real risk. Second, participants were asked to “think aloud” while they played, speaking their thoughts into a voice channel. These verbal records allowed the researcher to check whether a seemingly friendly move was deliberate trust or merely a misclick or a test of the game interface. Together, these methods created a detailed picture of who trusted whom, when, and why.
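For readers who think in code, the two-step check described above (classify the logged action, then confirm intent from the think-aloud record) might be sketched roughly as follows. This is an illustration only, not the study's actual pipeline: the action names, the `LoggedAction` fields, and the `stated_intentional` flag are all hypothetical stand-ins for whatever the real logs and coding scheme contained.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tone(Enum):
    FRIENDLY = "friendly"
    HOSTILE = "hostile"
    NEUTRAL = "neutral"


# Hypothetical action names; the study's real log schema is not given here.
ACTION_TONE = {
    "gift_life": Tone.FRIENDLY,
    "gift_action_points": Tone.FRIENDLY,
    "attack": Tone.HOSTILE,
    "move": Tone.NEUTRAL,
    "upgrade_range": Tone.NEUTRAL,
}


@dataclass(frozen=True)
class LoggedAction:
    actor: str
    action: str
    target: Optional[str] = None
    # Assumed field: a human coder marks this True when the think-aloud
    # record shows the move was deliberate (not a misclick or interface test).
    stated_intentional: bool = False


def classify(entry: LoggedAction) -> Tone:
    """Map a logged action to friendly, hostile, or neutral."""
    return ACTION_TONE.get(entry.action, Tone.NEUTRAL)


def is_verified_trust(entry: LoggedAction) -> bool:
    """A friendly action aimed at another player and confirmed as intentional."""
    return (
        classify(entry) is Tone.FRIENDLY
        and entry.target is not None
        and entry.target != entry.actor
        and entry.stated_intentional
    )


# Made-up example log, for illustration only.
log = [
    LoggedAction("ana", "gift_life", "ben", stated_intentional=True),
    LoggedAction("ben", "gift_life", "ana", stated_intentional=False),  # misclick
    LoggedAction("cy", "attack", "ana"),
    LoggedAction("ana", "upgrade_range"),
]
trust_count = sum(is_verified_trust(entry) for entry in log)
```

In this toy log only the first entry would count as a trust decision: the second is friendly on paper but was not confirmed as deliberate, which is the kind of case the voice recordings were meant to catch.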

Trust amid attacks and alliances

Despite the hostile setting, trust did appear. Across all sessions, players made 173 verified trust decisions, often forming temporary alliances by giving away life or action points with no guaranteed return. Most game actions were still attacks, and overall the environment remained dangerous, but cooperative behavior was far from rare. The study also identified different play styles: some players behaved mostly offensively, some focused on self-protection, and others leaned into cooperation. Interestingly, players who used cooperative tactics tended to survive longer than those who relied only on aggression or self-interest, suggesting that even in cutthroat conditions, selective trust can pay off. Yet trusting relationships were fragile. In several sessions, close allies supported each other deep into the game, only for one to turn on the other near the end; once betrayal occurred, attempts to repair the relationship almost always failed.

When surveys and behavior disagree

After the games, participants filled out widely used survey scales that ask how much they generally trust strangers. These measures are often used in social science to estimate “generalized trust” in a society. In this study, however, the usual link between what people say and what they do largely broke down. Players’ self-reported trust levels did not reliably predict how often they acted trustingly in the game. What mattered more were the local circumstances: how the round was unfolding, how others had behaved so far, and how active each player was overall. Highly active players tended to spread their moves widely instead of building deep, repeated trust with specific partners, while more moderate players sometimes formed stronger cooperative bonds.

What this means for life online

For non-specialists, the core message is both reassuring and cautionary. Even when rules and incentives push people toward selfishness and betrayal, many still choose to take risks on others, and these choices can help them do better in the long run. At the same time, those acts of trust are selective, unstable, and strongly shaped by the immediate social context rather than by fixed personality traits or simple survey responses. The game shows that trust on the internet is not naive, blind faith but a shifting, strategic process: people constantly weigh danger against potential benefits, watch how others behave, and adjust their willingness to cooperate. Understanding these subtle dynamics can help designers of online platforms, as well as everyday users, recognize when trust is likely to form, when it may collapse, and how easily it can be exploited.

Citation: Fehlhaber, A.L. The emergence of trust under conditions of distrust. Sci Rep 16, 11352 (2026). https://doi.org/10.1038/s41598-026-36825-3

Keywords: online trust, social deduction games, digital deception, cooperation and betrayal, internet strangers