Clear Sky Science

Intrinsic stabilization of synaptic plasticity improves learning and robustness in artificial neural networks

How brains inspire steadier learning in machines

When we learn a new skill, our brains somehow stay flexible enough to pick up fresh information yet stable enough not to forget what we already know. Modern artificial intelligence often struggles with this balance, learning quickly but sometimes becoming fragile or unstable. This study takes inspiration from the brain’s feedback signals to design a new way for artificial neural networks to learn more efficiently while staying robust in the face of noise, changing tasks, and misleading examples.

A new guiding signal inside learning machines

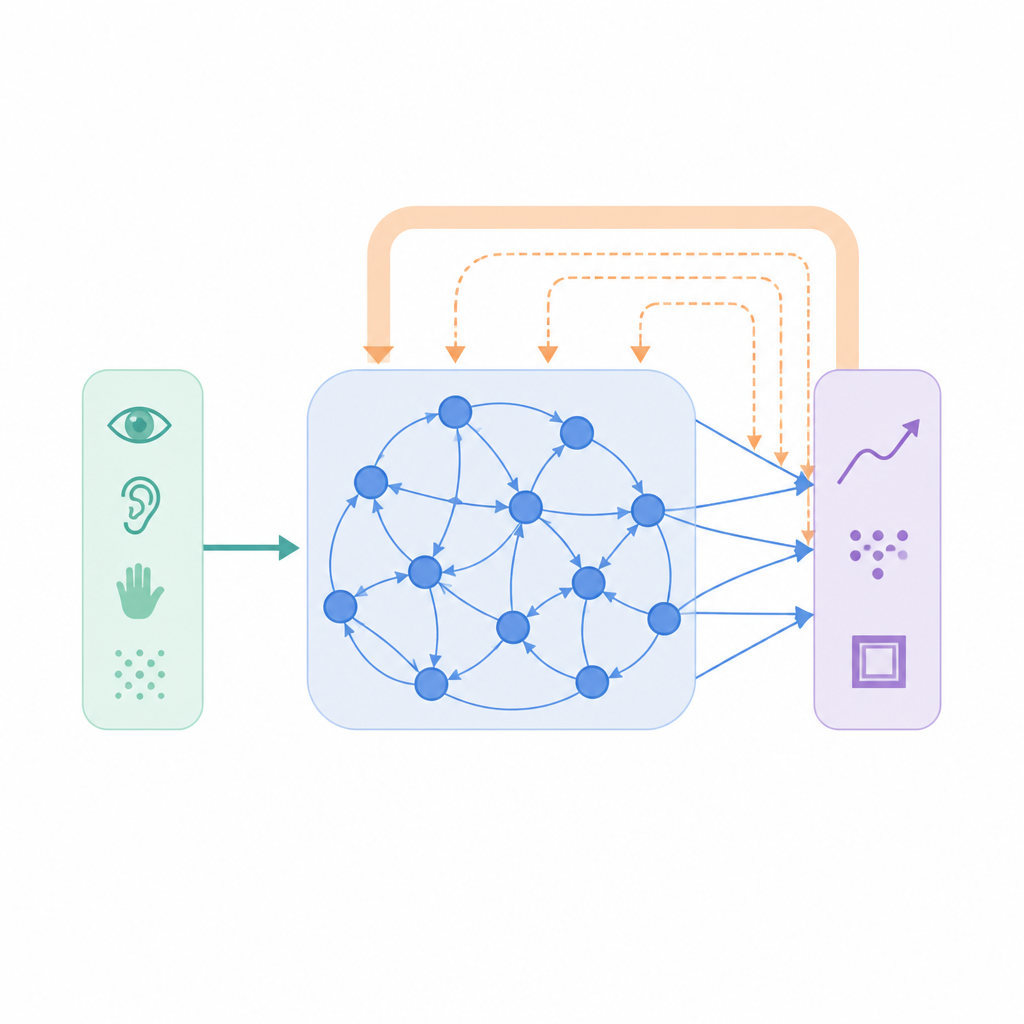

Most machine learning systems rely on a simple recipe: compare what the network predicts to the correct answer and nudge its connections to shrink that gap. This bottom-up approach works well but ignores the rich top-down signals that flow through real brains, carrying expectations, context, and predictions. The authors introduce an added ingredient called intrinsic Top-Down Stabilization (iTDS). Instead of only chasing the correct answer, the network also learns a slower, internal signal that tracks its own past output. This gently changing signal is fed back into the learning rule, so each connection is shaped not just by external error, but also by how the network tends to behave over time.

Teaching networks to remember their own habits

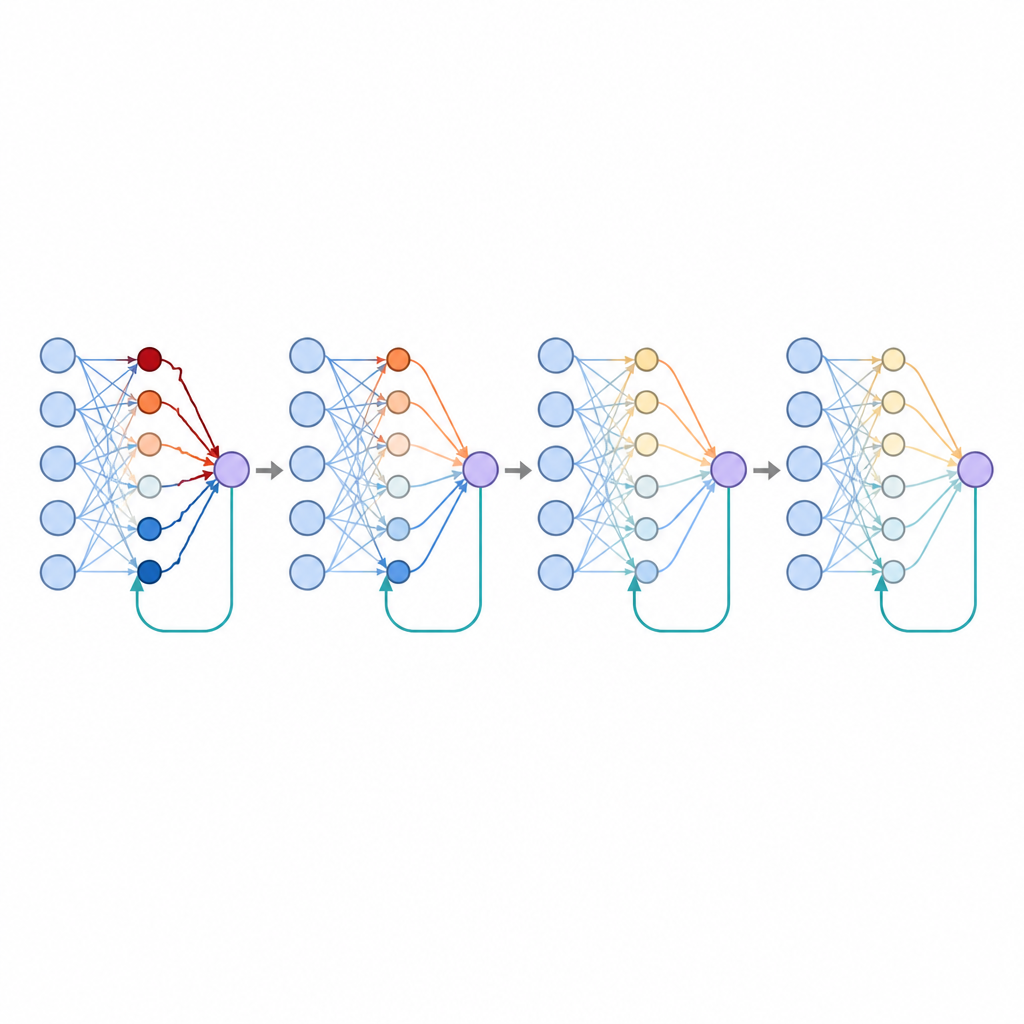

In iTDS, the network keeps a running, smoothed record of its recent output activity. This internal trace does not try to guess future data or raw sensory input; it simply becomes a filtered echo of what the network has already produced. During training, the usual error term pushes connections toward the correct target, while the top-down term compares the current output with this echo. When the network’s activity is highly variable, these two influences tend to point in a similar direction, reinforcing each other and speeding learning. As training progresses and the network settles into a more consistent pattern, the top-down influence gradually weakens, acting more like a stabilizer than a driver of change.
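The mechanism described above can be illustrated with a toy sketch. It is not the authors' learning rule: the linear readout, the exponential moving average used as the "filtered echo", the sign of the top-down term, and every parameter name and value (`lr`, `gamma`, `alpha`) are assumptions chosen for illustration. The sketch only shows the general structure, a supervised error term combined with a term that compares the current output to a slow trace of past output, and how that second term fades once the output stops changing.

```python
import numpy as np

def run_toy(n_steps=500, lr=0.05, gamma=0.5, alpha=0.1):
    """Train a scalar linear readout y = w @ x on one fixed input.

    Returns the final output and per-step histories of the error term
    and the top-down term. All names and values here are hypothetical.
    """
    x = np.array([1.0, 0.5])   # fixed input pattern
    target = 1.0               # desired output
    w = np.zeros(2)            # readout weights
    trace = 0.0                # slow, filtered echo of past outputs
    err_hist, td_hist = [], []
    for _ in range(n_steps):
        y = float(w @ x)
        err = target - y       # supervised term: gap to the correct answer
        td = y - trace         # top-down term: output vs. echo (sign is an assumption)
        w = w + lr * (err + gamma * td) * x
        # Exponential moving average: the trace drifts slowly toward the output.
        trace = (1.0 - alpha) * trace + alpha * y
        err_hist.append(err)
        td_hist.append(td)
    return float(w @ x), np.array(err_hist), np.array(td_hist)

y_final, err_hist, td_hist = run_toy()
print(f"final output: {y_final:.4f}")
print(f"largest early top-down term: {np.abs(td_hist[:20]).max():.4f}")
print(f"final top-down term: {abs(td_hist[-1]):.2e}")
```

In this toy setting the two terms share a sign while the output is still rising, and the top-down term shrinks toward zero as the output settles on the target. Whether this resembles the behavior reported in the study depends on details the summary does not give.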

Better timing, categorizing, and coping with chaos

The researchers tested iTDS in several kinds of neural networks, including recurrent, feedforward, and reservoir models, across sixteen tasks. These ranged from tracking smooth signals over time, to recognizing handwritten digits, to solving simple logical problems. In many cases, adding iTDS led to faster training and lower error than standard supervised learning alone, even when the underlying network dynamics were chaotic. The method was particularly effective when the networks had many active units or when the output signals fluctuated strongly, conditions that are common in both biological and artificial settings.

Learning from noisy and misleading examples

Real-world data are rarely clean. To test robustness, the authors deliberately added noise to inputs, rotated images away from their usual orientation, and even slipped in “counter-trials” where examples were paired with the wrong answer. Under these conditions, networks using only standard learning tended to overreact, reshaping their connections in unhelpful ways. With iTDS, the slow top-down signal acted like a brake: when a surprising or contradictory example appeared, the two learning influences partially canceled, reducing the size of the weight change. As a result, networks with iTDS were more resistant to both random distortions and adversarial perturbations, small changes deliberately chosen to fool classifiers.
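As a rough illustration of this kind of stress test, the sketch below corrupts a labeled dataset with input noise and a fraction of counter-trials. It is not the authors' protocol: the function name `make_stress_set`, the Gaussian noise model, and the default values of `noise_std` and `flip_frac` are all assumptions, and image rotation is left out for brevity.

```python
import numpy as np

def make_stress_set(X, labels, n_classes, noise_std=0.1, flip_frac=0.1, seed=0):
    """Return noisy inputs, labels with some counter-trials, and the flipped indices.

    Hypothetical helper: adds Gaussian noise to every input and replaces
    a fraction of the labels with a different, wrong class.
    """
    rng = np.random.default_rng(seed)
    # Input noise: independent Gaussian jitter on every feature.
    X_noisy = X + rng.normal(0.0, noise_std, size=X.shape)
    # Counter-trials: choose examples without replacement and mislabel them.
    labels_out = labels.copy()
    n_flip = int(round(flip_frac * len(labels)))
    flip_idx = rng.choice(len(labels), size=n_flip, replace=False)
    # A shift in 1..n_classes-1 guarantees the new label differs from the old one.
    shift = rng.integers(1, n_classes, size=n_flip)
    labels_out[flip_idx] = (labels[flip_idx] + shift) % n_classes
    return X_noisy, labels_out, flip_idx

# Example: 100 four-feature examples spread evenly over 10 classes.
X = np.zeros((100, 4))
labels = np.arange(100) % 10
X_noisy, labels_out, flip_idx = make_stress_set(X, labels, n_classes=10)
print("mislabeled examples:", int((labels_out != labels).sum()))
```

Training the same network on the clean and corrupted versions, with and without a stabilizing term, is one way to compare how much each learning rule overreacts to the mislabeled examples.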

Why splitting positive and negative feedback matters

The study also explored what happens when some connections specialize in tracking only positive errors and others track only negative ones, echoing how certain brain cells respond differently to rewards and setbacks. This separation naturally produced sparser patterns of connectivity, where many connections remained near zero while a smaller subset carried most of the influence. Sparsity is often associated with better generalization, and here it further lowered error rates. The authors showed that simply forcing many weights to stay at zero reproduced much of this benefit, suggesting that specialized feedback may be one biological route to achieving sparse, efficient representations.
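The two ideas in this paragraph can be sketched in a few lines. This is a hedged illustration rather than the study's implementation: the function names, the boolean `pos_only` assignment of weights to error channels, and the fixed binary `mask` are hypothetical, and a scalar error with a simple delta-rule update is assumed.

```python
import numpy as np

def split_error(err):
    """Split a signed error into a positive-only and a negative-only channel."""
    return np.maximum(err, 0.0), np.minimum(err, 0.0)

def sign_split_update(w, x, err, pos_only, lr=0.1):
    """Delta-rule step where each weight listens to one error channel only.

    pos_only is a boolean array: True weights learn from positive errors,
    False weights learn from negative errors (a hypothetical assignment).
    """
    pos, neg = split_error(err)
    drive = np.where(pos_only, pos, neg)
    return w + lr * drive * x

def masked_update(w, x, err, mask, lr=0.1):
    """Plain delta-rule step with a fixed mask that pins some weights at zero."""
    return (w + lr * err * x) * mask

w = np.zeros(4)
x = np.ones(4)
pos_only = np.array([True, True, False, False])
print(sign_split_update(w, x, 0.5, pos_only))    # only the first two weights move
print(sign_split_update(w, x, -0.5, pos_only))   # only the last two weights move
print(masked_update(w, x, 0.5, np.array([1.0, 0.0, 1.0, 0.0])))
```

On any single trial only one group of weights changes under the sign-split rule, which gives a rough intuition for why such specialization might leave many connections near zero. The masked update is the more direct alternative of forcing weights to stay at zero.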

What this means for future brains and machines

Overall, the iTDS framework shows that giving learning machines a slowly changing sense of their own recent behavior can make them faster learners and more stable problem-solvers. Instead of relying solely on the immediate mismatch with the correct answer, networks that blend quick corrections with gentle, top-down stabilization handle noise, confusion, and change more gracefully. For brain science, the model offers concrete predictions about how feedback pathways might shape learning. For artificial intelligence, it suggests that building in self-monitoring signals, across multiple timescales, could be a practical path toward systems that learn more like we do and fail less often when the world gets messy.

Citation: Pilzak, A., Pennington, B. & Thivierge, JP. Intrinsic stabilization of synaptic plasticity improves learning and robustness in artificial neural networks. Nat Commun 17, 4164 (2026). https://doi.org/10.1038/s41467-026-70920-3

Keywords: synaptic plasticity, top-down feedback, recurrent neural networks, robust learning, noise resilience