Clear Sky Science · en

Modes of asking as switches: prompt-driven inconsistency in ChatGPT’s gender equality perspective outputs

Why this matters for everyday conversations with AI

More and more people are turning to chatbots like ChatGPT not only for quick facts, but also for advice on love, family, work, and questions about fairness between women and men. This article asks a simple but vital question: does ChatGPT always stand for gender equality, or does its attitude change depending on how we talk to it? The authors show that the way we phrase our questions can quietly flip hidden switches inside the system, shifting its replies between modern-sounding equality and old-fashioned stereotypes.

How the researchers “talked” to the chatbot

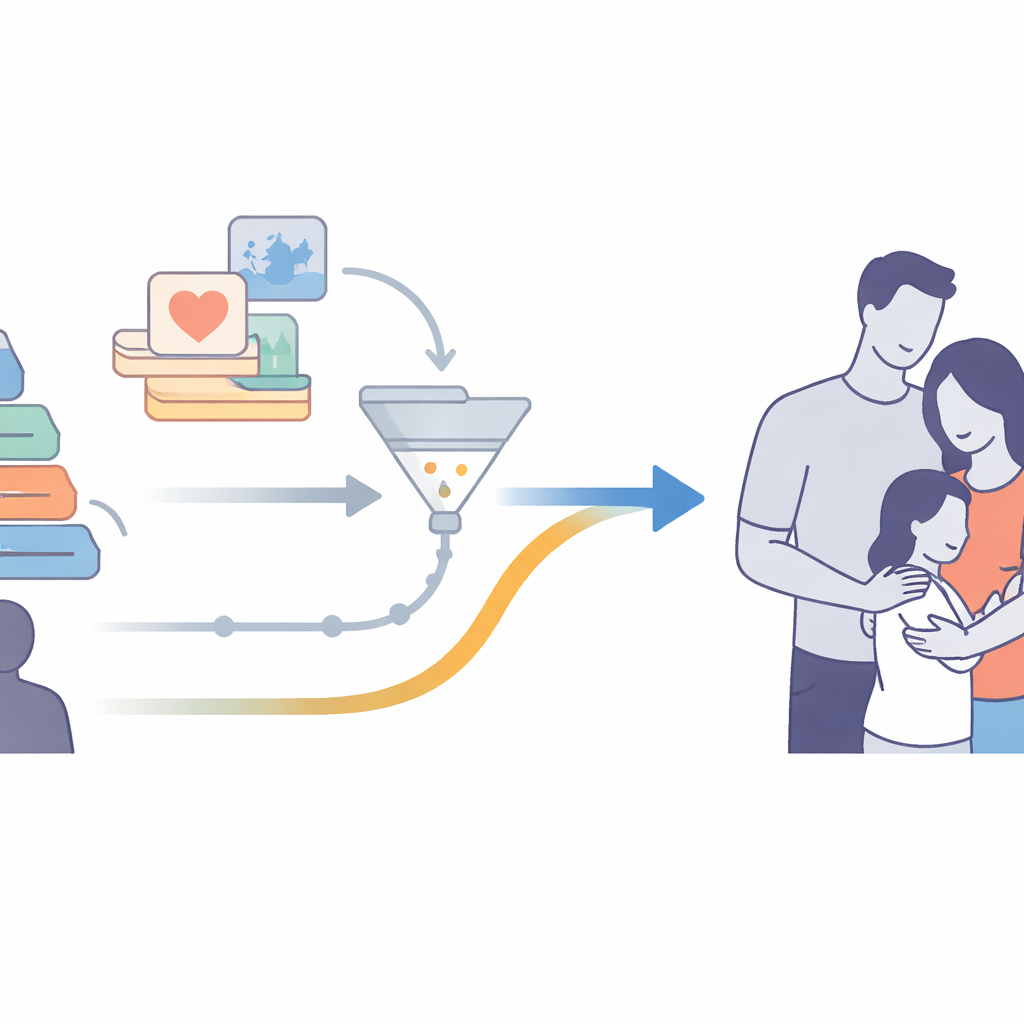

To explore ChatGPT’s views on gender, the researchers built what they call a “Gender Equality Compass” – a set of questions covering money and work, politics, everyday culture, sex and reproduction, and close relationships. They then asked ChatGPT about 13 topics across these areas in three different ways. First, they used specific prompts that looked like survey items (“Is it okay for a woman to be president if she is qualified?”). Second, they used open-ended prompts (“What should mothers and fathers do in a family?”). Third, they used deep, contextual prompts that invited role-play or stories, such as asking ChatGPT to act as a caring mother or to imagine a scene in a family. All responses were collected in separate chat sessions and analyzed using grounded theory, a qualitative method that builds concepts from patterns in real-world texts.

What ChatGPT says on the surface

When the questions were straightforward and clearly about gender, ChatGPT behaved like a strong supporter of equality. It quickly spotted biased wording, rejected hateful or sexist statements, and often warned that certain phrases might break usage rules. In questions about abortion, partner violence, or LGBTQ people, it stressed women’s bodily autonomy, rejected victim blaming, and framed abortion as a basic personal right. It praised single mothers and sexual minorities, avoided sexist terms, and consistently talked about sharing chores, income, and decision-making between partners. In newer versions based on GPT‑4, these answers became even more nuanced and “human-sounding,” drawing on feminist ideas and offering concrete suggestions, such as education about consent and gender roles.

What happens in deeper, story-like chats

The picture changed once conversations became more intimate and imaginative. In role‑play and fictional scenes, where ChatGPT was asked to act as a mother, a boyfriend, or to sketch a family story, its built‑in bias filters often faded into the background. Instead, the system fell back on familiar cultural patterns from its training data. Girlfriends were described as gentle and tender, boyfriends and husbands as responsible and brave; fathers became pillars and decision-makers, while mothers were cooks, caregivers, and emotional support. Romantic plots defaulted to men chasing women, and advice quietly assumed heterosexual relationships unless the user explicitly corrected it. The researchers show that these “default settings” match what feminist theorist Judith Butler calls the “heterosexual matrix”: a world where sex, gender, and desire line up in one narrow, traditional way.

Why stories are especially tricky for AI

The authors argue that these slip‑ups are not just technical bugs; they grow out of how large language models are built. ChatGPT has no body, no lived experiences, and no direct feel for discrimination. It learns patterns from billions of words on the internet, where gender stereotypes and unequal roles are common, especially in fiction. In simple, factual questions, human trainers and safety rules can steer it toward fairer answers. But when asked to invent scenes or play roles, the model relies more heavily on those older patterns. Without the grounding of real experience, it struggles to “feel” when a story quietly normalizes inequality, even if the words never mention gender directly.

What this means for people and policy

For a layperson, the core message is clear: ChatGPT can passionately defend gender equality when asked in a direct, obvious way, yet still reproduce subtle sexism in the very kinds of emotional, one‑on‑one conversations where users may be most vulnerable. Because people often treat the chatbot like a trusted companion, this hidden inconsistency risks spreading old biases under a modern, friendly surface. The authors call for stronger testing methods, more diverse and feminist-informed training data, and closer attention to how deep, story-based prompts shape AI behavior. In short, the study shows that how we ask matters: the mode of questioning can flip ChatGPT between being a champion of equality and an unthinking mirror of traditional norms.

Citation: Song, S., Liang, Z. & Zhao, W. Modes of asking as switches: prompt-driven inconsistency in ChatGPT’s gender equality perspective outputs. Humanit Soc Sci Commun 13, 478 (2026). https://doi.org/10.1057/s41599-025-05577-2

Keywords: gender bias, ChatGPT, AI ethics, prompt design, stereotypes