Clear Sky Science

Adaptive robot guidance through real-time compliance estimation and dual-modal control

Teaching Robots to Be Better Helpers

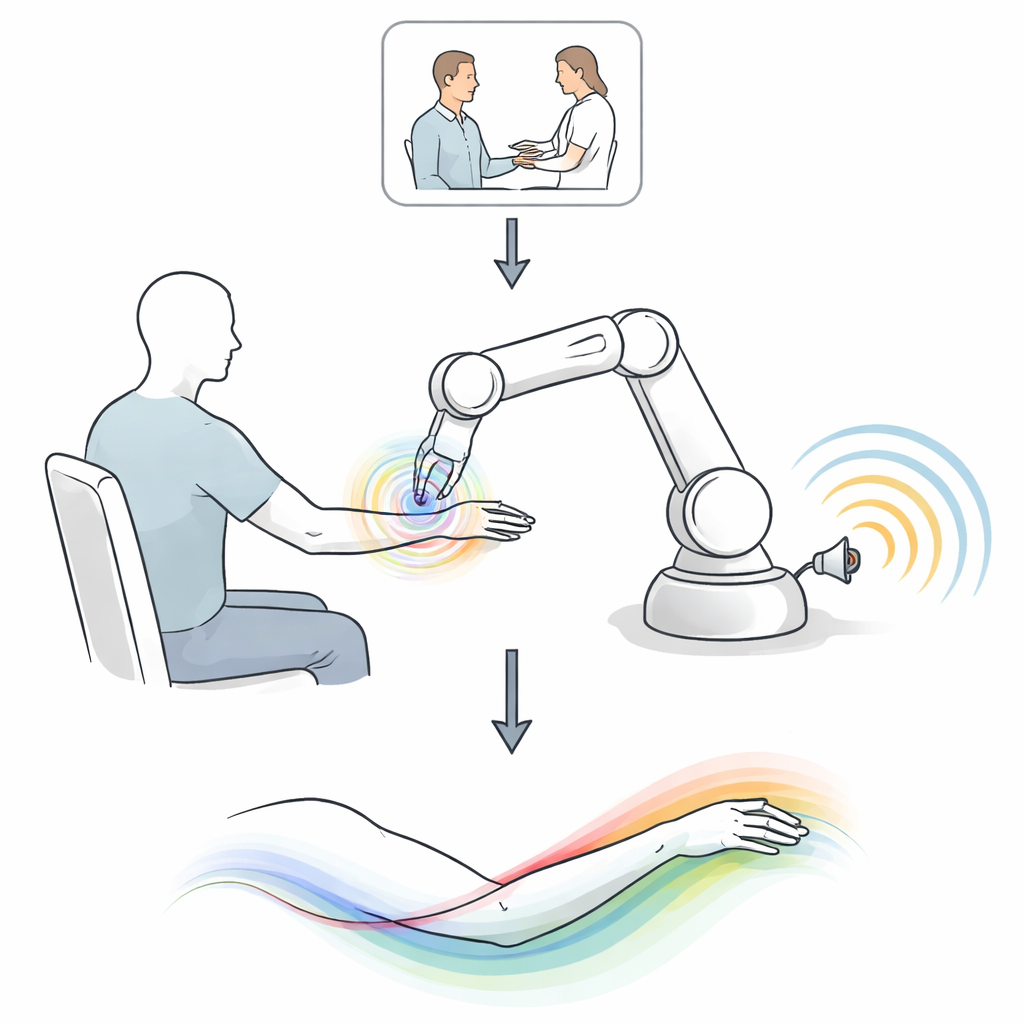

Imagine learning a new dance move or rehabbing a sore shoulder. A human instructor doesn’t just push your arm into place; they also offer step-by-step tips and encouragement. Today’s robots, by contrast, usually either move you silently or talk without being able to touch. This paper introduces a new way for robots to blend touch and speech, adapting in real time to how well a person is following along so the help feels safer, more natural, and more effective.

Why Blending Touch and Voice Matters

Human teachers constantly adjust how much they physically guide you versus how much they talk. If you are off-track, they might firmly move your arm and give clear directions. As you improve, they back off, offering lighter guidance and more encouragement. Robots have struggled to do this because physical forces and spoken language are very different kinds of signals. The authors set out to give a robot something like an instructor’s intuition: a way to estimate how willing and able a person is to follow guidance, then decide when to push, when to talk, and how to combine both.

How the Adaptive Robot Coach Works

The team designed a Robot Guidance Controller with three main parts. First, it continuously estimates “compliance” — how closely a person is following the desired motion — using simple measures like the gap between where the arm should be and where it is, and how smoothly it is moving. Second, an optimization step decides how much of the correction should come through physical force versus spoken cues, shifting the balance as the person’s behavior changes. Third, a force-to-language model converts the robot’s internal plans into short, context-appropriate phrases, such as gentle tips or encouragement, based on patterns learned from real human therapists. Together, these elements let the robot coordinate its hands and its “voice” in real time.
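To make the first two parts more concrete, here is a minimal sketch in Python. It is not the authors' implementation: the function names, the scaling constants, the exponential scoring, and the simple linear split between force and speech are all assumptions chosen for illustration. In particular, the linear rule stands in for the paper's optimization step, whose details this summary does not give.

```python
import math

def estimate_compliance(target, actual, velocities,
                        error_scale=0.2, roughness_scale=1.0):
    """Hypothetical compliance score in (0, 1]; 1 means smooth, on-target motion.

    error_scale and roughness_scale are illustrative constants, not values
    taken from the paper.
    """
    if not target or len(target) != len(actual):
        raise ValueError("target and actual must be non-empty and equal length")
    # Mean absolute gap between where the arm should be and where it is.
    mean_error = sum(abs(t - a) for t, a in zip(target, actual)) / len(target)
    # Roughness proxy: mean absolute change between successive velocity samples.
    diffs = [abs(b - a) for a, b in zip(velocities, velocities[1:])]
    roughness = sum(diffs) / len(diffs) if diffs else 0.0
    return math.exp(-mean_error / error_scale - roughness / roughness_scale)

def allocate_guidance(compliance, max_force=10.0, max_cues_per_min=12.0):
    """Assumed heuristic: low compliance means more force and more instruction."""
    c = min(max(compliance, 0.0), 1.0)  # clamp to [0, 1]
    return {
        "force": max_force * (1.0 - c),                  # ease off as tracking improves
        "cues_per_min": max_cues_per_min * (1.0 - 0.5 * c),  # speak less often
        "speech_style": "instruction" if c < 0.5 else "encouragement",
    }

# Example: the arm lags well behind the target, so compliance is low.
score = estimate_compliance([0.0, 0.5, 1.0], [0.0, 0.2, 0.4], [0.2, 0.2, 0.2])
plan = allocate_guidance(score)
```

The direction of each rule follows the behavior the article attributes to therapists: firmer physical help and more frequent instructions when a person struggles, lighter guidance and encouragement as they improve.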

Learning from Expert Therapists

To ground the system in real-world teaching behavior, the researchers studied physical therapists working with patients on a simple but clinically important task: lifting the arm from the side to overhead and back. In carefully recorded sessions, therapists sometimes guided only with speech, sometimes only with touch, and sometimes with both. The team found clear patterns. When patients resisted or struggled, therapists used stronger physical assistance and more frequent, instructional speech. As patients followed more closely, therapists eased their grip, spoke less often, and shifted from commands to encouragement. These patterns directly inspired how the robot estimates compliance and decides when to lean on force, speech, or a mix of the two.

Putting the Robot Coach to the Test

The new controller was evaluated with twelve healthy volunteers performing the same shoulder-raising exercise alongside a collaborative robot. In one set of trials, the researchers compared single modes of help—only verbal cues, only physical forces—with a basic dual-mode controller that always combined them in a fixed way. In a second study, they compared that fixed dual-mode setup with their adaptive Robot Guidance Controller. Performance was measured by how closely participants followed the intended path, how smooth their motion was, how long they took to finish, and how the robot’s speech patterns changed.
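As a rough illustration of how three of these measures might be computed from a recorded trajectory, consider the sketch below. The specific formulas are assumptions: this summary does not say which error or smoothness metrics the authors used, so root-mean-square error and mean squared jerk are shown only as common choices, and all function names are hypothetical.

```python
import math

def tracking_rmse(target, actual):
    """Root-mean-square gap between the intended and the measured path."""
    return math.sqrt(
        sum((t - a) ** 2 for t, a in zip(target, actual)) / len(target)
    )

def mean_squared_jerk(positions, dt):
    """Smoothness proxy (lower is smoother), using a third finite difference.

    Needs at least four position samples taken at a fixed interval dt.
    """
    jerks = [
        (positions[i + 3] - 3 * positions[i + 2]
         + 3 * positions[i + 1] - positions[i]) / dt ** 3
        for i in range(len(positions) - 3)
    ]
    return sum(j * j for j in jerks) / len(jerks)

def completion_time(n_samples, dt):
    """Elapsed time covered by n_samples evenly spaced samples."""
    return (n_samples - 1) * dt
```

A perfectly steady motion, such as positions `[0, 1, 2, 3, 4]`, has zero jerk under this measure, while hesitant or jerky motion scores higher.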

What the Experiments Revealed

Across conditions, combining touch and speech generally outperformed using either one alone, particularly in cases where the person paid attention only to the channel that had been added. In some cases, adding physical guidance cut position errors by more than half and made movements markedly smoother and faster. The adaptive controller went further, trimming tracking errors by up to 50%, improving smoothness, and reducing completion time by about a quarter compared with the fixed dual-mode baseline. Notably, its speech patterns (how often it “spoke” and how much it shifted from instructions to encouragement) more closely matched those of expert therapists, and participants rated the adaptive guidance as more natural and more like working with a human coach.

What This Could Mean for Everyday Life

For non-experts, the takeaway is that robots can be taught to guide people more like skilled human instructors: using a mix of gentle touch and timely words that adapts as you learn. While this work was demonstrated in a rehabilitation exercise, the underlying ideas could extend to teaching handwriting, sports skills, or complex job tasks. By sensing how well someone is responding and adjusting physical and verbal help on the fly, future robots may become not just precise machines, but patient, responsive partners in learning and recovery.

Citation: Tejwani, R., Payne, J., Velazquez, K. et al. Adaptive robot guidance through real-time compliance estimation and dual-modal control. Commun Eng 5, 81 (2026). https://doi.org/10.1038/s44172-026-00632-5

Keywords: human-robot interaction, rehabilitation robotics, adaptive guidance, robotic coaching, multimodal control