Clear Sky Science

Explainable deep learning framework incorporating medical knowledge for insulin titration in diabetes

Why Smarter Insulin Decisions Matter

For millions of people living with type 2 diabetes, getting the insulin dose right is a daily balancing act. In hospitals, doctors must constantly adjust doses based on changing blood sugar levels, lab tests, and medications. This is time‑consuming and mentally taxing, especially where staff are stretched thin. Powerful artificial intelligence (AI) systems can help, but most work like sealed boxes: they produce a number without showing their reasoning. This study describes a new AI framework that not only recommends insulin doses, but also explains its choices in a way that lines up with how experienced diabetes specialists think.

Making Sense of a Black‑Box Helper

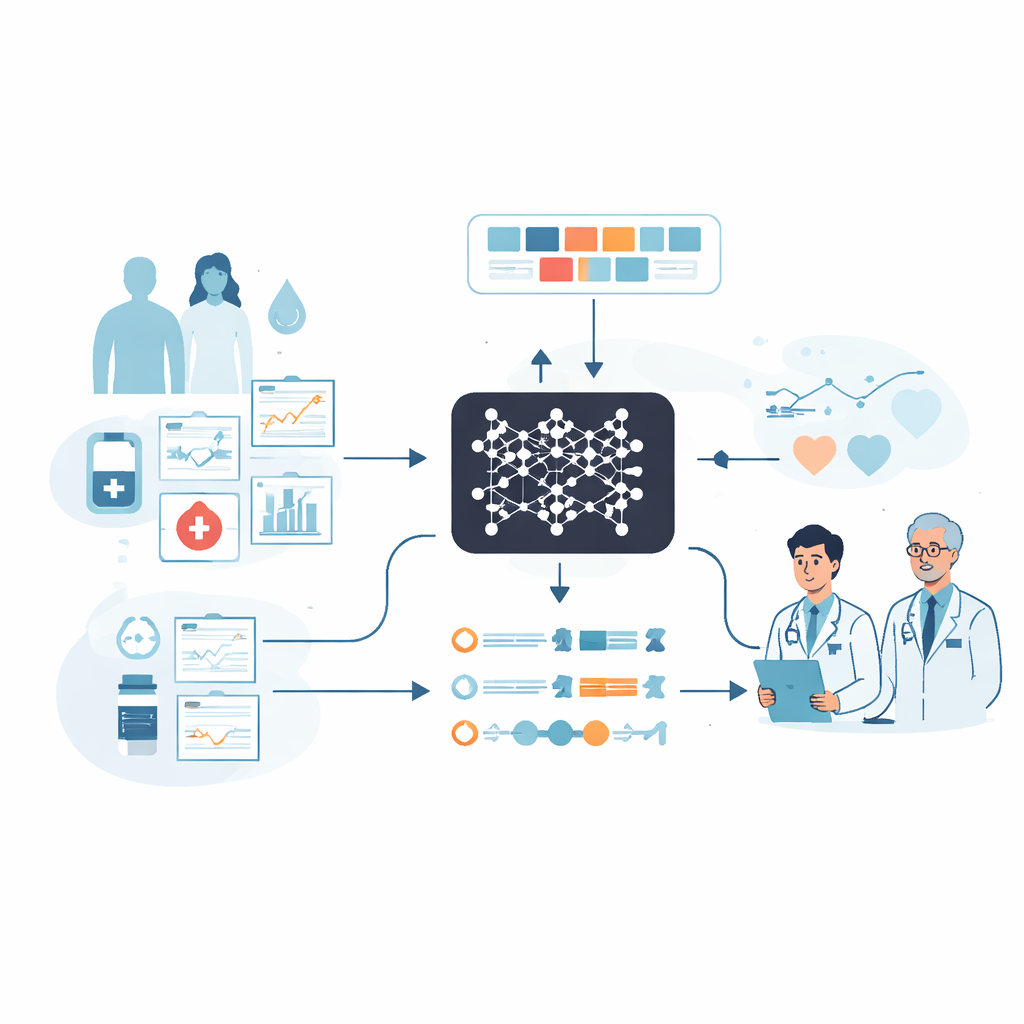

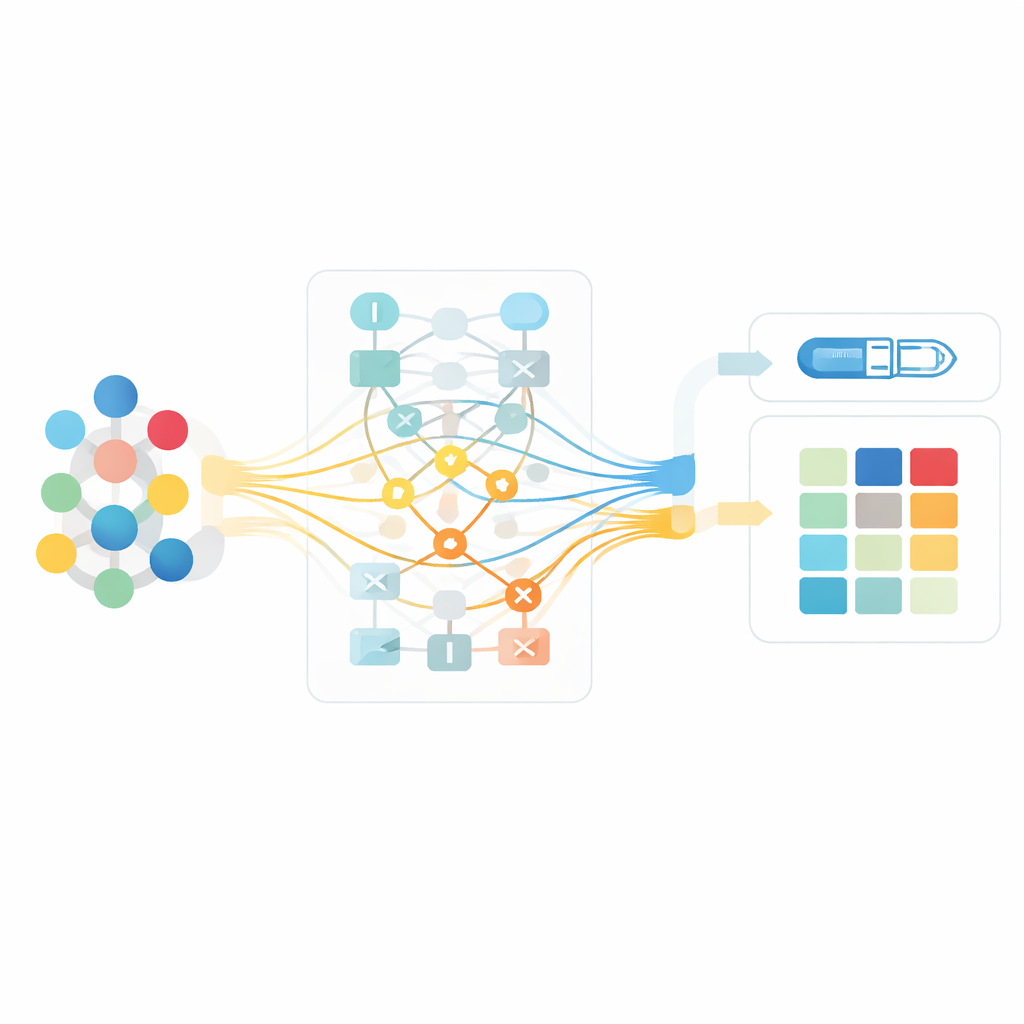

The researchers built on a previously developed deep learning system that predicts insulin doses for hospitalized patients with type 2 diabetes, using data from electronic health records. These records include basic patient details, blood sugar readings throughout the day, insulin and other diabetes drugs, and lab test results. The original model performed well but could not show why it favored one dose over another, making many clinicians hesitant to rely on it. To bridge this gap, the team designed an “explainable AI” layer that sits on top of the black‑box model and translates its inner workings into human‑understandable pieces, such as which blood sugar records, prior insulin injections, or patient characteristics most influenced a particular recommendation.

Looking at Factors in Combination, Not Alone

Most existing explanation tools focus on the impact of one factor at a time—say, age or a single blood sugar value—on the model’s prediction. That can be misleading in diabetes care, where the safe insulin dose usually depends on how several factors interact, such as recent blood sugar trends, kidney function, body weight, and past doses. The new framework uses a method that can capture both individual effects and pairwise interactions between factors. This allows it to highlight, for example, how a high after‑lunch blood sugar together with a recent decrease in lunch‑time insulin sends a strong signal to increase the next dose, whereas the same blood sugar in another context might call for a smaller change.
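
To make the difference between individual and pairwise effects concrete, here is a minimal Python sketch. It is not the method used in the study: `toy_dose_model`, its coefficients, the 10.0 mmol/L target, and the baseline values are all hypothetical, chosen only for illustration. The sketch splits a two-factor prediction into two single-factor effects and one interaction term, measured against a baseline case.

```python
def toy_dose_model(post_lunch_glucose, lunch_insulin_change):
    """Hypothetical stand-in for a dose-change model (not the study's model).

    post_lunch_glucose: mmol/L; lunch_insulin_change: units (negative = dose was cut).
    """
    excess = max(post_lunch_glucose - 10.0, 0.0)  # glucose above an assumed target
    cut = max(-lunch_insulin_change, 0.0)         # size of the recent dose reduction
    # The product term is what makes the two factors interact.
    return 0.5 * excess + 0.2 * cut + 0.3 * excess * cut


def decompose(model, x, baseline):
    """Split model(x) - model(baseline) into two main effects and an interaction."""
    f_both = model(x[0], x[1])
    f_a_only = model(x[0], baseline[1])  # only the first factor moved
    f_b_only = model(baseline[0], x[1])  # only the second factor moved
    f_base = model(baseline[0], baseline[1])
    main_a = f_a_only - f_base
    main_b = f_b_only - f_base
    interaction = f_both - f_a_only - f_b_only + f_base
    return main_a, main_b, interaction


if __name__ == "__main__":
    # High after-lunch glucose together with a recent 2-unit cut in lunch insulin.
    effects = decompose(toy_dose_model, (14.0, -2.0), (10.0, 0.0))
    print("glucose effect, insulin-cut effect, interaction:", effects)
```

A one-factor-at-a-time explanation would report only the first two numbers. In this toy case the interaction term is larger than either of them, which is the kind of signal such an explanation would miss.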

Teaching the AI to Respect Medical Judgment

Even a sophisticated explanation method can clash with medical common sense if it is driven only by patterns in the data. To address this, the researchers introduced a “doctor‑in‑the‑loop” process. A panel of experienced endocrinologists repeatedly reviewed the AI’s explanations, checking whether the features it highlighted, and the direction and size of their effects, agreed with established clinical guidelines. Their feedback was translated into explicit constraints, such as: insulin doses should not decrease when blood sugar rises, or when other blood sugar‑lowering drugs are reduced. These constraints were built into the explanation engine and refined over several rounds until the experts judged that the system’s reasoning matched safe and accepted practice. Statistical tests showed that, after this process, the explanations aligned far better with known diabetes care principles and avoided obvious mistakes.
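
The two example constraints above can be written as simple sign checks. The sketch below is an illustration only, assuming explanations arrive as signed per-feature contributions to the recommended dose change. The `Attribution` class, the `RULES` table, and the feature names are hypothetical and are not taken from the study.

```python
from dataclasses import dataclass


@dataclass
class Attribution:
    feature: str         # hypothetical feature name
    delta: float         # change in the feature since the previous assessment
    contribution: float  # signed contribution to the recommended dose change


# For each feature, the direction of change (+1 = rise, -1 = reduction)
# that must NOT push the recommended insulin dose downward.
RULES = {
    "blood_glucose": +1,                 # glucose rises -> dose should not decrease
    "other_glucose_lowering_drugs": -1,  # other drugs reduced -> dose should not decrease
}


def find_violations(attributions):
    """Return the features whose contribution contradicts a rule in RULES."""
    flagged = []
    for a in attributions:
        trigger = RULES.get(a.feature)
        if trigger is None:
            continue  # no rule for this feature
        moved_in_trigger_direction = a.delta * trigger > 0
        if moved_in_trigger_direction and a.contribution < 0:
            flagged.append(a.feature)
    return flagged


if __name__ == "__main__":
    explanation = [
        Attribution("blood_glucose", delta=2.0, contribution=-1.0),
        Attribution("other_glucose_lowering_drugs", delta=-1.0, contribution=0.5),
    ]
    print(find_violations(explanation))
```

A check like this only flags explanations that break a rule. How the study actually built its constraints into the explanation engine is not described here, so this should be read as a sanity test rather than a reconstruction.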

How the System Affects Real‑World Decisions

To see whether better explanations actually help clinicians, the team ran a study with eight doctors—half junior and half more experienced—who reviewed real patient cases under three conditions: working alone, seeing only the AI’s dose suggestion, and seeing both the dose and its explanation. Across all participants, viewing the AI explanation reduced the average difference between their chosen dose and an expert reference and increased the percentage of decisions that matched the expert’s direction and stayed within an acceptable range. The benefit was especially strong for junior doctors, whose accuracy and confidence improved the most. Senior doctors already performed well, but still reported feeling more confident when they could see why the AI recommended a particular dose.
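
The two outcome measures described above could be computed along the following lines. This is a sketch under stated assumptions: the function names, the use of the previous dose to define "direction", and the `tolerance` parameter for the acceptable range are all illustrative choices, and the doses in the usage example are made up.

```python
def mean_abs_difference(chosen, reference):
    """Average absolute gap (in units) between chosen doses and the expert reference."""
    return sum(abs(c - r) for c, r in zip(chosen, reference)) / len(chosen)


def direction(change):
    """Return +1 for an increase, -1 for a decrease, 0 for no change."""
    return (change > 0) - (change < 0)


def agreement_rate(chosen, reference, previous, tolerance):
    """Share of decisions that move the dose in the same direction as the
    expert (relative to the previous dose) and land within `tolerance` units."""
    hits = 0
    for c, r, p in zip(chosen, reference, previous):
        same_direction = direction(c - p) == direction(r - p)
        within_range = abs(c - r) <= tolerance
        if same_direction and within_range:
            hits += 1
    return hits / len(chosen)


if __name__ == "__main__":
    # Made-up doses for four cases, in insulin units.
    previous = [10, 10, 10, 12]
    reference = [12, 12, 8, 10]
    chosen = [12, 10, 8, 14]
    print(mean_abs_difference(chosen, reference))
    print(agreement_rate(chosen, reference, previous, tolerance=2))
```

Comparing these two numbers across the three study conditions (working alone, dose only, dose plus explanation) would give the kind of contrast the paragraph describes.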

Why Explanation Quality Matters

The researchers also tested what would happen if the AI’s explanations were wrong, by feeding doctors synthetic, deliberately faulty reasoning alongside otherwise good dose suggestions. In these cases, performance often worsened, particularly among junior clinicians, and the usual benefits of AI support disappeared or even reversed. This finding underscores that explanations are not just a cosmetic add‑on: if they are misleading, they can harm care by steering clinicians away from their own better judgment. High‑quality, medically grounded explanations are therefore essential for safe AI use.

What This Means for Patients and Clinicians

In everyday terms, the study shows that an AI system can be built not only to recommend insulin doses, but also to “show its work” in a way that matches how diabetes specialists reason about care. By weaving medical knowledge directly into its explanation engine and involving doctors throughout development, the framework makes AI suggestions more trustworthy and easier to scrutinize. For patients, this could translate into safer and more consistent insulin adjustments, especially when less experienced clinicians are on duty. More broadly, the approach offers a template for building transparent AI tools in other complex areas of medicine, helping turn black‑box predictors into collaborative partners in clinical decision‑making.

Citation: He, H., Ying, Z., Li, B. et al. Explainable deep learning framework incorporating medical knowledge for insulin titration in diabetes. Commun Med 6, 192 (2026). https://doi.org/10.1038/s43856-026-01449-1

Keywords: type 2 diabetes, insulin dosing, explainable AI, clinical decision support, electronic health records