Clear Sky Science

Specialized foundation models for intelligent operating rooms

Smarter Help in the Operating Room

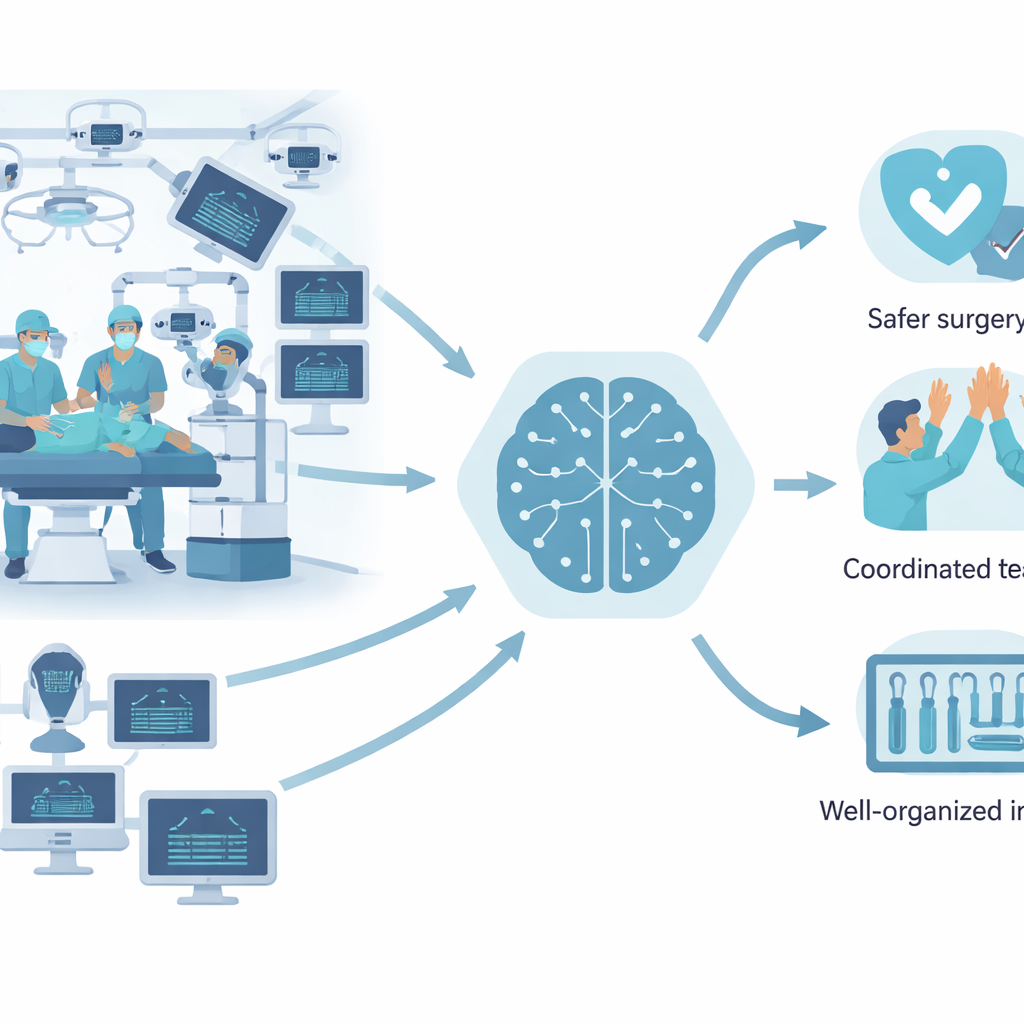

Modern surgery happens in a crowded, high‑tech space where people, robots, cameras, and monitors must work together without mistakes. The study summarized here presents ORQA, a new kind of artificial intelligence designed specifically for the operating room. Popular chatbots mainly handle words and simple pictures. ORQA, by contrast, is built to watch, listen, and make sense of what is happening during surgery, drawing on video, 3D scene data, audio, and robot data, so that it can support teams, spot hazards, and ultimately help make operations safer.

Why Today’s AI Struggles in Surgery

Many of the AI tools that have impressed the world were trained on internet images, videos, and text. They can explain medical terms or describe a common operation, but the visual world of an operating room is very different. Multiple cameras show overlapping views, robotic arms move near people, tools are small and shiny, and many events unfold at once. General‑purpose AI models often miss critical details. They may see that a surgeon is present but fail to locate a specific tool, predict the robot's next step, or recognize when sterile areas are being violated. The authors tested leading vision‑language systems, including commercial models and a strong open‑source model. On surgical tasks, these systems performed only slightly better than simply guessing the most common answer.
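To make the "most common answer" baseline concrete, here is a minimal Python sketch. It assumes each benchmark item can be reduced to a (task type, answer) pair. The task names and answers are made up for illustration and are not taken from the ORQA data. The baseline ignores the scene entirely and always predicts the answer seen most often for that task type during training.

```python
from collections import Counter, defaultdict

def fit_majority(train):
    """Map each task type to its most frequent answer in the training pairs."""
    counts = defaultdict(Counter)
    for task, answer in train:
        counts[task][answer] += 1
    return {task: c.most_common(1)[0][0] for task, c in counts.items()}

def accuracy(predict, test):
    """Fraction of (task, answer) pairs where predict(task) matches the answer."""
    correct = sum(predict(task) == answer for task, answer in test)
    return correct / len(test)

# Hypothetical toy data, for illustration only.
train = [("count_people", "4"), ("count_people", "4"), ("count_people", "5"),
         ("sterility_breach", "no"), ("sterility_breach", "no"), ("sterility_breach", "yes")]
test = [("count_people", "4"), ("count_people", "6"),
        ("sterility_breach", "no"), ("sterility_breach", "yes")]

baseline = fit_majority(train)
print(accuracy(lambda task: baseline[task], test))  # 0.5
```

A model that "understands" the room has to beat this number by a wide margin. The article reports that general-purpose systems barely cleared it.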

Turning Surgical Workflows into Questions and Answers

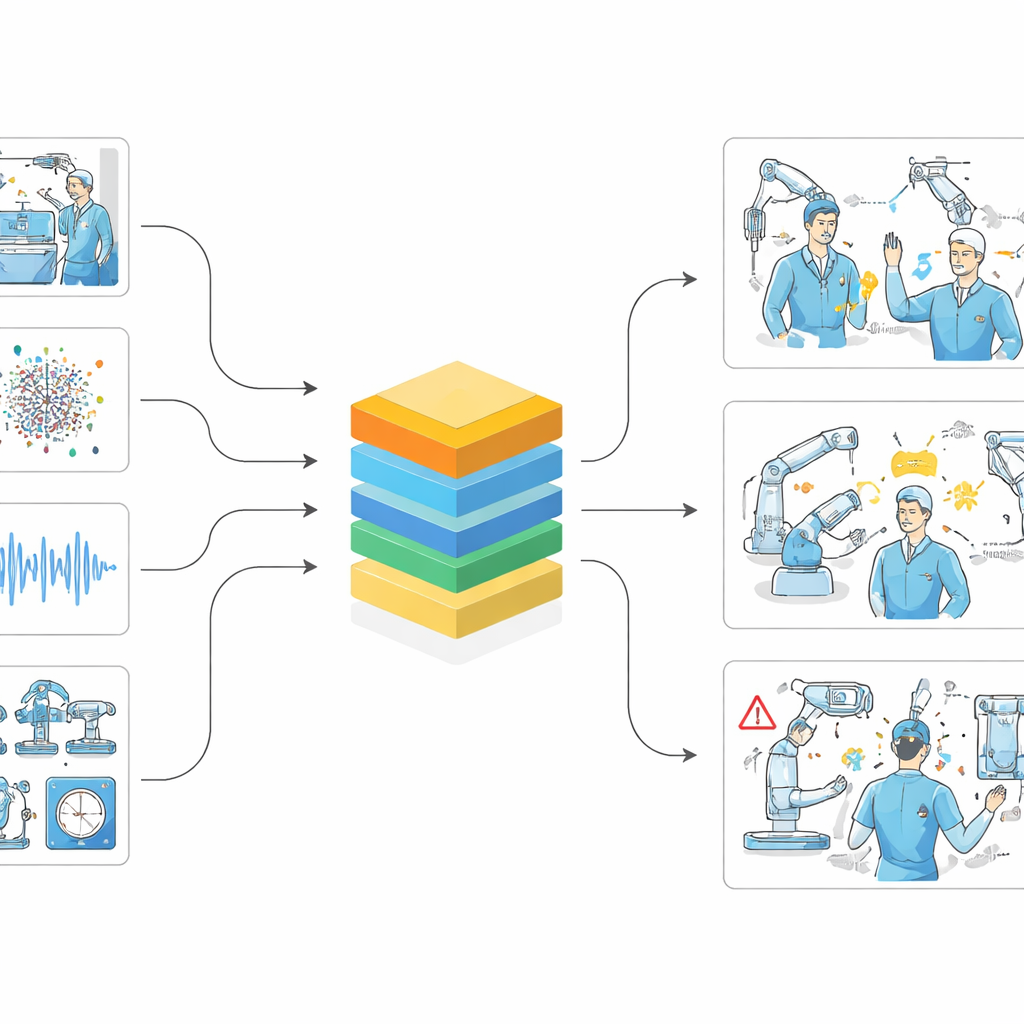

To systematically measure and improve machine understanding of surgery, the researchers created the ORQA benchmark. They combined four rich datasets of real and simulated operating rooms that include external camera views, surgeon‑mounted video, 3D scene reconstructions, audio, robot logs, and more. From these sources they generated over 100 million question‑and‑answer pairs about what is happening in the room. These questions cover 23 task types, such as how many people are present, which tools are in use, what action is underway, where a tool is located in 3D space, whether a sterility breach is occurring, and what the next robot step will be. They then selected one million diverse examples from this huge pool for training and held out separate sets for testing. The result is a common yardstick for any AI model that claims to understand surgery.
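The idea of turning annotated scenes into questions and answers, then shrinking the pool to a diverse subset, can be sketched in a few lines of Python. This is a minimal illustration and not the authors' pipeline. The frame fields, question templates, and the rule of keeping at most a fixed number of examples per task type are all assumptions made for the example.

```python
import random
from collections import defaultdict

# Hypothetical per-frame annotations; field names are illustrative, not the ORQA schema.
frames = [
    {"frame_id": 0, "people": ["head_surgeon", "circulating_nurse"], "tools": ["drill"], "action": "drilling"},
    {"frame_id": 1, "people": ["head_surgeon", "assistant", "anaesthetist"], "tools": ["saw", "hammer"], "action": "cutting"},
    {"frame_id": 2, "people": ["nurse"], "tools": [], "action": "preparing"},
]

def make_questions(frame):
    """Turn one annotated frame into templated (task_type, question, answer) triples."""
    yield ("count_people", "How many people are present?", str(len(frame["people"])))
    tools = ", ".join(sorted(frame["tools"])) or "none"
    yield ("tools_in_use", "Which tools are in use?", tools)
    yield ("current_action", "What action is underway?", frame["action"])

def build_pool(frames):
    """Generate every question for every frame (the 'huge pool')."""
    pool = []
    for frame in frames:
        for task, question, answer in make_questions(frame):
            pool.append({"frame_id": frame["frame_id"], "task": task,
                         "question": question, "answer": answer})
    return pool

def balanced_subsample(pool, per_task, seed=0):
    """Keep at most `per_task` randomly chosen examples per task type,
    so that no single task type dominates the training set."""
    rng = random.Random(seed)
    by_task = defaultdict(list)
    for item in pool:
        by_task[item["task"]].append(item)
    subset = []
    for task in sorted(by_task):
        items = by_task[task]
        rng.shuffle(items)
        subset.extend(items[:per_task])
    return subset

pool = build_pool(frames)
subset = balanced_subsample(pool, per_task=2)
print(len(pool), len(subset))  # 9 6
```

Capping per task type is only one simple way to encourage diversity. A real benchmark would also need to balance answers, scenes, and data sources, and to keep test recordings separate from training ones.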

A Foundation Model Built for the Operating Room

Using this benchmark, the team trained ORQA, a specialized foundation model that fuses many streams of surgical data. Separate encoders handle video frames, 3D point clouds, sound, speech transcripts, robot telemetry, and tracking data, turning them into a shared numerical representation. A large language model then reasons over this combined signal to answer questions about the scene. On the ORQA benchmark, this domain‑tuned system more than doubles the performance of general models, and it does so across a wide range of tasks—recognizing actions, locating instruments, reasoning about distances and roles, and checking safety‑related conditions. The model can even be extended with memory structures that keep track of how the operation has unfolded over time, hinting at future gains from richer temporal modeling.
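The "separate encoders, shared representation" design can be illustrated with a toy Python sketch. In ORQA the encoders and projections are learned neural networks. Here the encoders (the centroid of a point cloud, the RMS and peak of an audio clip, raw robot joint readings), the random projection matrices, and the shared size `SHARED_DIM` are hypothetical stand-ins, chosen only so the example runs with the standard library. Each modality is first reduced to its own feature vector and then mapped to the same size. A language model could then read the resulting vectors as extra "tokens" alongside the question.

```python
import math
import random
from typing import Callable, Dict, List, Sequence

Vector = List[float]
SHARED_DIM = 8  # hypothetical size of the shared representation

def make_projection(in_dim: int, out_dim: int, seed: int) -> Callable[[Vector], Vector]:
    """Random linear map standing in for a learned projection layer."""
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    def project(x: Vector) -> Vector:
        assert len(x) == in_dim, "feature size must match the projection input"
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return project

# Toy per-modality encoders: each turns raw input into a fixed-size feature vector.
def encode_points(points: Sequence[Sequence[float]]) -> Vector:
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(3)]  # centroid (x, y, z)

def encode_audio(samples: Sequence[float]) -> Vector:
    energy = sum(s * s for s in samples) / len(samples)
    return [math.sqrt(energy), max(abs(s) for s in samples)]  # RMS and peak amplitude

def encode_telemetry(joints: Sequence[float]) -> Vector:
    return list(joints)  # assumes exactly 6 robot joint readings

ENCODERS = {
    "point_cloud": (encode_points, 3),
    "audio": (encode_audio, 2),
    "robot": (encode_telemetry, 6),
}
PROJECTIONS = {name: make_projection(dim, SHARED_DIM, seed=i)
               for i, (name, (_, dim)) in enumerate(sorted(ENCODERS.items()))}

def fuse(inputs: Dict[str, object]) -> List[Vector]:
    """Encode each available modality and project it into the shared space.
    Modalities missing from `inputs` are skipped; output order is alphabetical."""
    tokens = []
    for name in sorted(ENCODERS):
        if name in inputs:
            encoder, _ = ENCODERS[name]
            tokens.append(PROJECTIONS[name](encoder(inputs[name])))
    return tokens

tokens = fuse({"point_cloud": [[0.0, 0.0, 0.0], [2.0, 2.0, 2.0]], "audio": [0.1, -0.3, 0.2]})
print(len(tokens), len(tokens[0]))  # 2 8
```

Because every modality ends up the same size, new sensors can be added by writing one more encoder and projection, without changing the language model that reads the result.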

Making Surgical AI Fast and Practical

Powerful models are often too large for real‑time use inside hospitals, where computers may be small and network connections to remote servers are restricted for privacy reasons. To address this, the authors used a process called distillation, in which smaller "student" models are trained to reproduce the outputs of a large "teacher" model. They produced three compact ORQA variants that run several times faster while preserving most of the original accuracy. These lighter models can operate locally on a single graphics card or edge device. This allows multiple stations in an operating room to be monitored at once and avoids the need to stream sensitive patient data to the cloud. The structured, traceable outputs (such as lists of people, tools, and their interactions) also make it easier for clinicians to inspect, audit, and trust the system's behavior.
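The teacher-student idea can be illustrated with the classic soft-label distillation loss, sketched here in plain Python. The article does not give ORQA's exact training objective, so this standard textbook formulation is an assumption. The student is penalized both for disagreeing with the teacher's softened answer distribution (a KL-divergence term at temperature `temperature`) and for missing the true answer (ordinary cross-entropy), and `alpha` balances the two. The parameter defaults are illustrative.

```python
import math
from typing import List, Sequence

def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Convert raw scores into probabilities; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q): how far the student's distribution q is from the teacher's p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(student_logits, teacher_logits, true_index,
                      temperature=2.0, alpha=0.5):
    """Blend of soft-target loss (match the teacher) and hard-label loss (match the truth)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # Scaling by T^2 keeps the soft term's gradient size comparable across temperatures.
    soft = kl_divergence(p_teacher, p_student) * temperature ** 2
    hard = -math.log(softmax(student_logits)[true_index])
    return alpha * soft + (1 - alpha) * hard

teacher = [2.0, 1.0, 0.1]
print(distillation_loss(teacher, teacher, true_index=0, alpha=1.0))  # 0.0
```

When the student's scores match the teacher's exactly, the soft term vanishes. Training therefore pushes a smaller network toward the teacher's behavior, including how confident it is in near-miss answers, and not only toward its top answer.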

What This Means for Future Surgery

In simple terms, the study shows that surgery needs its own kind of AI, trained directly on the sights and sounds of real operations rather than on general web content. ORQA demonstrates that when a model is exposed to the right multimodal surgical data, it can reliably track who is doing what, where tools are, how the procedure is progressing, and whether something unsafe might be happening. While much work remains before such systems can directly guide surgery, ORQA and its benchmark provide a foundation for smarter assistants, better documentation, and, eventually, more autonomous and coordinated operating rooms.

Citation: Özsoy, E., Pellegrini, C., Bani-Harouni, D. et al. Specialized foundation models for intelligent operating rooms. npj Digit. Med. 9, 362 (2026). https://doi.org/10.1038/s41746-026-02631-4

Keywords: surgical AI, operating room, multimodal models, medical robotics, patient safety