
YOLO-MFD: a multi-scale feature and dynamic head framework for prefabricated shoreline underwater object detection

Smarter Eyes Beneath City Shorelines

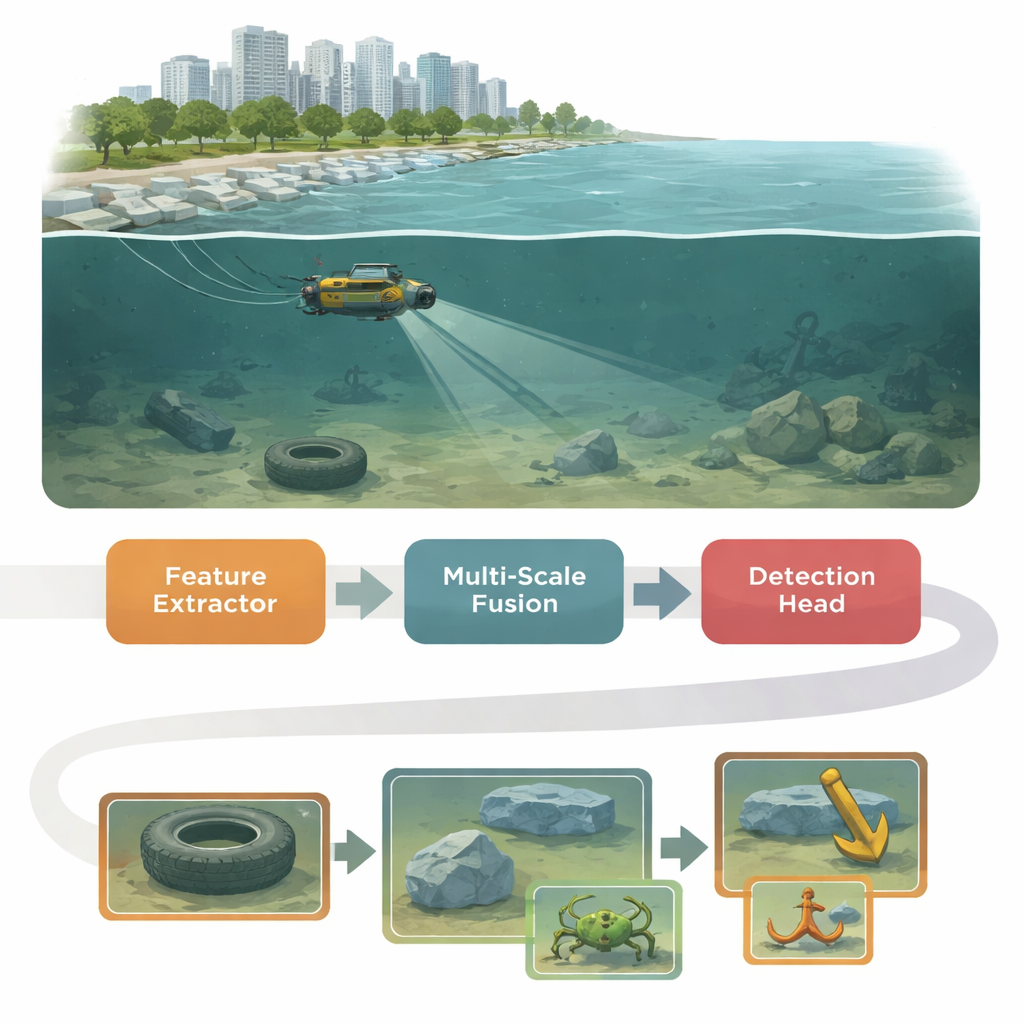

As cities build more walls, piers, and prefabricated revetments along rivers and lakes, much of the critical infrastructure ends up hidden underwater. Checking whether these blocks are stable, cracked, or cluttered with debris is hard, especially in cloudy, shallow water where visibility is poor. This paper introduces YOLO-MFD, a new computer vision system that helps underwater robots spot small, faint objects along shorelines more reliably and quickly, even when the water is murky and the scene is crowded.

Why Underwater Pictures Are So Hard to Read

Rivers, lakes, and urban shore waters are rarely crystal clear. Light is absorbed and scattered, colors shift toward green or blue, and suspended particles blur edges. Small creatures, marine litter, or defects in prefabricated shoreline blocks can be tiny, low-contrast, and densely packed. Standard object-detection systems, originally designed for clear street scenes, tend to miss these targets or confuse them with background clutter. At the same time, inspection robots and embedded devices used near shorelines have limited computing power, so any solution must be both accurate and efficient.

A Three-Part Brain for Murky Water

YOLO-MFD builds on the popular YOLO family of real-time detectors but reshapes its internal “brain” in three coordinated stages. First, a new backbone called CUMANet (Cross-scale Unified Multi-scale Attention Network) learns to extract features from images while paying attention to broad context. It uses parallel branches and a specialized convolution that behaves like a multi-branch module during training but simplifies to a single, efficient operation during deployment. This helps the network look beyond local noise, capture long-range cues, and keep important details from being washed out by turbidity and color distortion.
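
The "multi-branch during training, single operation during deployment" idea is commonly called structural re-parameterization. This summary does not give CUMANet's actual branch layout, so the sketch below assumes a hypothetical two-branch case (one 3×3 and one 1×1 convolution on a single channel, with no bias or normalization). It only illustrates why the merge is exact: convolution is linear, so the 1×1 kernel can be added to the centre of the 3×3 kernel.

```python
def conv2d_same(image, kernel):
    """Single-channel 2D cross-correlation with zero padding (same-size output)."""
    k = len(kernel)
    pad = k // 2
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in range(k):
                for dx in range(k):
                    yy, xx = y + dy - pad, x + dx - pad
                    if 0 <= yy < h and 0 <= xx < w:
                        acc += image[yy][xx] * kernel[dy][dx]
            out[y][x] = acc
    return out


def merge_branches(kernel3, kernel1):
    """Fold a 1x1 kernel into the centre tap of a 3x3 kernel."""
    merged = [row[:] for row in kernel3]
    merged[1][1] += kernel1[0][0]
    return merged


def add_maps(a, b):
    """Element-wise sum of two equally sized 2D maps."""
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


# Toy input and kernels (illustrative values, not from the paper).
image = [[1.0, 2.0, 0.0, 1.0],
         [0.0, 1.0, 3.0, 2.0],
         [2.0, 0.0, 1.0, 1.0],
         [1.0, 1.0, 0.0, 2.0]]
k3 = [[0.5, -1.0, 0.25],
      [1.0, 2.0, -0.5],
      [0.0, 0.75, 1.5]]
k1 = [[-0.5]]

# "Training" form: two parallel branches whose outputs are summed.
train_out = add_maps(conv2d_same(image, k3), conv2d_same(image, k1))
# "Deployment" form: one convolution with the merged kernel.
deploy_out = conv2d_same(image, merge_branches(k3, k1))

max_diff = max(abs(p - q)
               for rt, rd in zip(train_out, deploy_out)
               for p, q in zip(rt, rd))
```

Here `max_diff` stays at zero up to floating-point rounding, so the deployed model pays for one convolution while producing the same output as the two training branches.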

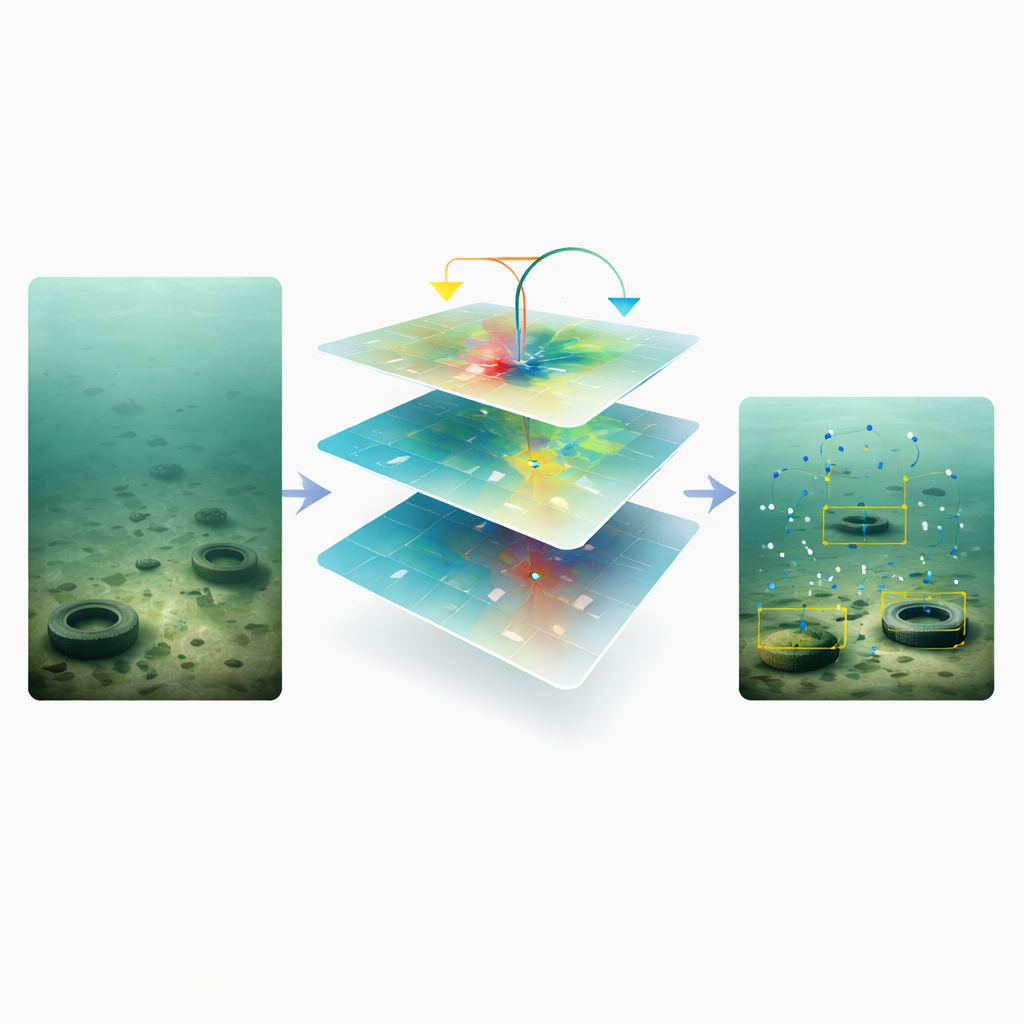

Keeping Track of Tiny Clues at Different Scales

The second stage, Adaptive Feature Modulation (AFM), tackles a common weakness in vision systems: when combining information from coarse and fine resolutions, small-scale details often get drowned out. AFM brings together two feature maps by first aligning their sizes and channels, then computing gentle, independent gates for each branch. Instead of forcing one scale to dominate, AFM lets both contribute whenever they carry useful signals, and adds a residual shortcut to avoid losing weak but important patterns. This balanced multi-scale fusion is especially helpful for spotting small sea cucumbers, starfish, or cracks in concrete that barely stand out from the background.
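
The fusion steps described above (align, gate each branch independently, add a residual) can be sketched in a few lines. The summary does not give AFM's equations, so everything specific here is an assumption made for illustration: nearest-neighbour upsampling for alignment, sigmoid gates, the scalar weights `w_fine` and `w_coarse` standing in for learned parameters, and a residual taken from the fine branch.

```python
import math


def upsample_nearest(fmap, factor):
    """Nearest-neighbour upsampling of a 2D map by an integer factor."""
    return [[v for v in row for _ in range(factor)]
            for row in fmap for _ in range(factor)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gated_fuse(fine, coarse, w_fine=1.0, w_coarse=1.0):
    """Fuse two single-channel maps with independent gates plus a residual.

    w_fine and w_coarse are hypothetical stand-ins for learned gate weights.
    """
    factor = len(fine) // len(coarse)
    coarse_up = upsample_nearest(coarse, factor)  # align spatial sizes
    fused = []
    for row_f, row_c in zip(fine, coarse_up):
        out_row = []
        for f, c in zip(row_f, row_c):
            g_f = sigmoid(w_fine * f)    # gate for the fine branch
            g_c = sigmoid(w_coarse * c)  # separate gate, not 1 - g_f
            out_row.append(g_f * f + g_c * c + f)  # "+ f" is the residual
        fused.append(out_row)
    return fused


# Toy maps (illustrative values): a faint detail at (0, 0) in the fine map
# and a strong response in the bottom-right cell of the coarse map.
fine = [[0.1, 0.0, 0.0, 0.0],
        [0.0, 0.0, 0.0, 0.0],
        [0.0, 0.0, 2.0, 0.0],
        [0.0, 0.0, 0.0, 0.0]]
coarse = [[0.0, 0.0],
          [0.0, 3.0]]

fused = gated_fuse(fine, coarse)
```

Because the two gates are computed separately, a strong coarse response does not force the fine response toward zero, and the residual term keeps the faint value at `fused[0][0]` above its original 0.1.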

A More Flexible Final Decision Maker

The final stage, DPNDyHead (Dual-Pooling and Normalized Dynamic Head), refines features just before the system decides what and where objects are. It borrows the idea of deformable convolutions, which shift their sampling points to better follow blurred or distorted shapes underwater. To handle objects of very different sizes, DPNDyHead uses both average and max pooling across scales, blending global context with sharp local responses such as edges or textures. A normalization step steadies the feature statistics before generating task-specific activations, reducing the impact of color shifts and uneven lighting. Together, these tricks help align the confidence of classification (what the object is) with the precision of localization (where it is).
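
To make the dual-pooling and normalization idea concrete, the sketch below computes one weight per feature-pyramid level. It is not the paper's DPNDyHead: the equal blend of average and max pooling, the normalization across levels, and the hard-sigmoid activation are all assumptions chosen to keep the example small.

```python
import math


def hard_sigmoid(x):
    """Piecewise-linear squashing to the range [0, 1]."""
    return max(0.0, min(1.0, (x + 1.0) / 2.0))


def dual_pool(level):
    """Return (average, maximum) over all values of one pyramid level."""
    flat = [v for row in level for v in row]
    return sum(flat) / len(flat), max(flat)


def normalize(values, eps=1e-5):
    """Shift and scale a list to zero mean and (near) unit variance."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


def scale_weights(levels):
    """One attention-style weight per level from blended avg/max descriptors."""
    descriptors = []
    for level in levels:
        avg, mx = dual_pool(level)
        descriptors.append(0.5 * (avg + mx))  # equal blend is an assumption
    return [hard_sigmoid(d) for d in normalize(descriptors)]


# Toy pyramid levels (illustrative values).
levels = [
    [[0.0, 0.1], [0.0, 0.1]],  # weak, flat response
    [[0.0, 0.0], [0.0, 4.0]],  # one sharp peak, average 1.0
    [[1.0, 1.0], [1.0, 1.0]],  # uniform response, average 1.0
]
weights = scale_weights(levels)
```

The second and third levels have the same average, so average pooling alone could not tell them apart. Adding the max-pooled term gives the level with the sharp peak the largest weight, which is the kind of local response the text describes.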

How Well Does It Work in the Real World?

The authors tested YOLO-MFD on two public underwater datasets, DUO and UDD, drawn from aquaculture and open-sea farms, which include many small, crowded targets and strong image degradation. On both datasets, the new framework outperformed classic two-stage detectors, anchor-free methods, modern Transformer-based models, and recent YOLO variants. It achieved higher mean Average Precision and recall, meaning it found more of the true objects without a matching rise in false alarms, and it did so with only a few million parameters and modest computing power. Detailed experiments showed that each of the three modules (CUMANet, AFM, and DPNDyHead) contributed measurable gains, and their combination yielded the best overall balance of accuracy, robustness, and speed.
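
For readers unfamiliar with the metrics, the sketch below shows how precision, recall, and Average Precision (AP) are typically computed for one object class from a ranked list of detections. The detection list is invented for illustration, and the paper's exact evaluation protocol (for example, its overlap thresholds) is not stated in this summary. Mean Average Precision is the mean of such AP values over classes.

```python
def precision_recall_curve(is_true_positive, num_ground_truth):
    """Running precision and recall; detections sorted by descending confidence."""
    tp = fp = 0
    precisions, recalls = [], []
    for hit in is_true_positive:
        if hit:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))      # correct detections / all detections
        recalls.append(tp / num_ground_truth)  # correct detections / all real objects
    return precisions, recalls


def average_precision(precisions, recalls):
    """Area under the precision-recall curve using a monotone envelope."""
    envelope = precisions[:]
    # Make precision non-increasing as recall grows (scan right to left).
    for i in range(len(envelope) - 2, -1, -1):
        envelope[i] = max(envelope[i], envelope[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(envelope, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


# Five hypothetical detections, most confident first; 4 real objects exist.
hits = [True, True, False, True, False]
precisions, recalls = precision_recall_curve(hits, num_ground_truth=4)
ap = average_precision(precisions, recalls)
```

In this toy case the detector finds 3 of the 4 real objects (recall 0.75) with 3 of its 5 detections correct (precision 0.6), and the AP works out to 0.6875.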

Clearer Insight for Safer Shores

In practical terms, this work offers underwater robots and monitoring systems a sharper, more reliable view of what lies along urban shorelines and engineered riverbanks. By designing an object detector that explicitly counters murky water, scale imbalance, and misaligned predictions, the authors provide a tool that can better track infrastructure health, support ecological surveys, and guide intelligent management of prefabricated shoreline structures. As future work explores broader environments and even lighter versions of the model, methods like YOLO-MFD could become a key part of routine underwater inspection, helping keep coastal cities and inland waterways safer and better maintained.

Citation: Gang, Y., Li, T., Li, S. et al. YOLO-MFD: a multi-scale feature and dynamic head framework for prefabricated shoreline underwater object detection. Sci Rep 16, 10971 (2026). https://doi.org/10.1038/s41598-026-45591-1

Keywords: underwater object detection, shoreline infrastructure, computer vision, autonomous underwater vehicles, deep learning