Clear Sky Science

A behaviour-adaptive AI assistant enhancing accessibility and usability for blind users through real-time interaction personalization

Why Smarter Voices Matter

Talking computers are becoming common in our phones, speakers, and laptops. But for people who cannot see, these voices are more than a convenience—they are a lifeline to information, work, and daily tasks. This paper introduces AURA, a new kind of talking assistant designed to listen not just to what blind users say, but to how they react in real time, gently reshaping its own speaking style so it becomes easier to follow and less tiring to use.

Everyday Tools That Still Fall Short

Today’s screen readers and voice assistants read out screens or answer questions, but they usually talk to everyone in the same way. They tend to speak at a fixed speed, offer either too much or too little detail, and march through content in a strict order. For many blind users, this “one size fits all” approach leads to repeated replays, frequent skips, and mental overload as they try to keep up or dig out what matters. Past research has shown that changing speech speed, amount of detail, and how complex the language is can make a big difference, yet most tools do not adjust automatically as a conversation unfolds.

A New Kind of Listening

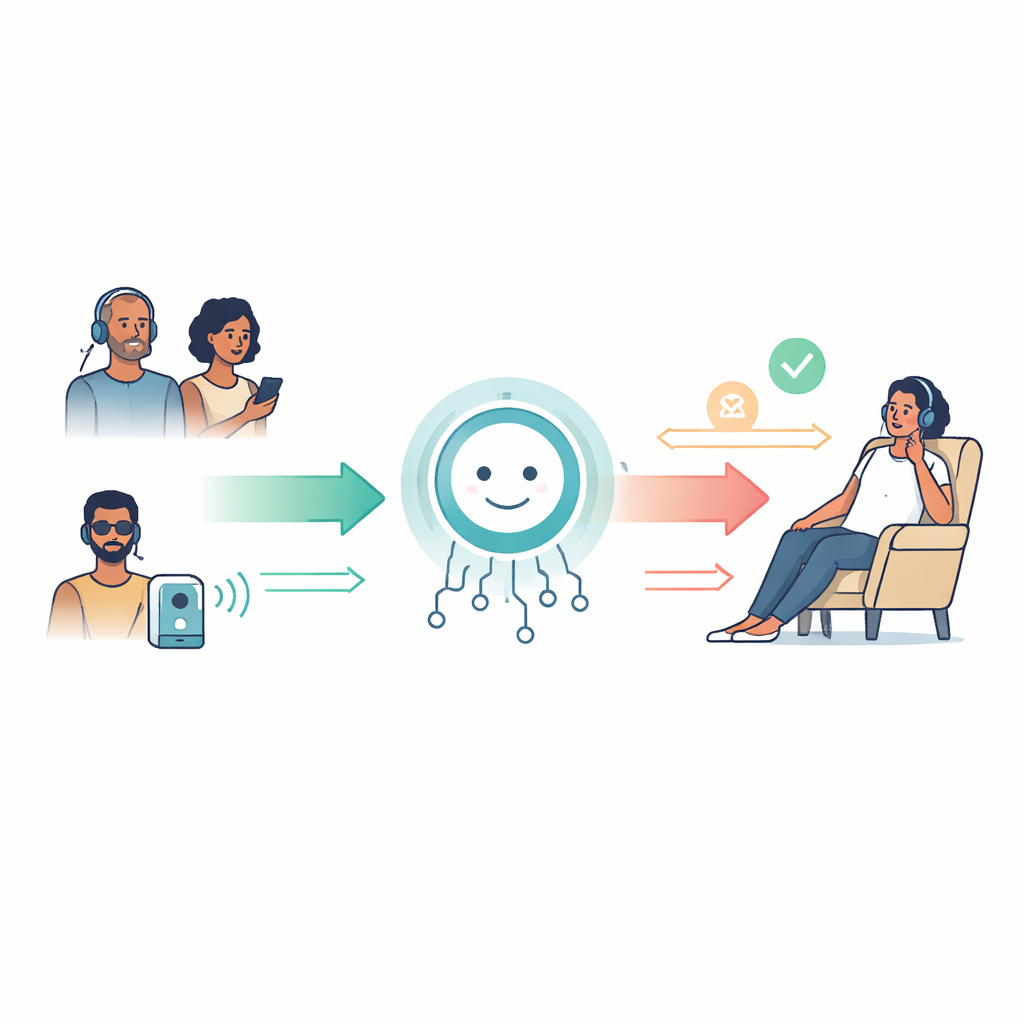

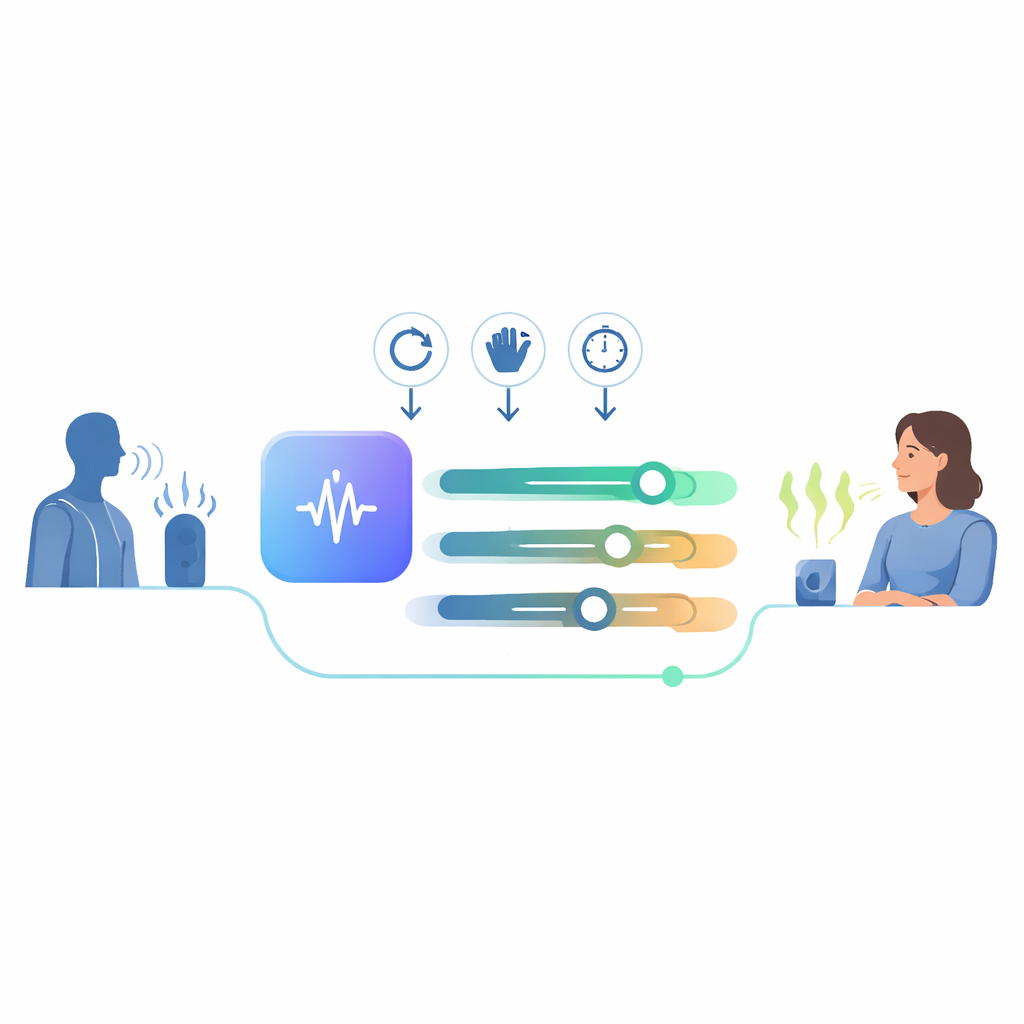

AURA (Adaptive User-Responsive Assistant) is built to change that pattern. It is a voice-based system that combines a powerful language model—the same general technology behind advanced chatbots—with a simple but smart way of watching how a user behaves during a session. Instead of guessing from long surveys or fixed user profiles, AURA watches three natural signals: how often a user replays a response, how often they cut a message short, and how long they listen before acting. These clues do not need any extra hardware, do not expose private data like eye movements or heart rate, and fit naturally into how people already use talking systems.

How the Assistant Adapts on the Fly

Inside AURA, the interaction runs in a closed loop. First, the user speaks, and their words are turned into text. The system then fetches a lightweight profile that stores three adjustable dials: how fast it should speak, how long its answers should be, and how simple or complex its language should sound. This profile shapes the prompt sent to the language model, which then crafts a response that already aims to match the user’s current style. The text is turned into speech using the chosen settings and played back to the user. During and after this response, AURA quietly logs whether the user replays, skips, or listens straight through, then tweaks the profile for the next turn. Over a few back-and-forth exchanges, the assistant “homes in” on a way of speaking that better fits the listener, without the user ever having to open a settings menu.
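
For readers who think in code, the step where the profile shapes the prompt might look roughly like the sketch below. It is an illustration, not the paper's implementation: the `Profile` fields, their 1-to-5 scales, the prompt wording, and both function names are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    """The three dials described above (names and scales are hypothetical)."""
    speech_rate: float = 1.0  # text-to-speech speed multiplier, 1.0 = normal
    verbosity: int = 3        # 1 = briefest answers, 5 = most detailed
    complexity: int = 3       # 1 = simplest language, 5 = most complex


def build_prompt(profile: Profile, user_text: str) -> str:
    """Fold the length and language dials into the instruction for the model."""
    style = "plain, everyday words" if profile.complexity <= 2 else "standard vocabulary"
    return (
        f"Limit the answer to {profile.verbosity} sentence(s), using {style}.\n"
        f"User: {user_text}"
    )


def speech_settings(profile: Profile) -> dict:
    """The speed dial goes to the speech synthesiser, not to the language model."""
    return {"rate": profile.speech_rate}
```

In this sketch, two of the dials travel inside the prompt while the third is applied only when the text is turned into speech, mirroring the order of steps in the loop.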

Testing the Idea in a Safe Sandbox

To see whether this rule-based adaptation actually behaves sensibly, the researcher did not start with human volunteers. Instead, the study used simulated user profiles that mimic three common patterns: one that replays a lot because it struggles to catch details, one that skips a lot because answers feel too long, and one that prefers fast, dense responses. For each profile, the system ran many short sessions both with and without adaptation. The study then measured how often replays and skips happened, how long tasks took, and whether the assistant’s internal settings settled into a stable pattern that matched the intended profile. While no formal statistics were run—this was a feasibility check rather than a full user trial—the numbers showed clear shifts.
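
A toy version of such a sandbox comparison can be written in a few lines. The sketch below assumes a deliberately simple, deterministic listener who replays any response spoken faster than a comfortable rate; the function name, the starting rate, and the step size are all hypothetical, and the counts it produces come from these made-up parameters, not from the study.

```python
def run_session(comfortable_rate, adaptive, turns=10, start_rate=1.2, step=0.1):
    """Count replays for a simulated replay-heavy listener.

    The listener replays every response spoken faster than `comfortable_rate`.
    With `adaptive=True`, each replay slows the next response by `step`.
    """
    rate = start_rate
    replays = 0
    for _ in range(turns):
        if rate > comfortable_rate:
            replays += 1
            if adaptive:
                # Rounding keeps repeated subtraction free of float drift.
                rate = round(rate - step, 2)
    return replays


fixed_replays = run_session(comfortable_rate=1.0, adaptive=False)
adaptive_replays = run_session(comfortable_rate=1.0, adaptive=True)
```

Running the same simulated listener with and without adaptation, and comparing the two replay counts, is the basic shape of the comparison described above.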

What the Early Numbers Suggest

Under replay-heavy conditions, the adaptive version of AURA cut replay events by roughly two thirds compared with a fixed, non-adaptive setup. In skip-heavy conditions, skips dropped by about half once the system learned to keep answers shorter and more to the point. Across all simulated profiles, the assistant reached stable settings that matched the target style in most sessions, and completing a standard multi-step task took about one fifth less time with adaptation turned on. Importantly, the adaptation rules were simple and transparent: repeated replays nudged the assistant toward slower speech and simpler language, while frequent skips nudged it toward briefer, denser replies. This design keeps the system easier to understand and debug than a black-box learning model—a key concern for safety and trust in assistive technology.
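
The two rules named above are simple enough to sketch directly. The version below is a minimal illustration under stated assumptions: the threshold of two events, the step sizes, the value ranges, and every name in it are invented for the example, and "denser" is modelled only as a shorter answer.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Profile:
    """Hypothetical profile; field names and scales are assumptions."""
    speech_rate: float = 1.0  # 1.0 = normal speed
    verbosity: int = 3        # 1 = briefest answers, 5 = most detailed
    complexity: int = 3       # 1 = simplest language, 5 = most complex


def clamp(value, low, high):
    """Keep a dial inside its allowed range."""
    return max(low, min(high, value))


def adapt(profile, replays, skips, threshold=2):
    """Return an updated profile based on the last turn's replay and skip counts."""
    if replays >= threshold:
        # Repeated replays: slow the speech and simplify the language.
        profile = replace(
            profile,
            speech_rate=clamp(round(profile.speech_rate - 0.1, 2), 0.5, 2.0),
            complexity=clamp(profile.complexity - 1, 1, 5),
        )
    if skips >= threshold:
        # Frequent skips: shorten the answers (assumed stand-in for "briefer, denser").
        profile = replace(profile, verbosity=clamp(profile.verbosity - 1, 1, 5))
    return profile
```

Because each rule is a plain if-statement, anyone inspecting the system can see exactly why a setting changed, which is the transparency the paragraph above refers to.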

What This Means for Real People

For readers outside the research world, the main takeaway is that talking computers can become more considerate listeners. By paying attention to natural signals like “Did you replay that?” or “Did you cut me off?”, an assistant can quickly learn to talk in a way that is less frustrating and more efficient, especially for blind and visually impaired users who depend on audio. The current work does not yet prove better day-to-day experience, because it was tested with computer-generated behaviour rather than real people. But it lays the groundwork—both technical and conceptual—for future studies with blind users, richer conversations, and support in multiple languages. If these next steps succeed, tools like AURA could help shift assistive technology from rigid, one-way reading machines to responsive partners that adapt themselves in real time to the people who rely on them most.

Citation: Algamdi, S.A. A behaviour-adaptive AI assistant enhancing accessibility and usability for blind users through real-time interaction personalization. Sci Rep 16, 12666 (2026). https://doi.org/10.1038/s41598-026-43320-2

Keywords: blind accessibility, adaptive voice assistant, behavior-aware AI, large language models, assistive technology