Clear Sky Science

Backpropagation-free spiking neural networks with the forward–forward algorithm

Teaching Computers to Think in Spikes

Many of today’s smartest machines learn using math that looks nothing like what happens in the brain. This paper explores a new way to train “spiking” neural networks—computer models that communicate with brief electrical pulses, much like real neurons do—without relying on the standard backpropagation recipe. The authors show that a brain-inspired method called the forward–forward algorithm can teach these spiking networks to recognize images and sounds with accuracy close to, and sometimes better than, the best existing methods, while being more compatible with low-power, neuromorphic hardware.

Why Spiking Brains Are Hard to Train

Spiking neural networks process information using discrete pulses, or spikes, over time rather than smooth, continuous numbers. This makes them attractive for energy-efficient computing and for mimicking biological brains. But it also creates a headache for conventional learning algorithms: backpropagation needs smooth gradients to adjust the connections between neurons, while spikes are all-or-nothing events whose slope is zero almost everywhere. Workarounds exist, such as surrogate gradients that pretend spikes are smooth during learning, but backpropagation still requires storing large amounts of intermediate activity and sending precise error signals backward through every layer. Those requirements are computationally heavy and biologically unrealistic.
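To see why all-or-nothing spikes trouble gradient-based learning, the short Python sketch below compares the true slope of a threshold spike function with a smooth stand-in. The fast-sigmoid form, the function names, and the `steepness` value are illustrative assumptions. This is one common surrogate among several, and not necessarily the one the authors use.

```python
def spike(v, threshold=1.0):
    """All-or-nothing spike: 1 if the membrane potential reaches the threshold."""
    return 1.0 if v >= threshold else 0.0


def numerical_slope(f, v, eps=1e-4):
    """Central-difference estimate of the slope of f at v."""
    return (f(v + eps) - f(v - eps)) / (2 * eps)


def surrogate_slope(v, threshold=1.0, steepness=5.0):
    """Slope of a fast sigmoid x / (1 + k|x|), used in place of the step's slope.

    Assumption: this particular surrogate is chosen only for illustration.
    """
    return 1.0 / (1.0 + steepness * abs(v - threshold)) ** 2


if __name__ == "__main__":
    for v in (0.5, 0.9, 1.0, 1.5):
        print(v, numerical_slope(spike, v), surrogate_slope(v))
```

Away from the threshold the true slope is exactly zero, so no learning signal would flow. The surrogate keeps a small nonzero slope that peaks at the threshold, which is what lets gradient-based updates proceed.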

A Different Path: Learning with Two Forward Passes

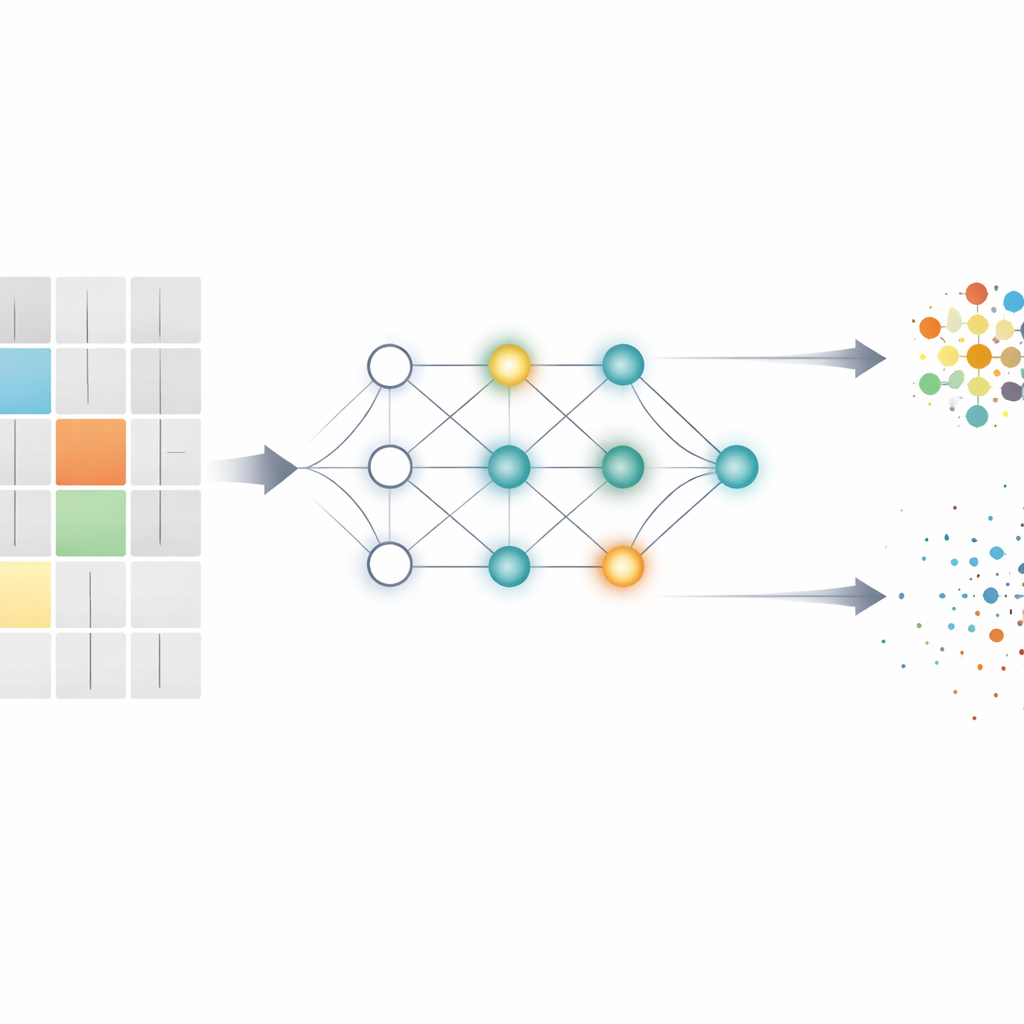

The forward–forward algorithm offers a contrasting approach. Instead of one forward pass followed by a backward sweep of error signals, each layer of the network is trained using two forward passes only: one with “positive” examples that match the correct label, and one with “negative” examples constructed to be wrong in a controlled way. For each layer, the network measures a simple score called “goodness,” based on how strongly its neurons respond. The goal is to make goodness high for positive inputs and low for negative ones. Because each layer uses only its own activity to update its connections, there is no need to send error signals back through the entire network, and the algorithm becomes more local, modular, and hardware-friendly.
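The layer-local objective described above can be sketched in a few lines of Python. This is a minimal illustration, assuming goodness is the sum of squared activities compared against a fixed `threshold`, as in the original non-spiking forward–forward proposal. The function names and the threshold value are hypothetical, and the spiking variant covered in this article measures goodness differently.

```python
import math


def goodness(activities):
    """Sum of squared activities of one layer (assumed definition)."""
    return sum(a * a for a in activities)


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def layer_loss(pos_activities, neg_activities, threshold=2.0):
    """Loss for a single layer, computed from that layer's own activity only.

    It is small when goodness is above the threshold for the positive pass
    and below the threshold for the negative pass.
    """
    g_pos = goodness(pos_activities)
    g_neg = goodness(neg_activities)
    return softplus(threshold - g_pos) + softplus(g_neg - threshold)


if __name__ == "__main__":
    # A layer that responds strongly to the positive input and weakly to the negative one.
    print(layer_loss([2.0, 2.0], [0.1, 0.1]))
    # The reverse situation, which the loss penalizes.
    print(layer_loss([0.1, 0.1], [2.0, 2.0]))
```

Because `layer_loss` depends only on one layer's activities, each layer can be updated without waiting for an error signal from the layers above it.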

Making Forward–Forward Work with Spikes

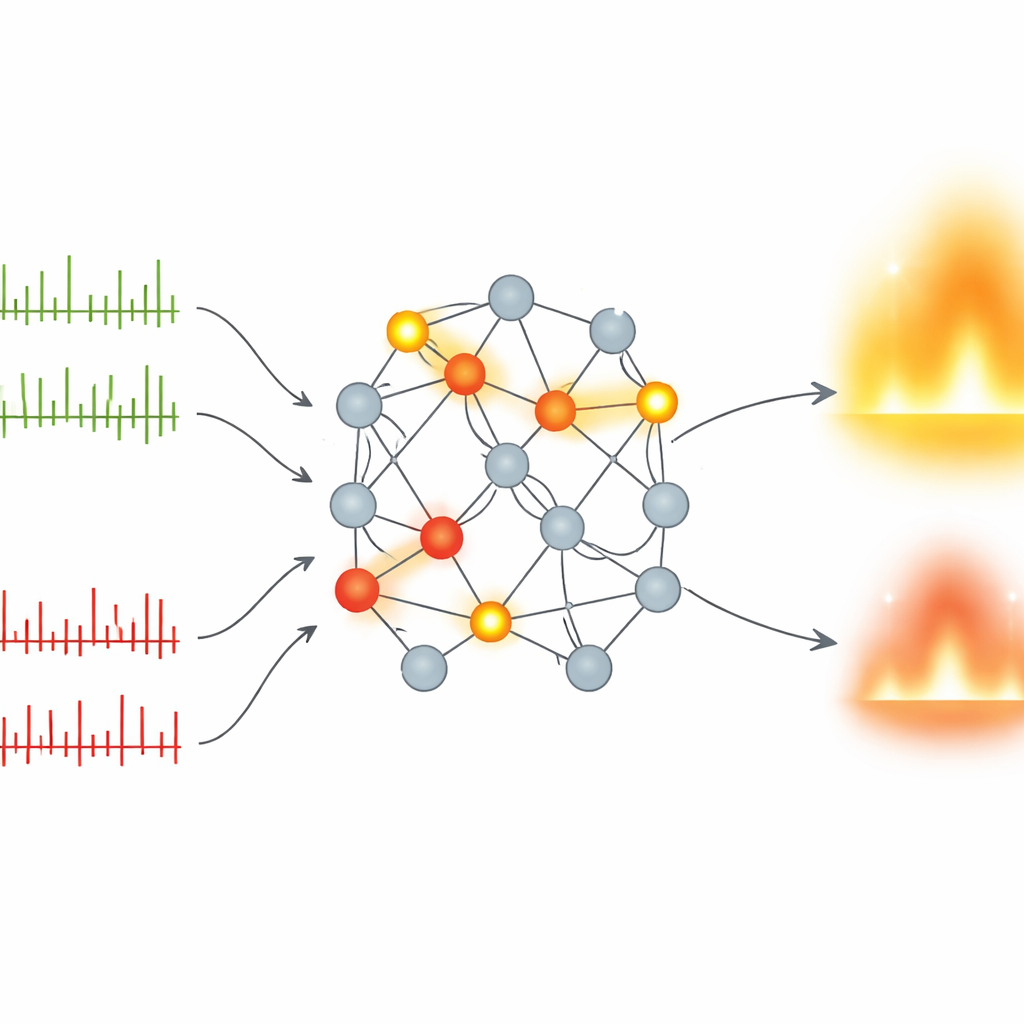

The authors adapt this idea to spiking networks by carefully designing how inputs and labels are encoded and how goodness is measured. First, images or sound patterns are turned into spike trains using rate coding—more intense inputs produce more spikes over a short series of time steps. Labels are embedded directly into part of the input, so a single vector carries both the data and a candidate class. Positive samples use the correct label; negative samples use a deliberately “hard” incorrect label chosen from classes the network tends to confuse. As spikes flow through layers of leaky integrate-and-fire neurons, the model counts how often each neuron fires for positive and negative passes. The goodness of a layer is then defined by the overall spike counts, and a smooth loss function encourages positive goodness to exceed negative goodness by a comfortable margin while keeping gradients stable.
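The pipeline in the paragraph above can be sketched end to end in plain Python. Every specific here is an assumption made for illustration rather than a detail taken from the paper: Bernoulli rate coding, a one-hot label that overwrites the first few inputs, a hard reset after each spike, goodness as the mean firing rate, and a softplus margin loss. All function names, parameter values, and labels are hypothetical.

```python
import math
import random


def embed_label(inputs, label, num_classes=10):
    """Overwrite the first num_classes inputs with a one-hot candidate label."""
    one_hot = [1.0 if i == label else 0.0 for i in range(num_classes)]
    return one_hot + list(inputs[num_classes:])


def rate_encode(intensities, num_steps, rng):
    """Bernoulli rate coding: each intensity in [0, 1] is the spike probability per step."""
    return [[1 if rng.random() < x else 0 for x in intensities]
            for _ in range(num_steps)]


def lif_spike_counts(spike_train, weights, threshold=1.0, leak=0.9):
    """Run one layer of leaky integrate-and-fire neurons; return spikes per neuron."""
    potentials = [0.0] * len(weights)
    counts = [0] * len(weights)
    for spikes in spike_train:
        for j, w_j in enumerate(weights):
            current = sum(w * s for w, s in zip(w_j, spikes))
            potentials[j] = leak * potentials[j] + current  # leak, then integrate
            if potentials[j] >= threshold:                  # fire
                counts[j] += 1
                potentials[j] = 0.0                         # hard reset (assumed)
    return counts


def spike_goodness(counts, num_steps):
    """Mean firing rate of the layer, between 0 and 1 (assumed goodness measure)."""
    return sum(counts) / (len(counts) * num_steps)


def margin_loss(g_pos, g_neg, margin=0.5):
    """Softplus of (margin - gap): shrinks smoothly as g_pos exceeds g_neg by the margin."""
    x = margin - (g_pos - g_neg)
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


if __name__ == "__main__":
    rng = random.Random(0)
    image = [rng.random() for _ in range(20)]  # toy input of 20 intensities
    weights = [[rng.uniform(0.0, 0.3) for _ in range(20)] for _ in range(4)]
    steps = 10
    true_label, hard_wrong_label = 3, 8        # hypothetical labels
    g = {}
    for name, label in (("pos", true_label), ("neg", hard_wrong_label)):
        train = rate_encode(embed_label(image, label), steps, rng)
        g[name] = spike_goodness(lif_spike_counts(train, weights), steps)
    print(g, margin_loss(g["pos"], g["neg"]))
```

With the random, untrained weights used in the demo, positive and negative goodness will be similar. Training would adjust each layer's weights to lower `margin_loss`, and because spiking is not differentiable, that step would need a surrogate gradient of the kind mentioned earlier.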

How Well Does This Spiking Method Perform?

To test their approach, the authors train compact spiking networks on several standard vision benchmarks, including handwritten digits (MNIST), fashion items, Japanese characters, and color images of objects (CIFAR-10), as well as neuromorphic datasets where inputs already arrive as spikes, such as event-based digit recordings (N-MNIST) and spoken digits encoded as spike trains (SHD). Despite using only two hidden layers and as few as 10 time steps, their forward–forward spiking models match or surpass other forward–forward spiking systems and come close to the best backpropagation-trained spiking networks. On more demanding temporal tasks like SHD, their method even outperforms several backpropagation-based spiking models, all while using fewer parameters and remaining easier to map onto event-driven hardware.

What This Means for Future Brain-Like Machines

For a lay reader, the key message is that there is now a promising way to train brain-inspired, spike-based networks without relying on the heavy machinery of backpropagation. By judging each layer on how strongly it responds to good versus bad examples, and by working entirely with forward passes, the forward–forward approach keeps learning local and modular while still achieving competitive accuracy. Although some ingredients—like surrogate gradients and explicit label embedding—are not strictly biological, this framework moves machine learning a step closer to how real nervous systems might adapt, and opens a path toward more efficient, low-power intelligent devices that learn from streaming sensory data in real time.

Citation: Ghader, M., Kheradpisheh, S.R., Farahani, B. et al. Backpropagation-free spiking neural networks with the forward–forward algorithm. Sci Rep 16, 14294 (2026). https://doi.org/10.1038/s41598-026-41671-4

Keywords: spiking neural networks, forward-forward learning, neuromorphic computing, biologically inspired AI, backpropagation alternatives