Clear Sky Science

Ethical considerations in the integration of artificial intelligence into education: a novel deep neural network framework for predicting transparency scores

Why this matters for students and teachers

Classrooms around the world are rapidly adopting artificial intelligence tools to grade work, recommend courses, and flag students who may need help. Yet most people have little idea how these systems actually make decisions, or whether they treat everyone fairly. This study introduces a way to measure how "see‑through" such educational AI systems are, and presents a new model, EduTransNet, that aims to make powerful predictions while keeping fairness, privacy, and accountability at the center.

Seeing inside AI decisions

At the heart of the paper is a new yardstick called the transparency score. Instead of judging AI in education only by how accurate its predictions are, the authors ask: can we explain its reasoning, trace how inputs lead to outputs, talk about its decisions clearly with non‑experts, and show that it follows ethical rules? They bundle these four ingredients—explainability, traceability, clarity of communication, and ethical compliance—into a single score from 0 to 100. High scores signal AI systems whose behavior can be inspected and questioned by students, teachers, and administrators, rather than hidden in a black box.
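To make the idea of a bundled score concrete, here is a minimal sketch of how four component ratings could be combined into one number between 0 and 100. The summary does not say how the authors weight the four ingredients, so the equal weighting, the 0-to-1 rating scale, and the function name `transparency_score` are all assumptions made for illustration.

```python
from typing import Mapping, Optional

# The four ingredients named in the article.
COMPONENTS = ("explainability", "traceability", "clarity", "ethical_compliance")


def transparency_score(
    ratings: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Combine four component ratings (each in [0, 1]) into a 0-100 score.

    Assumption: a weighted average. Equal weights are used unless others
    are supplied; the paper's actual weighting may differ.
    """
    if weights is None:
        weights = {name: 1.0 for name in COMPONENTS}

    total_weight = sum(weights[name] for name in COMPONENTS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")

    for name in COMPONENTS:
        if not 0.0 <= ratings[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    weighted_sum = sum(weights[name] * ratings[name] for name in COMPONENTS)
    return 100.0 * weighted_sum / total_weight


example = {
    "explainability": 1.0,
    "traceability": 0.5,
    "clarity": 0.5,
    "ethical_compliance": 1.0,
}
print(transparency_score(example))  # 75.0
```

With equal weights, a system that is fully explainable and ethically compliant but only halfway traceable and clear lands at 75 out of 100. Passing a different `weights` mapping would let a school emphasize, say, ethical compliance over the other three.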

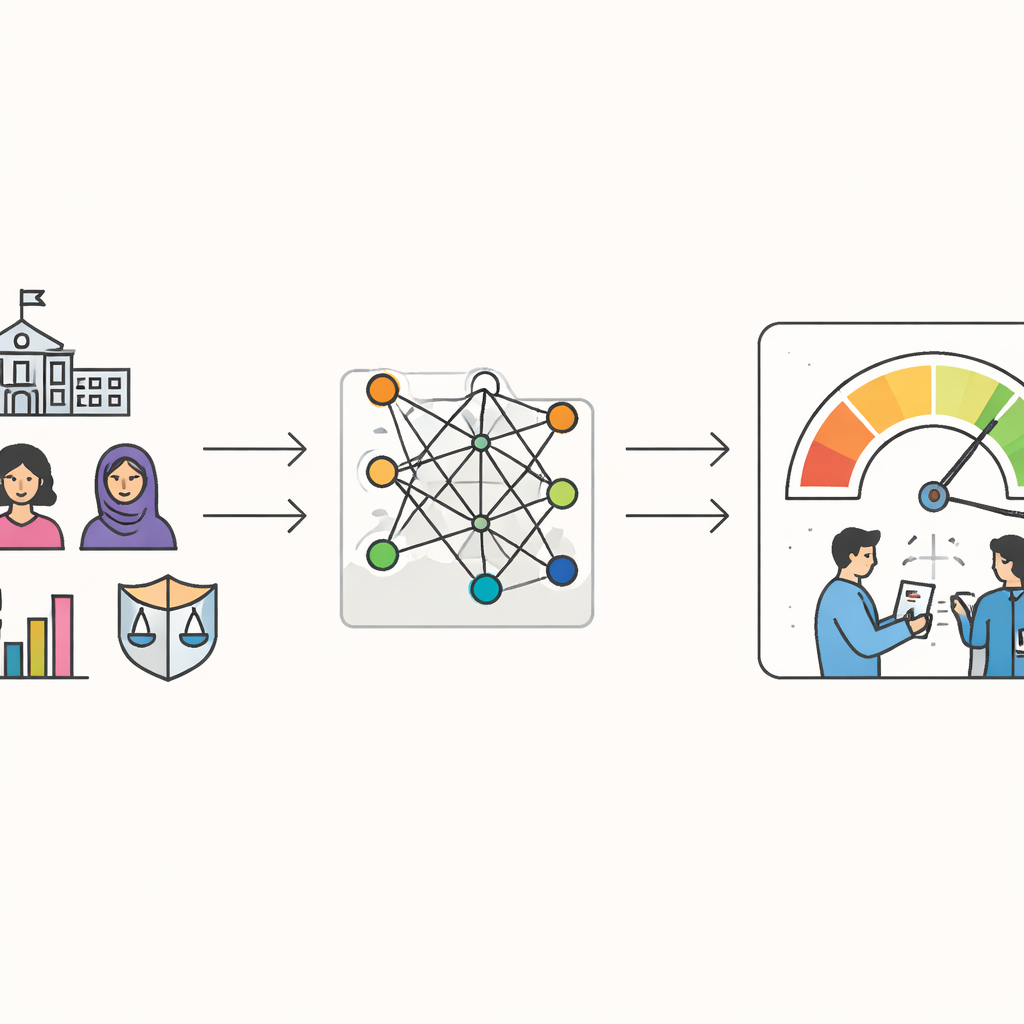

How the new model learns from students

To test this idea, the researchers collected data from 2,847 students at three universities in Pakistan. The information included basic demographics such as age and gender, study behaviors like attendance and weekly study hours, grades, and survey responses about privacy worries, awareness of algorithmic bias, sense of fairness, and expectations for openness. Using these ingredients, EduTransNet learns to predict each student’s transparency score—essentially estimating how transparent an AI‑driven decision would feel to that student and similar peers. Importantly, the model relies most on engagement and ethical perception features, and much less on demographics, suggesting it is driven more by how students act and feel than by who they are.

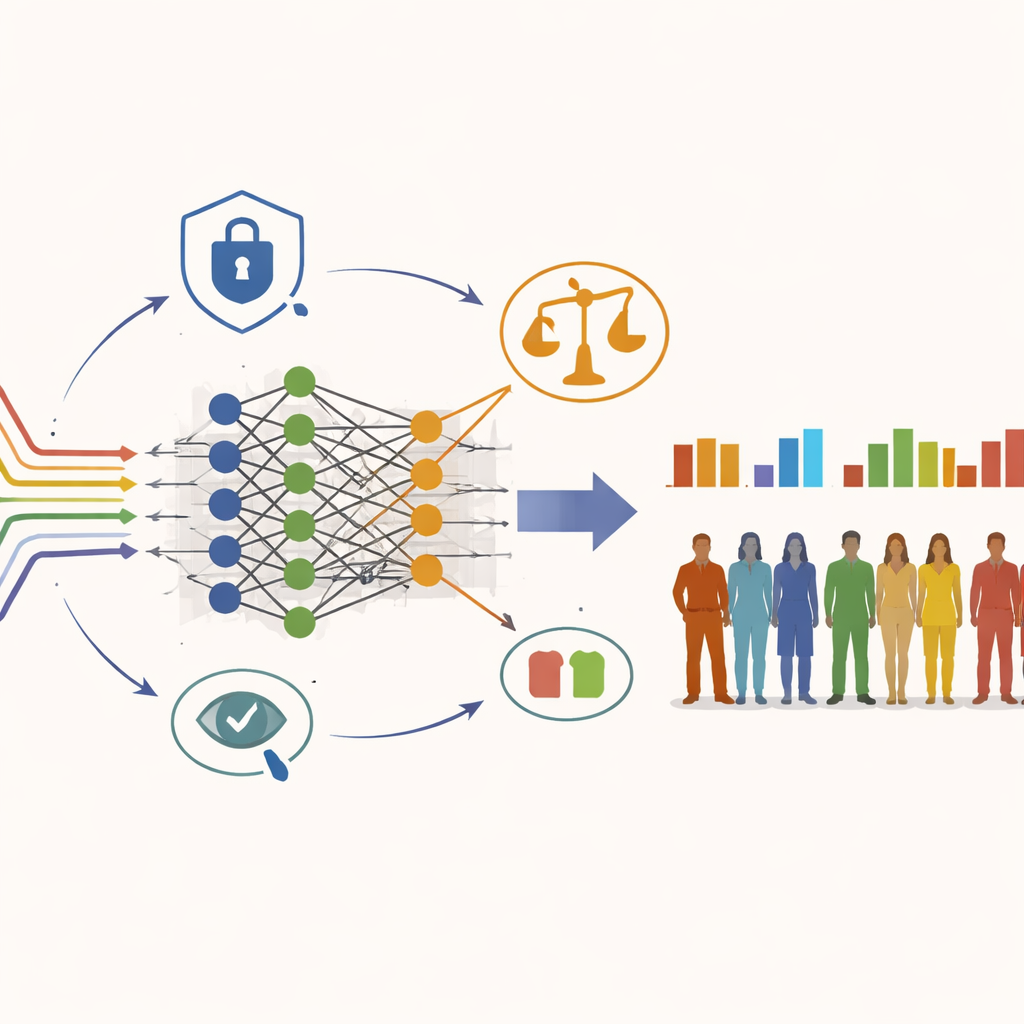

Building an AI model with guardrails

EduTransNet is a deep neural network tuned specifically for this task. It stacks several layers that gradually compress and refine the input data into a single transparency prediction. But unlike many powerful models that focus only on accuracy, this one is designed with guardrails. The training process explicitly penalizes patterns where one gender or ethnic group consistently receives higher or lower predicted scores than others. The authors also weave in a formal privacy‑versus‑usefulness trade‑off, using anonymization and noise‑adding techniques so that individual students cannot easily be reidentified while the system still learns meaningful patterns.
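The fairness guardrail can be pictured as an extra term added to the usual prediction error during training. The sketch below is one simple way to do that, not the authors' actual loss: it assumes the penalty is the gap between the highest and lowest group-average prediction, scaled by a hypothetical strength parameter `lam`. All function names here are illustrative.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def mean_squared_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average squared difference between true and predicted scores."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def group_gap(y_pred: Sequence[float], groups: Sequence[Hashable]) -> float:
    """Largest difference between any two groups' mean predicted score."""
    by_group = defaultdict(list)
    for pred, group in zip(y_pred, groups):
        by_group[group].append(pred)
    means = [sum(values) / len(values) for values in by_group.values()]
    return max(means) - min(means)


def fairness_penalized_loss(
    y_true: Sequence[float],
    y_pred: Sequence[float],
    groups: Sequence[Hashable],
    lam: float = 1.0,
) -> float:
    """Prediction error plus a penalty for unequal group averages.

    Assumption: the penalty is lam times the gap in mean predictions.
    Setting lam to 0 recovers plain mean squared error.
    """
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("inputs must have the same length")
    return mean_squared_error(y_true, y_pred) + lam * group_gap(y_pred, groups)


# Perfect predictions, but group "b" averages 20 points higher than group "a".
loss = fairness_penalized_loss(
    [50, 60, 70, 80], [50, 60, 70, 80], ["a", "a", "b", "b"], lam=0.5
)
print(loss)  # 10.0
```

Even with zero prediction error, the loss is nonzero because the two groups receive different average scores. A larger `lam` pushes the model harder toward equal group averages, at some cost in raw accuracy, which is the trade-off a designer would have to tune.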

Checking accuracy, fairness, and privacy

The team compared EduTransNet with three common predictive tools: linear regression, support vector regression, and random forests. Across repeated tests and on a held‑out set of students it had never seen, EduTransNet explained about 99–100 percent of the variation in transparency scores, while making much smaller errors than the other models. Statistical checks suggested this strong performance is not just overfitting to quirks of the data. At the same time, fairness audits using established toolkits showed that predicted scores were closely balanced across gender and ethnic groups, and that error rates did not systematically favor any one group. Separate analyses confirmed that privacy protections were strong enough to limit reidentification risk while still supporting accurate learning.
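The phrase "explained about 99–100 percent of the variation" refers to the R² statistic, and the fairness audit comes down to comparing errors group by group. The sketch below shows both calculations on made-up numbers. It does not use the toolkits from the study (the summary does not name them), and the choice of mean absolute error for the per-group check is an assumption.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Share of the variation in y_true that the predictions account for."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # leftover error
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)          # total variation
    if ss_tot == 0:
        raise ValueError("y_true has no variation, so R^2 is undefined")
    return 1.0 - ss_res / ss_tot


def mean_abs_error_by_group(
    y_true: Sequence[float],
    y_pred: Sequence[float],
    groups: Sequence[Hashable],
) -> Dict[Hashable, float]:
    """Mean absolute error computed separately for each group."""
    errors = defaultdict(list)
    for t, p, g in zip(y_true, y_pred, groups):
        errors[g].append(abs(t - p))
    return {g: sum(values) / len(values) for g, values in errors.items()}


# Made-up held-out scores, purely for illustration.
y_true = [60, 70, 80, 90]
y_pred = [62, 69, 80, 91]
groups = ["f", "m", "f", "m"]

print(round(r_squared(y_true, y_pred), 3))              # 0.988
print(mean_abs_error_by_group(y_true, y_pred, groups))  # {'f': 1.0, 'm': 1.0}
```

An R² near 1 means the predictions track the true scores closely, and similar error values across groups are what an audit hopes to see. Large differences between groups would be a signal to investigate further.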

What this means for real classrooms

Beyond the math, the study explores how such a system might be used in practice. In one scenario, EduTransNet helps identify students who may be at risk academically, but pairs each risk flag with a high‑ or low‑transparency score so teachers know how much to trust the alert and how to explain it. In another, it supports course and career recommendations while checking that suggestions are not quietly steering disadvantaged students toward narrower paths. At the policy level, aggregated transparency scores could highlight schools or programs where AI decisions feel opaque, guiding targeted reforms without exposing individuals.

Take‑home message for non‑experts

The central conclusion is that we do not have to choose between powerful AI and responsible AI in education. By designing models like EduTransNet that treat transparency and fairness as core design goals—rather than afterthoughts—developers can produce systems that are both highly accurate and easier to question, monitor, and improve. While the results so far come from three universities and need to be tested elsewhere, the framework offers a concrete path for schools, policymakers, and AI builders who want to harness smart tools without giving up on student rights, privacy, or equality of opportunity.

Citation: Alotaibi, R.M., Alnfiai, M.M., Alotaibi, N.N. et al. Ethical considerations in the integration of artificial intelligence into education: a novel deep neural network framework for predicting transparency scores. Sci Rep 16, 12123 (2026). https://doi.org/10.1038/s41598-026-41480-9

Keywords: AI in education, algorithmic transparency, ethical AI, student privacy, fairness in machine learning