Clear Sky Science

Human-centered design-based lightweight wearable IMU human pose estimation

Why Faster Body Tracking Matters

From physical therapy clinics to virtual reality headsets, many new technologies rely on understanding how our bodies move in real time. Today this often requires cameras, markers, or bulky computers that are hard to wear all day. This study explores how tiny motion sensors, similar to those in smartphones and smartwatches, can be combined with clever algorithms to estimate full-body pose almost instantly, using very little power. The goal is simple: make motion tracking accurate enough for serious use, but light and efficient enough to disappear into everyday wearables.

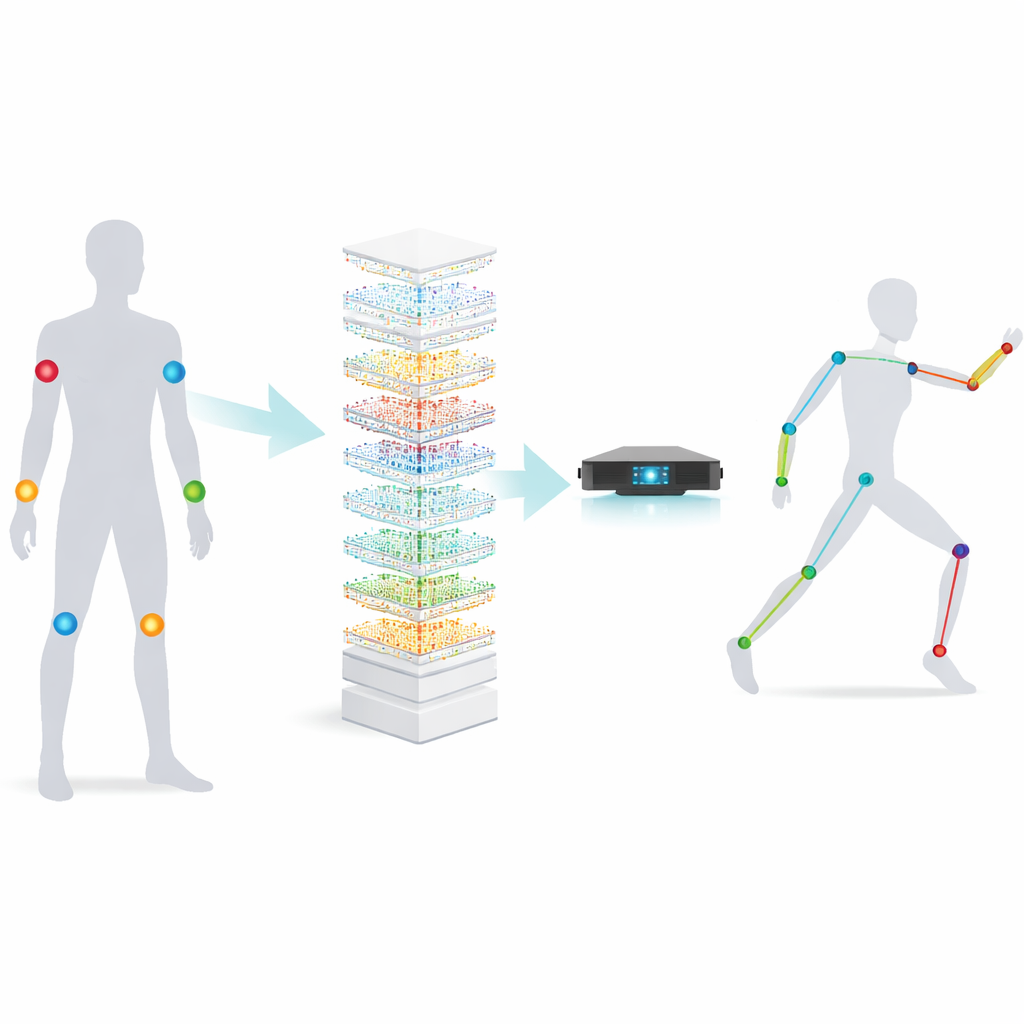

Small Sensors, Big Motions

The work centers on inertial measurement units, or IMUs: matchbox-sized devices that measure acceleration and rotation. Placed on a few key body locations, IMUs can sense how we move even when cameras cannot see us, such as in crowded rooms or outdoors at night. The challenge is that turning these raw sensor readings into a detailed 3D body pose is a complex puzzle: the system receives only a handful of signals, yet it must infer the positions of many joints, across many different people performing many different actions. Previous methods used large neural networks, such as deep recurrent networks and Transformers, which are accurate but heavy: they require lots of memory, energy, and time, making them ill-suited to small wearable devices.

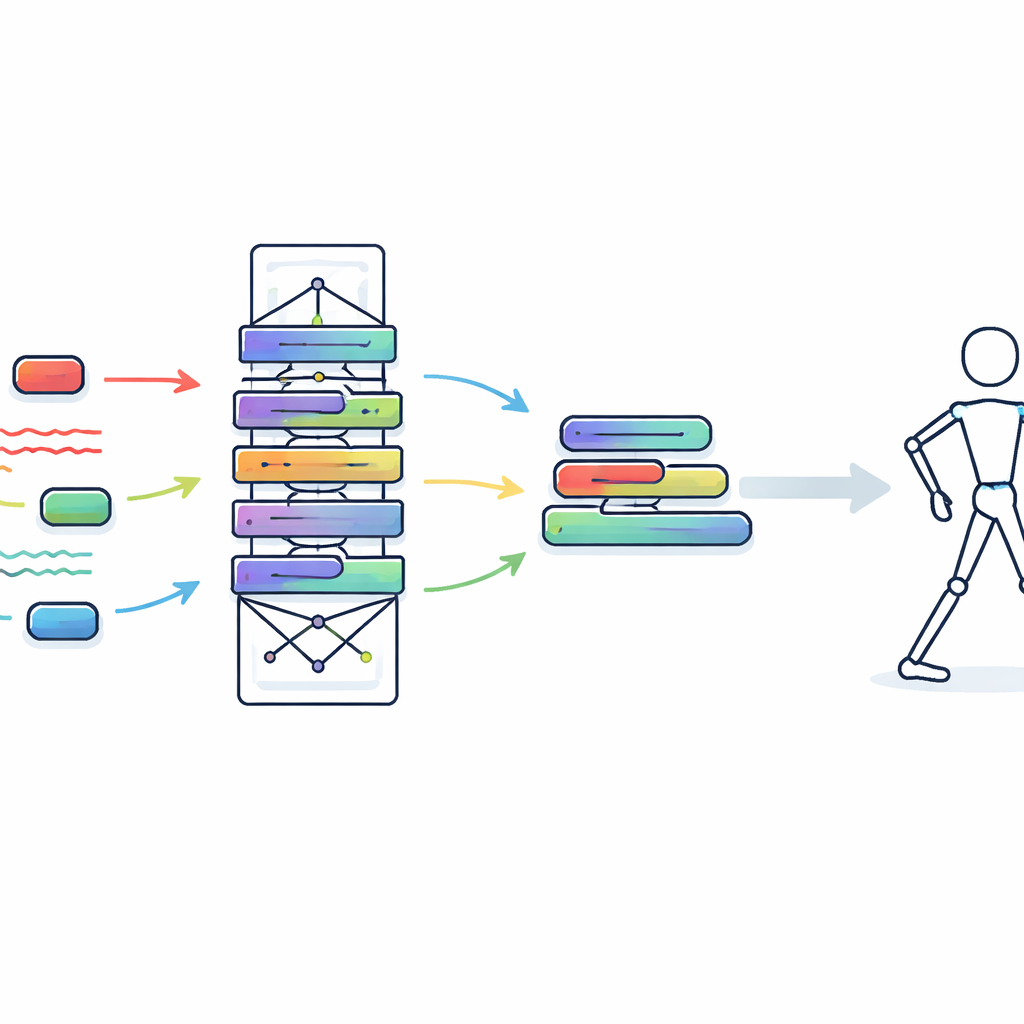

Teaching a Small Model to Think Like a Big One

The authors propose a two-step strategy inspired by how a student learns from a teacher. During training in the lab, they use a large, powerful Transformer model as the “teacher” to deeply analyze the sensor data over time and across body locations. In parallel, they design a smaller “student” model built from an operation called involution, which can flexibly adapt to local patterns in the data while using far fewer parameters than standard convolution. Through a process known as knowledge distillation, the student does not just match the final pose outputs; it is also nudged to mimic the teacher’s internal feature patterns. This way, the student gradually picks up high-level tricks for reading motion from sensors without needing the teacher’s size and complexity once deployed.
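To make the teacher-student idea concrete, here is a minimal sketch of a distillation loss in Python with NumPy. It is not the authors' training code: the three-term structure, the weights `alpha` and `beta`, the projection matrix `proj`, and all array sizes are assumptions chosen for illustration. It shows the two ingredients described above: the student is scored on its pose output, and it is also penalized when its internal features drift away from the teacher's.

```python
import numpy as np

def mse(a, b):
    """Mean squared error between two equally shaped arrays."""
    return float(np.mean((a - b) ** 2))

def distillation_loss(student_pose, teacher_pose, true_pose,
                      student_feat, teacher_feat, proj,
                      alpha=0.5, beta=0.1):
    """Weighted sum of three terms (alpha and beta are illustrative weights):
    task loss, output distillation, and feature distillation."""
    task = mse(student_pose, true_pose)           # student vs. ground truth
    output_kd = mse(student_pose, teacher_pose)   # student vs. teacher output
    # proj maps the narrower student features to the teacher's feature width
    feature_kd = mse(student_feat @ proj, teacher_feat)
    return task + alpha * output_kd + beta * feature_kd

# Hypothetical sizes: 8 frames, 24 joints with 3 coordinates each.
rng = np.random.default_rng(0)
frames, joints = 8, 24
true_pose = rng.normal(size=(frames, joints * 3))
teacher_pose = true_pose + 0.01 * rng.normal(size=true_pose.shape)
student_pose = true_pose + 0.10 * rng.normal(size=true_pose.shape)
teacher_feat = rng.normal(size=(frames, 64))   # wide teacher features
student_feat = rng.normal(size=(frames, 16))   # narrow student features
proj = 0.1 * rng.normal(size=(16, 64))         # learnable in a real setup

loss = distillation_loss(student_pose, teacher_pose, true_pose,
                         student_feat, teacher_feat, proj)
print(f"combined distillation loss: {loss:.4f}")
```

In a real training loop the projection would be learned together with the student, and the teacher's weights would stay frozen. The teacher is used only while training, so none of this cost reaches the deployed device.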

Turning a Training Network into a Tiny Runtime Engine

To make the student model truly wearable-friendly, the researchers go a step further with a procedure called structural re-parameterization. During training, the student block includes several branches, normalization steps, and adaptive kernels to maximize learning flexibility. Before deployment, all of these pieces are mathematically merged into a streamlined computation equivalent to just two simple one-dimensional convolutions. This folding process preserves the model’s behavior but eliminates extra layers and operations. Because standard convolution is heavily optimized on modern hardware, this transformation drastically cuts the time and energy needed to process each frame, without sacrificing what the network has learned.
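The folding idea can be checked numerically on a toy example. The sketch below, in Python with NumPy, is a generic illustration of structural re-parameterization and not the authors' exact block: it assumes a training-time block with a 3-tap convolution, a 1-tap convolution, and an identity shortcut, with normalization after each convolution, and it does not model the adaptive involution kernels. All function names and sizes are hypothetical.

```python
import numpy as np

def conv1d(x, w, b):
    """Same-padded 1D cross-correlation. x: (C_in, T), w: (C_out, C_in, K), b: (C_out,)."""
    c_out, _, k = w.shape
    pad, t = k // 2, x.shape[1]
    xp = np.pad(x, ((0, 0), (pad, pad)))
    y = np.zeros((c_out, t))
    for i in range(k):
        y += w[:, :, i] @ xp[:, i:i + t]   # contribution of kernel tap i
    return y + b[:, None]

def batchnorm(z, gamma, beta, mean, var, eps=1e-5):
    """Inference-time normalization with fixed per-channel statistics."""
    scale = gamma / np.sqrt(var + eps)
    return scale[:, None] * (z - mean[:, None]) + beta[:, None]

def fold_bn(w, b, gamma, beta, mean, var, eps=1e-5):
    """Absorb the normalization into the convolution's weights and bias."""
    scale = gamma / np.sqrt(var + eps)
    return w * scale[:, None, None], (b - mean) * scale + beta

def merge_branches(w3, b3, w1, b1, channels):
    """Sum a 3-tap branch, a 1-tap branch, and an identity into one 3-tap kernel."""
    w = w3.copy()
    w[:, :, 1] += w1[:, :, 0]        # the 1-tap kernel sits at the center tap
    w[:, :, 1] += np.eye(channels)   # identity shortcut as a centered delta kernel
    return w, b3 + b1

def random_bn(rng, c):
    """Random (gamma, beta, mean, var) with strictly positive variance."""
    return (rng.normal(size=c), rng.normal(size=c),
            rng.normal(size=c), rng.uniform(0.5, 2.0, size=c))

rng = np.random.default_rng(0)
C, T = 4, 32                         # hypothetical channel count and window length
x = rng.normal(size=(C, T))
w3, b3 = rng.normal(size=(C, C, 3)), rng.normal(size=C)
w1, b1 = rng.normal(size=(C, C, 1)), rng.normal(size=C)
bn3, bn1 = random_bn(rng, C), random_bn(rng, C)

# Training-time form: three branches evaluated separately, then summed.
train_out = (batchnorm(conv1d(x, w3, b3), *bn3)
             + batchnorm(conv1d(x, w1, b1), *bn1) + x)

# Deployment form: one fused convolution.
w3f, b3f = fold_bn(w3, b3, *bn3)
w1f, b1f = fold_bn(w1, b1, *bn1)
w, b = merge_branches(w3f, b3f, w1f, b1f, C)
deploy_out = conv1d(x, w, b)

max_diff = float(np.max(np.abs(train_out - deploy_out)))
print(f"largest output difference: {max_diff:.2e}")
```

The two forms agree up to floating-point rounding, because convolution and inference-time normalization are both linear operations and sums of linear operations can be collapsed into one. That is why the merged network can drop the extra branches without changing its predictions.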

How Well Does It Work in Practice?

The team evaluates their approach on two public motion datasets, DIP-IMU and IMUPoser, which contain millions of frames of people performing everyday and athletic activities, captured simultaneously with IMUs and high-precision motion-capture systems. Their lightweight model matches or nearly matches the best existing methods in average joint error: 81 millimeters on DIP-IMU and 94 millimeters on IMUPoser, within about 1% of the strongest baselines. At the same time, it runs one to two orders of magnitude faster: each frame is processed in roughly 0.011–0.012 milliseconds, compared with several tenths of a millisecond to nearly a full millisecond for competing systems. This speed translates to roughly 83,000 to 91,000 frames per second on a GPU, far beyond what any wearable device actually needs, leaving plenty of room for battery savings and other on-device tasks.
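The speed figures are easy to sanity-check with a few lines of Python. The per-frame latencies below come from the text. The 60 Hz sensor rate and the two baseline latencies (0.3 ms and 1.0 ms) are assumptions, used only to bracket the phrases "several tenths of a millisecond" and "nearly a full millisecond"; they are not measurements from the paper.

```python
def frames_per_second(latency_ms):
    """Throughput implied by a per-frame latency given in milliseconds."""
    return 1000.0 / latency_ms

def speedup(baseline_ms, ours_ms):
    """How many times faster a frame is processed than with the baseline."""
    return baseline_ms / ours_ms

# Latencies reported in the text for the lightweight model.
for latency_ms in (0.011, 0.012):
    print(f"{latency_ms} ms per frame -> {frames_per_second(latency_ms):,.0f} frames/s")

# Hypothetical baseline latencies, placeholders for the competing systems.
for baseline_ms in (0.3, 1.0):
    print(f"assumed baseline {baseline_ms} ms -> {speedup(baseline_ms, 0.012):.0f}x faster")

# Share of each second spent computing at an assumed 60 Hz sensor rate.
SENSOR_RATE_HZ = 60
busy_fraction = SENSOR_RATE_HZ * 0.012 / 1000.0
print(f"busy time at {SENSOR_RATE_HZ} Hz: {busy_fraction:.3%}")
```

A latency of 0.011 milliseconds corresponds to about 90,900 frames per second, and 0.012 milliseconds to about 83,300. At the assumed 60 Hz rate, the processor would be busy for well under a tenth of a percent of each second, although these timings were measured on a GPU and a wearable chip would be slower.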

What This Means for Everyday Wearables

For non-specialists, the key takeaway is that the authors have found a way to separate “thinking hard” from “acting fast.” A large model can think hard during training to understand human motion in rich detail, while a much smaller model—carefully taught and then simplified—handles the real-time work on your wristband, headset, or rehabilitation brace. The result is body tracking that is almost as accurate as heavyweight lab systems but lean enough for low-power, always-on devices. This paves the way for wearables that can give timely feedback during exercise, warn about unsafe movements on the job, or make virtual worlds respond more naturally to our bodies, all without bulky hardware or rapid battery drain.

Citation: Wang, L., Liu, J., Xue, J. et al. Human-centered design-based lightweight wearable IMU human pose estimation. Sci Rep 16, 11420 (2026). https://doi.org/10.1038/s41598-026-41004-5

Keywords: wearable sensors, human pose estimation, inertial measurement units, lightweight neural networks, real-time motion tracking