Clear Sky Science

Quantifying uncertainty in protein representations across models and tasks

Why reliability in protein AI matters

Artificial intelligence has become a powerful microscope for the invisible world of proteins. Modern "protein language models" can guess what a protein looks like in 3D and how it might behave, just from its sequence of building blocks. These models are already helping design new drugs and understand disease mutations. But there is a hidden problem: they rarely tell us how much we should trust the internal representations they create. This paper tackles that gap by asking a simple question with big consequences: when a model turns a protein into a cloud of numbers, how can we tell whether that cloud actually reflects real biology or is just noise?

From sentences to proteins

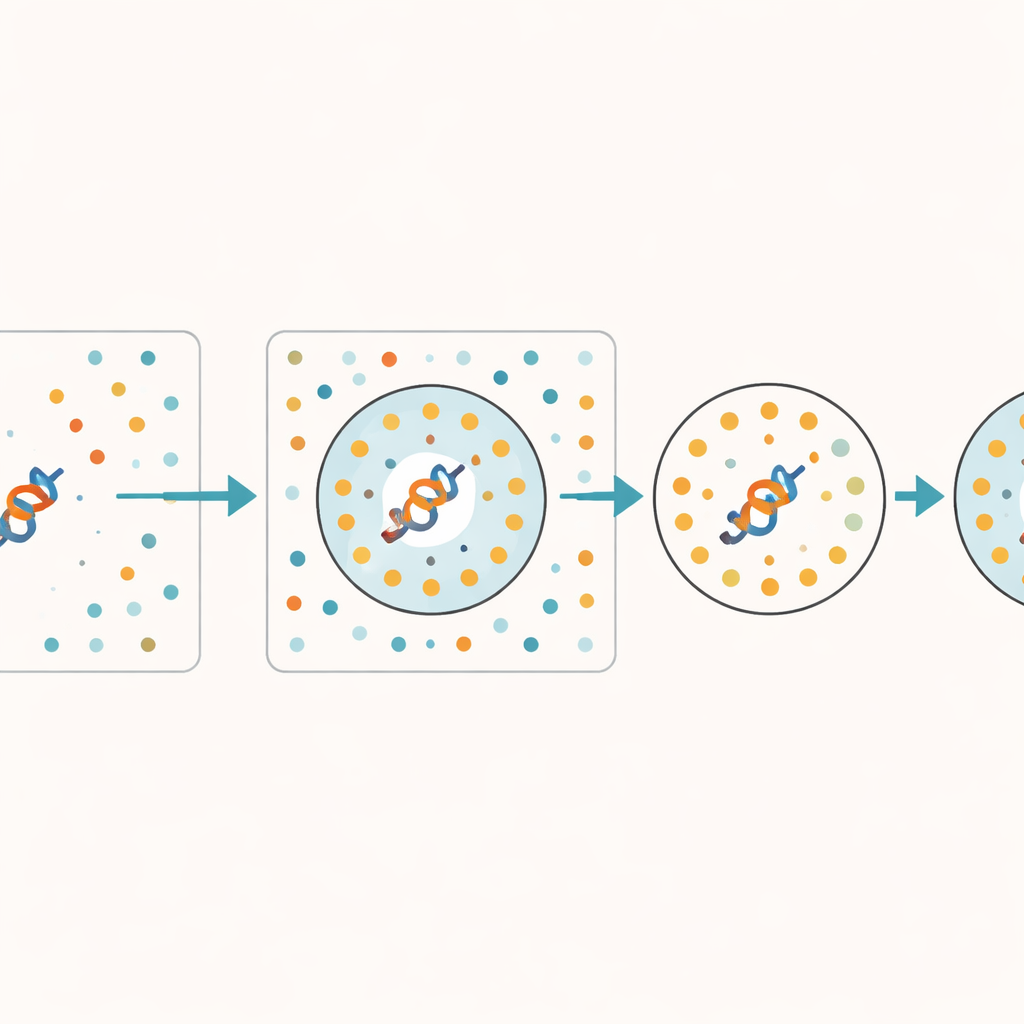

Language models were first developed to handle human text, learning how words relate to one another and predicting what comes next in a sentence. The same ideas now power models that read protein and DNA sequences as if they were long words. For each protein, the model produces an "embedding"—a point in a high-dimensional space meant to summarize what the model knows about that protein. These embeddings are fed into many downstream tasks, such as predicting structure, function, and the impact of mutations. Yet, unlike familiar prediction scores or confidence measures, embeddings are usually taken at face value: if the model outputs a vector, users tend to trust it, even in regions of protein space that the model has barely seen during training.

Spotting when the model is guessing

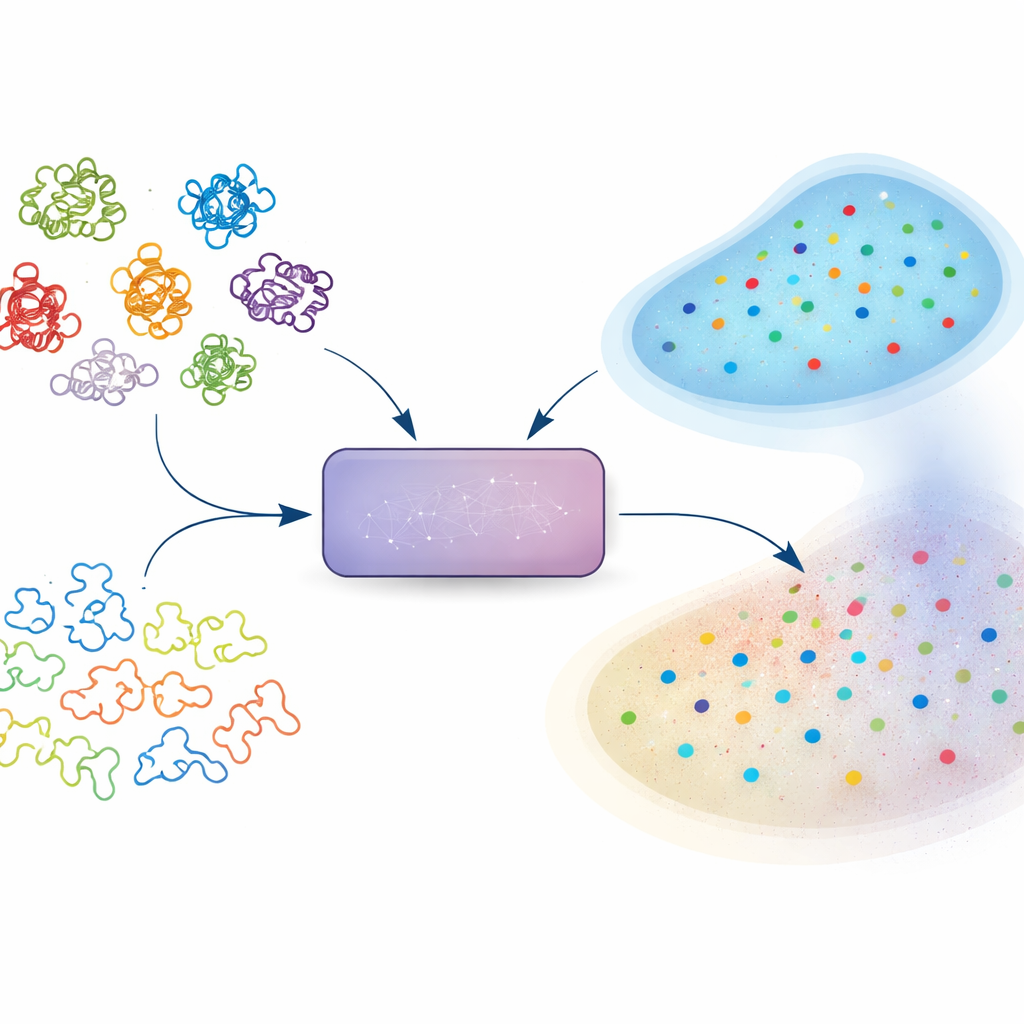

The authors propose a practical way to estimate how trustworthy an embedding is, without modifying the underlying model. Their key idea is to give the model a set of deliberately scrambled protein sequences that keep the same basic composition but lose all meaningful biological patterns. These synthetic sequences act as a "junkyard"—a reference for what the model produces when there is no real signal to learn. For any real protein, the method checks how many of its closest neighbors in the model’s internal space belong to this junkyard. If many nearby points come from scrambled sequences, the protein’s representation is probably under-learned or ambiguous. The authors call this fraction of junkyard neighbors the Random Neighbor Score (RNS).
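The scoring idea described above can be sketched in a few lines of Python. This is a toy illustration, not the authors' implementation: the function names `shuffle_sequence` and `random_neighbor_score`, the Euclidean distance, the neighborhood size `k`, and the two-dimensional example points are all assumptions made here. In practice the embeddings would come from a protein language model and have hundreds or thousands of dimensions.

```python
import math
import random


def shuffle_sequence(seq, rng):
    """Return a scrambled copy of seq with the same amino-acid composition."""
    residues = list(seq)
    rng.shuffle(residues)
    return "".join(residues)


def random_neighbor_score(query, real_embs, junk_embs, k=5):
    """Fraction of the k nearest neighbors of `query` that are junkyard points.

    `query` is the embedding of the protein being scored and is assumed
    not to appear in `real_embs`. Distance is Euclidean (an assumption).
    """
    labeled = [(math.dist(query, e), False) for e in real_embs]
    labeled += [(math.dist(query, e), True) for e in junk_embs]
    labeled.sort(key=lambda pair: pair[0])
    nearest = labeled[:k]
    return sum(is_junk for _, is_junk in nearest) / len(nearest)


# Scrambling keeps composition but destroys order.
rng = random.Random(0)
scrambled = shuffle_sequence("MKTAYIAKQR", rng)

# Made-up 2D "embeddings": real proteins cluster near the origin,
# scrambled sequences cluster far away.
real = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1)]
junk = [(5.0, 5.0), (5.1, 5.0), (5.0, 5.1), (5.1, 5.1)]

print(random_neighbor_score((0.05, 0.05), real, junk, k=3))  # 0.0
print(random_neighbor_score((5.05, 5.05), real, junk, k=3))  # 1.0
```

A score of 0 means every nearby point is a real protein, while a score of 1 means the protein is surrounded entirely by scrambled sequences.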

Tying uncertainty to real-world performance

To see whether RNS actually reflects something biologically important, the team analyzed large collections of protein structures and sequences using several state-of-the-art models, including ESM-2 and ProtT5. They found that proteins whose structures were predicted accurately tended to have low RNS—meaning their embeddings were far from the junkyard. In contrast, proteins with poor structural predictions lived in regions where real and scrambled sequences overlapped. This pattern held across different models and tasks. When they looked at more applied problems, such as predicting which amino acid residues contact each other in 3D or assigning secondary structure, they observed a clear decline in accuracy as RNS increased. In other words, the less certain the embedding (higher RNS), the less reliable the downstream prediction.

Blind spots in protein space

RNS also revealed systematic blind spots in how models represent different parts of the protein universe. Intrinsically disordered regions—flexible stretches that lack a stable structure—had consistently higher RNS than well-structured domains, showing that models struggle more with these slippery sequences. Even within the well-studied human proteome, a substantial fraction of proteins had nonzero RNS, indicating they are not well captured by popular models. Surprisingly, bigger models were not always better: a large, structure-focused model could be more uncertain about many human proteins than a smaller, more general one. For newly discovered metagenomic proteins and even computer-designed "hallucinated" proteins that were built to look realistic, low RNS suggested that models can confidently generalize beyond their training data when patterns are coherent.

Better filters for better biological insight

The authors next tested how RNS-based screening affects a clinically relevant task: predicting whether a single-letter change in a human protein is likely to disrupt its function or cause disease. When they restricted analysis to proteins with low RNS—where embeddings appeared reliable—model performance improved markedly, often reaching strong discrimination between harmful and neutral variants. For proteins with high RNS, predictions dropped toward coin-flip levels. This supports the view that unreliable embeddings quietly cap the best possible accuracy of any downstream tool built on them, regardless of clever training tricks.
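As a rough illustration of this kind of screening, the sketch below splits proteins into low-RNS and high-RNS groups and compares prediction accuracy in each. The cutoff of 0.5, the protein identifiers, the labels, and the helper names `split_by_rns` and `accuracy` are all invented for the example and are not taken from the paper. Real variant data would also carry many variants per protein rather than one label each.

```python
def split_by_rns(rns_by_protein, threshold=0.5):
    """Partition protein IDs into (reliable, unreliable) lists by RNS."""
    reliable, unreliable = [], []
    for protein_id, rns in rns_by_protein.items():
        (reliable if rns <= threshold else unreliable).append(protein_id)
    return reliable, unreliable


def accuracy(protein_ids, predicted, truth):
    """Share of the given proteins whose predicted label matches the truth."""
    if not protein_ids:
        return float("nan")
    hits = sum(predicted[p] == truth[p] for p in protein_ids)
    return hits / len(protein_ids)


# Made-up toy values, one label per protein for brevity.
rns = {"protA": 0.0, "protB": 0.2, "protC": 0.8, "protD": 1.0}
predicted = {"protA": "harmful", "protB": "neutral",
             "protC": "harmful", "protD": "neutral"}
truth = {"protA": "harmful", "protB": "neutral",
         "protC": "neutral", "protD": "neutral"}

reliable, unreliable = split_by_rns(rns, threshold=0.5)
print(accuracy(reliable, predicted, truth))    # 1.0
print(accuracy(unreliable, predicted, truth))  # 0.5
```

The same partition could be used to drop high-RNS proteins before analysis or to down-weight them, depending on how much data a study can afford to set aside.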

What this means for using AI in biology

For non-specialists, the takeaway is that not all AI-derived protein representations are equally trustworthy, and that this trustworthiness can now be quantified. The Random Neighbor Score acts as a simple, model-agnostic health check on embeddings: low scores indicate that a protein sits among other biologically meaningful sequences, while high scores suggest it drifts toward a junkyard of random look-alikes. By filtering or weighting proteins based on RNS before making structural predictions, annotating functions, or prioritizing disease variants, researchers can focus on regions where the model truly "understands" the data. Just as no scientist would rely on a microscope without first checking its focus, this work argues that every protein language model should come with a built-in way to gauge the sharpness of its internal view of biology.

Citation: Prabakaran, R., Bromberg, Y. Quantifying uncertainty in protein representations across models and tasks. Nat Methods 23, 796–804 (2026). https://doi.org/10.1038/s41592-026-03028-7

Keywords: protein language models, embedding reliability, representation uncertainty, variant effect prediction, intrinsically disordered proteins