Clear Sky Science · en

Biomimetic hairy affective-touch sensory AI interface

Why teaching touch to machines matters

We often judge how someone feels from the way they touch us: a quick pat, a tense shove, a slow reassuring stroke. Today’s robots and smart devices can see and hear, but they largely miss this rich emotional channel. This paper presents a new kind of soft, hairy electronic skin that lets machines sense not just that they are being touched, but how that touch feels emotionally, opening the door to gentler, more natural interactions between people and AI.

A new kind of artificial hairy skin

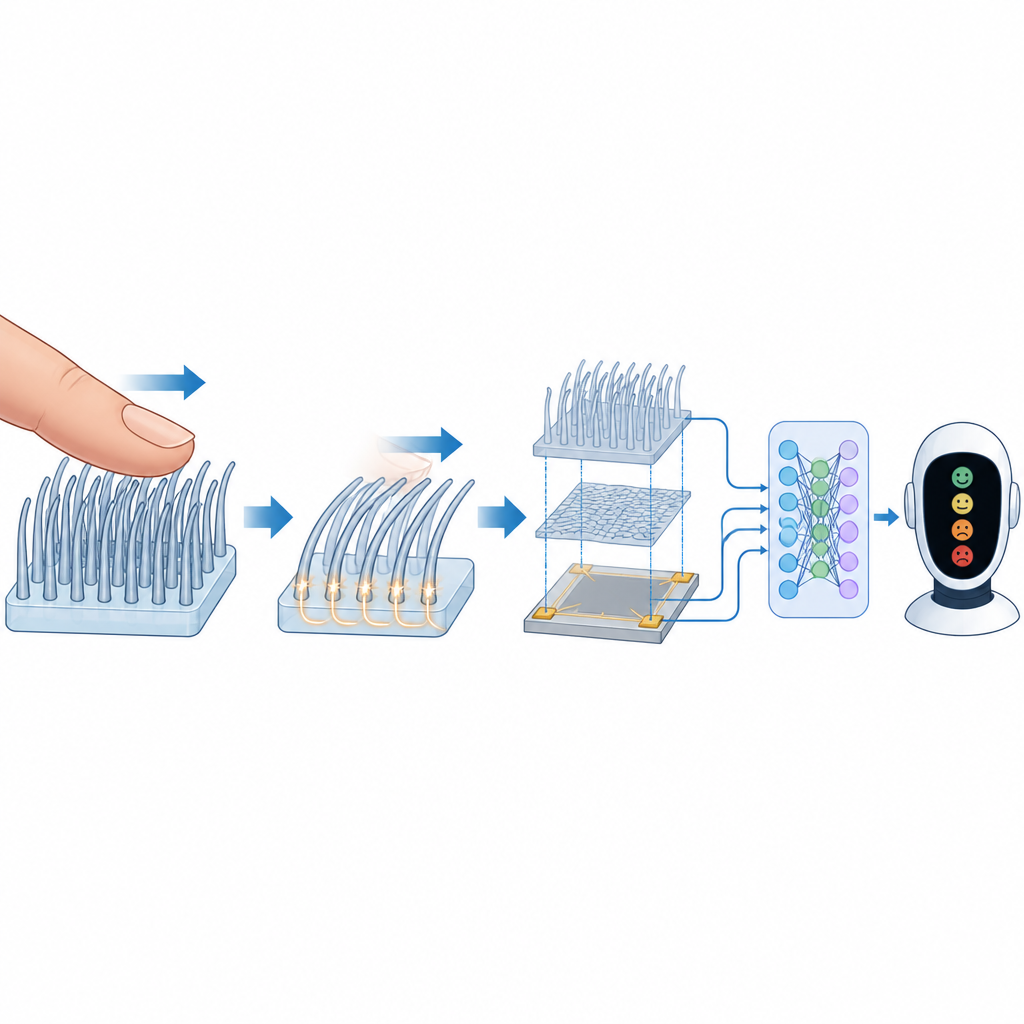

The researchers created a flexible sensor, called BioAI2, that mimics the fine hairs on mammal skin. In animals, tiny nerve endings wrapped around hair roots detect light strokes and send signals linked to comfort and social bonding. BioAI2 copies this idea with a bed of soft silicone hairs sitting on top of a thin, highly uniform conductive mesh. When a finger brushes across the hairs, they bend and bounce back, creating brief electrical pulses without any batteries or external power. These pulses carry information about where the touch happens, how hard it is, and how fast it moves, much like the signals from our own touch-sensitive nerve fibers.

From gentle strokes to brain-like signals

Under the surface, the device relies on a simple physical effect: when two different materials touch and separate, tiny charges move between them. Human skin and the silicone hairs trade charge as the finger slides, and the underlying mesh collects these changes as a train of sharp spikes. By carefully designing hairs of two different heights and spacings, the team made the sensor especially sensitive to light forces while still handling stronger presses. They found that the rate of electrical spikes rises with stroking speed up to a sweet spot, then falls again, matching how certain human touch nerves respond best to caress-like speeds. This makes the output not just a technical signal, but a close stand-in for the way our bodies encode pleasant touch.
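To make the idea of a "spike rate" concrete, here is a minimal sketch of how one might count pulses per second in a sampled voltage trace. It is an illustration only, not the authors' processing pipeline: the function name `spike_rate`, the fixed threshold, and the synthetic trace are all assumptions made for this example.

```python
def spike_rate(samples, sample_rate_hz, threshold):
    """Return upward threshold crossings per second in a sampled trace.

    Hypothetical helper: a fixed threshold is an assumption for this
    sketch; a real sensor would likely need noise-aware detection.
    """
    if len(samples) < 2:
        return 0.0
    crossings = 0
    # A spike is counted each time the trace rises through the threshold.
    for prev, curr in zip(samples, samples[1:]):
        if prev < threshold <= curr:
            crossings += 1
    duration_s = len(samples) / sample_rate_hz
    return crossings / duration_s


# Synthetic trace: 1 s sampled at 1 kHz, with a one-sample spike every 100 ms.
trace = [0.0] * 1000
for i in range(50, 1000, 100):
    trace[i] = 1.0

rate = spike_rate(trace, sample_rate_hz=1000, threshold=0.5)
print(rate)  # spikes per second in the synthetic trace
```

Repeating such a measurement at several stroking speeds would give the kind of speed-versus-rate curve described above.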

Seeing force, location, and motion all at once

Unlike many earlier touch sensors that measure only pressure at fixed points, BioAI2 reads out several aspects of touch from a single thin sheet. Four electrodes at the corners collect pulses whose strengths and timing vary with where the finger lands and how it moves. The researchers developed a mathematical mapping method based on smooth "isoline" curves so that even touches near the edges can be located very accurately over a large area. The spiky hair design also makes each pulse extremely short in time, allowing the system to separate overlapping touches from multiple fingers and reconstruct complex paths, such as letters or shapes drawn on the surface.
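As a rough illustration of how four corner signals can encode position, the sketch below estimates a touch location as an amplitude-weighted average of the corner coordinates. This is a deliberately simplified stand-in, not the isoline mapping the researchers developed. It assumes that a corner's pulse is stronger when the touch is closer to it, and the function name `estimate_position` and the corner labels are hypothetical.

```python
def estimate_position(amplitudes, width=1.0, height=1.0):
    """Estimate (x, y) from pulse amplitudes at four corner electrodes.

    amplitudes: dict with keys 'bl', 'br', 'tl', 'tr' (bottom-left, etc.).
    Assumption for this sketch: a larger amplitude means a closer touch.
    """
    corners = {
        "bl": (0.0, 0.0),
        "br": (width, 0.0),
        "tl": (0.0, height),
        "tr": (width, height),
    }
    total = sum(abs(amplitudes[k]) for k in corners)
    if total == 0:
        raise ValueError("no signal on any electrode")
    # Weighted centroid: each corner pulls the estimate toward itself.
    x = sum(abs(amplitudes[k]) * corners[k][0] for k in corners) / total
    y = sum(abs(amplitudes[k]) * corners[k][1] for k in corners) / total
    return x, y


center = estimate_position({"bl": 1.0, "br": 1.0, "tl": 1.0, "tr": 1.0})
toward_br = estimate_position({"bl": 1.0, "br": 3.0, "tl": 1.0, "tr": 1.0})
print(center, toward_br)
```

A weighted centroid can only reach an edge when the electrodes on the opposite side read exactly zero, so it compresses estimates toward the center. Accuracy near the edges is the problem the article says the isoline-based mapping addresses.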

Teaching machines to read feelings from touch

To link these patterns to human emotions, volunteers watched film clips designed to trigger positive, neutral, or negative moods, then performed everyday gestures like strokes, knocks, and slaps on the hairy surface. The device captured thousands of examples, and the team converted the raw signals into colorful time-frequency images, adding indicators of stroke speed and force. A deep learning system learned to recognize both the gesture type and the likely emotional tone behind it. Across different people, it correctly identified the gesture nearly all the time and labeled the emotional state with more than 80 percent accuracy, showing that emotional cues in touch can be decoded from this artificial skin.
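A time-frequency image is a grid showing how much energy a signal carries at each frequency over successive short time windows. The sketch below builds one with a plain short-time Fourier transform in standard-library Python. It illustrates the general technique only: the article does not specify which transform, window, or frame sizes the team used, so `spectrogram`, `frame_len`, and `hop` are assumptions, and the extra speed and force indicators are not modeled.

```python
import cmath
import math


def spectrogram(samples, frame_len=32, hop=16):
    """Return a list of frames, each a list of magnitudes per frequency bin.

    Hypothetical helper using a naive DFT on Hann-windowed frames;
    the parameter values are illustrative, not taken from the paper.
    """
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
        for n in range(frame_len)
    ]
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        chunk = [samples[start + n] * window[n] for n in range(frame_len)]
        mags = []
        # Only bins 0..frame_len/2 are needed for a real-valued signal.
        for k in range(frame_len // 2 + 1):
            acc = sum(
                chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                for n in range(frame_len)
            )
            mags.append(abs(acc))
        frames.append(mags)
    return frames


# Synthetic tone with exactly 8 cycles per 32-sample frame.
signal = [math.sin(2 * math.pi * 8 * n / 32) for n in range(256)]
image = spectrogram(signal)
peak_bin = max(range(len(image[0])), key=lambda k: image[0][k])
print(len(image), len(image[0]), peak_bin)
```

Each row of `image` is one time slice and each column one frequency bin. Mapping the magnitudes to colors gives the kind of picture an image classifier can take as input.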

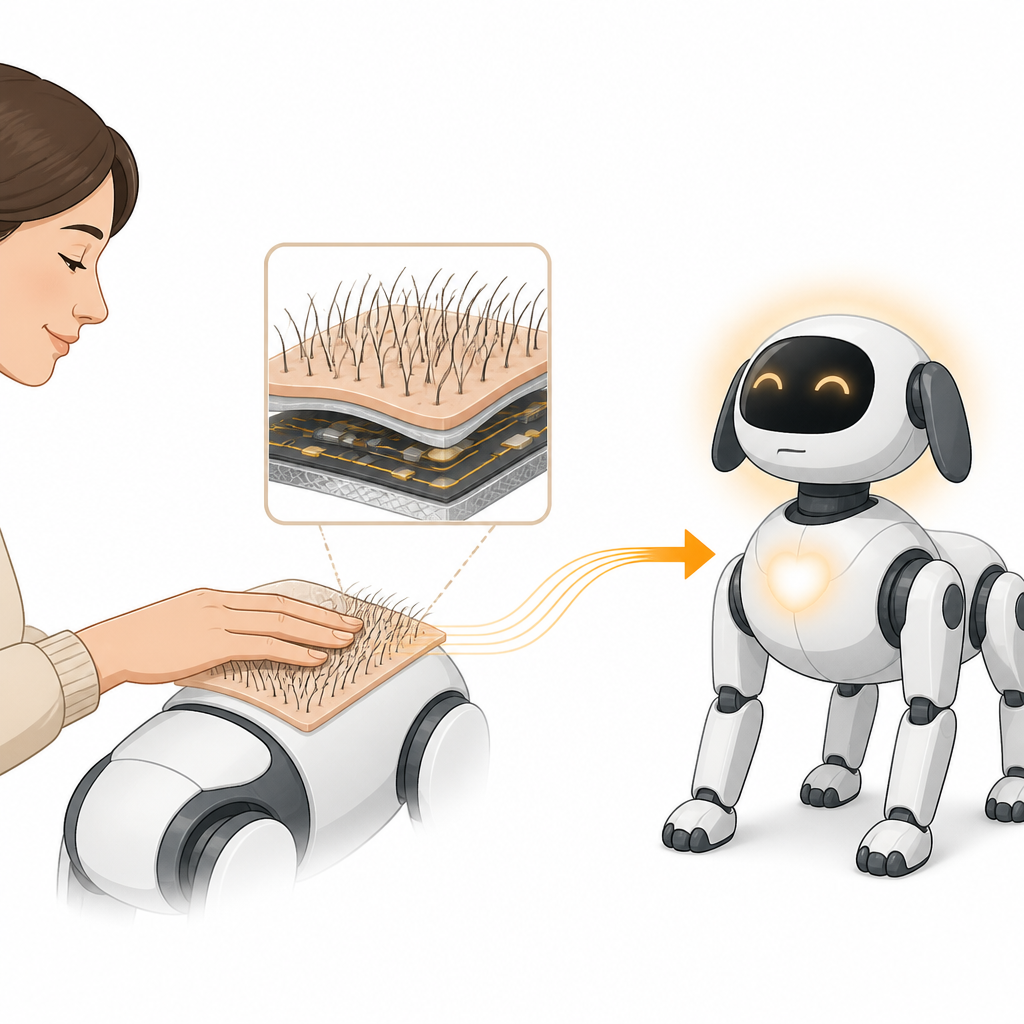

Closing the emotional loop with robots

Finally, the researchers combined the hairy skin with a robot dog and a large language model similar to modern chatbots. The skin sensed how the owner stroked the robot while the language model received extra context such as the situation and the relationship between human and robot. Together, they chose appropriate expressive actions, like jumping, resting, or nuzzling, to match the detected mood. This creates a full loop: a person expresses emotion through touch, the machine interprets both the touch and the context, and then responds in a way that feels emotionally fitting.

What this means for future human-machine relationships

This work shows that a thin, soft, hair-covered electronic skin can turn subtle patterns of touch into signals that machines can use to sense our feelings. By blending this sensor with modern AI, robots and devices can move beyond rigid button presses toward interactions that resemble the comfort of petting an animal or holding a hand. While the system still needs larger surfaces, more data, and other senses like temperature to fully match human touch, it points toward a future where technology can respond to our emotional state through touch, making digital companions, assistive robots, and therapeutic tools feel more human-aware and supportive.

Citation: Hong, J., Xiao, Y., Chen, Y. et al. Biomimetic hairy affective-touch sensory AI interface. Nat Commun 17, 4146 (2026). https://doi.org/10.1038/s41467-026-70334-1

Keywords: affective touch, electronic skin, human-robot interaction, tactile sensing, emotion recognition