Clear Sky Science

Large-array sub-millimeter precision coherent flash three-dimensional imaging

Seeing Depth with Incredible Detail

Imagine a camera that can not only see the world in three dimensions, but measure distances so precisely it can detect changes smaller than the thickness of a credit card from tens of meters away. This is what the researchers behind this work have built: a new kind of laser-based 3D camera that combines long range, very fine depth accuracy, and room to scale up to many more pixels. Such a tool could help monitor tiny shifts in bridges or buildings, preserve delicate artworks in digital form, and make virtual reality scenes far more lifelike.

Why Measuring Distance Is So Hard

Modern cars, robots, and mapping systems increasingly rely on LiDAR, a method that shines laser light onto a scene and times how long it takes to bounce back, building a 3D picture. Many current systems steer a narrow laser beam with moving mirrors, which limits speed and reliability. Solid-state approaches without moving parts are emerging, but they still face trade-offs: designs that steer beams electronically can be hard to scale up and often take a long time to scan, while highly sensitive single-photon detectors struggle to form large, low-noise arrays with fine depth accuracy. Conventional camera chips known as CCDs already offer huge numbers of pixels, but when used in 3D systems they typically rely on crude timing tricks that only resolve distances to within a few centimeters.
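
To put the timing challenge in numbers, the textbook time-of-flight relation (a general physics fact, not something specific to this work) links distance $d$ to the round-trip travel time $t$ through the speed of light $c$:

$$
d = \frac{c\,t}{2}
\qquad\Longrightarrow\qquad
\Delta t = \frac{2\,\Delta d}{c}
$$

With $c \approx 3 \times 10^{8}$ m/s, resolving $\Delta d = 1$ cm requires timing the echo to about 67 picoseconds, and resolving 1 mm requires about 6.7 picoseconds. This is why direct timing with ordinary camera chips tends to stall at centimeter-level depth accuracy.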

A New Way to Use Light and Radio Together

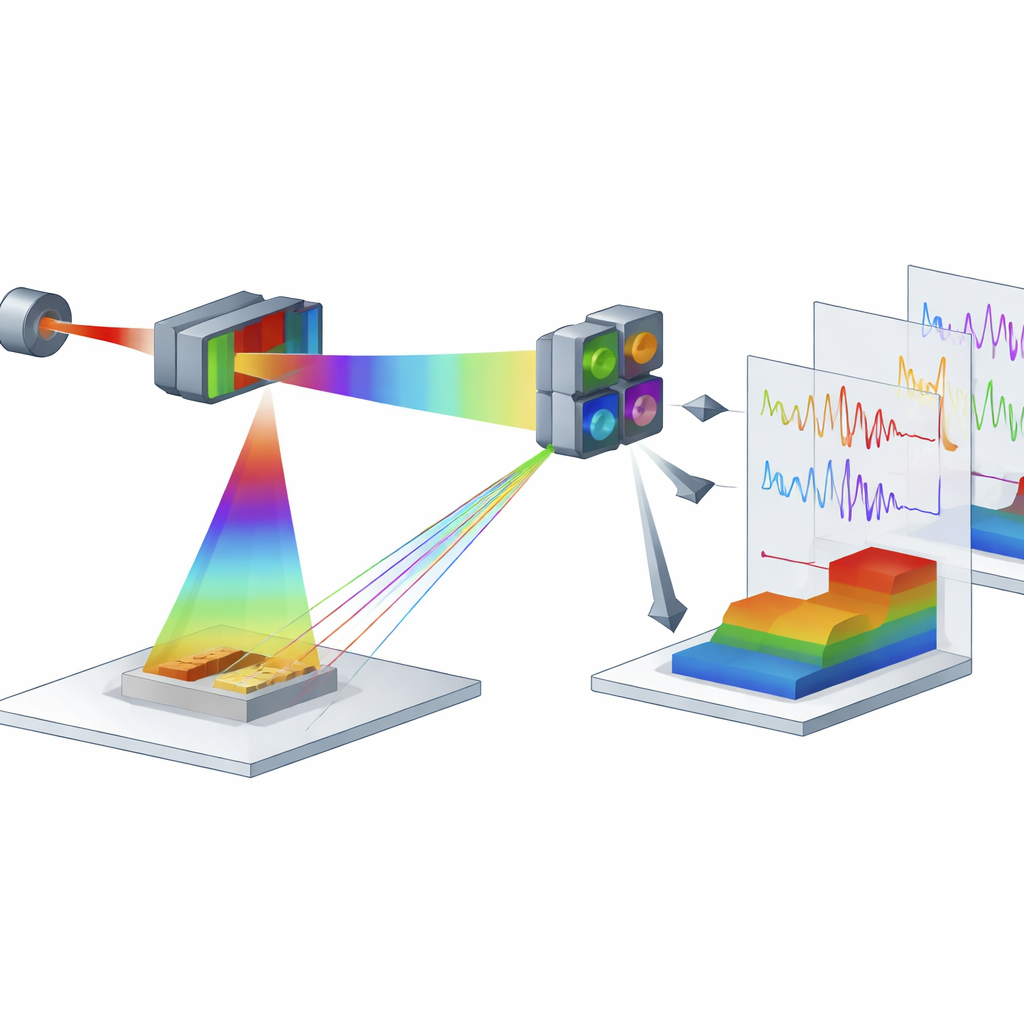

The authors present a different strategy that marries the maturity of CCD technology with a technique known as coherent detection, widely used in high-speed fiber-optic communications. Instead of firing short laser pulses, they continuously shine a laser whose brightness is rhythmically driven by a radio-frequency signal that steps through many closely spaced frequencies over time. Part of this modulated light floods the scene as a “probe,” while another part is kept as a “local reference.” At the receiver, an optical assembly combines the faint light returning from each point in the scene with the stronger reference light and feeds the result into four synchronized CCD cameras that act together as a single “coherent image sensor.” Within every pixel, the mixing of probe and reference encodes distance as a subtle pattern in the recorded signal.
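
One way to picture how the mixing encodes distance is the standard stepped-frequency model below. It is a simplified sketch and may differ from the authors' exact formulation; the symbols $f_k$, $\Delta f$, $\tau$, $I_0$, $A$, and $\varphi_0$ are introduced here purely for illustration. Suppose the radio-frequency drive takes the values $f_k$ at step $k$, and a point at range $R$ delays the probe light by the round-trip time $\tau$:

$$
f_k = f_0 + k\,\Delta f, \qquad \tau = \frac{2R}{c}
$$

$$
I_k \approx I_0 + A\cos\!\left(2\pi f_k \tau + \varphi_0\right)
$$

Here $I_k$ is the brightness a pixel records at step $k$, $I_0$ is the large background set mostly by the reference light, and $A$ is the small amplitude contributed by the returning probe. Under this model the phase advances by $2\pi\,\Delta f\,\tau$ from one step to the next, so a more distant point (larger $R$) produces a faster oscillation across the sequence of frames.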

Turning Flickers into a 3D Picture

During one full sweep of radio frequencies, each CCD pixel records a sequence of brightness values across thousands of frames. On their own, these raw images show almost nothing—the returning light is far weaker than the reference. But when the researchers apply a tailored processing algorithm, they tease out a tiny oscillating component in each pixel’s signal. The frequency of this oscillation turns out to be directly tied to how far that point in the scene is from the camera. By running a frequency analysis, the system converts each pixel’s time trace into an accurate distance measurement, stacking these measurements into a detailed 3D map. In tests with a staircase-shaped target 30.5 meters away, the camera clearly resolved steps only 5 millimeters high and reconstructed the full surface in three dimensions using all 320 × 256 pixels.
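
The idea of turning a pixel's time trace into a distance can be illustrated with a small simulation. The sketch below is not the authors' processing algorithm: it assumes an idealized, noise-free stepped-frequency model, and the sweep parameters (`f_start_hz`, `f_step_hz`, `n_steps`), amplitudes, and function names are hypothetical values chosen for illustration. It simulates one pixel's brightness across a sweep, then uses a zero-padded FFT to find the dominant oscillation and convert it to a range.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def simulate_pixel_trace(distance_m, f_start_hz, f_step_hz, n_steps,
                         signal_amp=0.01, ref_level=1.0):
    """Idealized brightness of one pixel over a stepped RF sweep.

    Assumption: the mixed signal is a strong constant background plus a
    weak cosine whose phase is 2*pi*f_k*tau, with tau the round-trip delay.
    """
    k = np.arange(n_steps)
    freqs = f_start_hz + k * f_step_hz      # RF frequency at each step
    tau = 2.0 * distance_m / C              # round-trip delay in seconds
    return ref_level + signal_amp * np.cos(2.0 * np.pi * freqs * tau)


def estimate_distance(trace, f_step_hz, pad_factor=64):
    """Estimate range from the dominant oscillation across frequency steps."""
    n = len(trace)
    # Remove the large background and taper the ends to limit spectral leakage.
    x = (trace - trace.mean()) * np.hanning(n)
    n_fft = pad_factor * n                  # zero-padding refines the peak location
    spectrum = np.abs(np.fft.rfft(x, n=n_fft))
    peak_bin = int(np.argmax(spectrum[1:])) + 1   # skip the DC bin
    cycles_per_step = peak_bin / n_fft      # equals f_step_hz * tau in this model
    tau = cycles_per_step / f_step_hz
    return C * tau / 2.0


if __name__ == "__main__":
    # Hypothetical sweep: 2000 steps of 1 MHz starting at 1 GHz.
    trace = simulate_pixel_trace(30.5, 1e9, 1e6, 2000)
    print(f"estimated distance: {estimate_distance(trace, 1e6):.4f} m")
```

In this toy model the oscillation must stay below half a cycle per step to avoid aliasing, which caps the unambiguous range at $c / (4\,\Delta f)$, about 75 m for the 1 MHz step assumed here. A real system would also have to contend with noise, which this sketch ignores.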

Testing Real Scenes and Pushing the Limits

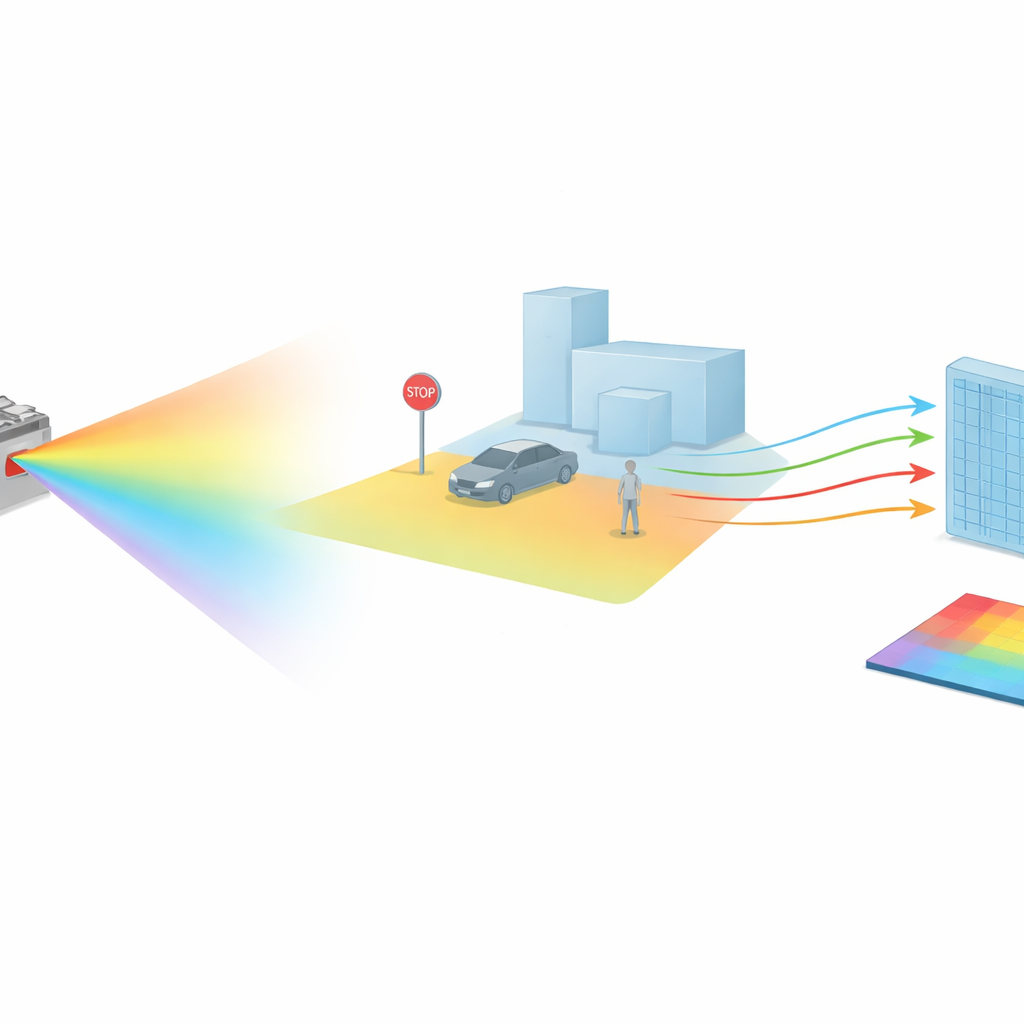

To show what the system can do in practice, the team imaged a miniature traffic scene containing a car, a pedestrian, and street fixtures at the same 30.5-meter distance. With only a few tens of milliwatts of optical power, the camera recovered a crisp 3D model where individual objects, their shadows, and millimeter-scale features were visible. Using a flat target, they measured a depth precision of just 0.47 millimeters—at least ten times better than many other solid-state LiDAR designs. They also explored how performance changes with the number of frames used, finding a trade-off between speed and precision: faster captures give rougher depth, while longer acquisitions sharpen the measurements. The system remained robust even when air turbulence was introduced along the optical path, and it could reconstruct objects moving sideways at up to 300 millimeters per second by shortening the exposure time.

From Statues to Future Digital Worlds

Beyond test patterns, the researchers used their camera to scan a bust sculpture from eight viewing angles, each from more than 30 meters away. By stitching these views together, they built a lifelike virtual model that can be rotated and examined from any direction. Because the technique can, in principle, work at visible wavelengths and share hardware with regular imaging, it opens the door to devices that capture both detailed color photographs and ultra-precise depth maps at once. Although today’s prototype is limited by the frame rate of its CCD sensors, faster image chips and smarter sampling schemes could boost speed dramatically. In simple terms, this work shows that by cleverly combining radio-style modulation with familiar camera technology, it is possible to see the 3D world at long range with sub-millimeter accuracy, an advance with far-reaching implications for monitoring, mapping, and immersive digital experiences.

Citation: Wang, B., Tian, J., Wang, J. et al. Large-array sub-millimeter precision coherent flash three-dimensional imaging. Nat Commun 17, 2780 (2026). https://doi.org/10.1038/s41467-026-69188-4

Keywords: 3D imaging, LiDAR, depth sensing, coherent detection, CCD cameras