Clear Sky Science

Neural network models vs. MT evaluation metrics: a comparison between two approaches to automated assessment of information fidelity in consecutive interpreting

Why this research matters to everyday language users

Whenever you listen to a speech interpreted from one language to another, you trust that the core message has survived the journey. Checking this "faithfulness" has long depended on expert humans, which is slow and expensive. This study asks whether modern artificial intelligence can help judge how accurately an interpreter has conveyed information, potentially making language services fairer, cheaper, and easier to quality‑check at scale.

Understanding faithful interpreting

Interpreting quality has many dimensions, but professionals overwhelmingly agree that information fidelity – how completely and accurately the meaning is carried over – is the most important. Traditionally, experts listen to the original speech and the interpreted version, or compare the interpretation to an ideal written version, and then score how well ideas, links between ideas, and the speaker’s tone have been preserved. These methods are rich and nuanced, but they require highly trained people to spend a lot of time replaying recordings, switching between languages, and making fine‑grained judgments. As a result, detailed human assessment is usually reserved for exams or research, not for everyday training and large‑scale quality control.

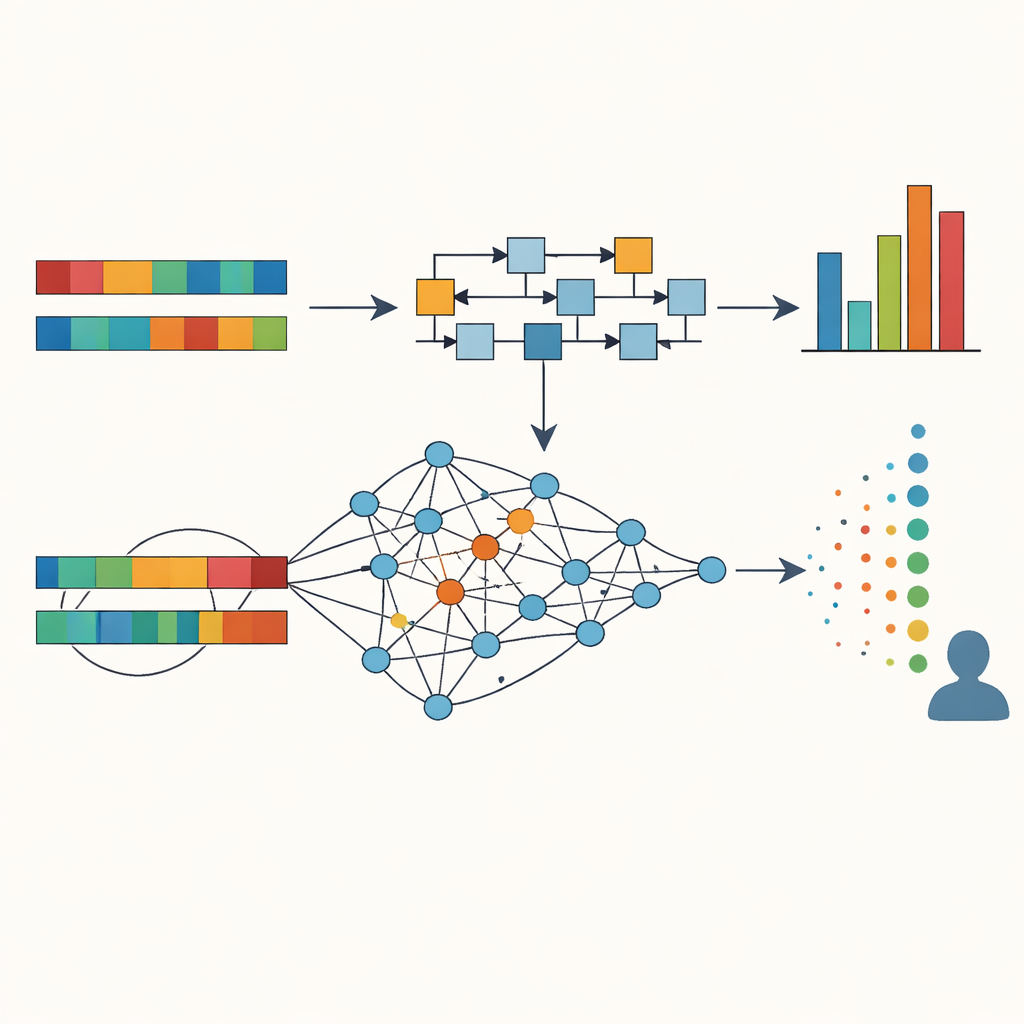

From translation yardsticks to smart models

To lighten the load on human raters, researchers have borrowed tools from machine translation, where computer programs compare a system’s output to several trusted human translations. Classic metrics such as BLEU and METEOR look for overlapping word patterns between the output being assessed and a set of reference versions, producing a numerical score. They work best when several high‑quality reference translations are available, but such references are costly to produce, and word‑by‑word overlap often misses the bigger picture of meaning, especially across structurally different languages like English and Chinese.
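To make the idea of word overlap concrete, here is a minimal sketch of clipped n‑gram precision, the building block behind BLEU‑style scores. It is an illustration, not the study's pipeline: full BLEU also combines several n‑gram sizes and applies a brevity penalty, and the function names and the toy English sentences are assumptions chosen for readability.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams in a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate, references, n=1):
    """Share of candidate n-grams that also occur in some reference.

    Each n-gram is credited at most as many times as it appears in the
    single reference where it is most frequent ("clipping").
    """
    cand_counts = Counter(ngrams(candidate, n))
    if not cand_counts:
        return 0.0

    # Highest count of each n-gram across all references.
    max_ref = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref[gram] = max(max_ref[gram], count)

    matched = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
    return matched / sum(cand_counts.values())


# Toy data (invented for illustration, not taken from the study).
references = [["the", "economy", "grew", "quickly"]]
literal = ["the", "economy", "grew", "fast"]                    # 3 of 4 words match
paraphrase = ["growth", "in", "the", "economy", "was", "rapid"]  # 2 of 6 words match

print(clipped_precision(literal, references))
print(clipped_precision(paraphrase, references))
```

The paraphrase keeps the meaning yet scores lower than the near‑literal version, which is the weakness of overlap‑based metrics described above.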

How the study put humans and machines to the test

This research focused on English–Chinese consecutive interpreting by trainee interpreters. The authors selected three sample interpretations representing high, medium, and low overall quality from a larger pool. They transcribed both the original English speech and the Chinese interpretations, removed filler words, and aligned them into 94 matching sentence pairs. Two experienced raters then scored each pair for fidelity – covering main ideas, how ideas linked together, supporting details, and the speaker’s attitude and intent – achieving very high agreement with one another. In parallel, the researchers computed automatic scores for each sentence using two families of tools: traditional translation metrics (BLEU and METEOR, with multiple revised machine translations of the source speech serving as references) and a suite of neural models that measure cross‑lingual similarity directly between the English sentence and its interpreted Chinese version.
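A common way to compare two sentences once a model has turned them into vectors is cosine similarity. This summary does not say which similarity function the study's neural models used, so the sketch below is an assumption, and the short vectors are invented stand‑ins for real sentence embeddings, which typically have hundreds of dimensions.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors.

    Returns 0.0 when either vector has zero length, since the angle is
    undefined in that case.
    """
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


# Hypothetical embeddings (invented numbers, not model output).
english_sentence = [0.8, 0.1, 0.3]
faithful_chinese = [0.7, 0.2, 0.3]   # points in nearly the same direction
unrelated_chinese = [0.1, 0.9, 0.0]  # points in a different direction

print(cosine_similarity(english_sentence, faithful_chinese))
print(cosine_similarity(english_sentence, unrelated_chinese))
```

Because the comparison happens between the source sentence and the interpretation themselves, no reference translation is needed.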

What the machines saw in the interpretations

The study compared machine scores with the human ratings using statistical correlations. Traditional metrics showed moderate alignment: on average, their scores correlated with human judgments at around r = 0.45, with the simplest BLEU variant performing slightly better than METEOR. Neural approaches did better overall, especially those that turn sentences in different languages into shared numerical "embeddings" capturing meaning. A multilingual sentence‑embedding model called MUSE showed the strongest match with human scores (r = 0.55), while embeddings from large language models such as GPT and LLaMA, and direct GPT‑based scoring, also correlated moderately well. Importantly, these models coped better with natural re‑phrasing, such as when a Chinese sentence reorganised an English one but preserved its sense, a case in which word‑overlap metrics can falsely signal failure. Cluster analyses, which grouped interpretations by their machine scores, showed that combining several metrics could separate low, medium, and high‑quality interpretations in ways that closely echoed human ratings.
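The r values quoted above are correlation coefficients. Assuming they are Pearson correlations (the summary does not name the coefficient), the calculation can be sketched as follows. The scores in the example are invented for illustration and are not the study's data.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-variation of the two lists, and the variation within each.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / math.sqrt(var_x * var_y)


# Invented example: human fidelity ratings and machine scores for five sentences.
human_ratings = [5, 4, 2, 3, 1]
machine_scores = [0.9, 0.7, 0.4, 0.6, 0.3]

print(pearson_r(human_ratings, machine_scores))
```

A value near 1 means the machine ranks sentences much as the human raters do, a value near 0 means no linear relationship, and the study's reported figures of roughly 0.45 to 0.55 sit in between.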

What this means for future language assessment

For non‑specialists, the takeaway is that today’s AI can already provide useful, though not perfect, signals about how faithfully an interpreter has conveyed a speech. Cross‑lingual neural models that compare meanings directly, rather than just counting shared words against reference texts, come closest to human judgment and can spot good interpretations even when they use different wording or structure. The correlations are strong enough to be statistically meaningful but not to replace expert raters outright. Instead, the study suggests using a mix of neural scores and traditional metrics as a fast, low‑cost aid for "low‑stakes" situations: classroom feedback, practice sessions, or preliminary screening in large‑scale assessments. Human expertise remains crucial for high‑stakes decisions and for capturing nuances of style, context, and ethics that current machines cannot fully grasp, but AI‑based tools are poised to become valuable partners in safeguarding the fidelity of interpreted communication.

Citation: Wang, X., Wang, B. Neural network models vs. MT evaluation metrics: a comparison between two approaches to automated assessment of information fidelity in consecutive interpreting. Humanit Soc Sci Commun 13, 567 (2026). https://doi.org/10.1057/s41599-026-06562-z

Keywords: interpreting quality, information fidelity, neural network evaluation, machine translation metrics, English–Chinese interpreting