Clear Sky Science

Interpretable predictions from whole-body FDG-PET/CT using parameters associated with clinical outcome

Smarter Scans for Cancer Care

Doctors already rely on powerful body scans to find and track cancer, but much of the information in these images remains unused because it is too complex to analyze by eye. This study shows how an artificial intelligence (AI) system can turn whole‑body cancer scans into a compact, easy‑to‑analyze form and still make accurate, understandable predictions about a patient’s tumors and overall health. The approach aims not just to be smart, but also to be transparent enough that clinicians can see what the AI is “looking at,” helping to build trust in computer‑aided decisions.

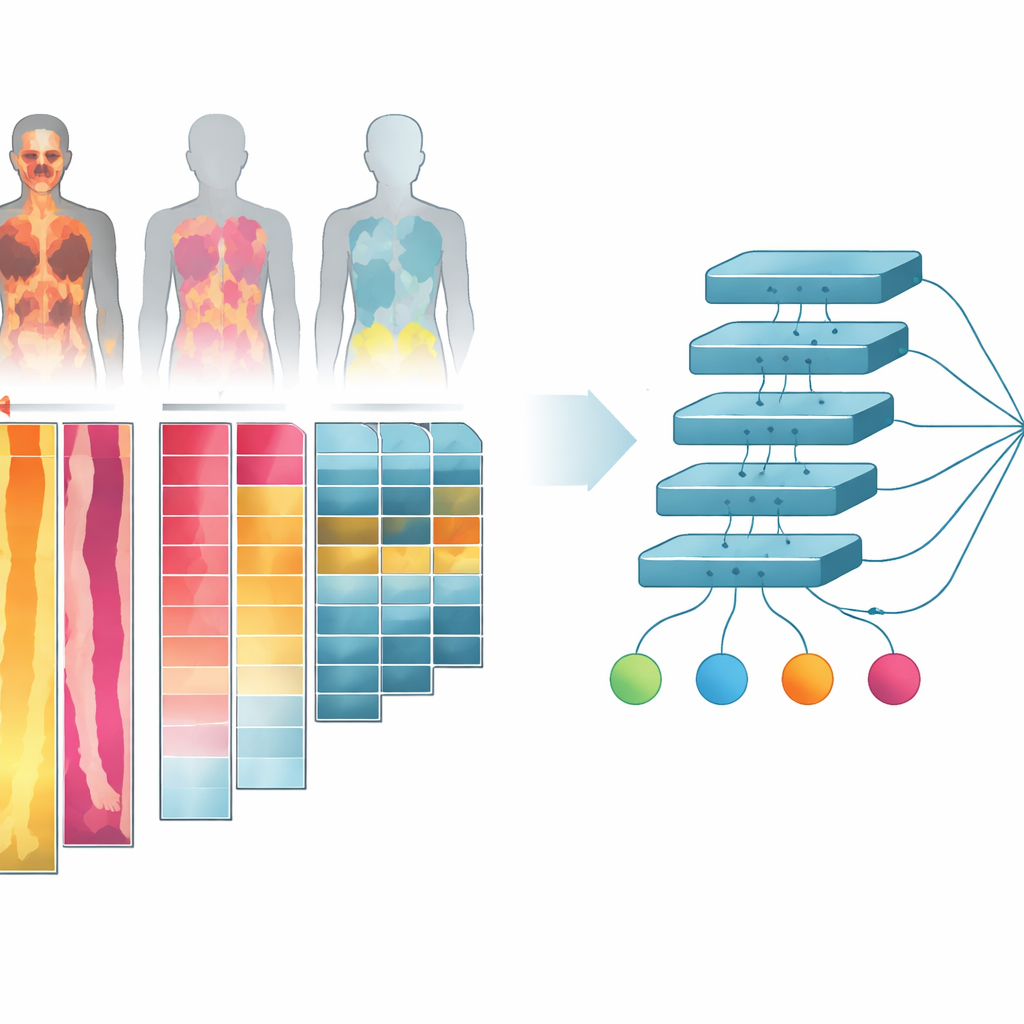

Turning 3D Body Scans into Flat Picture Strips

The researchers worked with more than one thousand combined PET/CT scans from cancer patients and healthy controls. These scans show both body structure (CT) and how sugar‑hungry different tissues are (PET), which is important because cancers usually consume more sugar than normal tissue. Instead of feeding the full three‑dimensional images into the AI—which would be very demanding for computer hardware—they collapsed the scans into two‑dimensional “projections.” They also separated the body into simple tissue groups: bone, lean tissue like muscle and organs, body fat, and air‑filled spaces such as lungs. For each tissue type and for different viewing angles, they created image channels that capture how the tissue looks and how active it is, then arranged these channels into a single collage‑like image for each person.
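The summary does not give the exact projection method or tissue thresholds, so the following is only a minimal sketch of the collage idea in Python with NumPy. It makes three assumptions. First, PET activity is summarized with a maximum intensity projection. Second, the four tissue classes come from illustrative Hounsfield-unit bins. Third, "how the tissue looks" is taken as the fraction of each ray that passes through that tissue. The bin edges, the function names (`tissue_masks`, `projection_channels`, `make_collage`) and the synthetic volumes are all hypothetical.

```python
import numpy as np

# Hypothetical, contiguous Hounsfield-unit bins for illustration only;
# the study's actual thresholds are not given in this summary.
HU_BINS = {
    "air":  (-np.inf, -190.0),
    "fat":  (-190.0, -30.0),
    "lean": (-30.0, 150.0),
    "bone": (150.0, np.inf),
}

def tissue_masks(ct):
    """One boolean mask per tissue class, using half-open [lo, hi) HU bins."""
    return {name: (ct >= lo) & (ct < hi) for name, (lo, hi) in HU_BINS.items()}

def projection_channels(pet, ct, axes=(1, 2)):
    """Yield 2D channels for each tissue and viewing axis.

    pet, ct: co-registered volumes of shape (z, y, x).
    For every tissue and axis, two channels are produced:
      - structure: fraction of voxels along each ray belonging to the tissue
      - activity: maximum PET value along each ray, restricted to that tissue
    """
    for name, mask in tissue_masks(ct).items():
        masked_pet = np.where(mask, pet, 0.0)  # zero out PET outside this tissue
        for axis in axes:
            yield mask.mean(axis=axis)
            yield masked_pet.max(axis=axis)

def make_collage(pet, ct):
    """Tile all channels side by side; every channel keeps the z (height) axis."""
    return np.concatenate(list(projection_channels(pet, ct)), axis=1)

# Usage with synthetic volumes (not real patient data).
rng = np.random.default_rng(0)
ct = rng.uniform(-1000.0, 1000.0, size=(64, 32, 48))  # fake CT in HU
pet = rng.gamma(2.0, 1.0, size=ct.shape)              # fake PET uptake
collage = make_collage(pet, ct)
print(collage.shape)  # (64, 640)
```

Each person's whole scan ends up as a single 2D image a few hundred pixels wide. That compactness is what makes the projection approach cheaper to process than the full 3D volume.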

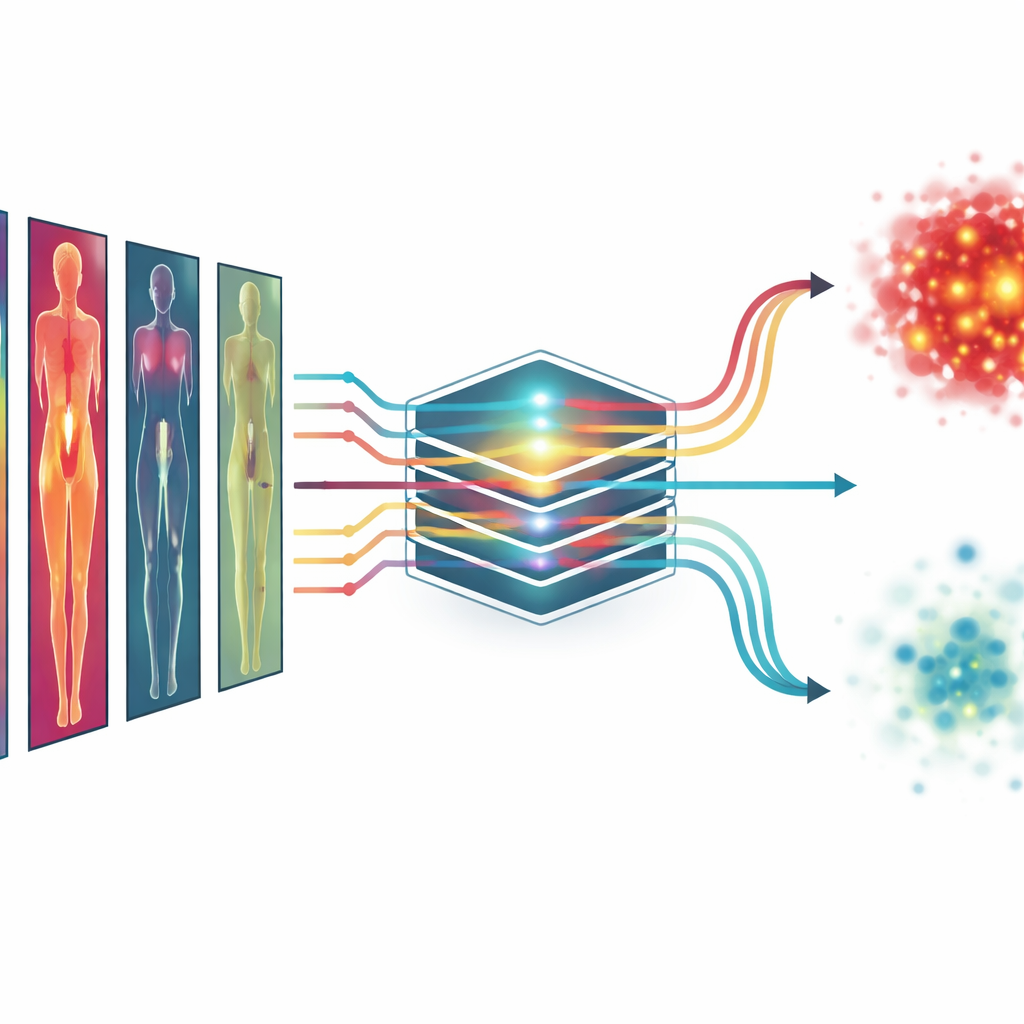

Teaching the AI to Read Health Signals

Using these tissue‑wise collages, the team trained a deep learning model to predict several key quantities known to matter for cancer outcomes. These were the total metabolic tumor volume (a measure of how much active tumor is present), the number of separate lesions, the patient’s age, and whether the patient had cancer or not. The model was also asked to tell male from female. Some of these targets, like tumor volume, are visible in the scans. Others, like age, are not obvious at all, and the AI has to pick up subtle patterns in body structure and metabolism to predict them. To make the evaluation fair, the researchers repeatedly split the data into training and testing groups. This way, every scan was at some point assessed by a model that had not seen it during training.
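In general terms, this kind of repeated splitting is k-fold cross-validation. The minimal sketch below assumes plain random folds. The study's actual number of folds is not stated here, nor whether it grouped the data (for example, keeping repeat scans of one patient together). The function name `k_fold_splits` and the figure of 1,000 scans are illustrative.

```python
import numpy as np

def k_fold_splits(n_scans, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; each scan lands in exactly one test fold."""
    rng = np.random.default_rng(seed)
    # Shuffle scan indices, then cut them into k roughly equal parts.
    folds = np.array_split(rng.permutation(n_scans), k)
    for i, test_idx in enumerate(folds):
        # Train on every fold except the held-out one.
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        yield np.sort(train_idx), np.sort(test_idx)

n_scans = 1000  # hypothetical dataset size for illustration
splits = list(k_fold_splits(n_scans, k=10))
all_tested = np.sort(np.concatenate([test for _, test in splits]))
```

Concatenating the ten test folds recovers every scan index exactly once. That is the property that lets each scan serve as unseen test data.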

How Well the System Performed

The model that used all tissue‑specific PET channels predicted tumor volume and lesion count with high accuracy. Its performance came close to that of more complex three‑dimensional methods that examine every slice of the scan. It also estimated age to within about seven years on average and classified sex almost perfectly. In deciding whether a scan came from a person with cancer, the system likewise achieved very high accuracy. Importantly, including information from multiple tissues and viewing directions nearly always improved performance compared with using a single, simpler image. This suggests that cancers “leave their mark” not just in obvious hot spots, but also in more subtle changes across the body that the multi‑channel projections help to reveal.

Seeing Where the AI Looks

A common worry about AI is that it works like a black box. To address this, the researchers used a technique called saliency mapping to highlight which parts of the images most influenced each prediction. For tumor volume and for the cancer vs. no‑cancer decision, the hot spots in these maps matched real tumor locations, showing that the model was paying attention to medically sensible regions. For age prediction, it focused on areas such as the liver, thigh and shoulder muscles, and regions near the pelvis, which are known to change with age and body composition. For sex classification, it concentrated on the chest and pelvic areas. These patterns, confirmed across the whole group of patients, suggest that the system is learning meaningful cues rather than latching onto random noise.
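Saliency methods come in several flavors, and the summary does not say which one the study used. The simplest, vanilla gradient saliency, scores each pixel by how much the prediction would change if that pixel changed slightly. The sketch below replaces a real network with a hypothetical toy logistic model (`predict`, `gradient_saliency`), so the gradient can be written in closed form. With a real network, the same quantity would come from automatic differentiation.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def predict(image, weights, bias):
    """Toy stand-in for a trained network: logistic score of a 2D image."""
    return sigmoid(np.sum(weights * image) + bias)

def gradient_saliency(image, weights, bias):
    """Vanilla gradient saliency: |d prediction / d pixel| for every pixel.

    For this logistic model the gradient is p * (1 - p) * weights.
    """
    p = predict(image, weights, bias)
    return np.abs(p * (1.0 - p) * weights)

rng = np.random.default_rng(1)
image = rng.random((8, 8))
weights = np.zeros((8, 8))
weights[2:4, 5:7] = 3.0  # the toy model only "looks at" this patch
saliency = gradient_saliency(image, weights, bias=-1.0)
```

The toy weights are nonzero only in one patch, so the saliency map lights up only there. In the same way, a saliency map reveals which regions a real model relies on.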

What This Could Mean for Patients

Although this work is an early proof of concept, it shows that complex whole‑body cancer scans can be distilled into efficient, interpretable 2D images that still allow AI to predict clinically important information. In the future, similar models might move beyond tumor size and count to estimate how long a patient is likely to live, how well they may respond to treatment, or how their disease is progressing—using information that is already being collected in routine care. Because the method also shows where the AI is getting its answers, it could help doctors better understand and trust its suggestions, supporting more personalized and transparent cancer care.

Citation: Tarai, S., Lundström, E., Ahmad, N. et al. Interpretable predictions from whole-body FDG-PET/CT using parameters associated with clinical outcome. Commun Med 6, 232 (2026). https://doi.org/10.1038/s43856-026-01567-w

Keywords: FDG-PET/CT imaging, deep learning in oncology, tumor burden prediction, explainable AI, clinical outcome modeling